Dynamic programming: Difference between revisions

No edit summary |

|||

| Line 24: | Line 24: | ||

Dynamic programming usually takes one of two approaches: |

Dynamic programming usually takes one of two approaches: |

||

* '''[[top-down|Top-down approach]]''': The problem is broken into subproblems, and these subproblems are solved and the solutions remembered, in case they need to be solved again. This is recursion and memorization combined together. |

* '''[[top-down|Top-down approach]]''': The problem is broken into subproblems, and these subproblems are solved and the solutions remembered, in case they need to be solved again. This is recursion and memoization <!-- Yes, memoization, not memorization. Not a typo. --> combined together. |

||

* '''[[bottom-up|Bottom-up approach]]''': All subproblems that might be needed are solved in advance and then used to build up solutions to larger problems. This approach is slightly better in stack space and number of function calls, but it is sometimes not intuitive to figure out all the subproblems needed for solving the given problem. |

* '''[[bottom-up|Bottom-up approach]]''': All subproblems that might be needed are solved in advance and then used to build up solutions to larger problems. This approach is slightly better in stack space and number of function calls, but it is sometimes not intuitive to figure out all the subproblems needed for solving the given problem. |

||

Revision as of 11:52, 10 May 2007

In computer science, dynamic programming is a method of solving problems exhibiting the properties of overlapping subproblems and optimal substructure (described below) that takes much less time than naive methods.

The term was originally used in the 1940s by Richard Bellman to describe the process of solving problems where one needs to find the best decisions one after another. By 1953, he had refined this to the modern meaning. The field was founded as a systems analysis and engineering topic which is recognized by the IEEE. Bellman's contribution is remembered in the name of the Bellman equation, a key result.

The word "programming" in "dynamic programming" has no particular connection to computer programming at all. A program is, instead, the plan for action that is produced. For instance, a finalized schedule of events at an exhibition is sometimes called a program. Programming, in this sense, is finding an acceptable plan of action.

Overview

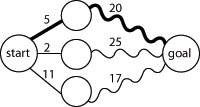

Optimal substructure means that optimal solutions of subproblems can be used to find the optimal solutions of the overall problem. For example, the shortest path to a goal from a vertex in a graph can be found by first computing the shortest path to the goal from all adjacent vertices, and then using this to pick the best overall path, as shown in Figure 1. In general, we can solve a problem with optimal substructure using a three-step process:

- Break the problem into smaller subproblems.

- Solve these problems optimally using this three-step process recursively.

- Use these optimal solutions to construct an optimal solution for the original problem.

The subproblems are, themselves, solved by dividing them into sub-subproblems, and so on, until we reach some simple case that is easy to solve.

To say that a problem has overlapping subproblems is to say that the same subproblems are used to solve many different larger problems. For example, in the Fibonacci sequence, F3 = F1 + F2 and F4 = F2 + F3 — computing each number involves computing F2. Because both F3 and F4 are needed to compute F5, a naïve approach to computing F5 may end up computing F2 twice or more. This applies whenever overlapping subproblems are present: a naïve approach may waste time recomputing optimal solutions to subproblems it has already solved.

In order to avoid this, we instead save the solutions to problems we have already solved. Then, if we need to solve the same problem later, we can retrieve and reuse our already-computed solution. This approach is called memoization (not memorization, although this term also fits). If we are sure we won't need a particular solution anymore, we can throw it away to save space. In some cases, we can even compute the solutions to subproblems we know that we'll need in advance.

In summary, dynamic programming makes use of:

Dynamic programming usually takes one of two approaches:

- Top-down approach: The problem is broken into subproblems, and these subproblems are solved and the solutions remembered, in case they need to be solved again. This is recursion and memoization combined together.

- Bottom-up approach: All subproblems that might be needed are solved in advance and then used to build up solutions to larger problems. This approach is slightly better in stack space and number of function calls, but it is sometimes not intuitive to figure out all the subproblems needed for solving the given problem.

Some programming languages with special extensions [1] can automatically memoize the result of a function call with a particular set of arguments, in order to speed up call-by-name evaluation (this mechanism is referred to as call-by-need). Some languages (e.g., Maple) have automatic memoization builtin. This is only possible for a function which has no side-effects, which is always true in pure functional languages but seldom true in imperative languages.

The steps for using dynamic program goes as follows:

- Intialization- The first step in setting up a global alignment sequence.

Set up a matrix filling in the first row and column with zeros since it's assumed there's no gap penalties

- Matrix Fill- Filling in the matrix requires finding a score for each sequence alignment.

To do this we look at the left, top, and above and diagonally left to reach the score of the alignment

If there's a match, +1, if there's a mismatch, 0, and if there's a gap, -1

Do this for all alignments until the matrix is filled up

- Traceback - This step determines the actual alignments that result in the maximum score

We do this by starting at the lower right corner and taking the upper box, the box to the left, and

the box diagonally up.

We pick the box that gives us the best score. Repeat this till we get to the top right corner, this

is our maximum alignment. Dynamic programming tutorial

Examples

A naive implementation of a function finding the nth member of the Fibonacci sequence, based directly on the mathematical definition:

function fib(n)

if n = 0 or n = 1

return 1

else

return fib(n − 1) + fib(n − 2)

Notice that if we call, say, fib(5), we produce a call tree that calls the function on the same value many different times:

fib(5)fib(4) + fib(3)(fib(3) + fib(2)) + (fib(2) + fib(1))((fib(2) + fib(1)) + (fib(1) + fib(0))) + ((fib(1) + fib(0)) + fib(1))(((fib(1) + fib(0)) + fib(1)) + (fib(1) + fib(0))) + ((fib(1) + fib(0)) + fib(1))

In particular, fib(2) was calculated twice from scratch. In larger examples, many more values of fib, or subproblems, are recalculated, leading to an exponential time algorithm.

Now, suppose we have a simple map object, m, which maps each value of fib that has already been calculated to its result, and we modify our function to use it and update it. The resulting function requires only O(n) time instead of exponential time:

var m := map(0 → 1, 1 → 1)

function fib(n)

if map m does not contain key n

m[n] := fib(n − 1) + fib(n − 2)

return m[n]

This technique of saving values that have already been calculated is called memoization; this is the top-down approach, since we first break the problem into subproblems and then calculate and store values.

In the bottom-up approach we calculate the smaller values of fib first, then build larger values from them. This method also uses linear (O(n)) time since it contains a loop that repeats n − 1 times:

function fib(n)

var previousFib := 0, currentFib := 1

repeat n − 1 times

var newFib := previousFib + currentFib

previousFib := currentFib

currentFib := newFib

return currentFib

In both these examples, we only calculate fib(2) one time, and then use it to calculate both fib(4) and fib(3), instead of computing it every time either of them is evaluated.

Checkerboard

Consider a checkerboard with n × n squares and a cost-function c(i, j) which returns a cost associated with square i,j (i being the row, j being the column). For instance (on a 5 × 5 checkerboard),

| 5 | 6 | 7 | 4 | 7 | 8 |

|---|---|---|---|---|---|

| 4 | 7 | 6 | 1 | 1 | 4 |

| 3 | 3 | 5 | 7 | 8 | 2 |

| 2 | 2 | 6 | 7 | 0 | 2 |

| 1 | 7 | 3 | 5 | 6 | 1 |

| 1 | 2 | 3 | 4 | 5 |

Thus c(1, 3) = 5

Let us say you had a checker that could start at any square on the first rank (i.e., row) and you wanted to know the shortest path (sum of the costs of the visited squares are at a minimum) to get to the last rank, assuming the checker could move only diagonally left forward, diagonally right forward, or straight forward. That is, a checker on (1,3) can move to (2,2), (2,3) or (2,4).

| 5 | |||||

|---|---|---|---|---|---|

| 4 | |||||

| 3 | |||||

| 2 | x | x | x | ||

| 1 | o | ||||

| 1 | 2 | 3 | 4 | 5 |

This problem exhibits optimal substructure. That is, the solution to the entire problem relies on solutions to subproblems. Let us define a function q(i, j) as

- q(i, j) = the minimum cost to reach square (i, j)

If we can find the values of this function for all the squares at rank n, we pick the minimum and follow that path backwards to get the shortest path.

It is easy to see that q(i, j) is equal to the minimum cost to get to any of the three squares below it (since those are the only squares that can reach it) plus c(i, j). For instance:

| 5 | |||||

|---|---|---|---|---|---|

| 4 | A | ||||

| 3 | B | C | D | ||

| 2 | |||||

| 1 | |||||

| 1 | 2 | 3 | 4 | 5 |

Now, let us define q(i, j) in little more general terms:

This equation is pretty straightforward. The first line is simply there to make the recursive property simpler (when dealing with the edges, so we need only one recursion). The second line says what happens in the first rank, so we have something to start with. The third line, the recursion, is the important part. It is basically the same as the A,B,C,D example. From this definition we can make a straightforward recursive code for q(i, j). In the following pseudocode, n is the size of the board, c(i, j) is the cost-function, and min() returns the minimum of a number of values:

function minCost(i, j)

if j < 1 or j > n

return infinity

else if i = 1

return c(i, j)

else

return min( minCost(i-1, j-1), minCost(i-1, j), minCost(i-1, j+1) ) + c(i, j)

It should be noted that this function just computes the path-cost, not the actual path. We will get to the path soon. This, like the Fibonacci-numbers example, is horribly slow since it spends mountains of time recomputing the same shortest paths over and over. However, we can compute it much faster in a bottom up-fashion if we use a two-dimensional array q[i, j] instead of a function. Why do we do that? Simply because when using a function we recompute the same path over and over, and we can choose what values to compute first.

We also need to know what the actual path is. The path problem we can solve using another array p[i, j], a predecessor array. This array basically says where paths come from. Consider the following code:

function computeShortestPathArrays()

for x from 1 to n

q[1, x] := c(1, x)

for y from 1 to n

q[y, 0] := infinity

q[y, n + 1] := infinity

for y from 2 to n

for x from 1 to n

m := min(q[y-1, x-1], q[y-1, x], q[y-1, x+1])

q[y, x] := m + c(y, x)

if m = q[y-1, x-1]

p[y, x] := -1

else if m = q[y-1, x]

p[y, x] := 0

else

p[y, x] := 1

Now the rest is a simple matter of finding the minimum and printing it.

function computeShortestPath()

computeShortestPathArrays()

minIndex := 1

min := q[n, 1]

for i from 2 to n

if q[n, i] < min

minIndex := i

min := q[n, i]

printPath(n, minIndex)

function printPath(y, x)

print(x)

print("<-")

if y = 2

print(x + p[y, x])

else

printPath(y-1, x + p[y, x])

Algorithms that use dynamic programming

- Many string algorithms including longest common subsequence

- The Cocke-Younger-Kasami (CYK) algorithm which determines whether and how a given string can be generated by a given context-free grammar

- The use of transposition tables and refutation tables in computer chess

- The Viterbi algorithm (used for hidden Markov models)

- The Earley algorithm (a type of chart parser)

- The Needleman-Wunsch and other sequence alignment algorithms used in bioinformatics

- Levenshtein distance (edit distance)

- Floyd's All-Pairs shortest path algorithm

- Optimizing the order for chain matrix multiplication

- Pseudopolynomial time algorithms for the Subset Sum and Knapsack Problems

- The dynamic time warping algorithm for computing the global distance between two time series

- The Selinger (a.k.a System R) algorithm for relational database query optimization

- De Boor algorithm for evaluating B-spline curves

- Duckworth-Lewis method for resolving the problem when games of cricket are interrupted

- The Value Iteration method for solving Markov decision processes

See also

External links

- Dyna, a declarative programming language for dynamic programming algorithms

- Wagner, David B., 1995, "Dynamic Programming." An introductory article on dynamic programming in Mathematica.

- Ohio State University: CIS 680: class notes on dynamic programming, by Eitan M. Gurari

- A Tutorial on Dynamic programming

- More DP Notes

- Algorithmist's Dynamic Programming Contains more examples of Dynamic Programming.

- King, Ian, 2002 (1987), "A Simple Introduction to Dynamic Programming in Macroeconomic Models." An introduction to dynamic programming as an important tool in economic theory.

- Dynamic Programming: from novice to advanced A TopCoder.com article by Dumitru on Dynamic Programming

- Algebraic Dynamic Programming - a formalized framework for dynamic programming, including an entry-level course to DP, University of Bielefeld

- Dreyfus, Stuart, "Richard Bellman on the birth of Dynamic Programming."

- Example of calculating Fibonacci numbers using dynamic programming, C++

- Dynamic programming tutorial

- An Introduction to Dynamic Programming

References

- Adda, Jerome, and Cooper, Russell, 2003. Dynamic Economics. MIT Press. An accessible introduction to dynamic programing in economics. The link contains sample programs.

- Bertsekas, D. P., 2000. Dynamic Programming and optimal Control, Vols. 1 & 2, 2nd ed. Athena Scientific. ISBN 1-886529-09-4.

- Thomas H. Cormen, Charles E. Leiserson, Ronald L. Rivest, and Clifford Stein, 2001. Introduction to Algorithms, 2nd ed. MIT Press & McGraw-Hill. ISBN 0-262-03293-7. Especially pp. 323–69.

- Giegerich, R., Meyer, C., and Steffen, P., 2004, "A Discipline of Dynamic Programming over Sequence Data," Science of Computer Programming 51: 215-263.

- Nancy Stokey, and Robert E. Lucas, with Edward Prescott, 1989. Recursive Methods in Economic Dynamics. Harvard Univ. Press.