Silhouette coefficient

The silhouette of an observation indicates how well that observation is assigned, judged against the two nearest clusters. The silhouette coefficient is a measure of the quality of a clustering that is independent of the number of clusters. The silhouette plot visualizes the silhouettes of all observations in a data set, together with the silhouette coefficients of the individual clusters and of the entire data set.

Silhouette

| Structuring | Range of values of the silhouette coefficient |
|---|---|
| strong | |
| medium | |
| weak | |
| no structure | |

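If the object $o$ belongs to the cluster $A$, the silhouette of $o$ is defined as:

$$s(o) = \frac{\operatorname{dist}(B, o) - \operatorname{dist}(A, o)}{\max\{\operatorname{dist}(A, o), \operatorname{dist}(B, o)\}}$$

with $\operatorname{dist}(A, o)$ the distance of the object $o$ to its own cluster $A$ and $\operatorname{dist}(B, o)$ the distance of the object $o$ to the closest other cluster $B$. The difference of the two distances is normalized by the larger of them. It follows that $s(o)$ lies between −1 and 1: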

- If the silhouette is negative, $s(o) < 0$, then the objects in the closest cluster are closer to the object than the objects in the cluster to which the object belongs. This indicates that the clustering can be improved.

- If the silhouette is close to zero, $s(o) \approx 0$, then the object lies between two clusters.

- If the silhouette is close to one, $s(o) \approx 1$, then the object is well placed in its cluster.
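As a small worked illustration with made-up numbers (not taken from any data set): write $\operatorname{dist}(A, o)$ for the distance of an object $o$ to its own cluster and $\operatorname{dist}(B, o)$ for its distance to the closest other cluster. Assuming $\operatorname{dist}(A, o) = 2$ and $\operatorname{dist}(B, o) = 5$, the silhouette is clearly positive; swapping the two distances gives the same magnitude with a negative sign:

$$s(o) = \frac{5 - 2}{\max\{2, 5\}} = 0.6, \qquad s(o) = \frac{2 - 5}{\max\{5, 2\}} = -0.6$$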

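The distance $\operatorname{dist}(A, o)$ is calculated as

$$\operatorname{dist}(A, o) = \frac{1}{n_A - 1} \sum_{a \in A,\, a \neq o} \operatorname{dist}(a, o)$$

that is, as the mean of the distances between the object $o$ and all other objects in the cluster $A$ ($n_A$ is the number of objects in the cluster $A$). Similarly, the distance $\operatorname{dist}(B, o)$ to the closest cluster $B$ is calculated as the minimum average distance

$$\operatorname{dist}(B, o) = \min_{C \neq A} \operatorname{dist}(C, o), \qquad \operatorname{dist}(C, o) = \frac{1}{n_C} \sum_{c \in C} \operatorname{dist}(c, o).$$

The distance $\operatorname{dist}(C, o)$ is calculated for all clusters $C$ that do not contain the object $o$. The closest cluster $B$ is the one with the smallest average distance to $o$.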
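The definitions above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reference implementation: the function name `silhouette` is hypothetical, the Euclidean distance is assumed, an object that is alone in its cluster is given the silhouette 0 (a common convention that the text above does not specify), and at least two clusters must be present.

```python
from math import dist  # Euclidean distance, available from Python 3.8


def silhouette(index, points, labels):
    """Silhouette of points[index] under the given cluster labels."""
    o, own = points[index], labels[index]

    # Collect the distances from o to every other object, grouped by cluster.
    by_cluster = {}
    for j, (p, label) in enumerate(zip(points, labels)):
        if j != index:
            by_cluster.setdefault(label, []).append(dist(o, p))

    # Assumed convention: an object alone in its cluster gets silhouette 0.
    if own not in by_cluster:
        return 0.0

    # Mean distance to the other objects of the object's own cluster.
    a = sum(by_cluster[own]) / len(by_cluster[own])
    # Smallest mean distance to any cluster that does not contain o.
    b = min(sum(d) / len(d) for label, d in by_cluster.items() if label != own)

    # Guard against division by zero when all relevant points coincide.
    return (b - a) / max(a, b) if max(a, b) > 0 else 0.0


# Two well-separated pairs of points (illustrative data).
points = [(0.0, 0.0), (0.0, 1.0), (5.0, 0.0), (5.0, 1.0)]
good = [silhouette(i, points, [0, 0, 1, 1]) for i in range(len(points))]
bad = [silhouette(i, points, [0, 1, 0, 1]) for i in range(len(points))]
```

With the labels that follow the visible grouping, every silhouette in `good` is clearly positive; with the deliberately mixed labels, the silhouettes in `bad` are negative.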

Silhouette coefficient

The silhouette coefficient $s_C$ of a cluster $C$ is defined as

$$s_C = \frac{1}{n_C} \sum_{o \in C} s(o)$$

that is, as the arithmetic mean of the silhouettes of all $n_C$ objects in the cluster $C$. The silhouette coefficient can be calculated for each cluster or for the entire data set.

With the k-means or k-medoids algorithm, the silhouette coefficient can be used to compare the results of several runs of the algorithm in order to obtain better parameters. This is particularly useful for these algorithms, since they start from a random initialization and can therefore end in different local optima. The influence of the parameter $k$ (the number of clusters) can also be reduced: since the silhouette coefficient is independent of the number of clusters, it can compare results that were obtained with different values of $k$.
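A minimal sketch of such a comparison follows. It does not run k-means itself; the three labelings stand in for the results of hypothetical runs with 2, 3 and 4 clusters on made-up one-dimensional data. The function name `silhouette_coefficient`, the absolute difference as distance, and the value 0 for singleton clusters are assumptions of this sketch.

```python
from statistics import mean


def silhouette_coefficient(xs, labels):
    """Mean silhouette over all objects; xs are 1-D values, distance is |x - y|."""
    clusters = {}
    for x, label in zip(xs, labels):
        clusters.setdefault(label, []).append(x)

    values = []
    for x, own in zip(xs, labels):
        if len(clusters[own]) == 1:
            values.append(0.0)  # assumed convention for singleton clusters
            continue
        # The sum over the own cluster includes |x - x| = 0, so divide by n - 1.
        a = sum(abs(x - y) for y in clusters[own]) / (len(clusters[own]) - 1)
        # Smallest mean distance to any other cluster.
        b = min(mean(abs(x - y) for y in members)
                for label, members in clusters.items() if label != own)
        values.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return mean(values)


# Made-up data with three visible groups.
xs = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8, 9.0, 9.2, 8.8]

# Hypothetical labelings, as if produced by runs with k = 2, 3 and 4.
candidates = {
    2: [0, 0, 0, 1, 1, 1, 1, 1, 1],
    3: [0, 0, 0, 1, 1, 1, 2, 2, 2],
    4: [0, 0, 0, 1, 1, 1, 2, 2, 3],
}

scores = {k: silhouette_coefficient(xs, labels) for k, labels in candidates.items()}
best_k = max(scores, key=scores.get)
```

Here `best_k` is the number of clusters whose labeling has the largest overall silhouette coefficient; for this data it is the three-cluster labeling.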

Silhouette plot

A silhouette plot shows the silhouettes of all observations together. The observations are grouped by cluster, and the silhouette of each observation is drawn as a horizontal (or vertical) line. Within a cluster, the observations are ordered by the size of their silhouettes.

The figure shows, from top to bottom, the data of four different data sets, the dendrogram of a hierarchical cluster analysis (Euclidean distance, single linkage), and the silhouette plot for the solution with two clusters. The assignment of the data points by the hierarchical cluster analysis in the two-cluster solution is marked by the colors red (assignment to cluster 1) and blue (assignment to cluster 2).
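The layout of such a plot can be sketched as plain text. The function `silhouette_plot` is a hypothetical helper, and the silhouette values below are made up for illustration: observations are grouped by cluster, sorted by decreasing silhouette, and drawn as horizontal bars (`#` for positive and `-` for negative values).

```python
def silhouette_plot(values, labels, width=40):
    """Return text lines: one bar per observation, grouped by cluster."""
    lines = []
    for cluster in sorted(set(labels)):
        # Silhouettes of this cluster, largest first.
        members = sorted(
            (v for v, label in zip(values, labels) if label == cluster),
            reverse=True,
        )
        coefficient = sum(members) / len(members)
        lines.append(f"cluster {cluster} (n={len(members)}, mean={coefficient:.2f})")
        for v in members:
            bar = ("#" if v >= 0 else "-") * round(abs(v) * width)
            lines.append(f"  {v:+.2f} {bar}")
    return lines


# Made-up silhouettes for five observations in two clusters.
values = [0.8, 0.6, -0.1, 0.7, 0.3]
labels = [1, 1, 1, 2, 2]
lines = silhouette_plot(values, labels)
print("\n".join(lines))
```

A graphical version would draw the same bars with a plotting library; the grouping and ordering logic stays the same.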

The better the two clusters are separated in the data (from left to right), the better the hierarchical cluster analysis can assign the data points correctly. The silhouette plot also changes. While negative silhouettes occur for the data set on the left, only positive silhouettes are found in the data set on the far right. The silhouette coefficients also increase from left to right, both for the individual clusters and for the entire data set.

Example

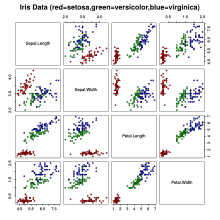

The Iris flower data set consists of 50 observations for each of three species of iris (Iris setosa, Iris virginica and Iris versicolor), 150 observations in total. Four attributes of the flowers were recorded for each observation: the length and width of the sepal and of the petal. A scatter plot matrix shows the data for the four variables.

A hierarchical cluster analysis with the Euclidean distance and the single linkage method was carried out on the four variables. The resulting graphics show:

- Top left: A dendrogram of the cluster solution. It suggests that a two- or four-cluster solution would be appropriate.

- Top right: The silhouettes of the two-cluster solution. Negative silhouettes occur in the first cluster, so these observations are more likely to be assigned incorrectly. A solution with more clusters may be more suitable.

- Bottom left: The silhouettes of the three-cluster solution. The first cluster is split into two sub-clusters (78 = 50 + 28 observations). Although the negative silhouettes in the first cluster have disappeared, observations in the second cluster of the two-cluster solution now have negative silhouettes.

- Bottom right: The silhouettes of the four-cluster solution. The second cluster of the two-cluster solution is now split into two sub-clusters (72 = 60 + 12 observations). There are almost no negative silhouettes left.

The following silhouette coefficients result:

Silhouette coefficients (number of observations / silhouette coefficient)

| Number of clusters | Total | Cluster 1 | Cluster 2 | Cluster 3 | Cluster 4 |
|---|---|---|---|---|---|
| 2 | 150 / 0.52 | 78 / 0.39 | 72 / 0.66 | | |
| 3 | 150 / 0.51 | 50 / 0.76 | 28 / 0.59 | 72 / 0.31 | |
| 4 | 150 / 0.50 | 50 / 0.76 | 28 / 0.52 | 60 / 0.27 | 12 / 0.51 |
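Because the silhouette coefficient is an arithmetic mean of silhouettes, the coefficient for the entire data set equals the mean of the cluster coefficients weighted by cluster size. The short check below uses only the numbers from the table; the variable and function names are illustrative, and since the cluster coefficients are rounded to two decimals, agreement can only be expected up to rounding.

```python
# (cluster size, silhouette coefficient) pairs copied from the table.
solutions = {
    2: [(78, 0.39), (72, 0.66)],
    3: [(50, 0.76), (28, 0.59), (72, 0.31)],
    4: [(50, 0.76), (28, 0.52), (60, 0.27), (12, 0.51)],
}


def overall(clusters):
    """Size-weighted mean of the per-cluster silhouette coefficients."""
    n = sum(size for size, _ in clusters)
    return sum(size * s for size, s in clusters) / n


totals = {k: round(overall(clusters), 2) for k, clusters in solutions.items()}
print(totals)
```

The rounded results match the "Total" column of the table for all three solutions.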

Literature

- Martin Ester, Jörg Sander: Knowledge Discovery in Databases: Techniques and Applications. Springer, Berlin 2000, ISBN 3-540-67328-8, p. 66.

- Peter J. Rousseeuw: Silhouettes: a Graphical Aid to the Interpretation and Validation of Cluster Analysis. In: Journal of Computational and Applied Mathematics. 20, 1987, pp. 53–65. doi:10.1016/0377-0427(87)90125-7.

Web links

- silhouette: calculating silhouette coefficients and silhouette plots with R.