Average

A mean value (in short just mean; also called average) is a number determined from given numbers according to a certain calculation rule. Of the many means that can be calculated, the most common are the arithmetic, the geometric and the quadratic mean.

Mean values are most often used in statistics, where "mean" or "average" usually refers to the arithmetic mean. The mean is a characteristic value for the central tendency of a distribution and is closely related to the expected value of a distribution. The expected value is based on the theoretically expected frequencies, while the (arithmetic) mean is determined from specific data.

History

In mathematics, mean values, especially the three classical means (arithmetic, geometric and harmonic mean), already appeared in antiquity. Pappus of Alexandria characterizes ten different means $m$ of two numbers $a$ and $b$ ($a < b$) by special values of the distance ratio $(b - m) : (m - a)$. The inequality between the harmonic, geometric and arithmetic mean was also known in antiquity and interpreted geometrically. In the 19th and 20th centuries, means played a special role in analysis, mainly in connection with famous inequalities and important functional properties such as convexity (Hölder's inequality, Minkowski's inequality, Jensen's inequality, etc.). The classical means were generalized in several steps, first to the power means (see the section on generalized means below) and these in turn to the quasi-arithmetic means. The classical inequality between the harmonic, geometric and arithmetic mean turns into more general inequalities between power means or quasi-arithmetic means.

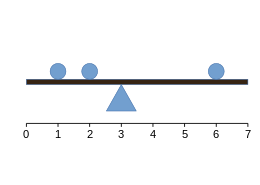

Visualization of the arithmetic mean (dimensionless check with equal ball weights and their distances to the pivot point)

The most commonly used mean, the arithmetic mean, can be visualized, for example, with balls of equal weight on a seesaw that is balanced on a triangle (pivot point) according to the law of the lever. Assuming that the weight of the beam can be neglected, the position of the triangle that creates the balance is the arithmetic mean of the ball positions.
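The lever argument can be checked numerically. The following Python sketch assumes balls of unit weight at hypothetical positions on a weightless beam; the function names are illustrative. With equal weights, the net torque about a pivot is proportional to the sum of the signed distances to it, and that sum vanishes exactly at the arithmetic mean of the positions.

```python
def net_torque(positions, pivot):
    """Net torque of unit weights about the pivot (beam weight neglected).

    With equal weights, each ball contributes its signed distance to the pivot.
    """
    return sum(x - pivot for x in positions)


def balance_point(positions):
    """Pivot position at which the net torque vanishes: the arithmetic mean."""
    return sum(positions) / len(positions)


balls = [1.0, 2.0, 4.0, 9.0]  # hypothetical ball positions along the beam
pivot = balance_point(balls)
print(pivot)                     # 4.0
print(net_torque(balls, pivot))  # 0.0, the seesaw is balanced
print(net_torque(balls, 3.0))    # 4.0, positive: the right side goes down
```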

Definitions of the three classic mean values

In the following, let $x_1, x_2, \dots, x_n$ be given real numbers, in statistics for example measured values, whose mean is to be calculated.

Arithmetic mean

The arithmetic mean is the sum of the given values divided by the number of values:
$$\bar{x}_{\mathrm{arithm}} = \frac{1}{n}\sum_{i=1}^{n} x_i = \frac{x_1 + x_2 + \dots + x_n}{n}$$

Geometric mean

For numbers that are interpreted through their product rather than their sum, the geometric mean can be calculated. To do this, the numbers are multiplied with one another and the $n$-th root is taken, where $n$ is the number of values to be averaged:
$$\bar{x}_{\mathrm{geom}} = \sqrt[n]{\prod_{i=1}^{n} x_i} = \sqrt[n]{x_1 x_2 \cdots x_n}$$

Harmonic mean

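The harmonic mean is used when the numbers are defined in relation to a unit. To do this, the number of values is divided by the sum of the reciprocals of the numbers:
$$\bar{x}_{\mathrm{harm}} = \frac{n}{\sum_{i=1}^{n} \frac{1}{x_i}} = \frac{n}{\frac{1}{x_1} + \frac{1}{x_2} + \dots + \frac{1}{x_n}}$$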
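As a minimal sketch, the three definitions translate directly into Python; the function names are illustrative, and the geometric and harmonic mean are written for positive values only. The geometric mean is computed through logarithms, which gives the same result as the $n$-th root of the product but avoids overflow for long lists.

```python
import math


def arithmetic_mean(xs):
    """Sum of the values divided by their number."""
    return sum(xs) / len(xs)


def geometric_mean(xs):
    """n-th root of the product, computed as exp(mean of logs); requires x > 0."""
    return math.exp(sum(math.log(x) for x in xs) / len(xs))


def harmonic_mean(xs):
    """Number of values divided by the sum of their reciprocals; requires x > 0."""
    return len(xs) / sum(1 / x for x in xs)


values = [1, 2, 4]
print(arithmetic_mean(values))  # 7/3
print(geometric_mean(values))   # 2, up to rounding
print(harmonic_mean(values))    # 12/7
```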

Examples of using different means

| Observation | Value |
|---|---|
| $x_1$ | 3 |
| $x_2$ | 2 |
| $x_3$ | 2 |
| $x_4$ | 2 |
| $x_5$ | 3 |
| $x_6$ | 4 |
| $x_7$ | 5 |

In the following, the seven values in the right-hand column of the table are used to show in which situation each definition of the mean is useful.

The arithmetic mean is used, for example, to calculate an average speed when the values are interpreted as speeds measured over equal times: if a turtle first walks three meters per hour for one hour, then two meters per hour for three hours, and then accelerates to three, four and five meters per hour for one hour each, the arithmetic mean for a distance of 21 meters in 7 hours is:
$$\bar{x}_{\mathrm{arithm}} = \frac{3 + 2 + 2 + 2 + 3 + 4 + 5}{7} = \frac{21}{7} = 3\ \text{m/h}$$

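The harmonic mean can also be useful for calculating an average speed if the measurements are not taken over equal times but over equal distances. In this case, the values in the table indicate the speeds at which each of the equal distances is covered: the turtle runs the 1st meter at 3 meters per hour, the next 3 meters at 2 m/h each, and accelerates again over the last 3 meters to 3, 4 and 5 m/h respectively. The average speed results from a distance of 7 meters in $\frac{1}{3} + \frac{1}{2} + \frac{1}{2} + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \frac{1}{5} = \frac{157}{60}$ hours:
$$\bar{x}_{\mathrm{harm}} = \frac{7}{\frac{1}{3} + \frac{1}{2} + \frac{1}{2} + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \frac{1}{5}} = \frac{7}{157/60} = \frac{420}{157} \approx 2.68\ \text{m/h}$$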

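The mean growth factor is calculated using the geometric mean; the table values are now interpreted as growth factors. For example, a bacterial culture grows five-fold on the first day, four-fold on the second, then three-fold twice, and for the last three days it doubles daily. Starting from an initial stock $B_0$, the stock after the seventh day is $B_7 = B_0 \cdot 5 \cdot 4 \cdot 3 \cdot 3 \cdot 2 \cdot 2 \cdot 2$. Alternatively, the final stock can be determined with the geometric mean, because
$$B_0 \cdot 5 \cdot 4 \cdot 3 \cdot 3 \cdot 2 \cdot 2 \cdot 2 = B_0 \cdot \bar{x}_{\mathrm{geom}}^{7}$$

and thus
$$\bar{x}_{\mathrm{geom}} = \sqrt[7]{5 \cdot 4 \cdot 3 \cdot 3 \cdot 2 \cdot 2 \cdot 2} = \sqrt[7]{1440} \approx 2.83$$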

A daily growth of the bacterial culture by 2.83-fold would have led to the same result after seven days.
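The three examples can be checked with Python's standard `statistics` module (Python 3.8 or later for `fmean` and `geometric_mean`). The list `table` simply holds the seven values from the table; the direct time-based calculation for the second turtle confirms that the harmonic mean equals total distance divided by total time.

```python
import statistics

table = [3, 2, 2, 2, 3, 4, 5]  # the seven values from the table

# Turtle, one hour per value: arithmetic mean of the speeds (m/h)
avg_equal_times = statistics.fmean(table)

# Turtle, one meter per value: harmonic mean of the speeds (m/h)
avg_equal_distances = statistics.harmonic_mean(table)
total_time = sum(1 / v for v in table)  # hours needed for the 7 meters
direct = len(table) / total_time        # distance / time

# Bacteria: geometric mean of the daily growth factors
mean_growth = statistics.geometric_mean(table)

print(avg_equal_times)                # 3.0
print(avg_equal_distances, direct)    # both about 2.675 (= 420/157)
print(mean_growth, mean_growth ** 7)  # about 2.826 and about 1440
```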

Common definition of the three classic mean values

The idea on which the three classic mean values are based can be formulated generally in the following way:

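With the arithmetic mean, one looks for the number $\bar{x}_{\mathrm{arithm}}$ for which
$$\underbrace{\bar{x}_{\mathrm{arithm}} + \bar{x}_{\mathrm{arithm}} + \dots + \bar{x}_{\mathrm{arithm}}}_{n \text{ summands}} = x_1 + x_2 + \dots + x_n$$

holds, where the sum on the left has $n$ summands. The arithmetic mean therefore averages with respect to the arithmetic operation "sum". Illustratively, the arithmetic mean of rods of different lengths gives a rod of average (medium) length.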

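With the geometric mean, one looks for the number $\bar{x}_{\mathrm{geom}}$ for which
$$\underbrace{\bar{x}_{\mathrm{geom}} \cdot \bar{x}_{\mathrm{geom}} \cdots \bar{x}_{\mathrm{geom}}}_{n \text{ factors}} = x_1 \cdot x_2 \cdots x_n$$

holds, where the product on the left has $n$ factors. The geometric mean therefore averages with respect to the arithmetic operation "product".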

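The harmonic mean $\bar{x}_{\mathrm{harm}}$ solves the equation
$$\underbrace{\frac{1}{\bar{x}_{\mathrm{harm}}} + \frac{1}{\bar{x}_{\mathrm{harm}}} + \dots + \frac{1}{\bar{x}_{\mathrm{harm}}}}_{n \text{ summands}} = \frac{1}{x_1} + \frac{1}{x_2} + \dots + \frac{1}{x_n}$$

and therefore averages with respect to the sum of the reciprocals.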
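These three defining equations can be checked numerically: replacing every value by the respective mean leaves the sum, the product or the sum of reciprocals unchanged. This Python sketch uses the table values from above and the standard `statistics` and `math` modules (Python 3.8 or later for `math.prod`).

```python
import math
import statistics

xs = [3, 2, 2, 2, 3, 4, 5]  # values from the table
n = len(xs)

a = statistics.fmean(xs)           # arithmetic mean
g = statistics.geometric_mean(xs)  # geometric mean
h = statistics.harmonic_mean(xs)   # harmonic mean

# n copies of each mean reproduce the sum, the product and the reciprocal sum
print(math.isclose(n * a, sum(xs)))                 # True
print(math.isclose(g ** n, math.prod(xs)))          # True
print(math.isclose(n / h, sum(1 / x for x in xs)))  # True
```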

Relationships

Relationship to the expected value

The general difference between a mean and the expected value is that the mean is applied to a specific data set, while the expected value provides information about the distribution of a random variable. What is important is the connection between these two parameters: if the data set to which the mean is applied is a sample from the distribution of the random variable, the arithmetic mean is an unbiased and consistent estimator of the expected value of the random variable. Since the expected value corresponds to the first moment of a distribution, the mean is often used to narrow down the distribution on the basis of empirical data. For the frequently used normal distribution, which is completely determined by its first two moments, the mean is therefore of decisive importance.
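The estimator property can be illustrated by simulation. The sketch below draws samples from a normal distribution with hypothetical parameters $\mu = 5$ and $\sigma = 2$ using Python's `random` module with a fixed seed; as the sample size grows, the arithmetic mean of the sample settles ever more closely around the expected value $\mu$, as consistency predicts. The function name `sample_mean` is illustrative.

```python
import random
import statistics


def sample_mean(n, mu=5.0, sigma=2.0, seed=42):
    """Arithmetic mean of n draws from a normal distribution N(mu, sigma^2)."""
    rng = random.Random(seed)  # fixed seed for reproducible output
    return statistics.fmean(rng.gauss(mu, sigma) for _ in range(n))


for n in (10, 1_000, 100_000):
    # the typical deviation from mu shrinks roughly like sigma / sqrt(n)
    print(n, sample_mean(n))
```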

Relationship between arithmetic, harmonic and geometric mean

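The reciprocal of the harmonic mean is equal to the arithmetic mean of the reciprocals of the numbers:
$$\frac{1}{\bar{x}_{\mathrm{harm}}} = \frac{1}{n}\sum_{i=1}^{n} \frac{1}{x_i}$$

For $n = 2$, the means are related to each other in the following way:
$$\bar{x}_{\mathrm{harm}} = \frac{\bar{x}_{\mathrm{geom}}^2}{\bar{x}_{\mathrm{arithm}}}$$

or, solved for the geometric mean,
$$\bar{x}_{\mathrm{geom}} = \sqrt{\bar{x}_{\mathrm{arithm}} \cdot \bar{x}_{\mathrm{harm}}}$$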
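For two numbers, both relationships are easy to confirm numerically. The helper `means_of_two` below is a hypothetical name used only for this Python sketch, and the pair 2 and 8 is an arbitrary example.

```python
import math


def means_of_two(x1, x2):
    """Arithmetic, geometric and harmonic mean of two positive numbers."""
    a = (x1 + x2) / 2
    g = math.sqrt(x1 * x2)
    h = 2 / (1 / x1 + 1 / x2)
    return a, g, h


a, g, h = means_of_two(2.0, 8.0)
print(a, g, h)                             # arithmetic 5, geometric 4, harmonic 3.2
print(math.isclose(g, math.sqrt(a * h)))   # True: geometric = sqrt(arithmetic * harmonic)
print(math.isclose(1 / h, (1 / 2.0 + 1 / 8.0) / 2))  # True: 1/harmonic = mean of reciprocals
```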

Inequality of the means

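The inequality of the arithmetic and geometric mean compares the values of the arithmetic and geometric mean of two given numbers: for positive variables it always holds that
$$\sqrt{x_1 x_2} \le \frac{x_1 + x_2}{2}$$

The inequality can also be extended to other means, e.g. (for positive variables)
$$\min(x_1, x_2) \le \frac{2}{\frac{1}{x_1} + \frac{1}{x_2}} \le \sqrt{x_1 x_2} \le \frac{x_1 + x_2}{2} \le \sqrt{\frac{x_1^2 + x_2^2}{2}} \le \max(x_1, x_2)$$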

There is also a geometric illustration for two positive variables: the geometric mean follows directly from Euclid's altitude theorem and the harmonic mean from Euclid's leg (cathetus) theorem, using the relationship
$$\bar{x}_{\mathrm{geom}}^2 = \bar{x}_{\mathrm{arithm}} \cdot \bar{x}_{\mathrm{harm}}$$
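The chain of inequalities can be spot-checked on random pairs. This Python sketch (the name `mean_chain` is illustrative) computes the six quantities in the order of the chain and asserts that they never decrease; a tiny relative tolerance absorbs floating-point rounding. A random test is of course no proof.

```python
import math
import random


def mean_chain(x1, x2):
    """min, harmonic, geometric, arithmetic, quadratic mean, max of two positive numbers."""
    return [
        min(x1, x2),
        2 / (1 / x1 + 1 / x2),
        math.sqrt(x1 * x2),
        (x1 + x2) / 2,
        math.sqrt((x1 ** 2 + x2 ** 2) / 2),
        max(x1, x2),
    ]


rng = random.Random(0)  # fixed seed for reproducibility
for _ in range(10_000):
    chain = mean_chain(rng.uniform(0.01, 100), rng.uniform(0.01, 100))
    # every element must be <= its successor, up to rounding
    assert all(lo <= hi * (1 + 1e-12) for lo, hi in zip(chain, chain[1:]))
print("inequality chain holds for all sampled pairs")
```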

Comparison with other measures of central tendency

A mean is often used to describe a central value of a data set. Other measures that fulfill this function are the median and the mode. The median is a value that divides the sorted data set into two halves, while the mode is the value with the highest frequency in the data set. Compared to the median, the mean is more susceptible to outliers and therefore less robust. In addition, since the median is a quantile of the distribution, it can be a value that actually occurs in the original data. This is particularly relevant if values between the given data are not meaningful for other, for example physical, reasons. For sorted values $x_{(1)} \le x_{(2)} \le \dots \le x_{(n)}$, the median is generally determined using the following rule:
$$\tilde{x} = \begin{cases} x_{\left(\frac{n+1}{2}\right)} & n \text{ odd}, \\ \frac{1}{2}\left(x_{\left(\frac{n}{2}\right)} + x_{\left(\frac{n}{2}+1\right)}\right) & n \text{ even}. \end{cases}$$
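The calculation rule for the median and its robustness against outliers can be sketched in Python. The data are hypothetical; `median` implements the usual case distinction (middle value for odd $n$, average of the two middle values for even $n$) with 0-based list indices, and the standard `statistics` module supplies the arithmetic mean and the mode.

```python
import statistics


def median(xs):
    """Middle value of the sorted data (odd n) or mean of the two middle values (even n)."""
    s = sorted(xs)
    n = len(s)
    mid = n // 2
    if n % 2 == 1:
        return s[mid]                 # x_((n+1)/2) in 1-based notation
    return (s[mid - 1] + s[mid]) / 2  # mean of x_(n/2) and x_(n/2 + 1)


data = [21, 23, 24, 26, 28]           # hypothetical measurements
with_outlier = [21, 23, 24, 26, 280]  # last value mistyped by a factor of 10

print(statistics.fmean(data), median(data))                  # 24.4 24
print(statistics.fmean(with_outlier), median(with_outlier))  # 74.8 24
print(statistics.mode([1, 2, 2, 3]))                         # 2, the most frequent value
```

The outlier triples the mean, while the median does not move.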

Other mean values and similar functions

Weighted means

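Weighted means arise when the individual values are given different weights $w_i$ with which they enter the overall mean; for example, when oral and written performance in an examination contribute to the overall grade to different degrees.

For nonnegative weights $w_1, \dots, w_n$ with a positive sum, the weighted versions of the three classic means are
$$\bar{x}_{\mathrm{arithm}} = \frac{\sum_{i=1}^{n} w_i x_i}{\sum_{i=1}^{n} w_i}, \qquad \bar{x}_{\mathrm{geom}} = \left(\prod_{i=1}^{n} x_i^{w_i}\right)^{1 / \sum_{i=1}^{n} w_i}, \qquad \bar{x}_{\mathrm{harm}} = \frac{\sum_{i=1}^{n} w_i}{\sum_{i=1}^{n} \frac{w_i}{x_i}}$$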
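A Python sketch of the three weighted means, assuming positive values and nonnegative weights with a positive sum. The grading example is hypothetical: a written grade of 2.0 that counts twice and an oral grade of 1.0 that counts once. With equal weights, each function reduces to the corresponding unweighted mean.

```python
import math


def weighted_arithmetic(xs, ws):
    """Sum of w_i * x_i divided by the sum of the weights."""
    return sum(w * x for x, w in zip(xs, ws)) / sum(ws)


def weighted_geometric(xs, ws):
    """(prod x_i ** w_i) ** (1 / sum w_i), computed via logarithms."""
    return math.exp(sum(w * math.log(x) for x, w in zip(xs, ws)) / sum(ws))


def weighted_harmonic(xs, ws):
    """Sum of the weights divided by the sum of w_i / x_i."""
    return sum(ws) / sum(w / x for x, w in zip(xs, ws))


grades, weights = [2.0, 1.0], [2, 1]  # hypothetical: written counts twice, oral once
print(weighted_arithmetic(grades, weights))  # 5/3, about 1.667
print(weighted_geometric(grades, weights))   # 4 ** (1/3), about 1.587
print(weighted_harmonic(grades, weights))    # 1.5
```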

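Quadratic and cubic mean

Other means that can be used are the quadratic mean and the cubic mean. The quadratic mean (root mean square) is calculated using the following rule:
$$\bar{x}_{\mathrm{quadr}} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} x_i^2} = \sqrt{\frac{x_1^2 + x_2^2 + \dots + x_n^2}{n}}$$

The cubic mean is determined as follows:
$$\bar{x}_{\mathrm{cubic}} = \sqrt[3]{\frac{1}{n}\sum_{i=1}^{n} x_i^3} = \sqrt[3]{\frac{x_1^3 + x_2^3 + \dots + x_n^3}{n}}$$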
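Both formulas in a short Python sketch; the function names are illustrative. The cubic mean is written for nonnegative values, since Python's `**` with a fractional exponent returns a complex number for a negative base.

```python
def quadratic_mean(xs):
    """Root mean square: square root of the mean of the squares."""
    return (sum(x ** 2 for x in xs) / len(xs)) ** 0.5


def cubic_mean(xs):
    """Cube root of the mean of the cubes (nonnegative values assumed)."""
    return (sum(x ** 3 for x in xs) / len(xs)) ** (1 / 3)


values = [1, 2, 4]
print(quadratic_mean(values))  # sqrt(7), about 2.646
print(cubic_mean(values))      # (73/3) ** (1/3), about 2.898
```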

Logarithmic mean

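The logarithmic mean of two positive numbers $x$ and $y$ is defined as
$$\bar{x}_{\ln}(x, y) = \frac{x - y}{\ln x - \ln y}$$

For $x \neq y$, the logarithmic mean lies between the geometric and the arithmetic mean (for $x = y$ it is not defined because of the division by zero).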
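A Python sketch of the logarithmic mean (the function name is illustrative) that mirrors the definition, including the case $x = y$ in which it is undefined, and shows its position between the geometric and the arithmetic mean for the example pair 2 and 8.

```python
import math


def logarithmic_mean(x, y):
    """(x - y) / (ln x - ln y) for positive x != y."""
    if x <= 0 or y <= 0:
        raise ValueError("logarithmic mean needs positive arguments")
    if x == y:
        raise ZeroDivisionError("not defined for x == y (division by zero)")
    return (x - y) / (math.log(x) - math.log(y))


x, y = 2.0, 8.0
# geometric mean 4 < logarithmic mean (about 4.328) < arithmetic mean 5
print(math.sqrt(x * y), logarithmic_mean(x, y), (x + y) / 2)
```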

Winsorized and trimmed mean

If one can assume that the data are contaminated by "outliers", that is, a few values that are too high or too low, the data can either be trimmed or winsorized (named after Charles P. Winsor), and the trimmed (or truncated) mean (English truncated mean) or the winsorized mean (English Winsorized mean) is calculated. In both cases, the observations are first sorted in increasing order. When trimming, an equal number of values is cut off at the beginning and at the end of the sequence, and the mean is calculated from the remaining values. When winsorizing, on the other hand, the values at the beginning and at the end of the sequence are replaced by the smallest and the largest of the remaining values, respectively.

Example: For 10 real numbers sorted in ascending order, $x_1 \le x_2 \le \dots \le x_{10}$, the 10 % trimmed mean is
$$\bar{x}_{\mathrm{trim}} = \frac{1}{8}\left(x_2 + x_3 + \dots + x_9\right)$$

The 10 % winsorized mean, however, is
$$\bar{x}_{\mathrm{wins}} = \frac{1}{10}\left(x_2 + x_2 + x_3 + \dots + x_9 + x_9\right)$$

The trimmed mean thus lies between the arithmetic mean (no trimming) and the median (maximal trimming). Usually a 20 % trimmed mean is used, i.e., 40 % of the data (20 % at each end) are not taken into account in the calculation. The percentage essentially depends on the number of suspected outliers in the data; for conditions under which trimming of less than 20 % is appropriate, see the literature.
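A Python sketch of both robust means; the function names are illustrative, and rounding the number of cut values down (`k = floor(proportion * n)`) is one convention among several. The proportion must stay below 0.5. For ten sorted values and a proportion of 10 %, `k = 1`, so the functions compute exactly the two example formulas: the trimmed mean averages $x_2$ to $x_9$, the winsorized mean replaces $x_1$ by $x_2$ and $x_{10}$ by $x_9$.

```python
import math


def trimmed_mean(xs, proportion):
    """Cut k = floor(proportion * n) values off each end of the sorted data, average the rest."""
    s = sorted(xs)
    k = math.floor(proportion * len(s))
    kept = s[k:len(s) - k]
    return sum(kept) / len(kept)


def winsorized_mean(xs, proportion):
    """Replace the k smallest values by s[k] and the k largest by s[n - k - 1], then average."""
    s = sorted(xs)
    n = len(s)
    k = math.floor(proportion * n)
    clipped = [s[k]] * k + s[k:n - k] + [s[n - k - 1]] * k
    return sum(clipped) / n


data = [1, 2, 3, 4, 5, 6, 7, 8, 10, 100]  # hypothetical data with one outlier
print(sum(data) / len(data))        # 14.6, pulled up by the outlier
print(trimmed_mean(data, 0.10))     # 5.625, the mean of 2, ..., 8, 10
print(winsorized_mean(data, 0.10))  # 5.7, the mean of 2, 2, 3, ..., 8, 10, 10
```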

Quartile mean

The quartile mean is defined as the mean of the 1st and 3rd quartile:
$$\bar{x}_{\mathrm{quart}} = \frac{x_{0.25} + x_{0.75}}{2}$$

Here $x_{0.25}$ denotes the 25 % quantile (1st quartile) and, correspondingly, $x_{0.75}$ the 75 % quantile (3rd quartile) of the measured values.

The quartile mean is more robust than the arithmetic mean, but less robust than the median .

Middle of the shortest half

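Let $I = [a, b]$ be the shortest interval among all intervals that contain at least half of the observations, i.e. $\#\{i : x_i \in I\} \ge \frac{n}{2}$; then its midpoint $\frac{a + b}{2}$ is the middle of the shortest half. For unimodal symmetric distributions, this value converges to the arithmetic mean.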
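A Python sketch of the middle of the shortest half, assuming that "half" means a window of $h = \lceil n/2 \rceil$ consecutive sorted values; other texts use slightly different window sizes. The function name `shorth_midpoint` and the data are illustrative.

```python
import math


def shorth_midpoint(xs):
    """Midpoint of the shortest interval spanned by h = ceil(n/2) consecutive sorted values."""
    s = sorted(xs)
    h = math.ceil(len(s) / 2)
    # start index of the window [s[i], s[i + h - 1]] with the smallest length
    best = min(range(len(s) - h + 1), key=lambda i: s[i + h - 1] - s[i])
    return (s[best] + s[best + h - 1]) / 2


data = [1, 2, 2.5, 3, 10, 20]  # hypothetical data
print(shorth_midpoint(data))   # 2.5, midpoint of the shortest half [2, 3]
```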

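Gastwirth-Cohen mean

The Gastwirth-Cohen mean uses three quantiles of the data: the $\alpha$-quantile and the $(1 - \alpha)$-quantile, each with weight $\lambda$, and the median with weight $1 - 2\lambda$:
$$\bar{x}_{\alpha, \lambda} = \lambda\, x_{\alpha} + (1 - 2\lambda)\, x_{0.5} + \lambda\, x_{1 - \alpha}$$

with $0 \le \alpha \le 0.5$ and $0 \le \lambda \le 0.5$.

Special cases are

- the quartile mean with $\alpha = 0.25$, $\lambda = 0.5$, and

- the trimean with $\alpha = 0.25$, $\lambda = 0.25$.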
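A Python sketch of the Gastwirth-Cohen mean and its two special cases, the quartile mean and the trimean. Sample quantiles can be defined in several ways; the helper `quantile` assumes linear interpolation between order statistics, which is only one common convention, so results on small samples may differ from other software. All function names are illustrative.

```python
def quantile(xs, p):
    """Empirical p-quantile by linear interpolation between order statistics (assumed convention)."""
    s = sorted(xs)
    pos = p * (len(s) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (pos - lo) * (s[hi] - s[lo])


def gastwirth_cohen(xs, alpha, lam):
    """lam * q(alpha) + (1 - 2 * lam) * median + lam * q(1 - alpha)."""
    if not (0 <= alpha <= 0.5 and 0 <= lam <= 0.5):
        raise ValueError("need 0 <= alpha <= 0.5 and 0 <= lam <= 0.5")
    return (lam * quantile(xs, alpha)
            + (1 - 2 * lam) * quantile(xs, 0.5)
            + lam * quantile(xs, 1 - alpha))


def quartile_mean(xs):
    return gastwirth_cohen(xs, 0.25, 0.5)


def trimean(xs):
    return gastwirth_cohen(xs, 0.25, 0.25)


data = [1, 2, 2, 3, 5, 8, 13, 21, 34]  # hypothetical skewed data
print(quartile_mean(data))  # 7.5, the mean of the quartiles 2 and 13
print(trimean(data))        # 6.25, i.e. (2 + 2 * 5 + 13) / 4
```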

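Range mean

The range mean (English mid-range) is defined as the arithmetic mean of the largest and the smallest observation:
$$\bar{x}_{\mathrm{mr}} = \frac{x_{\max} + x_{\min}}{2}$$

This is equivalent to
$$\bar{x}_{\mathrm{mr}} = x_{\min} + \frac{x_{\max} - x_{\min}}{2},$$

i.e., the smallest value plus half the range.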

The "a-means"

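For a given real vector $a = (a_1, \dots, a_n)$ with $a_i \ge 0$ and $a_1 + \dots + a_n = 1$, the expression
$$[a] = \frac{1}{n!}\sum_{\sigma} x_{\sigma(1)}^{a_1} \cdots x_{\sigma(n)}^{a_n},$$

where the sum runs over all permutations $\sigma$ of $\{1, \dots, n\}$, is called the "$a$-mean" $[a]$ of the nonnegative real numbers $x_1, \dots, x_n$.

In the case $a = (1, 0, \dots, 0)$, this gives exactly the arithmetic mean of the numbers $x_1, \dots, x_n$; in the case $a = \left(\frac{1}{n}, \dots, \frac{1}{n}\right)$, the geometric mean results exactly.

Muirhead's inequality applies to the $a$-means.

Example: Let $x = (x_1, x_2, x_3) = (4, 5, 6)$ and $a = \left(\frac{1}{2}, \frac{1}{3}, \frac{1}{6}\right)$.

- Then $n = 3$, $n! = 6$, and the set of permutations of $\{1, 2, 3\}$ (in shorthand) is $\{123, 132, 213, 231, 312, 321\}$.

This results in
$$\begin{aligned} [a] &= \frac{1}{3!}\left(x_1^{1/2} x_2^{1/3} x_3^{1/6} + x_1^{1/2} x_3^{1/3} x_2^{1/6} + x_2^{1/2} x_1^{1/3} x_3^{1/6} + x_2^{1/2} x_3^{1/3} x_1^{1/6} + x_3^{1/2} x_1^{1/3} x_2^{1/6} + x_3^{1/2} x_2^{1/3} x_1^{1/6}\right) \\ &= \frac{1}{6}\left(4^{1/2} \cdot 5^{1/3} \cdot 6^{1/6} + 4^{1/2} \cdot 6^{1/3} \cdot 5^{1/6} + 5^{1/2} \cdot 4^{1/3} \cdot 6^{1/6} + 5^{1/2} \cdot 6^{1/3} \cdot 4^{1/6} + 6^{1/2} \cdot 4^{1/3} \cdot 5^{1/6} + 6^{1/2} \cdot 5^{1/3} \cdot 4^{1/6}\right) \\ &\approx 4.94 \end{aligned}$$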
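The $a$-mean can be computed by brute force over all $n!$ permutations, which is only practical for small $n$. This Python sketch (the function name `a_mean` is illustrative; `math.prod` needs Python 3.8 or later) reproduces the worked example and the two special cases.

```python
import itertools
import math


def a_mean(xs, a):
    """[a] = (1/n!) * sum over permutations sigma of prod_i xs[sigma(i)] ** a[i]."""
    n = len(xs)
    if len(a) != n:
        raise ValueError("x and a must have the same length")
    total = sum(
        # perm[i] plays the role of sigma(i)
        math.prod(xs[j] ** ai for j, ai in zip(perm, a))
        for perm in itertools.permutations(range(n))
    )
    return total / math.factorial(n)


print(round(a_mean([4, 5, 6], [1 / 2, 1 / 3, 1 / 6]), 2))  # 4.94
print(a_mean([1, 2, 4], [1, 0, 0]))              # arithmetic mean 7/3
print(a_mean([1, 2, 4], [1 / 3, 1 / 3, 1 / 3]))  # geometric mean 2, up to rounding
```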

Moving averages

Moving averages are used in the dynamic analysis of measured values. They are also a common tool of technical analysis in financial mathematics. Moving averages can filter stochastic noise out of signals that evolve over time; they are often FIR filters. Note, however, that most moving averages lag behind the actual signal. For predictive filters, see, e.g., Kalman filters.

Moving averages usually require a parameter that specifies the size of the trailing sample window or, for exponential moving averages, the weight of the previous value.

Common moving averages are:

- arithmetic moving averages (Simple Moving Average, SMA),

- exponential moving averages (Exponential Moving Average, EMA),

- double exponential moving averages (Double EMA, DEMA),

- triple exponential moving averages (Triple EMA, TEMA),

- linear weighted moving averages (linearly decreasing weighting),

- squared weighted moving averages and

- further weightings: sine, triangular, ...
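The two most common variants can be sketched in Python. The window length of the SMA and the smoothing factor `alpha` of the EMA play the role of the parameter mentioned above (the weight of the previous value is then `1 - alpha`); starting the EMA at the first observation is an assumption, as initialization conventions vary. The function names are illustrative. On a steadily rising series, both averages stay below the current value, which illustrates the lag.

```python
def simple_moving_average(xs, window):
    """Arithmetic mean of each trailing window of length `window`."""
    return [sum(xs[i - window + 1:i + 1]) / window for i in range(window - 1, len(xs))]


def exponential_moving_average(xs, alpha):
    """e_t = alpha * x_t + (1 - alpha) * e_(t-1), started at e_0 = x_0 (assumed)."""
    ema = [xs[0]]
    for x in xs[1:]:
        ema.append(alpha * x + (1 - alpha) * ema[-1])
    return ema


series = [1, 2, 3, 4, 5, 6]  # hypothetical, steadily rising signal
print(simple_moving_average(series, 3))         # [2.0, 3.0, 4.0, 5.0]
print(exponential_moving_average(series, 0.5))  # [1, 1.5, 2.25, 3.125, 4.0625, 5.03125]
```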

In the financial literature, so-called adaptive moving averages can also be found, which automatically adapt to a changing environment (different volatility / spread, etc.):

- Kaufman's Adaptive Moving Average (KAMA) as well as

- Variable Index Dynamic Average (VIDYA).

For applications of moving averages, see also moving averages (chart analysis) and the MA model.

Combined means

Means can be combined; this is how the arithmetic-geometric mean arises, which lies between the arithmetic and the geometric mean.
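A Python sketch of the arithmetic-geometric mean: both numbers are repeatedly replaced by their arithmetic and geometric mean until they agree. The function name, the tolerance and the iteration cap are illustrative choices.

```python
import math


def agm(a, b, tol=1e-15, max_iter=100):
    """Arithmetic-geometric mean of two positive numbers.

    Repeatedly replaces (a, b) by their arithmetic and geometric mean;
    both sequences converge quadratically to a common limit.
    """
    if a <= 0 or b <= 0:
        raise ValueError("positive arguments required")
    for _ in range(max_iter):
        if abs(a - b) <= tol * max(a, b):
            break
        a, b = (a + b) / 2, math.sqrt(a * b)
    return (a + b) / 2


x, y = 1.0, 2.0
print(math.sqrt(x * y), agm(x, y), (x + y) / 2)  # geometric < AGM < arithmetic
```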

Generalized means

There are a number of other functions with which the known means and further means can be generated.

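Hölder means

For positive numbers $x_1, \dots, x_n$ and real $k \neq 0$, the $k$-th power mean, also called the generalized mean (English $k$-th power mean), is defined as
$$\bar{x}(k) = \sqrt[k]{\frac{1}{n}\sum_{i=1}^{n} x_i^k}$$

For $k = 0$, the value is defined by continuous extension:
$$\bar{x}(0) = \lim_{k \to 0} \bar{x}(k) = \sqrt[n]{\prod_{i=1}^{n} x_i}$$

Note that neither the notation nor the name is used consistently in the literature.

For example, $k = -1, 0, 1, 2, 3$ yield the harmonic, geometric, arithmetic, quadratic and cubic mean, respectively. For $k \to -\infty$ one obtains the minimum, for $k \to +\infty$ the maximum of the numbers.

In addition, for fixed numbers $x_1, \dots, x_n$: the larger $k$ is, the larger (more precisely, not smaller) $\bar{x}(k)$ is; from this follows the generalized inequality of means
$$\min(x_1, \dots, x_n) \le \bar{x}(-1) \le \bar{x}(0) \le \bar{x}(1) \le \bar{x}(2) \le \bar{x}(3) \le \max(x_1, \dots, x_n)$$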
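A Python sketch of the power mean, including the continuous extension at $k = 0$ and the limits $k \to \pm\infty$; the function name is illustrative. Evaluating it for increasing $k$ shows the monotonicity behind the generalized inequality of means.

```python
import math


def power_mean(xs, k):
    """k-th power (Hölder) mean of positive numbers.

    k = 0 uses the continuous extension (geometric mean);
    k = -inf and k = +inf give the minimum and the maximum.
    """
    if k == math.inf:
        return max(xs)
    if k == -math.inf:
        return min(xs)
    n = len(xs)
    if k == 0:
        return math.exp(sum(math.log(x) for x in xs) / n)
    return (sum(x ** k for x in xs) / n) ** (1 / k)


xs = [1, 2, 4]
for k in (-math.inf, -1, 0, 1, 2, 3, math.inf):
    print(k, power_mean(xs, k))  # the printed values never decrease as k grows
```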

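Lehmer means

The Lehmer mean is another generalized mean; of order $p$ it is defined by
$$L_p(x_1, \dots, x_n) = \frac{\sum_{i=1}^{n} x_i^p}{\sum_{i=1}^{n} x_i^{p-1}}$$

It has the special cases

- $L_0(x_1, \dots, x_n)$ is the harmonic mean;

- $L_{1/2}(x_1, x_2)$ is the geometric mean of $x_1$ and $x_2$;

- $L_1(x_1, \dots, x_n)$ is the arithmetic mean.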
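A one-line Python sketch of the Lehmer mean for positive values (the function name is illustrative), checked on the example pair 2 and 8 against the three special cases.

```python
def lehmer_mean(xs, p):
    """Lehmer mean L_p: sum of x ** p divided by sum of x ** (p - 1), for positive x."""
    return sum(x ** p for x in xs) / sum(x ** (p - 1) for x in xs)


pair = [2.0, 8.0]
print(lehmer_mean(pair, 0))    # harmonic mean, 3.2
print(lehmer_mean(pair, 0.5))  # geometric mean (two numbers only), 4 up to rounding
print(lehmer_mean(pair, 1))    # arithmetic mean, 5.0
```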

Stolarsky means

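The Stolarsky mean of two positive numbers $x \neq y$ is defined for $p \notin \{0, 1\}$ by
$$S_p(x, y) = \left(\frac{x^p - y^p}{p\,(x - y)}\right)^{\frac{1}{p - 1}}$$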
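A Python sketch of the Stolarsky mean for $x \neq y$ and $p \notin \{0, 1\}$; the limit cases are deliberately left out, and the function name is illustrative. For $p = 2$ it gives the arithmetic mean and for $p = -1$ the geometric mean, which the example checks for the pair 2 and 8.

```python
import math


def stolarsky_mean(x, y, p):
    """Stolarsky mean S_p(x, y) for positive x != y and p not in {0, 1}."""
    if x <= 0 or y <= 0 or x == y:
        raise ValueError("need positive x != y")
    if p in (0, 1):
        raise ValueError("p = 0 and p = 1 need limit forms, not covered by this sketch")
    return ((x ** p - y ** p) / (p * (x - y))) ** (1 / (p - 1))


x, y = 2.0, 8.0
print(stolarsky_mean(x, y, 2), (x + y) / 2)        # both the arithmetic mean
print(stolarsky_mean(x, y, -1), math.sqrt(x * y))  # both the geometric mean
```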

Integral representation according to Chen

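The function
$$f(t) = \frac{\int_a^b x^t\,dx}{\int_a^b x^{t-1}\,dx}, \qquad 0 < a < b,$$

yields the known means of $a$ and $b$ for various arguments $t$:

- $f(-2)$ is the harmonic mean.

- $f\left(-\tfrac{1}{2}\right)$ is the geometric mean.

- $f(1)$ is the arithmetic mean.

From the continuity and monotonicity of the function $f$ defined in this way, the inequality of means follows:
$$\bar{x}_{\mathrm{harm}} = f(-2) \le f\left(-\tfrac{1}{2}\right) = \bar{x}_{\mathrm{geom}} \le f(1) = \bar{x}_{\mathrm{arithm}}$$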
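The integral representation can be evaluated in closed form, since $\int_a^b x^s\,dx$ is elementary (with a logarithm for $s = -1$). This Python sketch assumes the ratio form of $f$ given in the text and uses illustrative function names; for the example $a = 2$, $b = 8$ it reproduces the three classic means and shows that $f$ increases with $t$.

```python
import math


def power_integral(a, b, s):
    """Integral of x ** s over [a, b] for 0 < a < b (logarithm case for s = -1)."""
    if s == -1:
        return math.log(b / a)
    return (b ** (s + 1) - a ** (s + 1)) / (s + 1)


def chen_f(t, a, b):
    """Integral of x ** t divided by integral of x ** (t - 1), both over [a, b]."""
    return power_integral(a, b, t) / power_integral(a, b, t - 1)


a, b = 2.0, 8.0
for t in (-2, -1, -0.5, 0, 1):
    print(t, chen_f(t, a, b))  # increasing in t: harmonic 3.2, ..., geometric 4, ..., arithmetic 5
```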

Mean of a function

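The arithmetic mean of a continuous function $f$ on a closed interval $[a, b]$ is
$$\bar{f} = \frac{1}{b - a}\int_a^b f(x)\,dx \approx \frac{1}{n}\sum_{i=1}^{n} f(x_i),$$

where $n$ is the number of support points $x_i$ (equally spaced in $[a, b]$).

The root mean square of a continuous function is
$$f_{\mathrm{rms}} = \sqrt{\frac{1}{b - a}\int_a^b f(x)^2\,dx}$$

These quantities receive considerable attention in engineering; see DC value and effective value.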
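The approximation by support points can be sketched in Python with the midpoint rule, i.e. equally spaced sample points in the middle of $n$ subintervals; this choice and the function names are assumptions for illustration. For one period of a sine-shaped alternating signal with amplitude 1, the mean is close to 0 and the root mean square is close to $1/\sqrt{2} \approx 0.7071$.

```python
import math


def function_mean(f, a, b, n=10_000):
    """Approximate (1/(b-a)) * integral of f over [a, b] by averaging f at n midpoints."""
    h = (b - a) / n
    return sum(f(a + (i + 0.5) * h) for i in range(n)) / n


def function_rms(f, a, b, n=10_000):
    """Approximate sqrt((1/(b-a)) * integral of f**2 over [a, b]) with the same midpoints."""
    return math.sqrt(function_mean(lambda x: f(x) ** 2, a, b, n))


print(function_mean(math.sin, 0, 2 * math.pi))  # close to 0
print(function_rms(math.sin, 0, 2 * math.pi))   # close to 1/sqrt(2), about 0.7071
```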

Literature

- F. Ferschl: Descriptive Statistics. 3rd edition. Physica-Verlag, Würzburg, ISBN 3-7908-0336-7.

- P. S. Bullen: Handbook of Means and Their Inequalities. Kluwer Academic Publishers, 2003, ISBN 1-4020-1522-4 (comprehensive discussion of means and the inequalities associated with them).

- G. H. Hardy, J. E. Littlewood, G. Pólya: Inequalities. Cambridge University Press, 1964.

- E. Beckenbach, R. Bellman: Inequalities. Springer, Berlin 1961.

- F. Sixtl: The myth of the mean. 2nd edition. R. Oldenbourg Verlag, Munich/Vienna 1996, ISBN 3-486-23320-3.

Web links

- Averaging on Scholarpedia (English)

References

- F. Ferschl: Descriptive Statistics. 3rd edition. Physica-Verlag, Würzburg, ISBN 3-7908-0336-7, pp. 48–74.

- R. K. Kowalchuk, H. J. Keselman, R. R. Wilcox, J. Algina: Multiple comparison procedures, trimmed means and transformed statistics. In: Journal of Modern Applied Statistical Methods. Vol. 5, 2006, pp. 44–65, doi:10.22237/jmasm/1146456300.

- R. R. Wilcox, H. J. Keselman: Power analysis when comparing trimmed means. In: Journal of Modern Applied Statistical Methods. Vol. 1, 2001, pp. 24–31, doi:10.22237/jmasm/1020254820.

- L. Davies: Data Features. In: Statistica Neerlandica. Vol. 49, 1995, pp. 185–245, doi:10.1111/j.1467-9574.1995.tb01464.x.

- J. L. Gastwirth, M. L. Cohen: Small sample behavior of some robust linear estimators of location. In: Journal of the American Statistical Association. Vol. 65, 1970, pp. 946–973, doi:10.1080/01621459.1970.10481137, JSTOR 2284600.

- Eric W. Weisstein: Lehmer Mean. In: MathWorld (English).

- H. Chen: Means Generated by an Integral. In: Mathematics Magazine. Vol. 78, No. 5 (Dec. 2005), pp. 397–399, JSTOR 30044201.
