Complexity class

In complexity theory , problems or algorithms are examined in terms of how complex they are to calculate with regard to a certain resource, mostly with regard to the time required or the (memory) space required. In the event of problems, the “most cost-effective” solution method is always adopted. The effort generally depends on the “size” of the input and how many elements it comprises. The asymptotic effort is estimated, i.e. for a large number of input elements. This shows that the problems with regard to their effort form groups, the effort of which increases in a similar way for large input quantities; these groups are called complexity classes . A complexity class is a set of problems that can be calculated in a certain resource-limited calculation model .

A complexity class is defined by an upper limit for the demand for a specific resource, provided that a calculation model is used . The resources most frequently considered are the number of computation steps required to solve a problem ( time complexity ) or the need for storage space (space or space complexity ). The resource requirement is usually measured by its asymptotic behavior at the upper limit (the worst case ) depending on the length of the input (problem size).

Other measures of the resource requirement are also possible, for example the statistical mean value over all possible inputs. However, it is difficult to analyze formally.

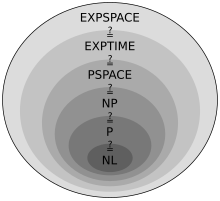

Since a complexity class only defines an upper limit for the resource requirement, a hierarchy of complexity classes is thus formed for a given calculation model, with less powerful classes being completely contained in the higher complexity classes. In addition, formal methods can also be used to relate classes defined using different calculation models or resources.

Classification of complexity classes

The complexity is often described with the help of Landau symbols in order to abstract from the peculiarities of certain implementations and calculation models. The difficulty in determining the exact complexity of a problem is that one would have to consider all of the possible algorithms for that problem. So you have to prove that a certain algorithm is optimal for this problem.

The complexity class of an algorithm is only important in a specific implementation on a machine, e.g. B. on a Turing machine or in the lambda calculus . However, the complexity classes of the implementation of an algorithm on different machine models are mostly similar or even - depending on the level of abstraction - the same.

It is found that only certain classes of this size can be sensibly differentiated, all of which are described with a characteristic equation. So interest z. For example, constant factors in the complexity of an algorithm are not - after all, in reality ( computers ) there are also machines whose execution speed differs by a constant factor. Here it also becomes clear why no units are needed and how the Landau notation is to be understood.

The distinction between time complexity and space complexity plays an important role in the classification of the complexity classes. When these are considered, time and space are limited. Furthermore, one differentiates between deterministic machines and nondeterministic ones . Informally, it can be said that space is more powerful than time and nondeterminism is usually more powerful than determinism, but only exponentially more powerful in each case. The Savitch theorem and the Immerman and Szelepcsényi theorem give more precise estimates .

A problem A, which can be reduced ( reduced ) to a problem B with little effort , belongs at least to the complexity class of B. B is then named more difficult than A. A problem A is K -difficult if all other problems of class K can be traced back to A. If a K -difficult problem A is itself in class K , it is called K -complete .

example

- Lawn mowing has at least linear complexity (in terms of area), because you have to drive over the entire area at least once. This means that the time required to mow m² lawn is ( * constant) seconds. For a lawn that is 3 * as large, it takes 3 * as long to mow - the time required increases linearly.

- Simple ("stupid") sorting methods often have a quadratic time complexity . E.g. 15 seconds to sort them would take (with this simple procedure) 100 * as long for 10 * as many books, and 2500 * as much time for 50 * as many books - the time expenditure increases quadratically.

- Searching in the phone book, on the other hand, has only logarithmic complexity in a " binary search " if you always open the remaining search area in the middle, whether you are too far ahead or behind the name you are looking for.

If the phone book is twice as thick, you only open it 1 * more, because that just halves the search area. The time it takes to search for entries grows with it . Searching in twice as many phone numbers does not need twice as many search steps, just one additional step.

See also

Individual evidence

- ↑ Natalia Kliewer: Complexity Theory . In: Lexikon der Wirtschaftsinformatik , Online-Lexikon, Oldenbourg Wissenschaftsverlag.