Phrase structure grammar

The term phrase structure grammar denotes formal grammars that, following the principle of constituency, decompose a sentence step by step into smaller units. This model, which essentially corresponds to constituent grammar, is used in both theoretical computer science and linguistics and differs in detail depending on the field of application. It is to be distinguished from dependency grammar, with its strict mother-daughter assignment of words during decomposition.

Definition of phrase structure grammar

Noam Chomsky formally defined a phrase structure grammar as a set of production rules (phrase structure rules) over an alphabet, together with a set of initial strings. Chomsky restricted the production rules so that each rewriting step replaces exactly one symbol of a string, and that symbol must not be deleted. Under this restriction, phrase structure grammars correspond to the context-sensitive grammars.

The production rules are thus context-sensitive in the sense of the Chomsky hierarchy, and usually even context-free. Accordingly, in theoretical computer science, type 1 and type 2 grammars (i.e. context-sensitive and context-free grammars) are sometimes also called phrase structure grammars. Other authors, however, apply the term to all formal grammars, including the unrestricted (type 0) grammars.
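Chomsky's restriction can be stated as a test on individual rules: a context-sensitive rule has the form αAβ → αγβ, rewriting one nonterminal A in the context α…β, with γ non-empty. The following Python sketch checks that strict form and reports the most restrictive type a rule set satisfies. It assumes, as an illustrative encoding, that rules are pairs of symbol tuples and that the set of nonterminals is given; the function names are hypothetical. It does not implement the broader "non-contracting" definition of type 1 that some textbooks use.

```python
def is_context_free(lhs, rhs, nonterminals):
    """Type 2: the left-hand side is a single nonterminal."""
    return len(lhs) == 1 and lhs[0] in nonterminals


def is_context_sensitive(lhs, rhs, nonterminals):
    """Type 1 in the strict form alpha A beta -> alpha gamma beta.

    Exactly one nonterminal A is rewritten, the context alpha/beta is
    copied unchanged, and A may not be deleted (gamma is non-empty).
    """
    for i, sym in enumerate(lhs):
        if sym not in nonterminals:
            continue
        alpha, beta = lhs[:i], lhs[i + 1:]
        gamma_len = len(rhs) - len(alpha) - len(beta)
        # gamma_len >= 1 is checked first, so the slices below are safe.
        if (gamma_len >= 1
                and rhs[:len(alpha)] == alpha
                and rhs[len(rhs) - len(beta):] == beta):
            return True
    return False


def chomsky_type(rules, nonterminals):
    """Most restrictive type (2, 1 or 0) that every rule satisfies."""
    if all(is_context_free(l, r, nonterminals) for l, r in rules):
        return 2
    if all(is_context_sensitive(l, r, nonterminals) for l, r in rules):
        return 1
    return 0


if __name__ == "__main__":
    nt = {"S", "NP", "VP", "D", "N", "V"}
    cf = [(("S",), ("NP", "VP")), (("NP",), ("D", "N")), (("D",), ("the",))]
    print(chomsky_type(cf, nt))                                         # 2
    print(chomsky_type([(("a", "A", "b"), ("a", "x", "y", "b"))], {"A"}))  # 1
    print(chomsky_type([(("A", "B"), ("B",))], {"A", "B"}))             # 0: A is deleted
```

The last rule deletes the symbol A, which Chomsky's restriction forbids, so the rule set falls outside type 1.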

Phrase Structure Rules

The production rules of Chomsky's early phrase structure grammar are known as phrase structure rules. These rules generate syntactic structures according to the following scheme:

- A → BC

This rule specifies that a constituent A is replaced by the constituents B and C. Using this scheme, entire sentences can be generated:

- S → NP VP

- NP → D N

- D → the

- N → child

- VP → V NP

- V → drinks

- NP → D N

- D → a

- N → cola

These rules generate the sentence "The child drinks a cola." In linguistics, phrase structure grammars are accordingly grammars that consist of such (or similar) rules. The structure of the sentence can be represented as a tree diagram, and the tree in turn records the derivation history of the sentence.
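Applied in the order listed, the nine rules of the example grammar (with the determiner "the" and the noun "cola") form a leftmost derivation: each rule rewrites the leftmost nonterminal of the current string. The following Python sketch replays that derivation and records every intermediate string. The list encoding of the rules and the function name `derive` are illustrative assumptions, not standard notation.

```python
# Rules in the order listed above, as (left-hand side, right-hand side).
RULES = [
    ("S", ["NP", "VP"]),
    ("NP", ["D", "N"]),
    ("D", ["the"]),
    ("N", ["child"]),
    ("VP", ["V", "NP"]),
    ("V", ["drinks"]),
    ("NP", ["D", "N"]),
    ("D", ["a"]),
    ("N", ["cola"]),
]


def derive(rules, start="S"):
    """Apply each rule to the leftmost nonterminal; return all sentential forms."""
    # Every symbol that appears on a left-hand side counts as a nonterminal.
    nonterminals = {lhs for lhs, _ in rules}
    form = [start]
    history = [form]
    for lhs, rhs in rules:
        # Position of the leftmost nonterminal (None if only words remain).
        i = next((k for k, sym in enumerate(form) if sym in nonterminals), None)
        if i is None or form[i] != lhs:
            raise ValueError(f"{lhs} -> {' '.join(rhs)} does not apply to {form}")
        # Replace exactly one symbol by the rule's right-hand side.
        form = form[:i] + rhs + form[i + 1:]
        history.append(form)
    return history


if __name__ == "__main__":
    for step in derive(RULES):
        print(" ".join(step))
```

Running it prints ten sentential forms, from `S` through `NP VP` and `D N VP` down to `the child drinks a cola`. Reordering the list so that a rule no longer matches the leftmost nonterminal raises `ValueError`, which shows that the sequence of rules, not just the rule set, encodes the derivation.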

Such a tree shows how a sentence is built up from phrases and how these are broken down further into the smallest constituents, usually the words. This decomposition follows the principle of constituency, which also underlies IC analysis (immediate constituent analysis).
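Because a leftmost derivation expands nodes top-down and left to right, the same rule sequence can be read directly as a constituent tree. The Python sketch below builds that tree for the example sentence, prints it as a labelled bracketing, and lists the immediate constituents of a node, i.e. one step of IC analysis. The tuple representation `(label, children)` and the helper names are hypothetical choices made for illustration.

```python
# Same rule sequence as the example grammar; read in order, it expands
# the tree top-down and left to right.
RULES = [
    ("S", ["NP", "VP"]),
    ("NP", ["D", "N"]),
    ("D", ["the"]),
    ("N", ["child"]),
    ("VP", ["V", "NP"]),
    ("V", ["drinks"]),
    ("NP", ["D", "N"]),
    ("D", ["a"]),
    ("N", ["cola"]),
]
NONTERMINALS = {lhs for lhs, _ in RULES}


def build_tree(symbol, rules):
    """Expand `symbol` with the next rule; return a (label, children) node."""
    lhs, rhs = next(rules)
    if lhs != symbol:
        raise ValueError(f"expected a rule for {symbol}, got {lhs}")
    # Children are expanded left to right, consuming rules in order.
    children = [build_tree(c, rules) if c in NONTERMINALS else c for c in rhs]
    return (symbol, children)


def leaves(node):
    """The words dominated by a node, left to right."""
    if isinstance(node, str):
        return [node]
    return [w for child in node[1] for w in leaves(child)]


def bracket(node):
    """Labelled bracketing, e.g. [NP [D the] [N child]]."""
    if isinstance(node, str):
        return node
    label, children = node
    return "[" + label + " " + " ".join(bracket(c) for c in children) + "]"


def immediate_constituents(node):
    """Word strings of a node's direct daughters (one step of IC analysis)."""
    return [" ".join(leaves(c)) for c in node[1]]


tree = build_tree("S", iter(RULES))

if __name__ == "__main__":
    print(bracket(tree))
    print(immediate_constituents(tree))
```

Here `bracket(tree)` yields `[S [NP [D the] [N child]] [VP [V drinks] [NP [D a] [N cola]]]]`, and `immediate_constituents(tree)` splits S into "the child" and "drinks a cola", the first cut of an IC analysis; applying it to the VP node gives "drinks" and "a cola".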

The following grammars are based on the constituent model:

- Phrase structure grammars (= constituent grammars)

- Generalized Phrase Structure Grammar

- Head-driven Phrase Structure Grammar

- Categorial grammar

- Lexical-functional grammar

- Minimalist program

- Government and binding theory

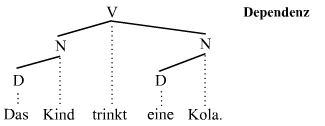

Dependency grammars

Some authors draw a strict distinction between phrase structure grammars and dependency grammars: the former are based on the principle of constituency, the latter on the principle of dependency. In a dependency grammar, the sentence above is decomposed without phrase nodes: the finite verb "drinks" is the root, the two nouns depend on it, and each determiner depends on its noun.
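Where a constituent tree adds phrase nodes (NP, VP) above the words, a dependency analysis links the words themselves, so the structure has exactly one node per word. The Python sketch below encodes a dependency analysis of the example sentence as a list of head indices. It assumes the common convention that the finite verb is the root and that determiners depend on their nouns; some dependency analyses instead treat the determiner as the head of the noun. The names `HEADS`, `is_tree` and `render` are hypothetical.

```python
WORDS = ["the", "child", "drinks", "a", "cola"]
# HEADS[i] is the index of the head of WORDS[i]; None marks the root.
HEADS = [1, 2, None, 4, 2]


def dependents(heads, i):
    """Indices of the words whose head is word i."""
    return [j for j, h in enumerate(heads) if h == i]


def is_tree(heads):
    """Exactly one root, and every word reaches it without a cycle."""
    if sum(h is None for h in heads) != 1:
        return False
    for start in range(len(heads)):
        seen, j = set(), start
        while heads[j] is not None:
            if j in seen:
                return False
            seen.add(j)
            j = heads[j]
    return True


def render(words, heads):
    """Indented outline of the dependency tree, root first."""
    lines = []

    def visit(i, depth):
        lines.append("  " * depth + words[i])
        for j in dependents(heads, i):
            visit(j, depth + 1)

    visit(heads.index(None), 0)
    return lines


if __name__ == "__main__":
    assert is_tree(HEADS)
    print("\n".join(render(WORDS, HEADS)))
```

The outline shows "drinks" at the top, "child" and "cola" one level below, and "the" and "a" under their nouns: five nodes for five words, compared with the eleven nodes (six phrasal and category labels above five words, counting S, NP, VP and the word-class nodes) in the constituent tree.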

The following grammars are based on the principle of dependence:

- Dependency grammars

- Meaning-text theory

- Extensible Dependency Grammar

- Functional generative description

- Lexicase Grammar

- Word Grammar

Literature

- Vilmos Ágel et al. (Eds.): Dependency and Valency. Handbooks of Linguistics and Communication Science 25/1–2, pp. 188–229. Berlin, New York: Mouton de Gruyter, 2003/6.

- David Crystal: The Cambridge Encyclopedia of Language. Campus Verlag, Frankfurt 1993, pp. 95–97. ISBN 3-593-34824-1.

- Franz Hundsnurscher: Syntax. In: Lexicon of German Linguistics. 2nd, completely revised and enlarged edition. Edited by Hans Peter Althaus, Helmut Henne, Herbert Ernst Wiegand. Niemeyer, Tübingen 1980, pp. 211–242. ISBN 3-484-10389-2.

- Theodor Lewandowski: Linguistic Dictionary. Volume II. 4th, revised edition. Quelle & Meyer, Heidelberg 1985. ISBN 3-494-02021-3.

References

- Noam Chomsky: Three models for the description of language. In: IRE Transactions on Information Theory. Vol. 2, 1956, p. 117.

- D. J. Hand: Artificial Intelligence and Psychiatry. The Scientific Basis of Psychiatry, No. 1. Cambridge University Press, 1993, ISBN 978-0-521-25871-5.

- Grzegorz Rozenberg, Arto Salomaa: Word, language, grammar. In: Handbook of Formal Languages. Vol. 1. Springer, 1997, p. 176.

- Roland Hausser: Human-machine communication in natural language. In: Fundamentals of Computational Linguistics. Springer, Berlin 2000, ISBN 3-540-67187-0, p. 156.

- Jule Philippi: Introduction to Generative Grammar. In: Study books on linguistics. Vol. 12. Vandenhoeck & Ruprecht, 2008, ISBN 3-525-26548-4, p. 35.

- Otto Vollnhals: A multilingual dictionary of artificial intelligence: English, German, French, Spanish, Italian. Routledge Chapman & Hall, 1992, ISBN 0-415-07465-7, p. 185.

- On dependency grammar, cf. Ágel et al. (2003/6).