The two-sample t-test is a significance test from mathematical statistics. In its usual form, it uses the means of two samples to test whether the means of two populations are equal or differ from one another.

There are two variants of the two-sample t-test:

- one for two independent samples from populations with equal standard deviations, and

- one for two dependent (paired) samples.

For two independent samples from populations with unequal standard deviations, the Welch test must be used instead.

Basic idea

The two-sample t-test uses the means $\bar x_1$ and $\bar x_2$ of two samples to check (in the simplest case) whether the means $\mu_1$ and $\mu_2$ of the associated populations differ.

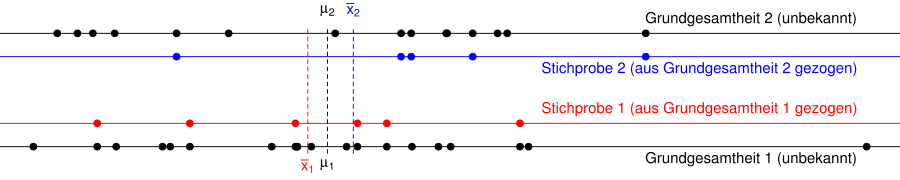

The figure above shows two populations (black dots) and two samples (blue and red dots) that were randomly drawn from the populations. The sample means $\bar x_1$ and $\bar x_2$ can be calculated from the samples, but the population means $\mu_1$ and $\mu_2$ are unknown. In the figure, the populations are constructed so that the two means are equal, so $\mu_1 = \mu_2$.

Suppose we now suspect, for example on the basis of historical results or theoretical considerations, that the population means $\mu_1$ and $\mu_2$ differ, and would like to check this.

In the simplest case, the two-sample t-test checks

- the null hypothesis that the population means are equal ($H_0\colon \mu_1 = \mu_2$)

- against the alternative hypothesis that the population means are unequal ($H_1\colon \mu_1 \neq \mu_2$).

If the samples are drawn appropriately, for example as simple random samples, the mean $\bar x_1$ of sample 1 is very likely to be close to the mean $\mu_1$ of population 1, and the mean $\bar x_2$ of sample 2 is very likely to be close to the mean $\mu_2$ of population 2. That is, the distance between the dashed red and black lines, or between the dashed blue and black lines, will most likely be small.

- If the distance between $\bar x_1$ and $\bar x_2$ (dashed blue and red lines) is small, then the population means $\mu_1$ and $\mu_2$ are probably close together as well. We cannot reject the null hypothesis.

- If the distance between $\bar x_1$ and $\bar x_2$ (dashed blue and red lines) is large, then the population means $\mu_1$ and $\mu_2$ are probably far apart as well. We can reject the null hypothesis.

The exact mathematical calculations can be found in the following sections.

Two-sample t-test for independent samples

The two-sample t-test is used to examine differences between the means of two populations that share the same unknown standard deviation $\sigma$. For this, each population must be normally distributed, or the sample sizes must be large enough for the central limit theorem to apply. For the test, a sample $X_1, \dots, X_n$ of size $n$ is drawn from the first population and, independently of it, a sample $Y_1, \dots, Y_m$ of size $m$ from the second population. For the associated independent sample variables, $\operatorname{E}(X_i) = \mu_X$ and $\operatorname{E}(Y_j) = \mu_Y$, where $\mu_X$ and $\mu_Y$ are the means of the two populations. If a number $\omega_0$ is specified for the difference between the means, then the null hypothesis is

$$H_0\colon \mu_X - \mu_Y = \omega_0$$

and the alternative hypothesis is

$$H_1\colon \mu_X - \mu_Y \neq \omega_0.$$

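The test statistic is

$$T = \sqrt{\frac{nm}{n+m}}\,\frac{\bar X - \bar Y - \omega_0}{S}.$$

Here $\omega_0$ is the hypothesized difference between the population means, $\bar X$ and $\bar Y$ are the means of the two samples of sizes $n$ and $m$, and

$$S^2 = \frac{(n-1)\,S_X^2 + (m-1)\,S_Y^2}{n+m-2}$$

is the weighted variance, calculated as the weighted mean of the respective sample variances $S_X^2$ and $S_Y^2$.

Under the null hypothesis, the test statistic $T$ is t-distributed with $n+m-2$ degrees of freedom. The test value, i.e. the realization of the test statistic based on the sample, is then calculated as

$$t = \sqrt{\frac{nm}{n+m}}\,\frac{\bar x - \bar y - \omega_0}{s},$$

where $\bar x$ and $\bar y$ are the means calculated from the samples and

$$s^2 = \frac{(n-1)\,s_x^2 + (m-1)\,s_y^2}{n+m-2}$$

is the realization of the weighted variance, calculated from the sample variances $s_x^2$ and $s_y^2$. It is also known as the pooled sample variance.

At significance level $\alpha$, the null hypothesis is rejected in favor of the alternative if

$$|t| \ge t_{1-\frac{\alpha}{2};\,n+m-2}.$$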

Alternatively, the following one-sided hypotheses can be tested with the same test statistic:

- $H_0\colon \mu_X - \mu_Y \le \omega_0$ vs. $H_1\colon \mu_X - \mu_Y > \omega_0$; the null hypothesis is rejected if $t \ge t_{1-\alpha;\,n+m-2}$.

- $H_0\colon \mu_X - \mu_Y \ge \omega_0$ vs. $H_1\colon \mu_X - \mu_Y < \omega_0$; the null hypothesis is rejected if $t \le -t_{1-\alpha;\,n+m-2}$.
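The calculation of the test value for independent samples can be sketched in Python using only the standard library. The function name `pooled_t_statistic`, its parameter `omega0` (the hypothesized difference of the population means) and the sample data are assumptions made for illustration; the critical value must still be looked up in a t-table for the returned degrees of freedom.

```python
import math
from statistics import mean, variance


def pooled_t_statistic(x, y, omega0=0.0):
    """Return (t, degrees_of_freedom) for the equal-variance two-sample t-test.

    omega0 is the hypothesized difference between the population means.
    """
    n, m = len(x), len(y)
    if n < 2 or m < 2:
        raise ValueError("each sample needs at least two observations")
    # Pooled (weighted) variance: sample variances weighted by n-1 and m-1.
    s2 = ((n - 1) * variance(x) + (m - 1) * variance(y)) / (n + m - 2)
    t = math.sqrt(n * m / (n + m)) * (mean(x) - mean(y) - omega0) / math.sqrt(s2)
    return t, n + m - 2


# Made-up measurements, for illustration only.
sample_a = [5.1, 4.9, 5.6, 5.8, 6.0]
sample_b = [4.2, 4.8, 4.4, 5.0, 4.6]

t_value, df = pooled_t_statistic(sample_a, sample_b)
print(f"t = {t_value:.3f} with {df} degrees of freedom")
```

Swapping the two samples only flips the sign of the test value, which is why the two-sided test compares its absolute value with the quantile.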

Comment

If the variances in the populations are not equal, then the Welch test must be carried out.

Example 1

Two types of fertilizer are to be compared. For this purpose, 25 plots of the same size are fertilized, namely $n$ plots with variety A and $m$ plots with variety B, so that $n + m = 25$. It is assumed that the harvest yields are normally distributed with equal variances. The plots with variety A give a mean crop yield $\bar x$ with sample variance $s_x^2$, and the plots with variety B give the mean $\bar y$ with sample variance $s_y^2$. These are used to calculate the weighted variance

$$s^2 = \frac{(n-1)\,s_x^2 + (m-1)\,s_y^2}{n+m-2}.$$

The test value is obtained from this as

$$t = \sqrt{\frac{nm}{n+m}}\,\frac{\bar x - \bar y}{s}.$$

This value is greater than the 0.975 quantile of the t-distribution with $n + m - 2 = 23$ degrees of freedom. At the 5% significance level, the null hypothesis of equal mean yields is therefore rejected: the data indicate a difference in the effect of the two fertilizers.
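A minimal Python sketch of such a calculation from summary statistics follows. The split of the 25 plots, the means and the variances below are assumed values chosen purely for illustration, and `pooled_t_from_summary` is a hypothetical helper name. The critical value 2.069 is the tabulated 0.975 quantile of the t-distribution with 23 degrees of freedom.

```python
import math


def pooled_t_from_summary(n, mean_x, var_x, m, mean_y, var_y, omega0=0.0):
    """Return (pooled variance, test value, degrees of freedom)."""
    s2 = ((n - 1) * var_x + (m - 1) * var_y) / (n + m - 2)
    t = math.sqrt(n * m / (n + m)) * (mean_x - mean_y - omega0) / math.sqrt(s2)
    return s2, t, n + m - 2


# ASSUMED inputs (illustration only): 10 plots with variety A, 15 with variety B.
s2, t_value, df = pooled_t_from_summary(
    n=10, mean_x=23.6, var_x=9.5,
    m=15, mean_y=20.1, var_y=8.9,
)

# Tabulated 0.975 quantile of the t-distribution with 23 degrees of freedom.
T_CRIT_0975_DF23 = 2.069
reject = abs(t_value) >= T_CRIT_0975_DF23

print(f"s^2 = {s2:.3f}, t = {t_value:.3f}, df = {df}, reject H0: {reject}")
```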

Compact display

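Two-sample t-test for two independent samples

Requirements:

- $X_1, \dots, X_n$ and $Y_1, \dots, Y_m$ are independent of each other

- $X_i \sim N(\mu_X, \sigma_X^2)$, or $X_i$ arbitrarily distributed with mean $\mu_X$ and standard deviation $\sigma_X$ and with $n$ large enough for the central limit theorem to apply

- $Y_j \sim N(\mu_Y, \sigma_Y^2)$, or $Y_j$ arbitrarily distributed with mean $\mu_Y$ and standard deviation $\sigma_Y$ and with $m$ large enough for the central limit theorem to apply

- $\sigma_X = \sigma_Y$ unknown

Test statistic:

$$T = \sqrt{\frac{nm}{n+m}}\,\frac{\bar X - \bar Y - \omega_0}{S} \sim t_{n+m-2}$$

Test value:

$$t = \sqrt{\frac{nm}{n+m}}\,\frac{\bar x - \bar y - \omega_0}{s}$$

with $\bar x = \frac{1}{n}\sum_{i=1}^{n} x_i$, $\bar y = \frac{1}{m}\sum_{j=1}^{m} y_j$, $s_x^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar x)^2$, $s_y^2 = \frac{1}{m-1}\sum_{j=1}^{m} (y_j - \bar y)^2$ and $s^2 = \frac{(n-1)\,s_x^2 + (m-1)\,s_y^2}{n+m-2}$

Hypotheses and rejection regions:

| | Right-sided | Two-sided | Left-sided |
| --- | --- | --- | --- |
| $H_0$ | $\mu_X - \mu_Y \le \omega_0$ | $\mu_X - \mu_Y = \omega_0$ | $\mu_X - \mu_Y \ge \omega_0$ |
| $H_1$ | $\mu_X - \mu_Y > \omega_0$ | $\mu_X - \mu_Y \neq \omega_0$ | $\mu_X - \mu_Y < \omega_0$ |
| Rejection region | $[t_{1-\alpha;\,n+m-2}, \infty)$ | $(-\infty, -t_{1-\frac{\alpha}{2};\,n+m-2}] \cup [t_{1-\frac{\alpha}{2};\,n+m-2}, \infty)$ | $(-\infty, -t_{1-\alpha;\,n+m-2}]$ |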

Two-sample t-test for dependent samples

Figure: Power of the paired and unpaired t-test as a function of the correlation. The simulated random numbers come from a bivariate normal distribution with a variance of 1 and a difference of 0.4 between the expected values. The significance level is 5% and the sample size is 60.

Here $x_1, \dots, x_n$ and $y_1, \dots, y_n$ are two paired samples, obtained for example from two measurements on the same units (repeated measurements). Samples can also be paired for other reasons, for example if the $x$ and $y$ values are measurements from the women and the men of couples and differences between the sexes are of interest.

If the null hypothesis to be tested is that the two expected values of the underlying normally distributed populations are equal, the differences $d_i = x_i - y_i$ can be tested against zero with the one-sample t-test. In practice, with smaller sample sizes, the differences in the population must be normally distributed. With sufficiently large samples, the mean of the pairwise differences is approximately normally distributed around the mean difference in the population. Overall, the t-test is fairly robust to violations of this assumption.

Example 2

In order to test a new therapy for lowering the cholesterol level, the cholesterol levels are determined in ten test subjects before and after the treatment. The following measurement results are obtained:

| Before treatment | After treatment | Difference |
| --- | --- | --- |
| 223 | 220 | 3 |
| 259 | 244 | 15 |
| 248 | 243 | 5 |
| 220 | 211 | 9 |
| 287 | 299 | −12 |
| 191 | 170 | 21 |
| 229 | 210 | 19 |
| 270 | 276 | −6 |
| 245 | 252 | −7 |
| 201 | 189 | 12 |

The differences in the measured values have the arithmetic mean $\bar d = 5.9$ and the sample standard deviation $s_d = 11.3866$. This gives the test value

$$t = \sqrt{10}\cdot\frac{5.9}{11.3866} = 1.6385.$$

Since $t_{0.975;\,9} = 2.2622$, it follows that $|t| < t_{0.975;\,9}$. Thus, the null hypothesis that the expected cholesterol values before and after the treatment are equal, i.e. that the therapy has no effect, cannot be rejected at the significance level $\alpha = 5\%$. Because $t_{0.95;\,9} = 1.8331 > t$, the one-sided alternative that the therapy lowers the cholesterol level is not significant either. If the treatment has any effect at all, it is not large enough to be detected with such a small sample.
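The arithmetic of this example can be checked with a short Python sketch that uses only the standard library. The variable names are illustrative; the two critical values are the tabulated 0.975 and 0.95 quantiles of the t-distribution with 9 degrees of freedom, entered by hand rather than computed.

```python
import math
from statistics import mean, stdev

# Cholesterol levels of the ten test subjects, taken from the table above.
before = [223, 259, 248, 220, 287, 191, 229, 270, 245, 201]
after = [220, 244, 243, 211, 299, 170, 210, 276, 252, 189]

# The paired test is a one-sample t-test on the pairwise differences.
differences = [b - a for b, a in zip(before, after)]
n = len(differences)
d_bar = mean(differences)   # arithmetic mean of the differences
s_d = stdev(differences)    # sample standard deviation (divisor n - 1)
t_value = math.sqrt(n) * d_bar / s_d

# Tabulated t quantiles for n - 1 = 9 degrees of freedom.
T_CRIT_TWO_SIDED = 2.262    # 0.975 quantile
T_CRIT_ONE_SIDED = 1.833    # 0.95 quantile

reject_two_sided = abs(t_value) >= T_CRIT_TWO_SIDED
reject_one_sided = t_value >= T_CRIT_ONE_SIDED

print(f"mean = {d_bar:.1f}, sd = {s_d:.4f}, t = {t_value:.4f}")
print("reject (two-sided):", reject_two_sided)
print("reject (one-sided):", reject_one_sided)
```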

Compact display

Two-sample t-test for two paired samples

Requirements:

- the differences $D_i = X_i - Y_i$ are independent of each other

- $D_i \sim N(\mu_D, \sigma_D^2)$ (at least approximately)

Test statistic:

$$T = \sqrt{n}\,\frac{\bar D - \omega_0}{S_D} \sim t_{n-1}$$

Test value:

$$t = \sqrt{n}\,\frac{\bar d - \omega_0}{s_d}$$

with $d_i = x_i - y_i$, $\bar d = \frac{1}{n}\sum_{i=1}^{n} d_i$ and $s_d^2 = \frac{1}{n-1}\sum_{i=1}^{n} (d_i - \bar d)^2$

Hypotheses and rejection regions:

| | Right-sided | Two-sided | Left-sided |
| --- | --- | --- | --- |
| $H_0$ | $\mu_D \le \omega_0$ | $\mu_D = \omega_0$ | $\mu_D \ge \omega_0$ |
| $H_1$ | $\mu_D > \omega_0$ | $\mu_D \neq \omega_0$ | $\mu_D < \omega_0$ |
| Rejection region | $[t_{1-\alpha;\,n-1}, \infty)$ | $(-\infty, -t_{1-\frac{\alpha}{2};\,n-1}] \cup [t_{1-\frac{\alpha}{2};\,n-1}, \infty)$ | $(-\infty, -t_{1-\alpha;\,n-1}]$ |

Welch test

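The Welch test calculates the test statistic similarly to the two-sample t-test:

$$T = \frac{\bar X - \bar Y - \omega_0}{\sqrt{\frac{S_X^2}{n} + \frac{S_Y^2}{m}}}$$

However, under the null hypothesis this test statistic is not exactly t-distributed; its distribution is approximated by a t-distribution with a modified number of degrees of freedom (see also Behrens-Fisher problem):

$$\nu = \frac{\left(\frac{s_x^2}{n} + \frac{s_y^2}{m}\right)^2}{\frac{1}{n-1}\left(\frac{s_x^2}{n}\right)^2 + \frac{1}{m-1}\left(\frac{s_y^2}{m}\right)^2}$$

Here $s_x$ and $s_y$ are the standard deviations of the populations estimated from the samples, and $n$ and $m$ are the sample sizes.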

Although the Welch test was developed specifically for the case $\sigma_X \neq \sigma_Y$, it does not work well if at least one of the distributions is non-normal and the sample sizes are small and very different ($n \neq m$).
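The Welch test value and the modified degrees of freedom can be sketched in Python as follows. The function name `welch_t_statistic`, the parameter `omega0` and the sample data are assumptions made for illustration. The degrees of freedom are generally not an integer, so a table lookup requires rounding or interpolation.

```python
import math
from statistics import mean, variance


def welch_t_statistic(x, y, omega0=0.0):
    """Return (t, nu): Welch test value and approximate degrees of freedom."""
    n, m = len(x), len(y)
    if n < 2 or m < 2:
        raise ValueError("each sample needs at least two observations")
    a = variance(x) / n   # estimated variance of the mean of x
    b = variance(y) / m   # estimated variance of the mean of y
    t = (mean(x) - mean(y) - omega0) / math.sqrt(a + b)
    # Modified degrees of freedom of the approximating t-distribution.
    nu = (a + b) ** 2 / (a ** 2 / (n - 1) + b ** 2 / (m - 1))
    return t, nu


# Made-up samples with clearly different spread, for illustration only.
x = [12.0, 15.0, 9.0, 14.0]
y = [10.0, 10.5, 9.5, 10.2, 9.8, 10.0]

t_value, nu = welch_t_statistic(x, y)
print(f"t = {t_value:.3f}, approximate degrees of freedom = {nu:.2f}")
```

The approximate degrees of freedom always lie between the smaller of $n-1$ and $m-1$ and the pooled value $n+m-2$; here they are close to the lower bound because the sample with the larger variance is also the smaller one.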

Compact display

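Welch test

Requirements:

- $X_1, \dots, X_n$ and $Y_1, \dots, Y_m$ are independent of each other

- $X_i \sim N(\mu_X, \sigma_X^2)$, or $X_i$ arbitrarily distributed with mean $\mu_X$ and standard deviation $\sigma_X$ and with $n$ large enough for the central limit theorem to apply

- $Y_j \sim N(\mu_Y, \sigma_Y^2)$, or $Y_j$ arbitrarily distributed with mean $\mu_Y$ and standard deviation $\sigma_Y$ and with $m$ large enough for the central limit theorem to apply

- $\sigma_X \neq \sigma_Y$ unknown

Test statistic:

$$T = \frac{\bar X - \bar Y - \omega_0}{\sqrt{\frac{S_X^2}{n} + \frac{S_Y^2}{m}}} \approx t_{\nu}$$

Test value:

$$t = \frac{\bar x - \bar y - \omega_0}{\sqrt{\frac{s_x^2}{n} + \frac{s_y^2}{m}}}$$

with $\bar x = \frac{1}{n}\sum_{i=1}^{n} x_i$, $\bar y = \frac{1}{m}\sum_{j=1}^{m} y_j$, $s_x^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar x)^2$, $s_y^2 = \frac{1}{m-1}\sum_{j=1}^{m} (y_j - \bar y)^2$, and $\nu = \frac{\left(\frac{s_x^2}{n} + \frac{s_y^2}{m}\right)^2}{\frac{1}{n-1}\left(\frac{s_x^2}{n}\right)^2 + \frac{1}{m-1}\left(\frac{s_y^2}{m}\right)^2}$.

Hypotheses and rejection regions:

| | Right-sided | Two-sided | Left-sided |
| --- | --- | --- | --- |
| $H_0$ | $\mu_X - \mu_Y \le \omega_0$ | $\mu_X - \mu_Y = \omega_0$ | $\mu_X - \mu_Y \ge \omega_0$ |
| $H_1$ | $\mu_X - \mu_Y > \omega_0$ | $\mu_X - \mu_Y \neq \omega_0$ | $\mu_X - \mu_Y < \omega_0$ |
| Rejection region | $[t_{1-\alpha;\,\nu}, \infty)$ | $(-\infty, -t_{1-\frac{\alpha}{2};\,\nu}] \cup [t_{1-\frac{\alpha}{2};\,\nu}, \infty)$ | $(-\infty, -t_{1-\alpha;\,\nu}]$ |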

Alternative tests

As stated above, the t-test is used to test hypotheses about expected values of one or two samples from normally distributed populations with an unknown standard deviation.
