Eigenvalues and eigenvectors

- This article gives a general overview of eigenvalues, eigenvectors, and eigenspaces.

- For more specific information regarding the eigenvalues and eigenvectors of matrices, see Eigendecomposition (matrix).

In mathematics, a vector may be thought of as an arrow. It has a length, called its magnitude, and it points in some particular direction. A linear transformation may be considered to operate on a vector to change it, usually changing both its magnitude and its direction. An eigenvector of a given linear transformation is a vector which is multiplied by a constant called the eigenvalue during that transformation. The direction of the eigenvector is either unchanged by that transformation (for positive eigenvalues) or reversed (for negative eigenvalues).

For example, an eigenvalue of +2 means that the eigenvector is doubled in length and points in the same direction. An eigenvalue of +1 means that the eigenvector stays the same, while an eigenvalue of −1 means that the eigenvector is reversed in direction. An eigenspace of a given transformation is the set of all eigenvectors of that transformation that have the same eigenvalue, together with the zero vector (which has no direction). An eigenspace is an example of a subspace of a vector space.

In linear algebra, every linear transformation between finite-dimensional vector spaces can be given by a matrix, which is a rectangular array of numbers arranged in rows and columns. Standard methods for finding eigenvalues, eigenvectors, and eigenspaces of a given matrix are discussed below.

These concepts play a major role in several branches of both pure and applied mathematics — appearing prominently in linear algebra, functional analysis, and to a lesser extent in nonlinear mathematics.

Many kinds of mathematical objects can be treated as vectors: functions, harmonic modes, quantum states, and frequencies, for example. In these cases, the concept of direction loses its ordinary meaning, and is given an abstract definition. Even so, if this abstract direction is unchanged by a given linear transformation, the prefix "eigen" is used, as in eigenfunction, eigenmode, eigenstate, and eigenfrequency.

History

Eigenvalues are often introduced in the context of matrix theory. Historically, however, they arose in the study of quadratic forms and differential equations.

In the first half of the 18th century, Johann and Daniel Bernoulli, d'Alembert and Euler encountered eigenvalue problems when studying the motion of a rope, which they considered to be a weightless string loaded with a number of masses. Laplace and Lagrange continued their work in the second half of the century. They realized that the eigenvalues are related to the stability of the motion. They also used eigenvalue methods in their study of the solar system.[1]

Euler had also studied the rotational motion of a rigid body and discovered the importance of the principal axes. As Lagrange realized, the principal axes are the eigenvectors of the inertia matrix.[2] In the early 19th century, Cauchy saw how their work could be used to classify the quadric surfaces, and generalized it to arbitrary dimensions.[3] Cauchy also coined the term racine caractéristique (characteristic root) for what is now called eigenvalue; his term survives in characteristic equation.[4]

Fourier used the work of Laplace and Lagrange to solve the heat equation by separation of variables in his famous 1822 book Théorie analytique de la chaleur.[5] Sturm developed Fourier's ideas further and he brought them to the attention of Cauchy, who combined them with his own ideas and arrived at the fact that symmetric matrices have real eigenvalues.[3] This was extended by Hermite in 1855 to what are now called Hermitian matrices.[4] Around the same time, Brioschi proved that the eigenvalues of orthogonal matrices lie on the unit circle,[3] and Clebsch found the corresponding result for skew-symmetric matrices.[4] Finally, Weierstrass clarified an important aspect in the stability theory started by Laplace by realizing that defective matrices can cause instability.[3]

In the meantime, Liouville had studied eigenvalue problems similar to those of Sturm; the discipline that grew out of their work is now called Sturm–Liouville theory.[6] Schwarz studied the first eigenvalue of Laplace's equation on general domains towards the end of the 19th century, while Poincaré studied Poisson's equation a few years later.[7]

At the start of the 20th century, Hilbert studied the eigenvalues of integral operators by considering them to be infinite matrices.[8] He was the first to use the German word eigen to denote eigenvalues and eigenvectors in 1904, though he may have been following a related usage by Helmholtz. "Eigen" can be translated as "own", "peculiar to", "characteristic" or "individual"—emphasizing how important eigenvalues are to defining the unique nature of a specific transformation. For some time, the standard term in English was "proper value", but the more distinctive term "eigenvalue" is standard today.[9]

The first numerical algorithm for computing eigenvalues and eigenvectors appeared in 1929, when Von Mises published the power method. One of the most popular methods today, the QR algorithm, was proposed independently by Francis and Kublanovskaya in 1961.[10]

Definitions

Linear transformations of space—such as rotation, reflection, stretching, compression, shear or any combination of these—may be visualized by the effect they produce on vectors. Vectors can be visualized as arrows pointing from one point to another.

- An eigenvector of a linear transformation is a non-zero vector that is either left unaffected or simply multiplied by a scale factor after the transformation (the former corresponds to a scale factor of 1).

- The eigenvalue of a non-zero eigenvector is the scale factor by which it has been multiplied.

- A number λ is an eigenvalue of a linear transformation T : V → V if there is a non-zero vector x such that T(x) = λx.

- The eigenspace corresponding to a given eigenvalue of a linear transformation is the vector space consisting of all eigenvectors with that eigenvalue, together with the zero vector.

- The geometric multiplicity of an eigenvalue is the dimension of the associated eigenspace.

- The spectrum of a transformation on a finite dimensional vector space is the set of all its eigenvalues. (In the infinite-dimensional case, the concept of spectrum is more subtle and depends on the topology of the vector space).

For instance, an eigenvector of a rotation in three dimensions is a vector lying along the axis about which the rotation is performed. The corresponding eigenvalue is 1 and the corresponding eigenspace contains all the vectors along the axis. As this is a one-dimensional space, its geometric multiplicity is one. Unless the rotation angle is a multiple of 180°, this is the only eigenvalue of the spectrum (of this rotation) that is a real number.
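The rotation example can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the helper `rotation_about_z` and the 60° angle are illustrative choices, not part of the definitions above.

```python
import numpy as np

def rotation_about_z(angle):
    """Return the 3x3 matrix of a rotation by `angle` radians about the z-axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0],
                     [s,  c, 0.0],
                     [0.0, 0.0, 1.0]])

R = rotation_about_z(np.pi / 3)          # rotation by 60 degrees (arbitrary choice)
eigenvalues, eigenvectors = np.linalg.eig(R)

# Pick out the eigenvalues that are real up to rounding error.
real_mask = np.isclose(eigenvalues.imag, 0.0)
real_eigenvalues = eigenvalues[real_mask].real

# The matching eigenvector lies along the rotation axis (here the z-axis).
axis = eigenvectors[:, real_mask].real[:, 0]

print(real_eigenvalues)   # a single real eigenvalue, equal to 1 up to rounding
print(axis)               # parallel to (0, 0, 1), possibly with opposite sign
```

The other two eigenvalues returned by `np.linalg.eig` form a complex-conjugate pair, which is why only one of them passes the real-value filter.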

Examples

Mona Lisa

For the example shown on the right, a matrix that would produce a shear transformation similar to this is

$$A = \begin{bmatrix} 1 & 0 \\ k & 1 \end{bmatrix},$$

where $k \neq 0$ is the shear factor.

The set of eigenvectors $\mathbf{x}$ for $A$ is defined as those vectors which, when multiplied by $A$, result in a simple scaling $\lambda$ of $\mathbf{x}$. Thus,

$$A\mathbf{x} = \lambda\mathbf{x}.$$

If we restrict ourselves to real eigenvalues, the only effect of the matrix on the eigenvectors will be to change their length, and possibly reverse their direction. So multiplying the right-hand side by the identity matrix $I$, we have

$$A\mathbf{x} = (\lambda I)\mathbf{x},$$

and therefore

$$(A - \lambda I)\mathbf{x} = \mathbf{0}.$$

In order for this equation to have non-trivial solutions, we require the determinant $\det(A - \lambda I)$, which is called the characteristic polynomial of the matrix $A$, to be zero. In our example we can calculate the determinant as

$$\det\begin{bmatrix} 1-\lambda & 0 \\ k & 1-\lambda \end{bmatrix} = (1-\lambda)^2,$$

and now we have obtained the characteristic polynomial $(1-\lambda)^2$ of the matrix $A$. In this case there is only one distinct solution of the equation $(1-\lambda)^2 = 0$, namely $\lambda = 1$. This is the eigenvalue of the matrix $A$. As in the study of roots of polynomials, it is convenient to say that this eigenvalue has multiplicity 2.

Having found an eigenvalue $\lambda = 1$, we can solve for the space of eigenvectors by finding the nullspace of $A - \lambda I$. In other words, by solving for vectors $\mathbf{x}$ which are solutions of

$$(A - \lambda I)\mathbf{x} = \mathbf{0}.$$

Substituting our obtained eigenvalue $\lambda = 1$,

$$\begin{bmatrix} 0 & 0 \\ k & 0 \end{bmatrix}\mathbf{x} = \mathbf{0}.$$

Solving this new matrix equation, we find that vectors in the nullspace have the form

$$\mathbf{x} = \begin{bmatrix} 0 \\ c \end{bmatrix},$$

where c is an arbitrary constant. All vectors of this form, i.e. pointing straight up or down, are eigenvectors of the matrix A. The effect of applying the matrix A to these vectors is equivalent to multiplying them by their corresponding eigenvalue, in this case 1.

In general, a 2-by-2 matrix will have two distinct eigenvalues, and thus two linearly independent eigenvectors. Whereas most vectors will have both their lengths and directions changed by the matrix, eigenvectors will only have their lengths changed, and will not change their direction, except perhaps to flip through the origin when the eigenvalue is a negative number. Also, it is usually the case that the eigenvalue will be something other than 1, and so eigenvectors will be stretched, squashed and/or flipped through the origin by the matrix.
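The shear calculation can be reproduced with a few lines of plain Python. This is only a sketch: it assumes a shear matrix with ones on the diagonal and a hypothetical shear factor `k = -0.5` below the diagonal, and the helper `apply` is introduced here purely for illustration.

```python
import math

# Hypothetical shear factor; any non-zero value gives the same eigen-structure.
k = -0.5
A = [[1.0, 0.0],
     [k,   1.0]]

def apply(matrix, vector):
    """Multiply a 2x2 matrix (list of rows) by a 2-vector."""
    return [matrix[0][0] * vector[0] + matrix[0][1] * vector[1],
            matrix[1][0] * vector[0] + matrix[1][1] * vector[1]]

# For a 2x2 matrix, det(A - lambda*I) = lambda^2 - trace*lambda + det.
trace = A[0][0] + A[1][1]
det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
discriminant = trace ** 2 - 4.0 * det

# A zero discriminant means a single repeated root of the characteristic polynomial.
roots = sorted({(trace - math.sqrt(discriminant)) / 2.0,
                (trace + math.sqrt(discriminant)) / 2.0})
print(roots)                     # [1.0]

# Vertical vectors (0, c) are mapped to themselves: eigenvalue 1.
print(apply(A, [0.0, 3.0]))      # [0.0, 3.0]

# A horizontal vector changes direction, so it is not an eigenvector.
print(apply(A, [1.0, 0.0]))      # [1.0, -0.5]
```

The quadratic-formula shortcut works only for 2-by-2 matrices with real eigenvalues; larger matrices are normally handed to a numerical eigenvalue routine instead.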

Other examples

As the Earth rotates, every arrow pointing outward from the center of the Earth also rotates, except those arrows which are parallel to the axis of rotation. Consider the transformation of the Earth after one hour of rotation: An arrow from the center of the Earth to the Geographic South Pole would be an eigenvector of this transformation, but an arrow from the center of the Earth to anywhere on the equator would not be an eigenvector. Since the arrow pointing at the pole is not stretched by the rotation of the Earth, its eigenvalue is 1.

Another example is provided by a rubber sheet expanding omnidirectionally about a fixed point in such a way that the distances from any point of the sheet to the fixed point are doubled. This expansion is a transformation with eigenvalue 2. Every vector from the fixed point to a point on the sheet is an eigenvector, and the eigenspace is the set of all these vectors.

However, three-dimensional geometric space is not the only vector space. For example, consider a stressed rope fixed at both ends, like the vibrating strings of a string instrument (Fig. 2). The distances of atoms of the vibrating rope from their positions when the rope is at rest can be seen as the components of a vector in a space with as many dimensions as there are atoms in the rope.

Assume the rope is a continuous medium. If one considers the equation for the acceleration at every point of the rope, its eigenvectors, or eigenfunctions, are the standing waves. The standing waves correspond to particular oscillations of the rope such that the acceleration of the rope is simply its shape scaled by a factor. This factor, the eigenvalue, turns out to be $-\omega^2$, where $\omega$ is the angular frequency of the oscillation. Each component of the vector associated with the rope is multiplied by a time-dependent factor $\sin(\omega t)$. If damping is considered, the amplitude of this oscillation decreases until the rope stops oscillating, corresponding to a complex ω. One can then associate a lifetime with the imaginary part of ω, and relate the concept of an eigenvector to the concept of resonance. Without damping, the fact that the acceleration operator (assuming a uniform density) is Hermitian leads to several important properties, such as that the standing wave patterns are orthogonal functions.
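A discretized version of the rope makes the standing-wave eigenproblem concrete. The sketch below assumes NumPy, a rope of unit length with unit wave speed, and a simple three-point finite-difference stand-in for the second spatial derivative; none of these choices come from the text above. Under those assumptions the eigenvalues closest to zero should approximate $-\omega^2 = -(m\pi)^2$ for m = 1, 2, 3.

```python
import numpy as np

n = 200                      # number of interior grid points (arbitrary)
length = 1.0                 # rope length, in arbitrary units
h = length / (n + 1)         # grid spacing

# Second-difference matrix: a discrete stand-in for d^2/dx^2 with both ends fixed.
D2 = (np.diag(-2.0 * np.ones(n))
      + np.diag(np.ones(n - 1), k=1)
      + np.diag(np.ones(n - 1), k=-1)) / h**2

# D2 is real symmetric, so eigh returns real eigenvalues in ascending order
# and orthonormal eigenvectors as columns.
eigenvalues, modes = np.linalg.eigh(D2)

# The eigenvalues closest to zero correspond to the lowest standing waves.
lowest = eigenvalues[::-1][:3]
expected = -np.array([(m * np.pi / length) ** 2 for m in (1, 2, 3)])
print(lowest)
print(expected)

# Orthogonality of the discrete standing-wave patterns.
gram = modes.T @ modes
```

The matrix `gram` comes out as the identity up to rounding, which is the discrete counterpart of the orthogonality of the standing-wave patterns.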

Eigenvalue equation

Suppose T is a linear transformation of a finite-dimensional space, that is, $T(a\mathbf{v} + b\mathbf{w}) = aT(\mathbf{v}) + bT(\mathbf{w})$ for all scalars a, b, and vectors v, w. Then a non-zero vector $\mathbf{v}_\lambda$ is an eigenvector and λ the corresponding eigenvalue of T if the equation:

$$T(\mathbf{v}_\lambda) = \lambda\,\mathbf{v}_\lambda$$

is true, where $T(\mathbf{v}_\lambda)$ is the vector obtained when applying the transformation T to $\mathbf{v}_\lambda$.

Consider a basis of the vector space that T acts on. Then T and $\mathbf{v}_\lambda$ can be represented relative to that basis by a matrix $A_T$ (a two-dimensional array) and a column vector $\mathbf{v}_\lambda$ (a one-dimensional vertical array), respectively. The eigenvalue equation in its matrix representation is written

$$A_T\,\mathbf{v}_\lambda = \lambda\,\mathbf{v}_\lambda$$

where the juxtaposition is matrix multiplication. Since, once a basis is fixed, T and its matrix representation $A_T$ are equivalent, we can often use the same symbol T for both the matrix representation and the transformation. The matrix equation is equivalent to a set of n linear equations, where n is the number of basis vectors in the basis set. In this equation both the eigenvalue λ and the n components of $\mathbf{v}_\lambda$ are unknowns.

However, it is sometimes unnatural or even impossible to write down the eigenvalue equation in a matrix form. This occurs for instance when the vector space is infinite dimensional, for example, in the case of the rope above. Depending on the nature of the transformation T and the space to which it applies, it can be advantageous to represent the eigenvalue equation as a set of differential equations. If T is a differential operator, the eigenvectors are commonly called eigenfunctions of the differential operator representing T. For example, differentiation itself is a linear transformation since

$$\frac{d}{dt}\bigl(a f + b g\bigr) = a\,\frac{df}{dt} + b\,\frac{dg}{dt}$$

(f(t) and g(t) are differentiable functions, and a and b are constants).

Consider differentiation with respect to $t$. Its eigenfunctions h(t) obey the eigenvalue equation:

- $\dfrac{d}{dt}h(t) = \lambda\,h(t)$,

where λ is the eigenvalue associated with the function. Such a function of time is constant if $\lambda = 0$, grows proportionally to itself if $\lambda$ is positive, and decays proportionally to itself if $\lambda$ is negative. For example, an idealized population of rabbits breeds faster the more rabbits there are, and thus satisfies the equation with a positive λ.

The solution to the eigenvalue equation is $h(t) = \exp(\lambda t)$, the exponential function; thus that function is an eigenfunction of the differential operator d/dt with the eigenvalue λ. If λ is negative, we call the evolution of h an exponential decay; if it is positive, an exponential growth. The value of λ can be any complex number. The spectrum of d/dt is therefore the whole complex plane. In this example the vector space in which the operator d/dt acts is the space of the differentiable functions of one variable. This space has an infinite dimension (because it is not possible to express every differentiable function as a linear combination of a finite number of basis functions). However, the eigenspace associated with any given eigenvalue λ is one dimensional. It is the set of all functions $h(t) = A\exp(\lambda t)$, where A is an arbitrary constant, the initial population at t=0.
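The eigenfunction property of the exponential can be checked numerically. The sketch below uses only the Python standard library; the growth rate `lam = 0.3`, the amplitude 5, and the helper names `h` and `derivative` are illustrative assumptions. It compares a central-difference approximation of d/dt applied to A·exp(λt) with λ times the same function.

```python
import math

def h(t, lam, amplitude=1.0):
    """Candidate eigenfunction A * exp(lam * t) of d/dt."""
    return amplitude * math.exp(lam * t)

def derivative(f, t, step=1e-6):
    """Central-difference approximation to df/dt at time t."""
    return (f(t + step) - f(t - step)) / (2.0 * step)

lam = 0.3        # hypothetical growth rate; a negative value would model decay
t = 2.0
lhs = derivative(lambda s: h(s, lam, amplitude=5.0), t)
rhs = lam * h(t, lam, amplitude=5.0)
print(lhs, rhs)  # the two values agree up to discretization error
```

Changing the amplitude rescales both sides equally, which reflects the fact that the eigenspace for a given λ is one-dimensional.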

Spectral theorem

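In its simplest version, the spectral theorem states that, under certain conditions, a linear transformation $T$ of a vector $\mathbf{v}$ can be expressed as a linear combination of the eigenvectors, in which the coefficient of each eigenvector is equal to the corresponding eigenvalue times the scalar product (or dot product) of the eigenvector with the vector $\mathbf{v}$. Mathematically, it can be written as:

$$T(\mathbf{v}) = \lambda_1 (\mathbf{v}_1 \cdot \mathbf{v})\,\mathbf{v}_1 + \lambda_2 (\mathbf{v}_2 \cdot \mathbf{v})\,\mathbf{v}_2 + \cdots$$

where $\mathbf{v}_1, \mathbf{v}_2, \ldots$ and $\lambda_1, \lambda_2, \ldots$ stand for the eigenvectors (taken to be mutually orthogonal and of unit length) and eigenvalues of $T$. The simplest case in which the theorem is valid is the case where the linear transformation is given by a real symmetric matrix or complex Hermitian matrix; more generally the theorem holds for all normal matrices.

If one defines the nth power of a transformation as the result of applying it n times in succession, one can also define polynomials of transformations. A more general version of the theorem is that any polynomial P of $T$ is given by

$$P(T)(\mathbf{v}) = P(\lambda_1)(\mathbf{v}_1 \cdot \mathbf{v})\,\mathbf{v}_1 + P(\lambda_2)(\mathbf{v}_2 \cdot \mathbf{v})\,\mathbf{v}_2 + \cdots$$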

The theorem can be extended to other functions of transformations like analytic functions, the most general case being Borel functions.
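For a real symmetric matrix, both forms of the theorem can be verified directly. The following sketch assumes NumPy; the matrix, the vector, and the polynomial P(x) = x² − 3x + 1 are arbitrary illustrative choices. `np.linalg.eigh` returns orthonormal eigenvectors, which is what the expansion requires.

```python
import numpy as np

# A small real symmetric matrix (values chosen arbitrarily for illustration).
T = np.array([[2.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 4.0]])
v = np.array([1.0, -2.0, 0.5])

# eigh returns real eigenvalues and orthonormal eigenvectors (as columns).
eigenvalues, eigenvectors = np.linalg.eigh(T)

# T(v) = sum_i lambda_i (v_i . v) v_i
expansion = sum(lam * (vec @ v) * vec
                for lam, vec in zip(eigenvalues, eigenvectors.T))

# The same expansion for the polynomial P(x) = x^2 - 3x + 1.
def P(x):
    return x**2 - 3.0 * x + 1.0

P_of_T = T @ T - 3.0 * T + np.eye(3)
poly_expansion = sum(P(lam) * (vec @ v) * vec
                     for lam, vec in zip(eigenvalues, eigenvectors.T))

print(np.allclose(T @ v, expansion))            # True
print(np.allclose(P_of_T @ v, poly_expansion))  # True
```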

Eigenvalues and eigenvectors of matrices

Eigenvectors and eigenvalues

A vector $\mathbf{x}$ of dimension n is an eigenvector of a matrix $A$ if and only if it satisfies the linear equation

$$A\mathbf{x} = \lambda\mathbf{x}$$

where $A$ is a square ($n \times n$) matrix and λ is a scalar, termed the eigenvalue corresponding to $\mathbf{x}$. The above equation is called the eigenvalue equation.

Eigendecomposition

The spectral theorem for matrices can be stated as follows. Let $A$ be a square ($n \times n$) matrix. Let $\mathbf{q}_1, \ldots, \mathbf{q}_k$ be an eigenvector basis, i.e. an indexed set of k linearly independent eigenvectors, where k is the dimension of the space spanned by the eigenvectors of $A$. If k=n, then $A$ can be written

$$A = Q \Lambda Q^{-1}$$

where $Q$ is the square ($n \times n$) matrix whose ith column is the basis eigenvector $\mathbf{q}_i$ of $A$ and $\Lambda$ is the diagonal matrix whose diagonal elements are the corresponding eigenvalues, i.e. $\Lambda_{ii} = \lambda_i$.
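The factorization can be illustrated numerically. This is a minimal sketch assuming NumPy and an arbitrarily chosen 2-by-2 matrix with a full set of eigenvectors; `np.linalg.eig` returns the eigenvalues together with a matrix whose columns are eigenvectors.

```python
import numpy as np

A = np.array([[4.0, 1.0],
              [2.0, 3.0]])

eigenvalues, Q = np.linalg.eig(A)   # columns of Q are eigenvectors
Lambda = np.diag(eigenvalues)

# k = n exactly when the eigenvector matrix Q has full rank.
assert np.linalg.matrix_rank(Q) == A.shape[0]

reconstructed = Q @ Lambda @ np.linalg.inv(Q)
print(np.sort(eigenvalues))             # eigenvalues 2 and 5, up to rounding
print(np.allclose(A, reconstructed))    # True
```

For a defective matrix (k < n) the matrix of eigenvectors is singular, so the inverse in the last step does not exist and the factorization is unavailable.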

Infinite-dimensional spaces

If the vector space is an infinite dimensional Banach space, the notion of eigenvalues can be generalized to the concept of spectrum. The spectrum is the set of scalars λ for which $(T - \lambda)^{-1}$ is not defined; that is, such that $T - \lambda$ has no bounded inverse.

Clearly if λ is an eigenvalue of T, λ is in the spectrum of T. In general, the converse is not true. There are operators on Hilbert or Banach spaces which have no eigenvectors at all. This can be seen in the following example. The bilateral shift on the Hilbert space $\ell^2(\mathbb{Z})$ (the space of all sequences of scalars $\ldots, a_{-1}, a_0, a_1, a_2, \ldots$ such that $\cdots + |a_{-1}|^2 + |a_0|^2 + |a_1|^2 + |a_2|^2 + \cdots$ converges) has no eigenvalue but has spectral values.

In infinite-dimensional spaces, the spectrum of a bounded operator is always nonempty. This is also true for an unbounded self adjoint operator. Via its spectral measures, the spectrum of any self adjoint operator, bounded or otherwise, can be decomposed into absolutely continuous, pure point, and singular parts. (See Decomposition of spectrum.)

Applications

Schrödinger equation

An example of an eigenvalue equation where the transformation is represented in terms of a differential operator is the time-independent Schrödinger equation in quantum mechanics:

$$H\psi_E = E\psi_E$$

where H, the Hamiltonian, is a second-order differential operator and $\psi_E$, the wavefunction, is one of its eigenfunctions corresponding to the eigenvalue E, interpreted as its energy.

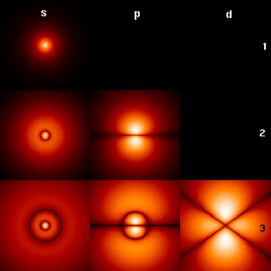

However, in the case where one is interested only in the bound state solutions of the Schrödinger equation, one looks for $\psi_E$ within the space of square integrable functions. Since this space is a Hilbert space with a well-defined scalar product, one can introduce a basis set in which $\psi_E$ and H can be represented as a one-dimensional array and a matrix respectively. This allows one to represent the Schrödinger equation in a matrix form. (Fig. 4 presents the lowest eigenfunctions of the Hydrogen atom Hamiltonian.)

The Dirac notation is often used in this context. A vector, which represents a state of the system, in the Hilbert space of square integrable functions is represented by $|\Psi_E\rangle$. In this notation, the Schrödinger equation is:

$$H|\Psi_E\rangle = E|\Psi_E\rangle$$

where $|\Psi_E\rangle$ is an eigenstate of H. Here H is a self-adjoint operator, the infinite-dimensional analog of Hermitian matrices (see Observable). As in the matrix case, in the equation above $H|\Psi_E\rangle$ is understood to be the vector obtained by application of the transformation H to $|\Psi_E\rangle$.
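The matrix form of the Schrödinger equation can be sketched for a simple model. The example below is an assumption-laden illustration rather than a general solver: it takes a one-dimensional harmonic oscillator with H = −½ d²/dx² + ½x² (units with ħ = m = ω = 1), represents it on a uniform grid using a three-point finite-difference stencil, and diagonalizes the resulting symmetric matrix with NumPy. For this model the exact bound-state energies are known to be n + ½.

```python
import numpy as np

# Assumed model: H = -(1/2) d^2/dx^2 + (1/2) x^2 in units with hbar = m = omega = 1.
n = 400
x = np.linspace(-8.0, 8.0, n)
h = x[1] - x[0]

# Kinetic term via the standard three-point second-difference stencil.
kinetic = -0.5 * (np.diag(-2.0 * np.ones(n))
                  + np.diag(np.ones(n - 1), k=1)
                  + np.diag(np.ones(n - 1), k=-1)) / h**2
potential = np.diag(0.5 * x**2)
H = kinetic + potential

# H is real symmetric (the matrix analog of self-adjoint), so eigh applies.
energies, states = np.linalg.eigh(H)
print(energies[:3])   # close to the exact values 0.5, 1.5, 2.5
```

Each column of `states` is a discretized eigenfunction; refining the grid brings the computed energies closer to the exact ones.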

Molecular orbitals

In quantum mechanics, and in particular in atomic and molecular physics, within the Hartree-Fock theory, the atomic and molecular orbitals can be defined by the eigenvectors of the Fock operator. The corresponding eigenvalues are interpreted as ionization potentials via Koopmans' theorem. In this case, the term eigenvector is used in a somewhat more general meaning, since the Fock operator is explicitly dependent on the orbitals and their eigenvalues. If one wants to underline this aspect one speaks of implicit eigenvalue equation. Such equations are usually solved by an iteration procedure, called in this case self-consistent field method. In quantum chemistry, one often represents the Hartree-Fock equation in a non-orthogonal basis set. This particular representation is a generalized eigenvalue problem called Roothaan equations.

Factor analysis

In factor analysis, the eigenvectors of a covariance matrix correspond to factors, and eigenvalues to factor loadings. Factor analysis is a statistical technique used in the social sciences and in marketing, product management, operations research, and other applied sciences that deal with large quantities of data. The objective is to explain most of the covariability among a number of observable random variables in terms of a smaller number of unobservable latent variables called factors. The observable random variables are modeled as linear combinations of the factors, plus unique variance terms.

Eigenfaces

In image processing, processed images of faces can be seen as vectors whose components are the brightnesses of each pixel. The dimension of this vector space is the number of pixels. The eigenvectors of the covariance matrix associated with a large set of normalized pictures of faces are called eigenfaces. They are very useful for expressing any face image as a linear combination of some of them. In the facial recognition branch of biometrics, eigenfaces provide a means of applying data compression to faces for identification purposes. Eigenvector-based vision systems for recognizing hand gestures have also been researched. More on determining sign language letters using such systems can be found here: http://www.geigel.com/signlanguage/index.php

Similarly, the concept of eigenvoices has been developed; these represent the general directions of variability in human pronunciations of a particular utterance, such as a word in a language. Based on a linear combination of such eigenvoices, a new voice pronunciation of the word can be constructed. These concepts have been found useful in automatic speech recognition systems, for speaker adaptation.
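The eigenface construction can be sketched with synthetic data. The example below assumes NumPy and uses random arrays as a stand-in for real, normalized face photographs, so the resulting "eigenfaces" have no visual meaning; it only shows the steps of forming the covariance matrix, taking its eigenvectors, and expressing an image as a linear combination of them.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for face images: 50 "images" of 8x8 = 64 pixels each.
# Real eigenface pipelines use aligned, normalized photographs instead.
num_images, num_pixels = 50, 64
images = rng.normal(size=(num_images, num_pixels))

mean_image = images.mean(axis=0)
centered = images - mean_image

# Covariance matrix of the pixel brightnesses (64 x 64, symmetric).
covariance = centered.T @ centered / (num_images - 1)

# Eigenvectors of the covariance matrix; eigh sorts eigenvalues ascending,
# so reverse to put the directions of largest variance first.
eigenvalues, eigenvectors = np.linalg.eigh(covariance)
order = np.argsort(eigenvalues)[::-1]
eigenfaces = eigenvectors[:, order]

# Compress one image to its 10 leading coefficients, then reconstruct it.
k = 10
coefficients = eigenfaces[:, :k].T @ centered[0]
approximation = mean_image + eigenfaces[:, :k] @ coefficients

# Using every eigenface reproduces the image exactly (up to rounding).
full = mean_image + eigenfaces @ (eigenfaces.T @ centered[0])
print(np.allclose(full, images[0]))   # True
```

Keeping only the leading `k` coefficients is the data-compression step: each image is stored as `k` numbers instead of one number per pixel.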

Tensor of inertia

In mechanics, the eigenvectors of the inertia tensor define the principal axes of a rigid body. The tensor of inertia is a key quantity required in order to determine the rotation of a rigid body around its center of mass.
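As an illustration, the principal axes of a small collection of point masses can be computed as follows. This is a sketch assuming NumPy; the masses and positions are hypothetical values chosen only so that the body is not degenerate.

```python
import numpy as np

# Hypothetical rigid body: four point masses (values are illustrative only).
masses = np.array([1.0, 2.0, 1.5, 0.5])
positions = np.array([[ 1.0,  0.0,  0.0],
                      [-0.5,  1.0,  0.0],
                      [ 0.0, -1.0,  0.5],
                      [ 0.0,  0.0, -2.0]])

# Shift the origin to the center of mass.
center = (masses[:, None] * positions).sum(axis=0) / masses.sum()
r = positions - center

# I = sum_k m_k (|r_k|^2 * Id - r_k r_k^T)
inertia = sum(m * ((p @ p) * np.eye(3) - np.outer(p, p))
              for m, p in zip(masses, r))

# Real symmetric tensor: eigenvalues are the principal moments,
# eigenvector columns are the principal axes.
principal_moments, principal_axes = np.linalg.eigh(inertia)

# In the principal-axis frame the tensor is diagonal.
diagonalized = principal_axes.T @ inertia @ principal_axes
print(np.allclose(diagonalized, np.diag(principal_moments)))   # True
```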

Stress tensor

In solid mechanics, the stress tensor is symmetric and so can be decomposed into a diagonal tensor with the eigenvalues on the diagonal and eigenvectors as a basis. Because it is diagonal, in this orientation, the stress tensor has no shear components; the components it does have are the principal components.

Eigenvalues of a graph

In spectral graph theory, an eigenvalue of a graph is defined as an eigenvalue of the graph's adjacency matrix A, or (increasingly) of the graph's Laplacian matrix, which is either $T - A$ or $I - T^{-1/2} A T^{-1/2}$, where T is a diagonal matrix holding the degree of each vertex, and in $T^{-1/2}$, 0 is substituted for $0^{-1/2}$. The kth principal eigenvector of a graph is defined as either the eigenvector corresponding to the kth largest eigenvalue of A, or the eigenvector corresponding to the kth smallest eigenvalue of the Laplacian. The first principal eigenvector of the graph is also referred to merely as the principal eigenvector.
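The matrices involved can be built explicitly for a small graph. The sketch below assumes NumPy and an illustrative four-vertex path graph; it forms the adjacency matrix, the degree matrix T, the Laplacian T − A, and the normalized Laplacian with the zero-degree convention described above.

```python
import numpy as np

# Adjacency matrix of a small undirected path graph 0 - 1 - 2 - 3 (illustrative).
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)

degrees = A.sum(axis=1)
T = np.diag(degrees)

# Combinatorial Laplacian T - A.
L = T - A

# Normalized Laplacian I - T^{-1/2} A T^{-1/2}, with 0 substituted for 0^{-1/2}.
inv_sqrt = np.zeros_like(degrees)
nonzero = degrees > 0
inv_sqrt[nonzero] = degrees[nonzero] ** -0.5
L_norm = np.eye(len(A)) - np.diag(inv_sqrt) @ A @ np.diag(inv_sqrt)

adjacency_eigenvalues = np.linalg.eigvalsh(A)[::-1]   # largest first
laplacian_eigenvalues = np.linalg.eigvalsh(L)         # smallest first
normalized_eigenvalues = np.linalg.eigvalsh(L_norm)   # smallest first

print(laplacian_eigenvalues)   # the smallest is 0 for any graph
```

The orderings mirror the definition of the kth principal eigenvector: largest first for the adjacency matrix, smallest first for the Laplacian.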

The principal eigenvector is used to measure the centrality of its vertices. An example is Google's PageRank algorithm. The principal eigenvector of a modified adjacency matrix of the World Wide Web graph gives the page ranks as its components. This vector corresponds to the stationary distribution of the Markov chain represented by the row-normalized adjacency matrix; however, the adjacency matrix must first be modified to ensure a stationary distribution exists. The second principal eigenvector can be used to partition the graph into clusters, via spectral clustering.
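The stationary distribution can be approximated by repeatedly applying the transition matrix, which is the power method. The following sketch assumes NumPy, a hypothetical four-page web, and one common way of modifying the matrix (mixing in uniform random jumps with a damping factor of 0.85); these specifics are assumptions for illustration and are not taken from the description above.

```python
import numpy as np

# Tiny hypothetical web: links[i][j] = 1 means page i links to page j.
links = np.array([[0, 1, 1, 0],
                  [0, 0, 1, 0],
                  [1, 0, 0, 1],
                  [0, 0, 1, 0]], dtype=float)

# Row-normalize to get a Markov transition matrix (every page here has a link).
P = links / links.sum(axis=1, keepdims=True)

# One common modification: mix in uniform random jumps. The damping value
# 0.85 is an assumption for this sketch, not something fixed by the text above.
damping = 0.85
n = len(P)
G = damping * P + (1.0 - damping) / n * np.ones((n, n))

# Power iteration: repeatedly apply G^T until the distribution stops changing.
rank = np.full(n, 1.0 / n)
for _ in range(200):
    new_rank = G.T @ rank
    if np.abs(new_rank - rank).sum() < 1e-12:
        rank = new_rank
        break
    rank = new_rank

print(rank)   # stationary distribution; entries sum to 1
```

The resulting vector satisfies `G.T @ rank ≈ rank`, i.e. it is an eigenvector of the transposed transition matrix with eigenvalue 1. Page 2, which receives links from all three other pages, ends up with the largest entry.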

Notes

- ^ See Hawkins (1975), §2; Kline (1972), pp. 807–808.

- ^ See Hawkins (1975), §2.

- ^ a b c d See Hawkins (1975), §3.

- ^ a b c See Kline (1972), pp. 807–808.

- ^ See Kline (1972), p. 673.

- ^ See Kline (1972), pp. 715–716.

- ^ See Kline (1972), pp. 706–707.

- ^ See Kline (1972), p. 1063.

- ^ See Aldrich (2006).

- ^ See Golub and Van Loan (1996), §7.3; Meyer (2000), §7.3.

References

- H. Abdi, Eigen-decomposition: eigenvalues and eigenvectors. In N.J. Salkind (Ed.), Encyclopedia of Measurement and Statistics, Sage, Thousand Oaks (CA), 2007.

- John Aldrich, Eigenvalue, eigenfunction, eigenvector, and related terms. In Jeff Miller (Editor), Earliest Known Uses of Some of the Words of Mathematics, last updated 7 August 2006, accessed 22 August 2006.

- Claude Cohen-Tannoudji, Quantum Mechanics, Wiley (1977). ISBN 0-471-16432-1. (Chapter II. The mathematical tools of quantum mechanics.)

- John B. Fraleigh and Raymond A. Beauregard, Linear Algebra (3rd edition), Addison-Wesley Publishing Company (1995). ISBN 0-201-83999-7 (international edition).

- Gene H. Golub and Charles F. van Loan, Matrix Computations (3rd edition), Johns Hopkins University Press, Baltimore, 1996. ISBN 978-0-8018-5414-9.

- T. Hawkins, Cauchy and the spectral theory of matrices, Historia Mathematica, vol. 2, pp. 1–29, 1975.

- Roger A. Horn and Charles R. Johnson, Matrix Analysis, Cambridge University Press, 1985. ISBN 0-521-30586-1 (hardback), ISBN 0-521-38632-2 (paperback).

- Morris Kline, Mathematical thought from ancient to modern times, Oxford University Press, 1972. ISBN 0-19-501496-0.

- Carl D. Meyer, Matrix Analysis and Applied Linear Algebra, Society for Industrial and Applied Mathematics (SIAM), Philadelphia, 2000. ISBN 978-0-89871-454-8.

- D. Valentin, H. Abdi, B. Edelman, and A. O'Toole, Principal Component and Neural Network Analyses of Face Images: What Can Be Generalized in Gender Classification? Journal of Mathematical Psychology, vol. 41, pp. 398–412, 1997.

External links

- MIT Video Lecture on Eigenvalues and Eigenvectors at Google Video, from MIT OpenCourseWare

- ARPACK is a collection of FORTRAN subroutines for solving large scale (sparse) eigenproblems.

- IRBLEIGS has MATLAB code with similar capabilities to ARPACK. (See this paper for a comparison between IRBLEIGS and ARPACK.)

- LAPACK is a collection of FORTRAN subroutines for solving dense linear algebra problems

- ALGLIB includes a partial port of the LAPACK to C++, C#, Delphi, etc.

- "Eigenvalue (of a matrix)". PlanetMath.

- MathWorld: Eigenvector

- Online calculator for Eigenvalues and Eigenvectors

- Online Matrix Calculator Calculates eigenvalues, eigenvectors and other decompositions of matrices online

- Vanderplaats Research and Development - Provides the SMS eigenvalue solver for Structural Finite Element. The solver is in the GENESIS program as well as other commercial programs. SMS can be easily used with MSC.Nastran or NX/Nastran via DMAPs.

- What are Eigen Values? from PhysLink.com's "Ask the Experts"

- Templates for the Solution of Algebraic Eigenvalue Problems Edited by Zhaojun Bai, James Demmel, Jack Dongarra, Axel Ruhe, and Henk van der Vorst (a guide to the numerical solution of eigenvalue problems)