Search engine

A search engine is a program for retrieving documents that are stored on a computer or in a computer network such as the World Wide Web. After a search query has been formulated, often by entering a search term as text, the search engine returns a list of references to potentially relevant documents, usually shown with a title and a short excerpt from each document. Various search methods can be used for this.

The essential components or areas of responsibility of a search engine are:

- Creation and maintenance of an index (a data structure with information about documents),

- Processing of search queries (finding and sorting results) and

- Preparation of the results in the most meaningful way possible.

As a rule, data is collected automatically: on the Internet by web crawlers, and on a single computer by regularly reading all files in user-specified directories of the local file system.

Features

Search engines can be categorized according to a number of characteristics. The following features are largely independent. When designing a search engine, one can therefore choose one option from each of the groups of characteristics without this influencing the choice of the other characteristics.

Type of data

Different search engines can search different types of data. These can first be roughly divided into “document types” such as text, image, sound, video and others. Result pages are designed according to this category. When searching text documents, a text fragment containing the search terms (often called a snippet) is usually displayed. Image search engines display thumbnails of the matching images. A people search engine finds publicly available information on names and people, which is displayed as a list of links. Other specialized types include job search engines, industry search engines and product search engines. The latter are primarily used for online price comparisons, but there are also local offer searches that display online the products and offers of brick-and-mortar retailers.

A finer breakdown deals with data-specific properties that are not shared by all documents within a category. Staying with the example of text, Usenet articles can be searched by author, and HTML web pages by document title.

Depending on the data category, a further possible function is to restrict the search to a subset of all data in that category. This is generally implemented using additional search parameters that exclude part of the recorded data. Alternatively, a search engine can limit itself to collecting only suitable documents from the start. Examples are a search engine for weblogs (instead of the entire web) or search engines that only process documents from universities, only documents from a certain country, in a certain language or in a certain file format.

Data Source

A further criterion for categorization is the source of the data that the search engine collects. Usually the name of the search engine type already describes the source.

- Web search engines collect documents from the World Wide Web.

- Vertical search engines cover a selected area of the World Wide Web and only collect web documents on a specific topic such as football, health or law.

- Usenet search engines collect posts from the worldwide Usenet discussion medium.

- Intranet search engines are limited to the computers on a company's intranet.

- Enterprise search engines enable a central search across various data sources within a company, such as file servers, wikis, databases and the intranet.

- Desktop search programs make the local data of a single computer searchable.

If data is collected manually, through registration or by editors, the result is called a catalog or directory. In directories such as the Open Directory Project, the documents are organized hierarchically by topic, like a table of contents.

Implementation

This section describes differences in how search engines are implemented and operated.

- The most important group today are index-based search engines. These read in suitable documents and create an index, a data structure that is consulted when a search query is later processed. The disadvantage is the effort needed to maintain and store the index; the advantage is a faster search process. The most common form of this structure is an inverted index. The computer scientist Karen Spärck Jones, who combined statistical and linguistic methods, laid the groundwork for this development.

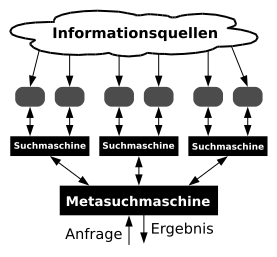

- Meta search engines send search queries in parallel to several index-based search engines and combine the individual results. The advantage is the larger amount of data and the simpler implementation, as no index has to be kept. The disadvantage is the relatively long time it takes to process a query. In addition, a ranking obtained purely by majority vote is of questionable value. The quality of the results may drop to that of the worst search engine queried. Meta search engines are particularly useful for rarely occurring search terms.

- Hybrid forms also exist. These have their own, often relatively small, index, but they also query other search engines and finally combine the individual results. So-called real-time search engines start the indexing process only after a query has been made. The pages found are always up to date, but the quality of the results is poor because a broad data basis is lacking, especially for less common search terms.

- A relatively new approach is that of distributed or federated search engines. A search query is forwarded to a large number of individual computers, each of which operates its own search engine, and the results are merged. The advantage is high resilience to failure thanks to decentralization and, depending on one's point of view, the absence of any central point of censorship. Ranking, however, is difficult to solve, i.e. sorting the fundamentally suitable documents by their relevance to the query.

- A special type of distributed search engine is based on the peer-to-peer principle and builds up a distributed index. On each of these peers, independent crawlers can record, in a censorship-resistant way, those parts of the web that the respective peer operator defines through simple local configuration. The best-known system, alongside some primarily academic projects (e.g. Minerva), is YaCy, free software released under the GNU GPL.
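The inverted index mentioned for index-based search engines can be illustrated with a minimal sketch. It assumes a tiny in-memory document collection; the function names `tokenize` and `build_inverted_index` and the sample documents are made up for illustration and do not describe how any particular search engine stores its index.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


def build_inverted_index(documents):
    """Map each term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


# Hypothetical sample collection, for illustration only.
documents = {
    1: "Search engines build an index",
    2: "An inverted index maps terms to documents",
    3: "Web crawlers collect documents",
}

index = build_inverted_index(documents)
print(sorted(index["index"]))      # [1, 2]
print(sorted(index["documents"]))  # [2, 3]
```

Looking up a term is then a single dictionary access instead of a scan over all documents, which is the speed advantage described above; the price is that the index has to be rebuilt or updated whenever documents change.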

Interpretation of the input

A user's search query is interpreted before the actual search and converted into a form that the internal search algorithm can process. This keeps the query syntax as simple as possible while still allowing complex queries. Many search engines support the logical combination of different search terms using Boolean operators. This makes it possible to find websites that contain certain terms but not others.
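How Boolean operators can be evaluated is sketched below using set operations. This is only one possible approach, assuming an index that maps each term to a set of document ids; the function `boolean_search` and the sample data are hypothetical.

```python
def boolean_search(index, all_docs, must=(), must_not=()):
    """Return documents containing every term in `must` and no term in `must_not`."""
    results = set(all_docs)
    for term in must:
        results &= index.get(term, set())   # AND: keep only documents with the term
    for term in must_not:
        results -= index.get(term, set())   # NOT: drop documents with the term
    return results


# Hypothetical index: term -> ids of the documents that contain it.
index = {
    "jaguar": {1, 2, 3},
    "car": {2},
    "animal": {1, 3},
}
all_docs = {1, 2, 3, 4}

hits = boolean_search(index, all_docs, must=["jaguar"], must_not=["car"])
print(sorted(hits))  # [1, 3]
```

The query "jaguar but not car" thus returns exactly the pages that contain the first term and lack the second.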

A more recent development is the ability of a number of search engines to derive and evaluate information that is implicit in the context of the search query itself. This reduces the ambiguities typically present in incomplete queries and increases the relevance of the results (that is, how well they match the conscious or unconscious expectations of the searcher). One or more underlying meanings of the query are inferred from the semantic similarities of the entered search terms (see also: semantic search). The result set is expanded to include hits for semantically related terms that were not explicitly entered. As a rule, this improves the results not only in quantity but also in quality (relevance), especially for incomplete queries and poorly chosen search terms, because the statistical methods used by search engines reproduce the search intent, which such terms express only vaguely, surprisingly well in practice (see also: semantic search engine and latent semantic indexing).

Further examples of information that is not explicitly contained in the entered search terms, but that a number of search engines use to modify and improve the results, are data transmitted invisibly with the query (location details and other information in the case of queries from the mobile network) and 'meaning preferences' inferred from the user's stored search history.

There are also search engines that can only be queried with strictly formalized query languages, but which can usually also answer very complex queries very precisely.

One capability that search engines have so far achieved only to a limited extent, or only on a limited information basis, is the processing of natural-language and fuzzy search queries (see also: semantic web).

Problems

Ambiguity

Search queries are often imprecise. For example, the search engine cannot decide on its own whether the German term Laster refers to a truck or to a vice (semantic correctness). Conversely, the search engine should not insist too stubbornly on the term entered. It should also take synonyms into account, so that a query for the German terms Rechner Linux also finds pages that contain the word Computer instead of Rechner.
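Taking synonyms into account can be sketched as a simple query expansion. The synonym table below is made up for illustration; a real search engine would more likely derive such relations from a thesaurus or from statistics, and the function names are assumptions.

```python
def expand_query(terms, synonyms):
    """Turn each query term into a group holding the term and its synonyms."""
    return [{term} | synonyms.get(term, set()) for term in terms]


def matches(document_terms, groups):
    """A document matches if it contains at least one term from every group."""
    return all(group & document_terms for group in groups)


# Hypothetical synonym table, for illustration only.
synonyms = {"car": {"automobile"}, "automobile": {"car"}}

groups = expand_query(["car", "repair"], synonyms)
page = {"automobile", "repair", "workshop"}

print(matches(page, groups))             # True: "automobile" stands in for "car"
print(matches({"car", "wash"}, groups))  # False: "repair" is missing
```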

Grammar

Many possible hits are lost because the user searches for one particular grammatical form of a search term. A search for the term auto finds all pages in the search index that contain this term, but not those containing the term autos. Some search engines allow searches using wildcards, which can partially circumvent this problem (e.g. the query auto* also matches autos or automatism), but the user has to be aware of this option. Stemming is also often used, in which words are reduced to their basic stem. On the one hand, this makes it possible to query for similar word forms (a search for beautiful flowers also finds beautiful flower); on the other hand, it reduces the number of terms in the index. The disadvantages of stemming can be compensated for by a linguistic search in which all word variants are generated. Another possibility is the use of statistical methods, with which the search engine evaluates the query, for example by looking at how various related terms appear together on websites, to determine whether a search for auto repair could also have meant autos repair or automatism repair.
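Wildcard matching and stemming can both be illustrated with a short sketch. The wildcard part uses `fnmatchcase` from Python's standard library; `naive_stem` is a deliberately crude toy that only strips a trailing "s" and is not a real stemming algorithm such as those used in production systems. The vocabulary is made up.

```python
from fnmatch import fnmatchcase


def wildcard_match(pattern, vocabulary):
    """Return all index terms that match a shell-style wildcard pattern."""
    return sorted(term for term in vocabulary if fnmatchcase(term, pattern))


def naive_stem(term):
    """Toy stemmer (assumption for illustration): strip one trailing 's'."""
    if len(term) > 3 and term.endswith("s"):
        return term[:-1]
    return term


# Hypothetical index vocabulary.
vocabulary = {"auto", "autos", "automatism", "flower", "flowers"}

print(wildcard_match("auto*", vocabulary))  # ['auto', 'automatism', 'autos']

stemmed = {naive_stem(term) for term in vocabulary}
print(sorted(stemmed))                      # ['auto', 'automatism', 'flower']
```

The wildcard query also pulls in an unrelated term, while stemming merges the singular and plural forms and shrinks the vocabulary from five terms to three, which mirrors the trade-offs described above.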

Punctuation marks

Technical terms and product names that contain a punctuation mark (e.g. Apple's web service .Mac or C/net) cannot be effectively searched for and found with common search engines. Exceptions have been made only for a few very common terms (e.g. .NET, C#, or C++).

Amount of data

The amount of data often grows very quickly. Big data deals with amounts of data that are too large, too complex, too fast-moving or too weakly structured to be evaluated using manual and conventional data processing methods.

Topicality

Many documents are updated frequently, which forces search engines to re-index these pages again and again according to definable rules. This is also necessary in order to recognize documents that have since been removed, so that they are no longer offered as results.
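One conceivable way to decide which documents need re-indexing is to compare content fingerprints between crawls. The sketch below assumes that the index keeps a SHA-256 hash per document; the function names and sample data are hypothetical, and real systems typically combine such checks with scheduling rules.

```python
import hashlib


def fingerprint(content):
    """Return a SHA-256 hex digest of the document content."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def plan_reindex(stored, current):
    """Compare stored fingerprints with freshly fetched content.

    stored:  document id -> fingerprint recorded at the last indexing run
    current: document id -> content fetched now
    Returns (ids to index or re-index, ids to remove from the index).
    """
    to_index = sorted(
        doc_id for doc_id, content in current.items()
        if stored.get(doc_id) != fingerprint(content)
    )
    to_remove = sorted(doc_id for doc_id in stored if doc_id not in current)
    return to_index, to_remove


# Hypothetical state: "a" changed, "b" is unchanged, "c" vanished, "d" is new.
stored = {"a": fingerprint("old"), "b": fingerprint("same"), "c": fingerprint("gone")}
current = {"a": "new", "b": "same", "d": "fresh"}

to_index, to_remove = plan_reindex(stored, current)
print(to_index)   # ['a', 'd']
print(to_remove)  # ['c']
```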

Technology

Implementing searches on very large amounts of data in such a way that availability is high (despite hardware failures and network bottlenecks) and response times are low (although several hundred megabytes of index data often have to be read and processed for each search query) places great demands on the search engine operator. Systems must be designed with a high degree of redundancy: both across the machines within a data center and across more than one data center, each offering the complete search engine functionality.

Web search engines

Web search engines have their origins in information retrieval systems. Data is collected by the web crawler of the respective search engine, such as Googlebot. Around a third of all searches on the Internet are related to people and their activities.

Search behavior

Search queries can be categorized in different ways. This classification plays a role in online marketing and search engine optimization (search engine marketing).

- Navigation-oriented search queries: In navigational queries, the user searches specifically for pages that they already know or believe to exist. The user's need for information is satisfied once the page has been found.

- Information-oriented search queries: In informational queries, the user looks for a wide range of information on a specific topic. The search ends when the information has been obtained. The pages used are usually not worked with any further.

- Transaction-oriented search queries (or commercial search queries): In transactional queries, the user looks for websites with which they intend to work, for example online shops or chats.

- Queries before a purchase: The user searches specifically for test reports or reviews of certain products, but is not yet looking for specific offers for a product.

- Action-oriented search queries: With the query, the user signals that they want to do something (for example download something or watch a video).

Presentation of the results

The page on which the search results are displayed to the user (sometimes referred to as the search engine results page, SERP for short) is divided in many web search engines, often also spatially, into natural listings and sponsored links. While the latter are only included in the search index for a fee, the former list all websites that match the search term.

To make web search engines easier to use, results are sorted by relevance (see search engine ranking), for which each search engine uses its own, mostly secret criteria. These include:

- The fundamental importance of a document, measured by the link structure, the quality of the referring documents and the text contained in the references.

- Frequency and position of the search terms in the respective document found.

- Scope and quality of the document.

- Classification and number of documents cited.
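Since the actual ranking criteria are mostly secret, the following is only a toy sketch of how some of the listed signals could be combined into a single score. The weights, the function names and the use of a plain inbound-link count as a stand-in for link-based importance are all assumptions made for illustration.

```python
def score(document_terms, query_terms, inbound_links,
          w_freq=1.0, w_pos=0.5, w_link=0.1):
    """Combine term frequency, first position and a link count into one score.

    Returns 0.0 when no query term occurs, so that non-matching documents
    are never ranked on their link count alone.
    """
    total = 0.0
    matched = False
    for term in query_terms:
        positions = [i for i, t in enumerate(document_terms) if t == term]
        if not positions:
            continue
        matched = True
        total += w_freq * len(positions)     # frequency of the search term
        total += w_pos / (1 + positions[0])  # earlier first occurrence scores higher
    if not matched:
        return 0.0
    return total + w_link * inbound_links    # crude stand-in for link-based importance


def rank(documents, query_terms):
    """Return ids of matching documents, best score first."""
    scored = {
        doc_id: score(terms, query_terms, links)
        for doc_id, (terms, links) in documents.items()
    }
    matching = [doc_id for doc_id, value in scored.items() if value > 0]
    return sorted(matching, key=lambda doc_id: scored[doc_id], reverse=True)


# Hypothetical documents: id -> (list of terms, number of inbound links).
documents = {
    "a": (["search", "engine", "ranking"], 2),
    "b": (["ranking", "of", "search", "results", "by", "search", "engines"], 0),
    "c": (["cooking", "recipes"], 10),
}

print(rank(documents, ["search"]))  # ['b', 'a']
```

Document "b" wins here because the term occurs twice, even though "a" mentions it earlier and has more inbound links; different weights would change the order, which is one reason why such criteria are tuned and kept confidential.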

See also: Regulation of the European Union on the promotion of fairness and transparency of online intermediation services and online search engines.

Problems

Law

Web search engines are mostly operated internationally and thus offer users results from servers located in other countries. Since the laws of different countries differ on what content is permitted, search engine operators often come under pressure to exclude certain pages from their results. For example, since 2006 the market-leading web search engines have not shown, for search queries from Germany, any websites that have been classified as harmful to young people by the Federal Review Board for Media Harmful to Minors (Bundesprüfstelle für jugendgefährdende Medien). The search engines do this voluntarily, as an automated process (filter module), within the framework of the Voluntary Self-Regulation of Multimedia Service Providers.

Topicality

Regularly downloading the billions of documents that a search engine has in its index places great demands on the search engine operator's network resources (traffic).

Spam

Using search engine spamming, some website operators try to outsmart the search engine's ranking algorithm in order to get a better ranking for certain search queries. This harms both the operators of the search engine and their customers, as the most relevant documents are no longer displayed first.

Privacy

Data protection is a particularly sensitive issue for people search engines. When a name is searched for in a people search engine, the results only concern data that is generally accessible. This data is available to the general public even without the search engine, without having to register with a service or the like. The people search engine itself does not hold any information of its own; it only provides access to it. Corrections or deletions must be made at the respective original source. Further legal questions arise with regard to the display of data by auto-completion (see the legal situation in Germany).

Environmental protection

Since every search query consumes (server) electricity, there are providers (so-called "green search engines") that rely on measures to offset or save CO2 (e.g. planting trees, reforesting the rainforest).

Market shares

Germany

| Search engine | Share of search queries in Germany, July 2020 |
|---|---|
| Google | 92.6% |
| Bing | 4.5% |
| Ecosia (uses Bing) | 0.8% |
| DuckDuckGo | 0.7% |
| Yahoo (uses Bing) | 0.6% |
| T-Online (uses Google) | 0.4% |
| Others | 0.4% |

Worldwide

| Search engine | Share of searches worldwide, December 2014 |
|---|---|
| Google | 68.54% |
| Baidu | 10.18% |
| Bing | 9.86% |
| Yahoo! | 9.26% |
| AOL | 0.47% |
| Ask | 0.27% |
| Other | 1.43% |

Literature

- David Gugerli: Search Engines. The world as a database (= Edition Unseld. Vol. 19). Suhrkamp, Frankfurt am Main 2009, ISBN 978-3-518-26019-7.

- Nadine Höchstötter, Dirk Lewandowski: What the users see - Structures in search engine results pages. In: Information Sciences. Vol. 179, No. 12, 2009, ISSN 0020-0255, pp. 1796-1812, doi:10.1016/j.ins.2009.01.028.

- Konrad Becker, Felix Stalder (eds.): Deep search: Politics of search beyond Google. StudienVerlag, Innsbruck/Vienna/Bozen 2009, ISBN 978-3-7065-4794-9.

- Dirk Lewandowski: Search Engines. In: Rainer Kuhlen, Wolfgang Semar, Dietmar Strauch (eds.): Basics of practical information and documentation. 6th edition. Walter de Gruyter, Berlin 2013, ISBN 978-3-11-025826-4.

- Dirk Lewandowski: Understanding search engines. 2nd edition. Springer, Heidelberg 2018, ISBN 978-3-662-56410-3.

- Dirk Lewandowski (ed.): Handbook Internet Search Engines. 3 volumes. AKA, Academic Publishing Society, Heidelberg 2009–2013:

- Volume 1: Dirk Lewandowski: User orientation in science and practice. 2009, ISBN 978-3-89838-607-4.

- Volume 2: Dirk Lewandowski: New Developments in Web Search. 2011, ISBN 978-3-89838-651-7.

- Volume 3: Dirk Lewandowski: Search engines between technology and society. 2013, ISBN 978-3-89838-680-7.

- Sven Konstantin: Poster “On the trail of search - the history of search engines”. In: Search Studies. April 23, 2018.

Web links

- Link catalog on search engines at curlie.org (formerly DMOZ)

- Dossier: The politics of searching at the bpb
