Flynn's classification

| | Single instruction | Multiple instruction |
|---|---|---|
| Single data | SISD | MISD |
| Multiple data | SIMD | MIMD |

The Flynn classification (also called Flynn's taxonomy) is a subdivision of computer architectures published by Michael J. Flynn in 1966. Architectures are classified by the number of instruction streams and data streams they can process. The four-letter abbreviations SISD, SIMD, MISD and MIMD are formed from the initial letters of the English terms; for example, SISD stands for "Single Instruction, Single Data".
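The naming scheme can be expressed as a small rule: the class depends only on whether there is exactly one or more than one instruction stream, and exactly one or more than one data stream. The following is a minimal sketch; the function name `flynn_class` is hypothetical and chosen only for illustration.

```python
def flynn_class(instruction_streams: int, data_streams: int) -> str:
    """Return the Flynn class for the given numbers of streams (each >= 1)."""
    if instruction_streams < 1 or data_streams < 1:
        raise ValueError("each stream count must be at least 1")
    # "S" (single) for exactly one stream, "M" (multiple) for more than one.
    instr = "S" if instruction_streams == 1 else "M"
    data = "S" if data_streams == 1 else "M"
    # The abbreviation is built from the initials: <S|M> I <S|M> D.
    return f"{instr}I{data}D"


if __name__ == "__main__":
    print(flynn_class(1, 1))  # SISD
    print(flynn_class(1, 8))  # SIMD
    print(flynn_class(3, 1))  # MISD
    print(flynn_class(4, 4))  # MIMD
```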

SISD (Single Instruction, Single Data)

SISD computers are traditional single-core computers that process their tasks sequentially. Examples include personal computers (PCs) and workstations built according to the von Neumann or Harvard architecture. In the von Neumann architecture, instructions and data share the same memory connection; in the Harvard architecture, they are kept separate.
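To make the sequential, one-instruction-one-datum behaviour concrete, here is a toy interpreter in the von Neumann style, where a single memory list holds both the program and the data. It is only a sketch: the accumulator design and the instruction names `LOAD`, `ADD`, `STORE` and `HALT` are hypothetical and do not correspond to any real instruction set.

```python
def run(memory: list) -> list:
    """Execute a toy program stored in the same memory as its data."""
    acc = 0  # single accumulator register
    pc = 0   # program counter
    while True:
        op, *args = memory[pc]  # fetch and decode from the shared memory
        pc += 1
        if op == "LOAD":
            acc = memory[args[0]]
        elif op == "ADD":
            acc += memory[args[0]]
        elif op == "STORE":
            memory[args[0]] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction {op!r}")


if __name__ == "__main__":
    # Addresses 0-3 hold the program, addresses 4-6 hold the data.
    mem = [("LOAD", 4), ("ADD", 5), ("STORE", 6), ("HALT",), 2, 3, 0]
    print(run(mem)[6])  # 5
```

A Harvard-style variant of the same sketch would keep the program and the data in two separate lists, so that instruction fetches and data accesses use different memories.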

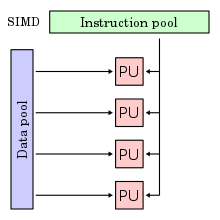

SIMD (Single Instruction, Multiple Data)

SIMD is an architecture of mainframes and supercomputers. SIMD computers, also known as array processors or vector processors, rapidly execute the same arithmetic operation on several input data streams that arrive or are available simultaneously. They are mainly used for processing image, sound and video data.

This approach suits these domains because their data can usually be processed in parallel to a high degree. In video editing, for example, the same operation is applied to each of the many individual pixels. Ideally, a single instruction would be executed that applies to all pixels at once.
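The idea of one instruction acting on many pixels can be modelled in a few lines. This is a conceptual sketch, not real SIMD code: Python evaluates the lanes one after another, and the register width `LANES = 4` and the helper names are assumptions made for illustration. What it shows is the instruction count: eight pixels need two "instructions" instead of eight scalar ones.

```python
LANES = 4  # assumed register width: four 8-bit pixels per "register"


def simd_apply(op, a: list, b: list) -> list:
    """Apply one binary operation to every lane of two equally wide 'registers'."""
    if len(a) != LANES or len(b) != LANES:
        raise ValueError("both operands must have exactly LANES elements")
    return [op(x, y) for x, y in zip(a, b)]


def saturating_add_u8(x: int, y: int) -> int:
    # Clamp the sum to the 8-bit maximum instead of wrapping around.
    return min(x + y, 255)


def brighten(pixels: list, amount: int) -> list:
    """Brighten 8-bit grey values; len(pixels) must be a multiple of LANES."""
    if len(pixels) % LANES != 0:
        raise ValueError("pixel count must be a multiple of LANES")
    out = []
    for i in range(0, len(pixels), LANES):
        # One "instruction" handles LANES pixels in a single step.
        out.extend(simd_apply(saturating_add_u8, pixels[i:i + LANES], [amount] * LANES))
    return out


if __name__ == "__main__":
    print(brighten([0, 100, 200, 250, 10, 20, 30, 40], 10))
    # [10, 110, 210, 255, 20, 30, 40, 50]
```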

In addition, the operations required in multimedia and communications are often not simple, individual operations but long chains of instructions. Fading an image in against a background, for example, is a complex process: a mask is formed using XOR, the background is prepared using AND and NOT, and the partial images are superimposed using OR. This requirement is met by providing new, more complex instructions. For example, the MMX instruction PANDN combines an inversion and an AND operation of the form x = y AND (NOT x).
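The overlay steps described above can be simulated on a 64-bit integer standing in for an MMX register that holds eight 8-bit pixels. This sketch only models the bit-level semantics in Python; the helper names and the convention that the pixel value `key` (here 0) marks a transparent foreground pixel are assumptions. The per-lane zero test in `opaque_mask` stands in for what real MMX code would typically do with a packed compare such as PCMPEQB.

```python
MASK64 = (1 << 64) - 1  # an MMX register is 64 bits wide


def pack(lanes: list) -> int:
    """Pack eight 8-bit values into one 64-bit integer (lane 0 = lowest byte)."""
    return int.from_bytes(bytes(lanes), "little")


def unpack(reg: int) -> list:
    return list(reg.to_bytes(8, "little"))


def pandn(x: int, y: int) -> int:
    # PANDN semantics: x = y AND (NOT x), limited to 64 bits.
    return (~x & y) & MASK64


def opaque_mask(fg: int, key: int) -> int:
    # Mask formation with XOR: a byte of fg XOR key is zero exactly where the
    # foreground pixel equals the transparent key value. Opaque lanes -> 0xFF.
    diff = fg ^ pack([key] * 8)
    return pack([0xFF if ((diff >> (8 * i)) & 0xFF) != 0 else 0x00 for i in range(8)])


def overlay(fg: int, bg: int, key: int = 0) -> int:
    mask = opaque_mask(fg, key)
    background = pandn(mask, bg)    # AND + NOT: clear bg where fg is opaque
    foreground = fg & mask          # AND: keep only the opaque fg pixels
    return background | foreground  # OR: superimpose the partial images


if __name__ == "__main__":
    fg = pack([10, 0, 20, 0, 30, 0, 40, 0])  # 0 = transparent
    bg = pack([1, 2, 3, 4, 5, 6, 7, 8])
    print(unpack(overlay(fg, bg)))  # [10, 2, 20, 4, 30, 6, 40, 8]
```

Without PANDN, the background step would need a separate NOT followed by an AND; with it, the inversion and the AND are a single instruction.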

Many modern processor architectures (such as PowerPC and x86) now include SIMD extensions: additional instruction sets that process several data elements of the same kind simultaneously with a single instruction.

However, a distinction must be made between instruction sets that only perform identical arithmetic operations in parallel and those that extend into DSP (digital signal processing) functionality; in this respect, AltiVec, for example, is considerably more powerful than 3DNow!.

SIMD units are standard in today's processors:

| Developer | Processor architecture | SIMD unit |
|---|---|---|
| ARM Ltd. | ARM (32/64-bit) | NEON |
| IBM | Power / PowerPC | AltiVec / VSX |
| Intel / AMD | x86 / AMD64 | 3DNow! (AMD only) / SSE / AVX |

See also:

- MMX, SSE, 3DNow!, AltiVec, SSE2, SSE3, SSSE3, SSE4, SSE5 and AVX

- Array processor - several processing units perform the same operation in parallel on different data

- Vector processor - quasi-parallel processing of multiple data through pipelining

MISD (Multiple Instruction, Single Data)

MISD is an architecture of mainframes and supercomputers. Assigning real systems to this class is difficult and therefore controversial; many argue that such systems do not actually exist. However, fault-tolerant systems that perform redundant calculations can be placed in this class. One example of such a processor system is a chess computer.

One implementation is macro pipelining, in which several processing units are connected in series. Another uses redundant data streams for error detection and correction.
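Both variants can be sketched in Python: several different instruction streams (here three independently written implementations of squaring, one deliberately faulty) process the same single data item and a majority vote masks the fault; and a macro pipeline passes one data stream through units connected in series. This is an illustrative model under stated assumptions: the function names are hypothetical, and the units run one after another here rather than simultaneously as in hardware.

```python
from collections import Counter


# Three independently written implementations of the same function act as
# three different instruction streams working on one data item.
def square_mul(x: int) -> int:
    return x * x


def square_pow(x: int) -> int:
    return x ** 2


def square_faulty(x: int) -> int:
    return x * x + 1  # deliberately wrong: simulates a faulty unit


def vote(results: list):
    """Majority vote; fails if no result is produced by more than half the units."""
    value, count = Counter(results).most_common(1)[0]
    if count <= len(results) // 2:
        raise RuntimeError("no majority: the fault cannot be masked")
    return value


def redundant(x, units):
    # Every unit receives the same single data item.
    return vote([unit(x) for unit in units])


def macro_pipeline(x, stages):
    # Processing units connected in series: each stage runs its own
    # instructions on the one data stream passing through.
    for stage in stages:
        x = stage(x)
    return x


if __name__ == "__main__":
    print(redundant(7, [square_mul, square_pow, square_faulty]))  # 49
    print(macro_pipeline(3, [lambda v: v + 1, lambda v: v * 2]))  # 8
```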

MIMD (Multiple Instruction, Multiple Data)

MIMD is an architecture of mainframes and supercomputers. MIMD computers simultaneously perform different operations on different input data streams. The tasks are distributed to the available resources, usually by one or more processors of the processor group, even at runtime. Each processor has access to the data of the other processors.
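As a small model of this behaviour, a thread pool can run different functions on different data, with tasks handed to idle workers at runtime. This is a sketch only: the three task functions are hypothetical examples, and because of CPython's global interpreter lock these threads illustrate the scheduling rather than true parallel execution of CPU-bound code (a `ProcessPoolExecutor` would be the usual choice for that).

```python
from concurrent.futures import ThreadPoolExecutor


def word_count(text: str) -> int:
    return len(text.split())


def checksum(data: bytes) -> int:
    return sum(data) % 256


def largest(numbers: list) -> int:
    return max(numbers)


# Each task pairs a different "instruction stream" (function) with its own data.
TASKS = [
    (word_count, "multiple instruction multiple data"),
    (checksum, b"\x01\x02\x03"),
    (largest, [4, 9, 2]),
]


def run_mimd(tasks, workers: int = 3) -> list:
    # The executor assigns tasks to idle worker threads at runtime.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(func, arg) for func, arg in tasks]
        return [f.result() for f in futures]


if __name__ == "__main__":
    print(run_mimd(TASKS))  # [4, 6, 9]
```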

A distinction is made between tightly coupled and loosely coupled systems. Tightly coupled systems are multiprocessor systems, while loosely coupled systems are multicomputer systems.

Multiprocessor systems share the available memory and are therefore shared-memory systems. These can be further subdivided into UMA (uniform memory access), NUMA (non-uniform memory access) and COMA (cache-only memory access).

MIMD tackles a problem by splitting it into sub-problems that are solved in parallel. This in turn creates a new problem: the different sub-tasks have to be synchronized with one another.
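The split-then-synchronize pattern can be sketched with threads sharing one variable, as in a shared-memory multiprocessor: each thread sums its own chunk independently, and a lock serializes the updates to the shared total so that no update is lost. The function `parallel_sum` and its chunking scheme are illustrative assumptions, not a prescribed method.

```python
import threading


def parallel_sum(values: list, n_threads: int = 4) -> int:
    total = 0                  # shared memory visible to all threads
    lock = threading.Lock()

    def worker(chunk: list) -> None:
        nonlocal total
        partial = sum(chunk)   # independent sub-problem, no coordination needed
        with lock:             # synchronization: one thread updates total at a time
            total += partial

    size = max(1, -(-len(values) // n_threads))  # ceiling division
    threads = [
        threading.Thread(target=worker, args=(values[i:i + size],))
        for i in range(0, len(values), size)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()               # wait until every sub-problem has finished
    return total


if __name__ == "__main__":
    print(parallel_sum(list(range(1, 101))))  # 5050
```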

One example is the Unix make command: several related source files can be compiled into machine code simultaneously on several processors.
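A make-style parallel build (as with GNU make's `-j` option) can be modelled as follows: independent translation units are compiled concurrently, and the link step must wait until all of them are done, which is the synchronization point. `compile_unit` and `link` are hypothetical stand-ins that only produce strings; they do not call a real compiler or linker.

```python
from concurrent.futures import ThreadPoolExecutor


def compile_unit(source: str) -> str:
    # Hypothetical stand-in for a compiler run: foo.c -> foo.o
    return source[:-2] + ".o" if source.endswith(".c") else source + ".o"


def link(objects: list, output: str) -> str:
    # Hypothetical stand-in for the linker: returns a description of the step.
    return f"{output}: " + " ".join(objects)


def build(sources: list, output: str = "app", jobs: int = 4) -> str:
    with ThreadPoolExecutor(max_workers=jobs) as pool:
        # Independent translation units are compiled concurrently;
        # list() blocks until every compile step has returned.
        objects = list(pool.map(compile_unit, sources))
    # Synchronization point: linking needs all object files.
    return link(objects, output)


if __name__ == "__main__":
    print(build(["main.c", "util.c", "io.c"]))  # app: main.o util.o io.o
```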

See also:

- Transputer

- Distributed system - autonomous processors that simultaneously process different commands on different data

See also

- MSIMD, an architecture that lies between the SIMD and MIMD classes

References

- M. Flynn: Some Computer Organizations and Their Effectiveness. IEEE Trans. Comput., Vol. C-21, pp. 948-960, 1972.

- Ralph Duncan: A Survey of Parallel Computer Architectures. IEEE Computer, February 1990, pp. 5-16.

- Sigrid Körbler: Parallel Computing - System Architectures and Methods of Programming, page 12.