The calculus of variations is a branch of mathematics that was developed around the middle of the 18th century, in particular by Leonhard Euler and Joseph-Louis Lagrange.

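The central element of the calculus of variations is the Euler-Lagrange equation

$$\frac{\partial \mathcal{L}}{\partial x} - \frac{\mathrm{d}}{\mathrm{d}t}\frac{\partial \mathcal{L}}{\partial \dot{x}} = 0,$$

which, for the Lagrangian $\mathcal{L} = T - V$ (kinetic minus potential energy), becomes precisely the Lagrange equation of classical mechanics.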

Basics

The calculus of variations deals with real-valued functions of functions, which are also called functionals. Such functionals can, for example, be integrals over an unknown function and its derivatives. One is interested in stationary functions, i.e. those for which the functional attains a maximum, a minimum (such functions are called extremals) or a saddle point. Some classic problems can be formulated elegantly with the help of functionals.

The key theorem of the calculus of variations is the Euler-Lagrange equation, more precisely the "Euler-Lagrange differential equation". It describes the stationarity condition of a functional. As with the task of determining the maxima and minima of a function, it is derived from an analysis of small changes around the assumed solution. The Euler-Lagrange differential equation is only a necessary condition. Adrien-Marie Legendre and Alfred Clebsch as well as Carl Gustav Jacob Jacobi provided further necessary conditions for the existence of an extremal. A sufficient but not necessary condition is due to Karl Weierstrass.

The methods of the calculus of variations appear in Hilbert space techniques, Morse theory and symplectic geometry. The term variation is used for all extremal problems of functions. Geodesy and differential geometry are areas of mathematics in which variation plays a role. A great deal of work has been done in particular on the problem of minimal surfaces, which occur, for example, with soap bubbles.

Application areas

The calculus of variations is the mathematical basis of all physical extremal principles and is therefore particularly important in theoretical physics, for example in the Lagrangian formalism of classical mechanics and in orbit determination, in quantum mechanics through the principle of least action, and in statistical physics in the context of density functional theory. In mathematics, the calculus of variations was used, for example, in Riemann's treatment of the Dirichlet principle for harmonic functions. The calculus of variations is also used in control theory when it comes to determining optimal controllers.

A typical application is the brachistochrone problem: along which curve in a gravitational field, from a point A to a point B that lies lower than A but not directly below it, does an object need the least time to traverse the curve? Among all curves between A and B, one seeks the one that minimizes the expression describing the travel time along the curve. This expression is an integral that contains the unknown function describing the curve from A to B, together with its derivatives.
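The travel-time functional can be made concrete with a small numerical sketch. The following Python snippet is only an illustration under stated assumptions: a frictionless bead starting at rest, a value of 9.81 m/s² for the gravitational acceleration, curves approximated by polylines, and the helper names `travel_time`, `straight_line` and `cycloid` chosen here for illustration. It compares a straight line with a cycloid arc, the curve known to solve the brachistochrone problem.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed value)

def travel_time(points, g=G):
    """Time for a bead starting at rest to slide without friction along a polyline.

    `points` is a list of (x, y) pairs with y measured downward from the start,
    so energy conservation gives the speed v = sqrt(2 * g * y).
    On each straight segment the acceleration is constant, hence the
    segment time is its length divided by the mean of the two end speeds.
    """
    total = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        length = math.hypot(x1 - x0, y1 - y0)
        v0 = math.sqrt(2 * g * y0)
        v1 = math.sqrt(2 * g * y1)
        total += 2 * length / (v0 + v1)
    return total

def straight_line(x_end, y_end, n=2000):
    """Vertices of the straight line from (0, 0) to (x_end, y_end)."""
    return [(x_end * i / n, y_end * i / n) for i in range(n + 1)]

def cycloid(radius, theta_end, n=2000):
    """Vertices on the cycloid x = r (th - sin th), y = r (1 - cos th)."""
    pts = []
    for i in range(n + 1):
        th = theta_end * i / n
        pts.append((radius * (th - math.sin(th)), radius * (1 - math.cos(th))))
    return pts

# A = (0, 0), B = (pi, 2): the end point of half a cycloid arch with radius 1.
t_line = travel_time(straight_line(math.pi, 2.0))
t_cycloid = travel_time(cycloid(1.0, math.pi))
print(f"straight line: {t_line:.4f} s, cycloid: {t_cycloid:.4f} s")
```

Under these assumptions the cycloid yields the shorter time, although it is the longer path.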

A tool from the analysis of real functions of one real variable

In the following, an important technique of the calculus of variations is demonstrated, in which a necessary condition for a local minimum of a real function of a single real variable is carried over into a necessary condition for a local minimum of a functional. This condition can then often be used to set up equations that characterize the stationary functions of a functional.

Let a functional $I\colon X \to \mathbb{R}$ be given on a function space $X$ (which must be at least a topological space). Suppose the functional has a local minimum at the point $x \in X$.

The following simple trick replaces the functional $I$, which is "difficult to handle", with a real function $F(\alpha)$ that depends on only one real parameter $\alpha$ "and is correspondingly easier to handle".

For some $\epsilon > 0$, let $x_\alpha$ ($-\epsilon < \alpha < \epsilon$) be any family of functions $x_\alpha \in X$ that is parameterized continuously by the real parameter $\alpha$. Let the function $x_0$ (i.e. $x_\alpha$ for $\alpha = 0$) be equal to the stationary function $x$. In addition, let the function $F\colon (-\epsilon, \epsilon) \to \mathbb{R}$ defined by the equation

$$F(\alpha) := I(x_\alpha)$$

be differentiable at the point $\alpha = 0$.

The continuous function $F$ then attains a local minimum at $\alpha = 0$, since $x_0 = x$ is a local minimum of $I$.

From the analysis of real functions of one real variable it is known that then $\left.\frac{\mathrm{d}F}{\mathrm{d}\alpha}\right|_{\alpha=0} = 0$ holds. Applied to the functional, this means

$$0 = \left.\frac{\mathrm{d}}{\mathrm{d}\alpha} I(x_\alpha)\right|_{\alpha=0}.$$

When setting up the desired equations for stationary functions, one then uses the fact that the above equation must hold for every ("well-behaved") family $x_\alpha$ with $x_0 = x$.

This will be demonstrated in the next section using the Euler equation.
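As a minimal numerical sketch of this reduction to a one-parameter function, consider an assumed example that is not taken from the text: the functional that integrates the squared derivative over [0, 1], with boundary values 0 and 1, its minimizer x(t) = t, and the family x_α(t) = t + α sin(πt). The names `action`, `F` and `derivative_at_zero` are hypothetical helpers. The derivative of F(α) = I(x_α) at α = 0 should then vanish up to rounding error.

```python
import math

def action(x_dot, t_a=0.0, t_e=1.0, n=2000):
    """Midpoint-rule approximation of I(x) = integral of x_dot(t)**2 over [t_a, t_e]."""
    h = (t_e - t_a) / n
    return sum(x_dot(t_a + (i + 0.5) * h) ** 2 for i in range(n)) * h

def F(alpha):
    """F(alpha) = I(x_alpha) for the family x_alpha(t) = t + alpha * sin(pi * t)."""
    return action(lambda t: 1.0 + alpha * math.pi * math.cos(math.pi * t))

def derivative_at_zero(f, step=1e-4):
    """Central difference approximation of f'(0)."""
    return (f(step) - f(-step)) / (2 * step)

dF0 = derivative_at_zero(F)
print(f"F(0) = {F(0.0):.6f}, F'(0) = {dF0:.2e}, F(0.1) = {F(0.1):.6f}")
```

Every member of the family keeps the boundary values fixed because sin(πt) vanishes at t = 0 and t = 1; only the interior of the curve is perturbed.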

Euler-Lagrange equation; variational derivative; further necessary or sufficient conditions

Let two points in time $t_a, t_e \in \mathbb{R}$ with $t_e > t_a$ be given, together with a function that is twice continuously differentiable in all its arguments, the Lagrangian

$$\mathcal{L}\colon [t_a, t_e] \times G \to \mathbb{R}, \quad G \subset \mathbb{R}^n \times \mathbb{R}^n \text{ open}.$$

For example, for the Lagrangian of the free relativistic particle with mass $m$ and $c = 1$,

$$\mathcal{L}(t, x, v) = -m\sqrt{1 - v^2},$$

the domain $G$ is the Cartesian product of $\mathbb{R}^3$ and the interior of the unit sphere.

As the function space $X$ we choose the set of all twice continuously differentiable functions

$$x\colon [t_a, t_e] \to \mathbb{R}^n$$

that take the prescribed positions $x_a$ and $x_e$ at the initial time $t_a$ and the final time $t_e$, respectively:

$$x(t_a) = x_a, \quad x(t_e) = x_e,$$

and whose values, together with the values of their derivative, lie in $G$:

$$\forall t \in [t_a, t_e]\colon \left(x(t), \frac{\mathrm{d}x}{\mathrm{d}t}(t)\right) \in G.$$

With the Lagrangian $\mathcal{L}$, the functional $I\colon X \to \mathbb{R}$, the action, is defined by

$$I(x) := \int_{t_a}^{t_e} \mathcal{L}(t, x(t), \dot{x}(t))\,\mathrm{d}t.$$

We are looking for the function $x \in X$ that minimizes the action $I$.

Following the technique presented in the previous section, we examine all differentiable one-parameter families $x_\alpha$ ($-\epsilon < \alpha < \epsilon$) that pass through the stationary function $x$ of the functional for $\alpha = 0$ (so that $x_0 = x$). We use the equation derived in the last section,

$$0 = \left.\frac{\mathrm{d}}{\mathrm{d}\alpha} I(x_\alpha)\right|_{\alpha=0} = \left[\frac{\mathrm{d}}{\mathrm{d}\alpha}\int_{t_a}^{t_e} \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\mathrm{d}t\right]_{\alpha=0}.$$

Moving the differentiation with respect to the parameter $\alpha$ inside the integral yields, by the chain rule,

$$\begin{aligned} 0 &= \left[\int_{t_a}^{t_e} \left(\partial_2 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha x_\alpha(t) + \partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha \dot{x}_\alpha(t)\right)\mathrm{d}t\right]_{\alpha=0} \\ &= \left[\int_{t_a}^{t_e} \partial_2 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha x_\alpha(t)\,\mathrm{d}t + \int_{t_a}^{t_e} \partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha \dot{x}_\alpha(t)\,\mathrm{d}t\right]_{\alpha=0}. \end{aligned}$$

Here $\partial_2, \partial_3$ denote the derivatives with respect to the second and third argument, and $\partial_\alpha$ denotes the partial derivative with respect to the parameter $\alpha$.

It will later prove convenient if the second integral contains $\partial_\alpha x_\alpha(t)$, as the first integral does, instead of $\partial_\alpha \dot{x}_\alpha(t)$. This can be achieved through integration by parts:

$$\begin{aligned} 0 = \Big[ & \int_{t_a}^{t_e} \partial_2 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha x_\alpha(t)\,\mathrm{d}t + \left[\partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha x_\alpha(t)\right]_{t=t_a}^{t_e} \\ & - \int_{t_a}^{t_e} \frac{\mathrm{d}}{\mathrm{d}t}\left(\partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\right)\partial_\alpha x_\alpha(t)\,\mathrm{d}t \Big]_{\alpha=0}. \end{aligned}$$

At the points $t = t_a$ and $t = t_e$, the conditions $x_\alpha(t_a) = x_a$ and $x_\alpha(t_e) = x_e$ hold independently of $\alpha$. Differentiating these two constants with respect to $\alpha$ gives $\partial_\alpha x_\alpha(t_a) = \partial_\alpha x_\alpha(t_e) = 0$. Therefore the term

$$\left[\partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\,\partial_\alpha x_\alpha(t)\right]_{t=t_a}^{t_e}$$

vanishes, and after combining the integrals and factoring out $\partial_\alpha x_\alpha$ one obtains the equation

$$0 = \left[\int_{t_a}^{t_e} \left(\partial_2 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t)) - \frac{\mathrm{d}}{\mathrm{d}t}\partial_3 \mathcal{L}(t, x_\alpha(t), \dot{x}_\alpha(t))\right)\partial_\alpha x_\alpha(t)\,\mathrm{d}t\right]_{\alpha=0}$$

and, with $x_\alpha(t)|_{\alpha=0} = x(t)$,

$$0 = \int_{t_a}^{t_e} \left(\partial_2 \mathcal{L}(t, x(t), \dot{x}(t)) - \frac{\mathrm{d}}{\mathrm{d}t}\partial_3 \mathcal{L}(t, x(t), \dot{x}(t))\right)\left[\partial_\alpha x_\alpha(t)\right]_{\alpha=0}\,\mathrm{d}t.$$

Apart from the initial time and the final time, $x_\alpha(t)$ is not subject to any restrictions. Hence the time functions $t \mapsto \left[\partial_\alpha x_\alpha(t)\right]_{\alpha=0}$ are arbitrary twice continuously differentiable functions of time, apart from the conditions $\partial_\alpha x_\alpha(t_a) = \partial_\alpha x_\alpha(t_e) = 0$. According to the fundamental lemma of the calculus of variations, the last equation can therefore hold for all admissible $\left[\partial_\alpha x_\alpha\right]_{\alpha=0}$ only if the factor $\partial_2 \mathcal{L} - \frac{\mathrm{d}}{\mathrm{d}t}\partial_3 \mathcal{L}$ is equal to zero on the entire integration interval (this is explained in more detail in the remarks). This gives the Euler-Lagrange equation for the stationary function $x$,

$$\partial_2 \mathcal{L}(t, x(t), \dot{x}(t)) - \frac{\mathrm{d}}{\mathrm{d}t}\partial_3 \mathcal{L}(t, x(t), \dot{x}(t)) = 0,$$

which must be satisfied for all $t \in (t_a, t_e)$.
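The Euler-Lagrange condition can be checked numerically for a given curve. The following Python sketch is an illustration only: it assumes a hypothetical example Lagrangian (a unit mass on a unit spring, which does not appear in the text) and approximates all derivatives by central differences, so the residual is zero only up to discretization error. The helper names `central_diff` and `euler_lagrange_residual` are chosen here for illustration.

```python
import math

def central_diff(f, s, h=1e-3):
    """Central difference approximation of f'(s)."""
    return (f(s + h) - f(s - h)) / (2 * h)

def euler_lagrange_residual(lagrangian, x, t, h=1e-3):
    """Approximate d_2 L - d/dt d_3 L along the curve x at time t."""
    def x_dot(s):
        return central_diff(x, s, h)

    def dL_dx(s):  # derivative with respect to the second argument
        return central_diff(lambda q: lagrangian(s, q, x_dot(s)), x(s), h)

    def dL_dv(s):  # derivative with respect to the third argument
        return central_diff(lambda v: lagrangian(s, x(s), v), x_dot(s), h)

    return dL_dx(t) - central_diff(dL_dv, t, h)

def oscillator(t, x, v):
    """Hypothetical example Lagrangian: L = v^2 / 2 - x^2 / 2."""
    return 0.5 * v * v - 0.5 * x * x

# sin(t) solves x'' = -x, so its residual should be close to zero;
# t^2 does not, so its residual -x - x'' is clearly non-zero.
res_solution = euler_lagrange_residual(oscillator, math.sin, 1.0)
res_other = euler_lagrange_residual(oscillator, lambda t: t * t, 1.0)
print(f"residual for sin(t): {res_solution:.2e}, residual for t^2: {res_other:.3f}")
```

Finite differences are used here only to keep the sketch dependency-free; a symbolic or automatic differentiation tool would avoid the discretization error.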

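The quantity that is required to vanish is also called the Euler derivative of the Lagrangian $\mathcal{L}$,

$$\frac{\delta \mathcal{L}}{\delta x}(t) := \partial_2 \mathcal{L}(t, x(t), \dot{x}(t)) - \frac{\mathrm{d}}{\mathrm{d}t}\partial_3 \mathcal{L}(t, x(t), \dot{x}(t)).$$

In physics books in particular, the derivative $\partial_\alpha|_{\alpha=0}$ is referred to as the variation. Then $\delta x = \partial_\alpha x_\alpha|_{\alpha=0}$ is the variation of $x$. The variation of the action,

$$\delta I = \int_{t_a}^{t_e} \frac{\delta \mathcal{L}}{\delta x}(t)\,\delta x(t)\,\mathrm{d}t,$$

is, as in the total differential $\mathrm{d}f = \sum_i \frac{\partial f}{\partial x_i}\,\mathrm{d}x_i$, a linear form in the variations of the arguments; its coefficients $\frac{\delta I}{\delta x(t)}$ are called the variational derivative of the functional $I$. In the case under consideration it is the Euler derivative of the Lagrangian,

$$\frac{\delta I}{\delta x(t)} = \frac{\delta \mathcal{L}}{\delta x}(t).$$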
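The statement that the variation of the action is the integral of the Euler derivative times the variation of the curve can be checked on a curve that is not stationary. The sketch below assumes an example of its own choosing: the Lagrangian v²/2, the curve x(t) = t² on [0, 1] and the variation h(t) = sin(πt), which vanishes at both end points. The helper names are hypothetical. It compares a finite-difference derivative of the action with the integral formula.

```python
import math

def integrate(f, a=0.0, b=1.0, n=4000):
    """Midpoint rule on [a, b]."""
    h = (b - a) / n
    return sum(f(a + (i + 0.5) * h) for i in range(n)) * h

# Assumed example: L(t, x, v) = v^2 / 2, curve x(t) = t^2, variation h(t) = sin(pi t).
def x_dot(t):
    return 2.0 * t

def h_dir(t):
    return math.sin(math.pi * t)

def h_dot(t):
    return math.pi * math.cos(math.pi * t)

def action(alpha):
    """I(x + alpha * h) for L = v^2 / 2."""
    return integrate(lambda t: 0.5 * (x_dot(t) + alpha * h_dot(t)) ** 2)

# Left-hand side: derivative of the action with respect to alpha at alpha = 0.
step = 1e-4
variation_numeric = (action(step) - action(-step)) / (2 * step)

# Right-hand side: the Euler derivative of L = v^2 / 2 along x(t) = t^2 is
# d_2 L - d/dt d_3 L = 0 - x''(t) = -2, integrated against h.
variation_formula = integrate(lambda t: -2.0 * h_dir(t))

print(variation_numeric, variation_formula, -4 / math.pi)
```

Both numbers agree with the closed-form value -4/π up to discretization error, and they are non-zero because t² does not satisfy the Euler-Lagrange equation of this Lagrangian.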

Remarks

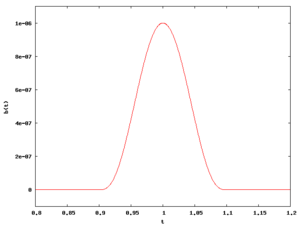

In deriving the Euler-Lagrange equation, we used the fact that a continuous function $a$ must vanish identically if, for all at least twice continuously differentiable functions $b$ with $b(t_a) = b(t_e) = 0$, the integral

$$\int_{t_a}^{t_e} a(t)\,b(t)\,\mathrm{d}t$$

is zero.

This is easy to see once one notes that, for example,

$$b(t) = \begin{cases} (t - t_0 + \epsilon)^3 (t_0 + \epsilon - t)^3 & \text{for } |t - t_0| < \epsilon, \\ 0 & \text{otherwise} \end{cases}$$

is a twice continuously differentiable function that is positive in an $\epsilon$-neighborhood of an arbitrarily chosen time $t_0 \in (t_a, t_e)$ and zero elsewhere. If there were a point $t_0$ at which the function $a$ were greater or less than zero, then by continuity it would also be greater or less than zero in an entire neighborhood $(t_0 - \epsilon, t_0 + \epsilon)$ of this point. With the function $b$ just defined, however, the integral would then also be greater or less than zero, contradicting the assumption on $a$. The assumption that $a$ is non-zero at some point $t_0$ is therefore false, and the function $a$ is indeed identically zero.
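The contradiction argument can be illustrated numerically. The Python sketch below is an assumption-laden example: it picks one possible twice continuously differentiable bump (a product of two cubic factors), a test function that is positive at the chosen point, and the interval [0, 1]; none of these specific choices is prescribed by the argument. The integral of the product then comes out strictly positive.

```python
def bump(t, t0, eps):
    """One possible C^2 bump: positive for |t - t0| < eps, zero elsewhere."""
    if abs(t - t0) >= eps:
        return 0.0
    return (t - t0 + eps) ** 3 * (t0 + eps - t) ** 3

def integrate(f, a, b, n=20000):
    """Midpoint rule on [a, b]."""
    h = (b - a) / n
    return sum(f(a + (i + 0.5) * h) for i in range(n)) * h

def a_func(t):
    """Assumed continuous test function; it is positive at t0 = 0.8."""
    return t - 0.5

t0, eps = 0.8, 0.1
value = integrate(lambda t: a_func(t) * bump(t, t0, eps), 0.0, 1.0)
print(f"integral of a * b over [0, 1]: {value:.3e}")
```

Because the bump vanishes outside the small neighborhood, the sign of the integral is decided entirely by the sign of the test function near the chosen point, which is the core of the lemma.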

If the function space $X$ is an affine space, the family $x_\alpha$ is often defined in the literature as a sum $x_\alpha = x + \alpha h$ with a freely selectable time function $h$ that must satisfy the condition $h(t_a) = h(t_e) = 0$. The derivative $\left.\frac{\mathrm{d}}{\mathrm{d}\alpha} I(x_\alpha)\right|_{\alpha=0}$ is then precisely the Gateaux derivative $\delta I(x, h)$ of the functional $I$ at the point $x$ in the direction $h$. The version presented here appears to the author somewhat more convenient when the set of functions $X$ is no longer an affine space (for example, when it is restricted by a nonlinear constraint; see, for example, Gauss's principle of least constraint). It is presented in more detail in the literature (see the references under "Individual evidence") and follows the definition of tangent vectors on manifolds.

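In the case of a further, constraining functional $J\colon X \to \mathbb{R}$ that restricts the function space $X$ by requiring $J(x) = 0$, the method of Lagrange multipliers can be applied in analogy to the real case:

$$0 = \left.\frac{\mathrm{d}}{\mathrm{d}\alpha}\bigl(I(x_\alpha) + \lambda J(x_\alpha)\bigr)\right|_{\alpha=0}$$

for arbitrary $x_\alpha$ and a fixed $\lambda \in \mathbb{R}$.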

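Generalization to higher derivatives and dimensions

The above derivation by means of integration by parts can be carried over to variational problems of the type

$$I(x) = \int_\Omega \mathcal{L}\bigl(t, x(t), \dots, D^\alpha x(t), \dots\bigr)\,\mathrm{d}^n t \to \min,$$

in which derivatives $D^\alpha$ (see multi-index notation), including those of higher order, appear among the dependencies, for example up to order $k$. In this case the Euler-Lagrange equation is

$$\frac{\delta \mathcal{L}}{\delta x} = 0,$$

where the Euler derivative is to be understood as

$$\frac{\delta \mathcal{L}}{\delta x} = \sum_{|\alpha| \le k} (-1)^{|\alpha|} D^\alpha \frac{\partial \mathcal{L}}{\partial (D^\alpha x)}$$

(here $D^\alpha x$ in the denominator symbolically denotes, in a self-explanatory manner, the corresponding argument of $\mathcal{L}$, while $D^\alpha x(t)$ stands for the concrete value of the derivative of $x(t)$). In particular, the sum also includes $\alpha = 0$.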
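A one-dimensional sketch may help with the higher-order case. It assumes an example not taken from the text: the Lagrangian (x'')²/2 on [0, 1] with x and x' prescribed at both ends. For a Lagrangian that depends only on x'', the alternating sum of derivatives reduces to the fourth derivative of x, so cubic polynomials are the stationary candidates. The Python code below, with hypothetical helper names, checks numerically that the first variation vanishes at the cubic x(t) = 3t² - 2t³ for a variation that keeps x and x' fixed at the end points.

```python
import math

def integrate(f, a=0.0, b=1.0, n=4000):
    """Midpoint rule on [a, b]."""
    h = (b - a) / n
    return sum(f(a + (i + 0.5) * h) for i in range(n)) * h

# Assumed example: L = (x'')^2 / 2 with x(0) = 0, x(1) = 1, x'(0) = x'(1) = 0.
def x_ddot(t):
    """Second derivative of the cubic x(t) = 3 t^2 - 2 t^3."""
    return 6.0 - 12.0 * t

def h_ddot(t):
    """Second derivative of h(t) = sin(pi t)^2; h and h' vanish at t = 0 and t = 1."""
    return 2.0 * math.pi ** 2 * math.cos(2.0 * math.pi * t)

def action(alpha):
    """I(x + alpha * h) for L = (x'')^2 / 2."""
    return integrate(lambda t: 0.5 * (x_ddot(t) + alpha * h_ddot(t)) ** 2)

step = 1e-4
first_variation = (action(step) - action(-step)) / (2 * step)
print(f"first variation: {first_variation:.2e}")
print(f"I(x) = {action(0.0):.4f}, I(x + 0.1 h) = {action(0.1):.4f}")
```

Since the Lagrangian contains second derivatives, the admissible variations must leave both the values and the first derivatives at the end points unchanged; otherwise the boundary terms from the two integrations by parts would not vanish.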


Literature

Older books:

- Friedrich Stegmann: Textbook of the calculus of variations and its application in studies of the maximum and minimum. Luckhardt, Kassel 1854.
- Oskar Bolza: Lectures on the calculus of variations. B. G. Teubner, Leipzig et al. 1909.
- Paul Funk: Calculus of variations and its application in physics and technology (= The basic teachings of the mathematical sciences in individual representations. 94, ISSN 0072-7830). 2nd edition. Springer, Berlin et al. 1970.
- Adolf Kneser: Calculus of variations. In: Encyclopedia of Mathematical Sciences, including its applications. Volume 2: Analysis. Part 1. B. G. Teubner, Leipzig 1898, pp. 571-625.
- Paul Stäckel (ed.): Treatises on the calculus of variations. 2 parts. Wilhelm Engelmann, Leipzig 1894:
  - Part 1: Treatises by Joh. Bernoulli (1696), Jac. Bernoulli (1697) and Leonhard Euler (1744) (= Ostwald's Classics of the Exact Sciences. 46, ISSN 0232-3419). 1894.
  - Part 2: Treatises by Lagrange (1762, 1770), Legendre (1786) and Jacobi (1837) (= Ostwald's Classics of the Exact Sciences. 47). 1894.

Individual evidence

- Brachistochrone problem.
- Vladimir I. Smirnow: Course of higher mathematics (= University books for mathematics. Vol. 5a). Part 4, 1st (14th edition, German-language edition of the 6th Russian edition). VEB Deutscher Verlag der Wissenschaften, Berlin 1988, ISBN 3-326-00366-8.
- See also Helmut Fischer, Helmut Kaul: Mathematics for physicists. Volume 3: The calculus of variations, differential geometry, mathematical foundations of general relativity. 2nd, revised edition. Teubner, Stuttgart et al. 2006, ISBN 3-8351-0031-9.
