The indicator function (also called the characteristic function) is a function in mathematics that is distinguished by taking only one or two values. It makes it possible to describe complicated sets with mathematical precision and to define functions such as the Dirichlet function in terms of them.

Definition

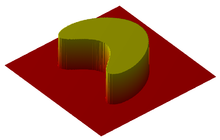

Two-dimensional indicator function of a subset of a square

There are several notations for the characteristic function in the literature. In addition to $\chi_T$, which is used here, the notations $1_T$ and $\mathbb{1}_T$ are also common.

Real-valued characteristic function

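Let a basic set $X$ and a subset $T \subseteq X$ be given. The function $\chi_T \colon X \to \{0, 1\}$ defined by

$$\chi_T(x) = \begin{cases} 1 & \text{if } x \in T, \\ 0 & \text{otherwise} \end{cases}$$

is then called the characteristic function or indicator function of the set $T$. The assignment $T \mapsto \chi_T$ provides a bijection between the power set $\mathcal{P}(X)$ and the set of all functions from $X$ into the set $\{0, 1\}$.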
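As a minimal sketch of this definition, assuming a small finite basic set so that everything can be enumerated, the following Python snippet builds the indicator function of a set as an ordinary function and encodes every subset by its table of 0/1 values. The helper names `indicator` and `powerset` are illustrative and not taken from any library.

```python
from itertools import chain, combinations


def indicator(T):
    """Return the indicator function of T: x -> 1 if x is in T, else 0."""
    T = frozenset(T)
    return lambda x: 1 if x in T else 0


def powerset(X):
    """All subsets of the finite set X, as frozensets."""
    items = list(X)
    subsets = chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1)
    )
    return [frozenset(s) for s in subsets]


X = [1, 2, 3]                      # assumed basic set
chi_T = indicator({1, 3})          # indicator of T = {1, 3}
values = [chi_T(x) for x in X]     # value table of chi_T on X

# Each subset T is encoded by the tuple (chi_T(x) for x in X).
tables = {tuple(indicator(T)(x) for x in X) for T in powerset(X)}
```

Because the set `tables` contains as many distinct value tables as there are subsets, and as many as there are 0/1-valued functions on the three-element set, the example illustrates the bijection for this particular choice of basic set.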

Extended characteristic function

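In optimization, the characteristic function is sometimes defined as an extended real-valued function. Here the function $\chi_T \colon X \to \mathbb{R} \cup \{+\infty\}$ defined by

$$\chi_T(x) = \begin{cases} 0 & \text{if } x \in T, \\ +\infty & \text{otherwise} \end{cases}$$

is called the characteristic function or indicator function of the set $T$. It is a proper function when $T$ is not empty.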
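The role of the extended definition in optimization can be sketched as follows: adding the extended indicator of a set to an objective turns a constrained problem into an unconstrained one. The objective `f`, the constraint set $[0, 2]$ and the coarse grid search are all assumptions made for illustration only.

```python
import math


def extended_indicator(in_T):
    """Return the extended indicator: 0 on T, +inf outside (in_T tests membership)."""
    return lambda x: 0.0 if in_T(x) else math.inf


def f(x):
    """Hypothetical objective: a parabola with unconstrained minimum at x = 3."""
    return (x - 3.0) ** 2


# Constraint set T = [0, 2], modelled through its extended indicator.
chi_T = extended_indicator(lambda x: 0.0 <= x <= 2.0)

# Coarse grid search over [-5, 5]; points outside T get the value +inf.
grid = [i / 100.0 for i in range(-500, 501)]
best_x = min(grid, key=lambda x: f(x) + chi_T(x))
best_value = f(best_x) + chi_T(best_x)
```

The minimizer of the penalized objective lies on the boundary of the constraint set, because every point outside the set is assigned the value $+\infty$ and can never be selected.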

Partial characteristic function

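When forming the partial characteristic function, the domain is restricted to $T$; in the notation of partial functions, it can be described as follows:

$$\chi_T(x) = \begin{cases} 1 & \text{if } x \in T, \\ \text{undefined} & \text{otherwise.} \end{cases}$$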
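In a program, "undefined" is usually read as "the computation does not terminate". A minimal sketch, assuming as a purely illustrative choice the set of positive integers whose Collatz sequence reaches 1: the function below returns 1 for every element of that set and would loop forever on any input outside it (whether such inputs exist is an open question).

```python
def partial_chi_collatz(n):
    """Partial characteristic function of {n >= 1 : Collatz sequence of n reaches 1}.

    Returns 1 if n belongs to the set. For n outside the set the loop never
    ends, which is how "undefined" shows up in a program.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    while n != 1:
        # One Collatz step: halve even numbers, map odd n to 3n + 1.
        n = n // 2 if n % 2 == 0 else 3 * n + 1
    return 1
```

The function never returns 0: membership can be confirmed by waiting for the result, but non-membership is never reported.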

Use of the different definitions

The real-valued characteristic function is often used in integration theory and stochastics, because it allows integrals of a function $f$ over the set $T$ to be replaced by integrals of $\chi_T \cdot f$ over the basic set $X$:

$$\int_T f(x)\,\mathrm{d}x = \int_X \chi_T(x)\,f(x)\,\mathrm{d}x.$$
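The replacement of an integral over a subset by an integral over the whole basic set can be checked numerically. The sketch below assumes the basic set $[0, 2]$, the subset $[0.5, 1]$ and the integrand $x^2$, and uses a simple midpoint rule; all of these choices are illustrative.

```python
def indicator_interval(a, b):
    """Indicator function of the interval [a, b]."""
    return lambda x: 1.0 if a <= x <= b else 0.0


def midpoint_integral(g, lo, hi, n=20000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))


def f(x):
    """Illustrative integrand."""
    return x * x


chi_T = indicator_interval(0.5, 1.0)                 # T = [0.5, 1]
over_T = midpoint_integral(f, 0.5, 1.0)              # integral of f over T
over_X = midpoint_integral(lambda x: chi_T(x) * f(x), 0.0, 2.0)  # over X = [0, 2]
```

Both values approximate the exact integral $7/24$; the second form needs no special handling of the integration limits, which is the kind of case distinction the indicator function removes.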

In this way, for example, case distinctions can often be avoided.

The extended characteristic function is used in optimization to restrict functions to subdomains on which they have certain desired properties, such as convexity, or to model constraint sets.

The partial characteristic function is used in computability theory.

Properties and calculation rules of the real-valued characteristic function

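- The set $T$ is uniquely determined by its characteristic function, since

$$T = \chi_T^{-1}(\{1\}) = \{x \in X \mid \chi_T(x) = 1\}.$$

- For $S, T \subseteq X$, the equality $\chi_S = \chi_T$ of the functions therefore implies the equality $S = T$ of the sets.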

- The characteristic function $\chi_\emptyset$ of the empty set is the zero function. The characteristic function $\chi_X$ of the basic set $X$ is the constant function with the value 1.

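- Let sets $A, B \subseteq X$ be given. Then for the intersection

$$\chi_{A \cap B} = \min(\chi_A, \chi_B) = \chi_A \cdot \chi_B$$

- and for the union

$$\chi_{A \cup B} = \max(\chi_A, \chi_B) = \chi_A + \chi_B - \chi_A \cdot \chi_B.$$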

- For the set difference of $A, B \subseteq X$,

$$\chi_{A \setminus B} = \chi_A \cdot (1 - \chi_B).$$

- In particular, for the complement $A^{\mathsf{c}} = X \setminus A$ of a set $A \subseteq X$,

$$\chi_{A^{\mathsf{c}}} = 1 - \chi_A.$$
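The calculation rules for intersection, union, difference and complement can be verified pointwise on a small example. The basic set and the two subsets below are arbitrary illustrative choices, and `chi` is a hypothetical helper.

```python
def chi(T):
    """Indicator function of the set T (illustrative helper)."""
    return lambda x: 1 if x in T else 0


X = set(range(10))          # assumed basic set
A = {0, 1, 2, 3, 4}
B = {3, 4, 5, 6}


def rules_hold_at(x):
    """Check all four rules at the single point x."""
    a, b = chi(A)(x), chi(B)(x)
    return (
        chi(A & B)(x) == min(a, b) == a * b              # intersection
        and chi(A | B)(x) == max(a, b) == a + b - a * b  # union
        and chi(A - B)(x) == a * (1 - b)                 # difference
        and chi(X - A)(x) == 1 - a                       # complement
    )


rules_hold = all(rules_hold_at(x) for x in X)

# The set is recovered as the preimage of {1} under its indicator function.
recovered_A = {x for x in X if chi(A)(x) == 1}
```

A check on one example is of course not a proof; it only confirms that the identities behave as stated for these particular sets.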

Use to calculate expected value, variance and covariance

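For a given probability space $(\Omega, \mathcal{F}, P)$ and an event $A \in \mathcal{F}$, the indicator function $\chi_A \colon \Omega \to \mathbb{R}$ is a Bernoulli-distributed random variable. In particular, the expected value is

$$\operatorname{E}(\chi_A) = P(A)$$

and the variance is

$$\operatorname{Var}(\chi_A) = P(A)\,(1 - P(A)).$$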

The variance of $\chi_A$ thus attains its maximum value $\tfrac{1}{4}$ in the case $P(A) = \tfrac{1}{2}$.
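The formulas for the expected value and variance of an indicator variable can be reproduced exactly on a finite probability space. The sketch below assumes one fair die and the event "at least a five"; exact fractions avoid rounding issues.

```python
from fractions import Fraction

omega = range(1, 7)                         # outcomes of one fair die
P = {w: Fraction(1, 6) for w in omega}      # uniform probability measure
A = {5, 6}                                  # event "at least a five"


def chi_A(w):
    """Indicator variable of the event A."""
    return 1 if w in A else 0


p_A = sum(P[w] for w in A)
mean = sum(chi_A(w) * P[w] for w in omega)                  # expected value
var = sum((chi_A(w) - mean) ** 2 * P[w] for w in omega)     # variance

# p * (1 - p) over all probabilities k/6 that an event on this space can have.
max_var = max(Fraction(k, 6) * (1 - Fraction(k, 6)) for k in range(7))
```

Here the expected value equals the probability of the event, the variance equals the product of that probability and its complement, and the largest possible variance on this space is reached for events of probability one half.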

If additionally $B \in \mathcal{F}$ is a second event, then the covariance is

$$\operatorname{Cov}(\chi_A, \chi_B) = P(A \cap B) - P(A)\,P(B).$$

Two indicator variables are therefore uncorrelated if and only if the associated events are stochastically independent.
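The link between covariance and independence can be illustrated with two fair dice. In the sketch below the events and helper names are illustrative assumptions; the covariance is computed directly from the definition and then compared with the probability of the joint event minus the product of the individual probabilities.

```python
from fractions import Fraction
from itertools import product

omega = list(product(range(1, 7), repeat=2))     # two fair dice
p = Fraction(1, len(omega))                      # each outcome has probability 1/36


def prob(event):
    """Probability of an event given as a predicate on outcomes."""
    return p * sum(1 for w in omega if event(w))


def cov_indicators(A, B):
    """Covariance of two indicator variables, from E(XY) - E(X) E(Y)."""
    e_ab = p * sum(int(A(w)) * int(B(w)) for w in omega)
    e_a = p * sum(int(A(w)) for w in omega)
    e_b = p * sum(int(B(w)) for w in omega)
    return e_ab - e_a * e_b


def first_is_six(w):
    return w[0] == 6


def second_is_six(w):
    return w[1] == 6


def sum_is_twelve(w):
    return w[0] + w[1] == 12


cov_independent = cov_indicators(first_is_six, second_is_six)
cov_dependent = cov_indicators(first_is_six, sum_is_twelve)
```

The first pair of events is independent and has covariance zero; the second pair is dependent and has a nonzero covariance.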

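If $A_1, \dots, A_n \in \mathcal{F}$ are arbitrary events, then the random variable

$$N = \sum_{i=1}^{n} \chi_{A_i}$$

is the number of those events that have occurred. By the linearity of the expected value,

$$\operatorname{E}(N) = \sum_{i=1}^{n} P(A_i).$$

This formula also holds when the events are dependent. If the events are in addition pairwise independent, the Bienaymé equation gives the variance

$$\operatorname{Var}(N) = \sum_{i=1}^{n} P(A_i)\,(1 - P(A_i)).$$

In the general case, the variance can be determined using the formula

$$\operatorname{Var}(N) = \sum_{i=1}^{n} \sum_{j=1}^{n} \operatorname{Cov}(\chi_{A_i}, \chi_{A_j}) = \sum_{i=1}^{n} \sum_{j=1}^{n} \bigl(P(A_i \cap A_j) - P(A_i)\,P(A_j)\bigr).$$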
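A standard illustration of counting with dependent events, chosen here as an assumption and not taken from the text, is the number of fixed points of a uniformly random permutation. The sketch enumerates all permutations of four elements and compares the sum-of-probabilities and double-sum-of-covariances formulas with a direct computation.

```python
from fractions import Fraction
from itertools import permutations

n = 4
omega = list(permutations(range(n)))      # all n! permutations, equally likely
p = Fraction(1, len(omega))


def prob(event):
    """Probability of an event given as a predicate on permutations."""
    return p * sum(1 for w in omega if event(w))


def fixed(i):
    """Event A_i: position i is a fixed point of the permutation."""
    return lambda w: w[i] == i


# Expected count via linearity: sum of P(A_i).
mean = sum(prob(fixed(i)) for i in range(n))

# Variance via the double sum of P(A_i and A_j) - P(A_i) P(A_j).
var = sum(
    prob(lambda w, i=i, j=j: w[i] == i and w[j] == j)
    - prob(fixed(i)) * prob(fixed(j))
    for i in range(n)
    for j in range(n)
)

# What the formula for pairwise independent events would give (not valid here).
bienayme = sum(prob(fixed(i)) * (1 - prob(fixed(i))) for i in range(n))

# Direct computation from the distribution of the count, for comparison.
counts = [sum(1 for i in range(n) if w[i] == i) for w in omega]
mean_direct = p * sum(counts)
var_direct = p * sum(c * c for c in counts) - mean_direct ** 2
```

The events are dependent, so the expected value still follows from linearity, while the variance requires the general covariance formula; the value in `bienayme` differs from the true variance.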

Remarks

- The notation $1_T$ is also used for the identity relation or identity map and can therefore easily lead to confusion.