Text mining

Text mining, less commonly text data mining or textual data mining, is a set of algorithm-based analysis methods for discovering structures of meaning in unstructured or weakly structured text data. Using statistical and linguistic techniques, text mining software extracts structures from texts so that users can quickly identify and work with the key information in the processed texts. In the best case, text mining systems deliver information that the user did not previously know was contained in the processed texts at all. When used in a targeted manner, text mining tools can also generate hypotheses, test them and refine them step by step.

Concept

Text mining, introduced into research terminology in 1995 by Ronen Feldman and Ido Dagan as Knowledge Discovery from Text (KDT), is not a clearly defined term. By analogy with data mining in Knowledge Discovery in Databases (KDD), text mining is a largely automated process of knowledge discovery in textual data that is intended to enable the effective and efficient use of available text archives. More broadly, text mining can be seen as the process of compiling and organizing, formally structuring and algorithmically analyzing large document collections in order to extract information as needed and to discover hidden content-related relationships between texts and text fragments.

Typologies

The different views of text mining can be classified using various typologies. Information retrieval (IR), document clustering, text data mining and KDD are repeatedly named as subtypes of text mining.

In the case of IR, it is known in advance that the text data contain certain facts, which are to be found using suitable search queries. From the data mining perspective, text mining is understood as "data mining on textual data", that is, the exploration of data extracted from texts, which still needs to be interpreted. The most far-reaching type of text mining is KDT proper, in which new, previously unknown information is to be extracted from the texts.

Related procedures

Text mining is related to a number of other techniques, from which it can be distinguished as follows.

Text mining is most similar to data mining. It shares many methods with it, but not the subject matter: while data mining is mostly applied to highly structured data, text mining deals with much less structured text data. In text mining, the primary data are therefore given more structure in a first step so that they can then be analyzed with data mining methods. In contrast to most data mining tasks, multiple classifications are usually explicitly desired in text mining.

In addition, text mining draws on information retrieval methods, which are designed to find those text documents that are expected to be relevant for answering a search query. In contrast to data mining, information retrieval does not uncover possibly unknown structures of meaning in the text material as a whole; instead, it identifies a set of individual documents that are hoped to be relevant on the basis of known keywords.

Information extraction methods aim to extract individual facts from texts. Information extraction often uses the same or similar procedural steps as text mining, and it is therefore sometimes regarded as a branch of text mining. In contrast to many other types of text mining, at least the categories for which information is sought are known here: the user knows what they do not know.

Methods for the automatic summarization of texts (text extraction) produce a condensed version of a text or a text collection; in contrast to text mining, they do not go beyond what is explicitly present in the texts.

Argument mining can be seen as a continuation of text mining. The aim here is to extract argumentation structures.

Application areas

Web mining

Web mining, especially web content mining, is an important application area for text mining. Attempts to establish text mining as a method of social science content analysis are still relatively new; one example is sentiment detection, the automatic extraction of attitudes towards a topic.

Example

The Word of the Day website, a project of the University of Leipzig, shows what text mining methods can achieve. It displays which words are currently being used frequently on the web. The topicality of a term is derived from its current frequency compared with its average frequency over a longer period of time.
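The comparison of current and long-term frequency can be illustrated with a simple ratio. This is only a minimal sketch: the function name `topicality`, the plain frequency ratio and all counts are assumptions made for illustration, since the exact scoring used by the project is not described here.

```python
def topicality(count_today, total_today, count_reference, total_reference):
    """Ratio of today's relative frequency to the long-term relative frequency."""
    rel_today = count_today / total_today
    rel_reference = count_reference / total_reference
    if rel_reference == 0:
        return float("inf")  # term never occurred in the reference period
    return rel_today / rel_reference

# Hypothetical counts: a term seen 50 times in 100,000 words today,
# versus 2,000 times in 40,000,000 words over the reference period.
score = topicality(50, 100_000, 2_000, 40_000_000)
print(round(score, 2))
```

A score well above 1 would mark a term as unusually frequent today; a real system would also have to smooth rare terms, which this sketch does not attempt.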

Methodology

Text mining proceeds in several standard steps. First, suitable data material is selected. In a second step, these data are prepared so that they can then be analyzed using various methods. Finally, the presentation of the results is an unusually important part of the process. All steps are supported by software.

Data material

Text mining is applied to a (usually very large) set of text documents that share certain similarities in terms of size, language and subject matter. In practice, these data mostly come from extensive text databases such as PubMed or LexisNexis. The analyzed documents are unstructured in the sense that they do not have a uniform data structure, which is why they are also described as "free format". Nevertheless, they have semantic, syntactic, often also typographical and, more rarely, markup-specific structural features that text mining techniques exploit; one therefore speaks of weakly structured or semi-structured text data. The documents to be analyzed mostly originate from a particular universe of discourse (domain), which can be more clearly (e.g. genome analysis) or less clearly (e.g. sociology) delimited.

Data preparation

The actual text mining requires computational linguistic processing of the documents. This is typically based on the following steps, which can only be partially automated.

First, the documents are converted into a standardized format, nowadays mostly XML.

For text representation, the documents are then usually tokenized on the basis of characters, words, terms and/or so-called concepts. Across these units, semantic significance increases, but so does the complexity of operationalizing them, which is why hybrid methods are often used for tokenization.

Subsequently, words in most languages have to be lemmatized, i.e. reduced to their basic morphological form, for example the infinitive in the case of verbs. This is done by stemming.
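The tokenization and reduction steps can be sketched as follows. This is a toy illustration under stated assumptions: the function names, the regular expression and the suffix list are hypothetical, and the crude suffix stripping shown here is far simpler than the stemmers and lemmatizers used in practice.

```python
import re

def word_tokens(text):
    """Word-level tokens: lowercase runs of ASCII letters."""
    return re.findall(r"[a-z]+", text.lower())

def char_ngrams(text, n=3):
    """Character-level tokens: overlapping n-grams of the raw text."""
    return [text[i:i + n] for i in range(len(text) - n + 1)]

SUFFIXES = ("ing", "ed", "es", "s")  # toy list, not a real stemmer's rule set

def toy_stem(word):
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

tokens = word_tokens("Mining texts reveals hidden structures.")
stems = [toy_stem(t) for t in tokens]
print(tokens)
print(stems)
```

The stems produced this way (for example "structur") are not dictionary words, which illustrates why stemming only approximates a true reduction to the basic morphological form.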

Dictionaries

Digital dictionaries are needed to solve some of these problems. A stop word dictionary removes from the data to be analyzed those words that are expected to have little or no predictive power, as is often the case with articles such as “the” or “an”. To identify stop words, lists of the most frequently occurring words in the text corpus are often compiled; in addition to stop words, these lists usually also contain most of the domain-specific expressions, for which dictionaries are normally created as well. The important problems of polysemy (one word having several meanings) and synonymy (different words having the same meaning) are also addressed by means of dictionaries. Thesauri, often domain-specific, that mitigate the synonymy problem are increasingly being generated automatically from large corpora.
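The frequency-list approach to stop words can be sketched as follows. The function names and the tiny corpus are hypothetical, and the manual correction step is an assumption about how such a list might be curated; the example is meant only to show why a raw frequency list also picks up domain-specific terms.

```python
from collections import Counter

def frequency_stop_list(documents, top_n):
    """Return the top_n most frequent tokens of the corpus as stop word candidates."""
    counts = Counter(token for doc in documents for token in doc)
    return {token for token, _ in counts.most_common(top_n)}

def remove_stop_words(tokens, stop_words):
    """Drop every token that appears in the stop word set."""
    return [t for t in tokens if t not in stop_words]

docs = [
    ["the", "gene", "regulates", "the", "protein"],
    ["the", "protein", "binds", "the", "receptor"],
]
candidates = frequency_stop_list(docs, top_n=2)

# "protein" is frequent but domain-specific, so it is kept out of the stop list
# (an assumed manual curation step).
stop_words = candidates - {"protein"}
filtered = remove_stop_words(docs[0], stop_words)
print(sorted(candidates), filtered)
```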

Depending on the type of analysis, words and phrases may also be classified linguistically by means of part-of-speech tagging, although this is often not necessary for text mining.

- Pronouns (he, she) must be assigned to the preceding or following noun phrases (Goethe, the police officers) to which they refer (anaphora resolution).

- Proper names for persons, places, companies, states etc. have to be recognized, since they play a different role in constituting the meaning of a text than generic nouns.

- Ambiguity of words and phrases is resolved by assigning exactly one meaning to each word and phrase (word sense disambiguation).

- Some words and (parts of) sentences can be assigned to a subject area (term extraction).

In order to be able to better determine the semantics of the analyzed text data, subject-specific knowledge is usually used.

Analytical method

On the basis of these partially structured data, the actual text mining methods can be applied; they rest primarily on the discovery of co-occurrences, ideally between concepts. These methods are intended to:

- make explicit the information that is only implicitly present in texts,

- make visible the relationships between pieces of information contained in different texts.

The core operations of most methods are the identification of (conditional) distributions, frequent sets and dependencies. Machine learning, in both its supervised and unsupervised variants, plays a major role in the development of such methods.
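Counting co-occurrences and deriving a conditional distribution from them can be sketched as follows. The example works on plain terms rather than concepts, treats a whole document as the co-occurrence window and uses a made-up corpus; all of these are simplifying assumptions.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_counts(documents):
    """For each unordered pair of terms, count the documents that contain both."""
    counts = Counter()
    for doc in documents:
        terms = sorted(set(doc))  # each term counts once per document
        counts.update(combinations(terms, 2))
    return counts

docs = [
    ["gene", "protein", "binding"],
    ["gene", "protein"],
    ["protein", "receptor"],
]
pairs = cooccurrence_counts(docs)
doc_freq = Counter(term for doc in docs for term in set(doc))

# Estimated conditional distribution: share of documents containing "gene"
# that also contain "protein".
p_protein_given_gene = pairs[("gene", "protein")] / doc_freq["gene"]
print(pairs[("gene", "protein")], p_protein_given_gene)
```

Because the pairs are built from sorted terms, each pair has to be looked up in alphabetical order, as in `("gene", "protein")`.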

Clustering methods

In addition to the traditionally most widespread cluster analysis methods, k-means and hierarchical clustering, self-organizing maps are also used for clustering. In addition, more and more methods make use of fuzzy logic.

k-means cluster analysis

In text mining, clusters are very often formed using k-means. The algorithm aims to minimize the sum of the Euclidean distances within the clusters, taken over all clusters. The main problem here is determining the number of clusters k to be found, a parameter that the analyst must set with the aid of prior knowledge. Such algorithms are very efficient, but they may find only local optima.
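A minimal sketch of the procedure, assuming documents have already been turned into numeric vectors. It uses Lloyd's iteration with the first k points as initial centers, which is a deliberately naive choice made for reproducibility; the function name and the sample vectors are hypothetical.

```python
import math

def kmeans(points, k, iterations=100):
    """Lloyd's algorithm on a list of equal-length numeric tuples."""
    centers = [list(p) for p in points[:k]]  # naive initialization: first k points
    assignment = None
    for _ in range(iterations):
        # assignment step: each point goes to its nearest center
        new_assignment = [
            min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points
        ]
        if new_assignment == assignment:
            break  # converged, possibly only to a local optimum
        assignment = new_assignment
        # update step: each center moves to the mean of its members
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:  # keep the old center if a cluster becomes empty
                centers[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return assignment, centers

# Hypothetical two-dimensional document vectors
points = [(0.0, 0.1), (0.2, 0.0), (5.0, 5.1), (5.2, 4.9)]
labels, centers = kmeans(points, k=2)
print(labels, centers)
```

The result depends on the initial centers, which is where the local optima mentioned above come from; practical implementations usually restart from several initializations.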

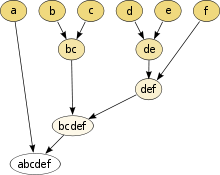

Hierarchical cluster analysis

In the likewise popular hierarchical cluster analysis, documents are grouped according to their similarity in a hierarchical cluster tree (see illustration). This method is significantly more computationally intensive than k-means clustering. In theory, one can proceed either by dividing the set of documents in successive steps or by first treating each document as a separate cluster and then merging the most similar clusters step by step. In practice, however, usually only the latter procedure leads to meaningful results. In addition to the runtime problems, another weakness is that good results require background knowledge about the cluster structure to be expected. As with all other clustering methods, it is ultimately the human analyst who has to decide whether the clusters found reflect structures of meaning.
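The bottom-up variant can be sketched as follows. Single linkage (the distance between the two closest members) is an assumption chosen for brevity, since no linkage criterion is specified here, and the function name and sample data are hypothetical.

```python
import math

def agglomerative(points, target_clusters):
    """Bottom-up clustering with single linkage; clusters are lists of point indices."""
    clusters = [[i] for i in range(len(points))]  # every point starts as its own cluster

    def linkage(a, b):
        # single linkage: distance between the two closest members
        return min(math.dist(points[i], points[j]) for i in a for j in b)

    merges = []  # records the merge order, i.e. the cluster tree from the leaves up
    while len(clusters) > target_clusters:
        # find the most similar (closest) pair of clusters
        i, j = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda pair: linkage(clusters[pair[0]], clusters[pair[1]]),
        )
        merges.append((clusters[i], clusters[j]))
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return clusters, merges

# Hypothetical one-dimensional document vectors
points = [(0.0,), (0.1,), (1.0,), (5.0,)]
clusters, merges = agglomerative(points, target_clusters=2)
print(clusters, merges)
```

Every merge compares all remaining pairs of clusters, which makes this naive version far more expensive than k-means on large collections.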

Self-organizing maps

The self-organizing map approach, first developed by Teuvo Kohonen in 1982, is another widespread concept for clustering in text mining. Artificial neural networks (usually two-dimensional) are created. These have an input layer, in which each text document to be classified is represented as a multidimensional vector and is assigned a neuron as its center, and an output layer, in which the neurons are activated in the order given by the chosen distance measure.
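A single training step of such a map can be sketched as follows. This is a simplified illustration rather than the full algorithm: it assumes a one-dimensional row of neurons instead of the usual two-dimensional grid, a fixed learning rate and a fixed neighbourhood radius, and the function name and all values are hypothetical.

```python
import math

def som_step(weights, x, learning_rate=0.5, radius=1):
    """One update on a 1-D row of neurons; weights is a list of weight vectors."""
    # best matching unit: the neuron whose weight vector is closest to the input
    bmu = min(range(len(weights)), key=lambda i: math.dist(weights[i], x))
    for i, w in enumerate(weights):
        if abs(i - bmu) <= radius:  # neuron lies in the grid neighbourhood of the BMU
            weights[i] = [wi + learning_rate * (xi - wi) for wi, xi in zip(w, x)]
    return bmu

weights = [[0.0, 0.0], [1.0, 1.0], [4.0, 4.0], [9.0, 9.0]]
bmu = som_step(weights, [1.0, 2.0])
print(bmu, weights)
```

The winning neuron and its grid neighbours move towards the input vector while distant neurons stay unchanged, so that similar documents end up activating nearby neurons.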

Fuzzy clustering

Clustering algorithms based on fuzzy logic are also used, since many linguistic entities, deictic ones in particular, can only be adequately decoded by a human reader, which introduces an inherent uncertainty into algorithmic processing. Because they take this into account, fuzzy clustering methods generally deliver above-average results. Typically, fuzzy c-means is used. Other applications of this kind rely on coreference cluster graphs.
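What distinguishes fuzzy c-means from k-means is that each document receives a degree of membership in every cluster. The sketch below shows only the standard membership update for fixed cluster centers, not the full iterative algorithm; the fuzzifier value m = 2 and the sample data are assumptions.

```python
import math

def fuzzy_memberships(point, centers, m=2.0):
    """Membership degrees of one point in each cluster (fuzzy c-means update rule)."""
    distances = [math.dist(point, c) for c in centers]
    if 0.0 in distances:
        # the point coincides with a center: full membership there
        hits = [1.0 if d == 0.0 else 0.0 for d in distances]
        return [h / sum(hits) for h in hits]
    exponent = 2.0 / (m - 1.0)
    return [
        1.0 / sum((d_i / d_k) ** exponent for d_k in distances)
        for d_i in distances
    ]

centers = [(0.0, 0.0), (4.0, 0.0)]
u = fuzzy_memberships((1.0, 0.0), centers)
print(u)
```

The memberships of a point always sum to 1, and a larger fuzzifier m makes the assignment softer.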

Vector method

A large number of text mining methods are vector-based. Typically, the terms occurring in the examined documents are represented in a two-dimensional t × d matrix, where t is the number of terms and d the number of documents. The value of the element in row i and column j is determined by the frequency of term i in document j; the frequency is often transformed, usually by normalizing the column vectors of the matrix, i.e. dividing each by its length. The resulting high-dimensional vector space is then mapped onto a vector space of significantly lower dimension. Since 1990, Latent Semantic Analysis (LSA), which traditionally uses singular value decomposition, has played an increasingly important role. Probabilistic Latent Semantic Analysis (PLSA) is a more statistically formalized approach that is based on latent class analysis and uses an EM algorithm to estimate the latent class probabilities.
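Building the term-document matrix and normalizing its columns can be sketched as follows. The function names and the two sample documents are hypothetical, raw counts are used as the frequency measure, and the subsequent dimensionality reduction (for example by singular value decomposition) is not shown.

```python
import math

def term_document_matrix(documents):
    """Return (terms, matrix) where matrix[i][j] is the count of term i in document j."""
    terms = sorted({t for doc in documents for t in doc})
    matrix = [[doc.count(term) for doc in documents] for term in terms]
    return terms, matrix

def normalize_columns(matrix):
    """Divide each document column by its Euclidean length."""
    n_docs = len(matrix[0])
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_docs)]
    return [
        [row[j] / norms[j] if norms[j] else 0.0 for j in range(n_docs)]
        for row in matrix
    ]

docs = [["gene", "protein", "gene"], ["protein", "receptor"]]
terms, matrix = term_document_matrix(docs)
normalized = normalize_columns(matrix)
print(terms)
print(matrix)
```

After normalization every document column has length 1, so long and short documents become comparable.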

Algorithms based on LSA are, however, very computationally intensive: an ordinary desktop computer from 2004 can hardly analyze more than a few hundred thousand documents. Vector space methods based on covariance analysis achieve slightly worse results than LSA but are less computationally intensive.

Evaluating the relationships between documents by means of such reduced matrices makes it possible to identify documents that relate to the same subject even though their wording differs. Evaluating the relationships between terms in this matrix makes it possible to establish associative relationships between terms, which often correspond to semantic relationships and can be represented in an ontology.
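One common way to evaluate such relationships is the cosine of the angle between two vectors. The sketch below assumes that the dimensionality reduction has already been carried out; the two-dimensional document vectors are made up for illustration, and cosine similarity is a swapped-in choice, since no specific measure is named here.

```python
import math

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Hypothetical document vectors in a reduced two-dimensional space
reduced_docs = {"doc_a": (0.9, 0.1), "doc_b": (0.8, 0.2), "doc_c": (0.1, 0.9)}

sim_ab = cosine(reduced_docs["doc_a"], reduced_docs["doc_b"])
sim_ac = cosine(reduced_docs["doc_a"], reduced_docs["doc_c"])
print(round(sim_ab, 3), round(sim_ac, 3))
```

The same function can be applied to the term vectors (rows) to look for associated terms.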

Results presentation

An unusually important and complex part of text mining is the presentation of the results. This includes tools for browsing as well as for visualizing the results. The results are often presented on two-dimensional maps.

Software

A number of text mining application programs exist; these are often specialized in particular areas of knowledge. From a technical point of view, a distinction can be made between pure text miners, extensions to existing software (for example for data mining or content analysis) and programs that cover only individual steps or sub-areas of text mining.

Pure text miners

Generic applications

- Megaputer TextAnalyst / PolyAnalyst

- Leximancer

- ClearForest Text Analytics Suite

- IBM's WebFountain (no longer being developed)

Domain-specific applications

- GeneWays: GeneWays, developed at Columbia University, also covers all procedural steps of text mining but, unlike the programs distributed by ClearForest, relies much more heavily on domain-specific knowledge. The program is thematically limited to genetic research and devotes most of its tools to data preparation and fewer to text mining and the presentation of results.

- Patent Researcher

Extensions to existing software suites

- Text mining module tm for R

- Text processing module for KNIME

- Text Analytics Toolbox for MATLAB offers algorithms and visualizations for preprocessing, analyzing and modeling text data.

- RapidMiner

- ELKI contains numerous cluster analysis methods.

- NClassifier

- WordStat: The WordStat software module offered by Provalis Research is the only text mining program that is linked both to a statistical application (Simstat) and to software for computer-assisted qualitative data analysis (QDA Miner). The program is therefore particularly suitable for triangulating qualitative social science methods with quantitatively oriented text mining. It offers a range of cluster algorithms (hierarchical clustering and multidimensional scaling) as well as a visualization of the cluster results.

- SPSS Clementine includes computational linguistic methods for information extraction, for dictionary creation, and for lemmatization for different languages.

- SAS Text Miner: In addition to the SAS Enterprise Miner, the SAS Institute offers the add-on program SAS Text Miner, which provides a range of text clustering algorithms.

Partial providers

Link analysis

Literature

- Gerhard Heyer, Uwe Quasthoff, Thomas Wittig: Text Mining: Text as a resource for knowledge - concepts, algorithms, results. W3L Verlag, Herdecke / Bochum 2006, ISBN 3-937137-30-0.

- Alexander Mehler, Christian Wolff: Introduction: Perspectives and Positions of Text Mining. In: Journal for Computational Linguistics and Language Technology. Volume 20, Issue 1, Regensburg 2005, pp. 1-18.

- Alexander Mehler: Text mining. In: Lothar Lemnitzer, Henning Lobin (Eds.): Text Technologie. Perspectives and Applications. Stauffenburg, Tübingen 2004, ISBN 3-86057-287-3, pp. 329-352.

- Jürgen Franke, Gholamreza Nakhaeizadeh, Ingrid Renz (Eds.): Text Mining - Theoretical Aspects and Applications. Physica, Berlin 2003.

- Ronen Feldman, James Sanger: The Text Mining Handbook: Advanced Approaches in Analyzing Unstructured Data. Cambridge University Press, 2006, ISBN 0-521-83657-3.

- Bastian Buch: Text Mining for the automatic extraction of knowledge from unstructured text documents. VDM, 2008, ISBN 3-8364-9550-3.

- Matthias Lemke, Gregor Wiedemann (Eds.): Text mining in the social sciences. Basics and applications between qualitative and quantitative discourse analysis. Springer VS, Wiesbaden 2016, ISBN 978-3-658-07223-0.

Web links

- Untangling Text Data Mining by Marti A. Hearst, published in the Proceedings of ACL'99: the 37th Annual Meeting of the Association for Computational Linguistics, University of Maryland, June 20-26, 1999

- GSCL Symposium "Language Technology and eHumanities", 02/26/2009 - 02/27/2009, proceedings (PDF; 6.5 MB)

- National Center for Text Mining (NaCTeM) at the University of Manchester

References

- Ronen Feldman, Ido Dagan: Knowledge Discovery in Texts. In: First International Conference on Knowledge Discovery (KDD). pp. 112–117, accessed January 27, 2015.

- Andreas Hotho, Andreas Nürnberger, Gerhard Paaß: A Brief Survey of Text Mining. (PDF) In: Journal for Computational Linguistics and Language Technology. 20, No. 1, 2005. Retrieved November 11, 2011.

- Alexander Mehler, Christian Wolff: Introduction: Perspectives and Positions of Text Mining. (PDF; archived from the original on April 2, 2015) In: Journal for Computational Linguistics and Language Technology. 20, No. 1, 2005. Retrieved November 11, 2011.

- Sholom M. Weiss, Nitin Indurkhya, Tong Zhang, Fred J. Damerau: Text Mining: Predictive Methods for Analyzing Unstructured Information. Springer, New York, NY 2005, ISBN 0-387-95433-3.

- John Atkinson: Evolving Explanatory Novel Patterns for Semantically-Based Text Mining. In: Anne Kao, Steve Poteet (Eds.): Natural Language Processing and Text Mining. Springer, London, UK 2007, ISBN 978-1-84628-754-1, pp. 145-169, p. 146.

- Max Bramer: Principles of Data Mining. Springer, London, UK 2007, ISBN 978-1-84628-765-7.

- E.g. Fabrizio Sebastiani: Machine learning in automated text categorization. (PDF) In: ACM Computing Surveys. 34, No. 1, 2002, pp. 1-47, p. 2.

- Anne Kao, Steve Poteet, Jason Wu, William Ferng, Rod Tjoelker, Lesley Quach: Latent Semantic Analysis and Beyond. In: Min Song, Yi-Fang Brooke Wu (Eds.): Handbook of Research on Text and Web Mining Technologies. Information Science Reference, Hershey, PA 2009, ISBN 978-1-59904-990-8.

- Source: Word of the Day (archived copy of the original from September 19, 2016 in the Internet Archive).

- Ronen Feldman, James Sanger: The Text Mining Handbook: Advanced Approaches in Analyzing Unstructured Data. Cambridge University Press, New York, NY 2007, ISBN 978-0-511-33507-5.

- Scott Deerwester, Susan T. Dumais, George W. Furnas, Thomas K. Landauer: Indexing by latent semantic analysis. In: Journal of the American Society for Information Science. 41, No. 6, 1990, pp. 391-407, pp. 391f. doi: 10.1002/(SICI)1097-4571(199009)41:6<391::AID-ASI1>3.0.CO;2-9.

- Pierre Senellart, Vincent D. Blondel: Automatic Discovery of Similar Words. In: Michael W. Berry, Malu Castellanos (Eds.): Survey of Text Mining II: Clustering, Classification and Retrieval. Springer, London, UK 2008, ISBN 978-0-387-95563-6, pp. 25-44.

- Joydeep Ghosh, Alexander Liu: k-Means. In: Xindong Wu, Vipin Kumar (Eds.): The Top Ten Algorithms in Data Mining. CRC Press, New York, NY 2005, ISBN 0-387-95433-3, pp. 21-37, pp. 23f.

- Roger Bilisoly: Practical Text Mining with Perl. John Wiley & Sons, Hoboken, NJ 2008, ISBN 978-0-470-17643-6, p. 235.

- Abdelmalek Amine, Zakaria Elberrichi, Michel Simonet, Ladjel Bellatreche, Mimoun Malki: SOM-Based Clustering of Textual Documents Using WordNet. In: Min Song, Yi-Fang Brooke Wu (Eds.): Handbook of Research on Text and Web Mining Technologies. Information Science Reference, Hershey, PA 2009, ISBN 978-1-59904-990-8, pp. 189-200, p. 195.

- René Witte, Sabine Bergler: Fuzzy Clustering for Topic Analysis and Summarization of Document Collections. In: Advances in Artificial Intelligence. 4509, 2007. doi: 10.1007/978-3-540-72665-4_41.

- Hichem Frigui, Olfa Nasraoui: Simultaneous Clustering and Dynamic Keyword Weighting for Text Documents. In: Michael W. Berry (Ed.): Survey of Text Mining: Clustering, Classification and Retrieval. Springer, New York, NY 2004, ISBN 978-0-387-95563-6.

- Mei Kobayashi, Masaki Aono: Vector Space Models for Search and Cluster Mining. In: Michael W. Berry (Ed.): Survey of Text Mining: Clustering, Classification and Retrieval. Springer, New York, NY 2004, ISBN 978-0-387-95563-6, pp. 103-122, pp. 108f.

- Alessandro Zanasi: Text Mining Tools. In: Alessandro Zanasi (Ed.): Text Mining and its Applications to Intelligence, CRM and Knowledge Management. WIT Press, Southampton & Billerica, MA 2005, ISBN 978-1-84564-131-3, pp. 315-327, p. 315.

- Richard Segall, Qingyu Zhang: A Survey of Selected Software Technologies for Text Mining. In: Min Song, Yi-Fang Brooke Wu (Eds.): Handbook of Research on Text and Web Mining Technologies. Information Science Reference, Hershey, PA 2009, ISBN 978-1-59904-990-8.