Option (economy)

In economics, an option is the right to buy or sell a certain thing at a later date at an agreed price. Options are also known as conditional forward transactions and thus belong to the group of derivatives. It is expressly a right and not an obligation: the option holder, who has bought the option at a certain price (the premium) from the option writer (option seller), decides unilaterally whether to exercise the option against the writer or to let it expire.

Overview

The standard ("plain vanilla") forms of the option are the call option (the right to buy) and the put option (the right to sell). The buyer of the option has the right, but not the obligation, to buy or sell a certain amount of the underlying asset at a predetermined exercise price (strike) at certain exercise times. The seller of the option (also called the writer) receives the purchase price of the option. If the option is exercised, the writer is obliged to sell or buy the underlying asset at the previously agreed price.

As with all forward transactions, there are two types of settlement: physical delivery and cash settlement. If physical delivery has been agreed, one counterparty (the holder for a put option, the writer for a call option) delivers the underlying, and the other counterparty pays the exercise price as the purchase price. With cash settlement, the writer pays the option holder the difference between the exercise price and the market price of the underlying on the exercise date. The reverse case, in which the holder pays the writer, cannot normally occur, because in that case the holder does not exercise the option. Apart from transaction, storage and delivery costs, the economic benefit for the holder is the same in both cases.
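The claim that both settlement types give the holder the same economic benefit can be checked with a small Python sketch. It assumes the underlying can be bought or sold at its market price on the exercise date and ignores transaction, storage and delivery costs; the function names are hypothetical.

```python
def cash_settlement(kind: str, strike: float, price_at_exercise: float) -> float:
    """Amount the writer pays the holder under cash settlement (never negative)."""
    if kind == "call":
        return max(price_at_exercise - strike, 0.0)
    if kind == "put":
        return max(strike - price_at_exercise, 0.0)
    raise ValueError(f"unknown option kind: {kind!r}")


def physical_delivery_gain(kind: str, strike: float, price_at_exercise: float) -> float:
    """Holder's net gain from physical delivery, valuing the underlying at its market price.

    The holder only exercises when this gain is positive; otherwise the option lapses.
    """
    if kind == "call":
        # Holder pays the strike and receives an underlying worth price_at_exercise.
        gain = price_at_exercise - strike
    elif kind == "put":
        # Holder delivers an underlying worth price_at_exercise and receives the strike.
        gain = strike - price_at_exercise
    else:
        raise ValueError(f"unknown option kind: {kind!r}")
    return gain if gain > 0 else 0.0  # letting the option lapse is worth 0


for kind in ("call", "put"):
    for price in (80.0, 100.0, 120.0):
        print(kind, price, cash_settlement(kind, 100.0, price),
              physical_delivery_gain(kind, 100.0, price))
```

For every combination, the two functions return the same amount, which is the point made in the text.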

Option types

In addition to the standard options, there are also exotic options, whose payout profile does not depend only on the difference between the price of the underlying and the exercise price.

Exercise types

Options are distinguished by the times at which they can be exercised:

- European option: the option can only be exercised on the expiry date;

- American option: the option can be exercised on any trading day up to expiry;

- Bermudan option: the option can be exercised at one of several predetermined times.
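The three exercise styles can be expressed as a single rule about which days exercise is allowed. The following Python sketch is illustrative only: the function name is hypothetical, every calendar day up to expiry is treated as a trading day, and expiry is assumed to be one of the permitted Bermudan dates.

```python
from datetime import date


def may_exercise(style: str, day: date, expiry: date,
                 bermudan_dates: frozenset = frozenset()) -> bool:
    """Return True if an option with the given exercise style may be exercised on `day`.

    Simplifications (assumptions): every calendar day up to expiry counts as a
    trading day, and expiry itself is always a permitted Bermudan date.
    """
    if day > expiry:
        return False                      # the option has already expired
    if style == "european":
        return day == expiry              # only on the expiry date
    if style == "american":
        return True                       # any day up to and including expiry
    if style == "bermudan":
        return day == expiry or day in bermudan_dates
    raise ValueError(f"unknown exercise style: {style!r}")


expiry = date(2025, 12, 19)               # hypothetical expiry date
quarterly = frozenset({date(2025, 3, 21), date(2025, 6, 20), date(2025, 9, 19)})
for style in ("european", "american", "bermudan"):
    print(style, may_exercise(style, date(2025, 6, 20), expiry, quarterly))
```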

According to his own account, the terms "American" and "European" option go back to the economist Paul Samuelson.

Black-Scholes model

In 1973, Fischer Black and Myron Scholes and, almost simultaneously, Robert C. Merton published methods for determining the "true" value of an option in two independent articles. In 1997, Scholes and Merton received the Sveriges Riksbank Prize in Economic Sciences in Memory of Alfred Nobel, often referred to as the Nobel Prize in Economics, "for a new method to determine the value of derivatives", the Black-Scholes model.

Trading

Most options trading worldwide takes place on derivatives exchanges such as the Chicago Board Options Exchange in the USA or Eurex in Europe (exchange-traded options). What is traded there are standardized contracts with fixed underlyings, expiry dates and exercise prices. The standardization is intended to increase the liquidity of the options: it makes it easier for market participants to close out their option positions before expiry by reselling or buying back the contracts. The range of options contracts on a derivatives exchange is usually coordinated with its range of futures contracts.

An option can also be concluded as an individual contract between the option holder and the writer (OTC option). Since the contract is negotiated bilaterally, it can in principle be designed freely. Unless they are offered to retail investors as warrants, exotic options are always OTC options. The advantage of greater flexibility is offset by the disadvantage of lower tradability: once a position has been entered, it can only be closed early by negotiating with the counterparty. Alternatively, the risk can be hedged with offsetting transactions on similar or identical terms.

Finally, options can also be issued as securities (warrants). Like other securities, these can be resold by the option buyer. Warrants can also be designed freely, but the issuer must find buyers for the specific design.

Underlyings

Options on the following underlyings can be traded on the financial markets:

- Shares

- Indices

- Currencies

- Bonds

- Swaps (so-called swaptions)

- Exchange-traded funds (ETFs)

- Raw materials

- Food

- Electricity

- Weather

- Other options

Regulated trading in options requires that the underlyings are traded on liquid markets, so that the value of the option can be determined at any time. In principle, however, any underlying can be chosen, as long as the variables described in the section on sensitivities and key figures can be determined. Such derivatives, however, are only offered over the counter (OTC) by approved dealers such as investment banks or brokers.

Moneyness

Moneyness is a parameter for options that describes where the current price of the underlying lies relative to the strike price.

In the money

An option is in the money if it has intrinsic value.

- A call option is in the money if the market price of the underlying is greater than the exercise price.

- A put option is in the money if the market price of the underlying is less than the exercise price.

Out of the money

An option is out of the money if it has no intrinsic value.

- A call option is out of the money if the market price of the underlying is lower than the exercise price.

- A put option is out of the money if the market price of the underlying is greater than the strike price.

At the money

An option is at the money if the market price of the underlying is equal or nearly equal to the exercise price. Depending on the convention, the market price of the underlying can be taken as the spot price or as the forward price for the end of the option's term. The terms at the money spot (for the spot price) and at the money forward (for the forward price) distinguish these two conventions.
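The three moneyness cases can be summarized in one classification function. In this Python sketch, the function name and the relative `atm_tolerance` band used to express "nearly equal" are assumptions, not a market standard; passing the forward instead of the spot price gives the "at the money forward" variant.

```python
def moneyness(kind: str, underlying_price: float, strike: float,
              atm_tolerance: float = 0.0) -> str:
    """Classify an option as "ITM", "ATM" or "OTM".

    atm_tolerance is a hypothetical relative band (0.01 = 1 % of the strike)
    within which the underlying counts as "nearly equal" to the strike.
    underlying_price may be the spot price or the forward price.
    """
    if abs(underlying_price - strike) <= atm_tolerance * strike:
        return "ATM"
    if kind == "call":
        return "ITM" if underlying_price > strike else "OTM"
    if kind == "put":
        return "ITM" if underlying_price < strike else "OTM"
    raise ValueError(f"unknown option kind: {kind!r}")


print(moneyness("call", 110.0, 100.0))        # ITM
print(moneyness("put", 110.0, 100.0))         # OTM
print(moneyness("call", 100.5, 100.0, 0.01))  # ATM (within 1 % of the strike)
```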

Sensitivities and key figures - the so-called "Greeks"

Delta

Sensitivity figure that indicates how the price of the underlying asset affects the value of the option. Mathematically, delta is the first derivative of the option price with respect to the price of the underlying asset. A delta of 0.5 means that a change in the underlying of €1 results (in a linear approximation) in a change in the option price of 50 cents. Delta is important in so-called delta hedging.
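Because delta is a derivative, it can be estimated numerically by bumping the underlying price up and down. The following Python sketch assumes the Black-Scholes model for a European call without dividends (described further below) and uses hypothetical inputs; it also compares the linear (delta) prediction for a €1 move with the actual change in model price.

```python
import math


def norm_cdf(x: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(s: float, k: float, r: float, sigma: float, t: float) -> float:
    """Black-Scholes value of a European call on a non-dividend-paying underlying."""
    d1 = (math.log(s / k) + (r + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return s * norm_cdf(d1) - k * math.exp(-r * t) * norm_cdf(d2)


def delta_fd(s: float, h: float = 0.01, **params: float) -> float:
    """Central finite-difference estimate of d(call price)/d(underlying price)."""
    return (bs_call(s + h, **params) - bs_call(s - h, **params)) / (2.0 * h)


params = {"k": 100.0, "r": 0.0, "sigma": 0.2, "t": 1.0}  # hypothetical inputs
delta = delta_fd(100.0, **params)                 # about 0.54 here
predicted = delta * 1.0                           # linear approximation for a +1 move
actual = bs_call(101.0, **params) - bs_call(100.0, **params)
print(f"delta={delta:.4f} predicted={predicted:.4f} actual={actual:.4f}")
```

The small gap between `predicted` and `actual` comes from the curvature of the price function, which is what gamma measures.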

Gamma

The gamma of an option indicates how much its delta changes (in a linear approximation) if the price of the underlying changes by one unit and all other values remain unchanged. Mathematically, gamma is the second derivative of the option price with respect to the price of the underlying asset. For the holder of the option (i.e. for both long calls and long puts), gamma ≥ 0 always holds. This key figure is also used in hedging strategies in the form of gamma hedging.

Theta

The theta of an option indicates how much the theoretical value of the option changes if the remaining term shortens by one day and all other values remain constant. For the holder of the option, theta is usually negative, so a shorter remaining term usually means a lower theoretical value.

Vega

The vega of an option (sometimes also called lambda or kappa, since vega is not a letter of the Greek alphabet) indicates how much the value of the option changes if the volatility of the underlying changes by one percentage point and all other parameters remain constant.

Rho

The rho of an option indicates how much the value of the option changes if the risk-free interest rate in the market changes by one percentage point. The rho is positive for call options and negative for put options.
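The remaining Greeks can be estimated the same way, by bumping one input at a time and keeping the others constant. This Python sketch again assumes the Black-Scholes model without dividends and hypothetical inputs. It uses the scalings given in the text: theta per one day (a 365-day year is assumed), and vega and rho per percentage point.

```python
import math


def norm_cdf(x: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_price(kind: str, s: float, k: float, r: float, sigma: float, t: float) -> float:
    """Black-Scholes value of a European call or put (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / k) + (r + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    if kind == "call":
        return s * norm_cdf(d1) - k * math.exp(-r * t) * norm_cdf(d2)
    if kind == "put":
        return k * math.exp(-r * t) * norm_cdf(-d2) - s * norm_cdf(-d1)
    raise ValueError(f"unknown option kind: {kind!r}")


def greeks_fd(kind: str, s: float, k: float, r: float, sigma: float, t: float) -> dict:
    """Finite-difference gamma, theta, vega and rho, scaled as described in the text."""
    base = {"s": s, "k": k, "r": r, "sigma": sigma, "t": t}

    def price(**bumped: float) -> float:
        # Re-price with some inputs replaced, all other inputs unchanged.
        return bs_price(kind, **{**base, **bumped})

    h = 0.01 * s                                  # bump of 1 % of the underlying price
    gamma = (price(s=s + h) - 2.0 * price() + price(s=s - h)) / h**2
    theta = price(t=t - 1.0 / 365.0) - price()    # one calendar day less to expiry
    vega = price(sigma=sigma + 0.01) - price()    # volatility +1 percentage point
    rho = price(r=r + 0.01) - price()             # interest rate +1 percentage point
    return {"gamma": gamma, "theta": theta, "vega": vega, "rho": rho}


for kind in ("call", "put"):
    print(kind, greeks_fd(kind, s=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0))
```

For these inputs, gamma is positive and equal for the call and the put, the call's theta is negative, and rho is positive for the call and negative for the put, in line with the text.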

Leverage

Leverage is calculated by dividing the current price of the underlying by the current price of the option. If the option relates to a multiple or a fraction of the underlying, this factor, called the subscription ratio (ratio), must be taken into account in the calculation.

Omega

Multiplying delta by the current leverage yields a further leverage measure, which is usually listed in price tables as omega or "effective leverage". An option with a current leverage of 10 and a delta of 50% has an omega of "only" 5, so its price rises by around 5% when the underlying rises by 1%. Note again, however, that delta, omega and most of the other key figures change constantly. Even so, omega gives a relatively good picture of the prospects of the option in question.
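Leverage and omega are simple arithmetic on quoted figures. This Python sketch reproduces the example from the text (leverage 10, delta 50%, omega 5); the warrant prices and the subscription ratio of 0.1 are hypothetical, and `ratio` is taken as the number of underlying units one option refers to.

```python
def leverage(underlying_price: float, option_price: float, ratio: float = 1.0) -> float:
    """Simple leverage: underlying price (times subscription ratio) over option price.

    ratio is the number of underlying units one option refers to,
    e.g. 0.1 if ten options refer to one share.
    """
    return underlying_price * ratio / option_price


def omega(delta: float, simple_leverage: float) -> float:
    """Effective leverage: approximate % change of the option per 1 % move of the underlying."""
    return delta * simple_leverage


lev = leverage(100.0, 1.0, ratio=0.1)   # hypothetical warrant: share at 100, warrant at 1
eff = omega(0.5, lev)
print(f"leverage={lev:.1f}, omega={eff:.1f}")  # leverage=10.0, omega=5.0
```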

Valuation

Influencing variables

The price of an option depends, on the one hand, on its contract features, namely

- the current price of the underlying asset,

- the exercise price,

- the remaining term until the exercise date,

On the other hand, it depends on the model assumed for the future development of the underlying and on other market parameters. In the Black-Scholes model, these other influencing factors are

- the volatility of the underlying asset,

- the risk-free, short-term interest rate on the market,

- expected dividend payments within the lifetime.

The current price of the underlying and the exercise price determine the intrinsic value of the option. For a call, the intrinsic value is the difference between the price of the underlying and the exercise price; for a put, it is the difference between the exercise price and the price of the underlying. In both cases it is zero if this difference is negative. For a call option on an underlying with a current value of €100 and an exercise price of €90, the intrinsic value is €10; for a put option with the same data, the intrinsic value is 0.
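The intrinsic value rule, and the time value as the part of the option price above it, fit in a few lines of Python. This is a sketch with hypothetical function names; the option price of 12 used for the time value is an assumed example, not a figure from the text.

```python
def intrinsic_value(kind: str, underlying_price: float, strike: float) -> float:
    """Value of immediate exercise, floored at zero."""
    if kind == "call":
        return max(underlying_price - strike, 0.0)
    if kind == "put":
        return max(strike - underlying_price, 0.0)
    raise ValueError(f"unknown option kind: {kind!r}")


def time_value(kind: str, option_price: float, underlying_price: float, strike: float) -> float:
    """Part of the option price above its intrinsic value."""
    return option_price - intrinsic_value(kind, underlying_price, strike)


print(intrinsic_value("call", 100.0, 90.0))   # 10.0, as in the example
print(intrinsic_value("put", 100.0, 90.0))    # 0.0
print(time_value("call", 12.0, 100.0, 90.0))  # 2.0 for a hypothetical price of 12
```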

Volatility in particular has a major impact on the value of the option. The more the price fluctuates, the higher the probability that the price of the underlying will change significantly and that the intrinsic value of the option will rise or fall. As a general rule, higher volatility increases the value of the option. In extreme edge cases, however, the opposite can be true.

The remaining term affects the value of the option in a similar way to volatility. The more time there is until the exercise date, the higher the probability that the intrinsic value of the option will change. This time value makes up part of the option's value. In theory, the time value could be calculated by comparing two options that differ only in their term and are otherwise identical. However, this presupposes the unrealistic case of an almost perfect capital market.

An increase in the risk-free interest rate has a positive effect on the value of call options and a negative effect on the value of put options, because under current valuation methods the expected increase in the price of the underlying is tied to the risk-free rate. Intuitively, the money that the call holder does not have to invest in the underlying can be invested at interest in the meantime; the higher the interest rate on this alternative investment, the more attractive it is to buy a call. As the interest rate level rises, so does the part of the option's value above its intrinsic value, the time value. With a put, the situation is reversed: the higher the interest rate, the lower the current value of the put, because to exercise the right to sell one would theoretically have to own the underlying, which ties up capital that could otherwise earn interest.

For options on shares, dividend payments reduce the value of a call compared with the same share without dividends, because the call holder forgoes the dividends paid during the holding period, which only the owner of the share receives. Conversely, compared with the same dividend-free share, dividends increase the value of a put, because the share price falls by the dividend on the ex-dividend date, which benefits the put holder. For options on currencies or commodities, the interest rate of the underlying currency or the "convenience yield" is used instead of dividends.
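One common way to make the dividend effect concrete is to model dividends as a continuous yield q, as in Merton's extension of the Black-Scholes formula. The following Python sketch assumes that model and hypothetical inputs; it is not the only way to treat discrete dividends. For currency options, q would be the foreign interest rate.

```python
import math


def norm_cdf(x: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_price_yield(kind: str, s: float, k: float, r: float, q: float,
                   sigma: float, t: float) -> float:
    """European option value with a continuous dividend yield q (Merton's extension).

    For currency options, q would be the foreign interest rate instead.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / k) + (r - q + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    if kind == "call":
        return s * math.exp(-q * t) * norm_cdf(d1) - k * math.exp(-r * t) * norm_cdf(d2)
    if kind == "put":
        return k * math.exp(-r * t) * norm_cdf(-d2) - s * math.exp(-q * t) * norm_cdf(-d1)
    raise ValueError(f"unknown option kind: {kind!r}")


for q in (0.0, 0.03):   # hypothetical yields: no dividend vs. 3 % per year
    call = bs_price_yield("call", 100.0, 100.0, 0.05, q, 0.2, 1.0)
    put = bs_price_yield("put", 100.0, 100.0, 0.05, q, 0.2, 1.0)
    print(f"q={q:.2f}: call={call:.4f} put={put:.4f}")
```

With the dividend yield switched on, the call value falls and the put value rises, matching the direction described above.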

Asymmetrical profit and loss

If the price of the underlying develops unfavorably for the option holder, the holder will not exercise the right and will let the option lapse. The holder loses at most the option price, i.e. suffers a total loss, but with call options has the possibility of unlimited profit. Conversely, the seller's potential losses on call options are unlimited. If the seller holds the corresponding underlying (covered short call), this loss can be viewed as lost profit. This does not apply if the seller of the call does not hold the underlying and therefore has to buy it to fulfil the contract and then deliver it (uncovered short call, where uncovered means that the position consists of only one instrument).

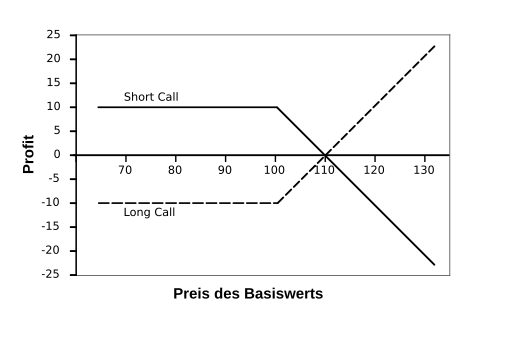

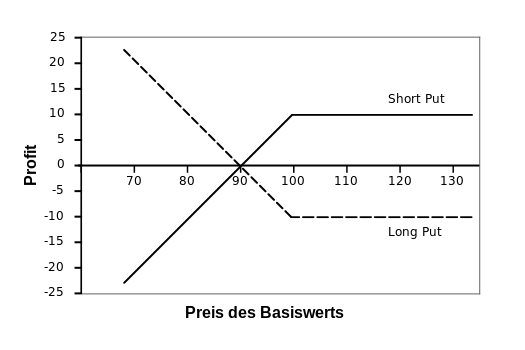

The graphics illustrate the asymmetrical payout structure. The options shown are identical in all influencing factors. It is important to understand that the buyer of an option takes a long position and the seller of an option takes a short position. In all four cases, the price of the option is 10 and the exercise price is 100.

For the call, the buyer (long) has a maximum loss of 10 but unlimited profit opportunities. In contrast, the seller (short) has a maximum profit of 10 and unlimited potential losses.

For a put, the buyer (long) also has a maximum loss of 10. A common mistake is to carry the unlimited profit potential of the call option over to the put option. However, the underlying can at most fall to a market value of zero, so the maximum profit is limited to the case of a price of zero (here 100 - 10 = 90). As with the call, the seller (short) has a maximum profit of 10, but losses that are limited to the case where the price of the underlying falls to zero. The difference between a call and a put lies in the direction in which the payout changes with the underlying, and in the limited maximum profit or loss for put options.
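The four profit profiles described for the graphics (premium 10, exercise price 100) can be tabulated with a short Python sketch. It ignores interest on the premium; the function name and the sample prices at expiry are illustrative.

```python
def profit_at_expiry(kind: str, position: str, price_at_expiry: float,
                     strike: float = 100.0, premium: float = 10.0) -> float:
    """Profit at expiry of a long or short call/put; defaults match the example in the text."""
    if kind == "call":
        payoff = max(price_at_expiry - strike, 0.0)
    elif kind == "put":
        payoff = max(strike - price_at_expiry, 0.0)
    else:
        raise ValueError(f"unknown option kind: {kind!r}")
    long_profit = payoff - premium        # the buyer paid the premium up front
    if position == "long":
        return long_profit
    if position == "short":
        return -long_profit               # the writer's profit mirrors the buyer's
    raise ValueError(f"unknown position: {position!r}")


print("price  long call  short call  long put  short put")
for price in (0.0, 50.0, 90.0, 100.0, 110.0, 200.0):
    row = [profit_at_expiry(k, p, price) for k in ("call", "put") for p in ("long", "short")]
    print(price, row)
```

The table shows the asymmetry: the long call's profit keeps growing with the price, while the long put's profit stops at 90 when the price reaches zero.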

Calculation of the option price

In option pricing theory, there are basically two approaches to determining the fair option price:

- Bounds derived without assumptions about possible future share prices and their probabilities (distribution-free no-arbitrage relationships, see option pricing theory)

- Models based on possible future share prices and risk-neutral probabilities, such as the binomial model and the Black-Scholes model

In principle, the stochastic processes that determine the price of the underlying can be modeled in different ways. They can be represented analytically in continuous time with differential equations, or analytically in discrete time with binomial trees. A non-analytical solution is possible by simulating future price paths.

The best-known analytical continuous-time model is the Black-Scholes model. The best-known analytical discrete-time model is the Cox-Ross-Rubinstein model. A common simulation method is Monte Carlo simulation.
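The discrete-time approach can be sketched as a Cox-Ross-Rubinstein tree in Python. The sketch assumes no dividends, constant interest rate and volatility, the CRR choice u = e^(σ√Δt), d = 1/u, and an arbitrary default of 500 time steps. Values are rolled back from expiry using risk-neutral probabilities; for American options, each node also checks whether immediate exercise is worth more.

```python
import math


def crr_price(kind: str, style: str, s: float, k: float, r: float,
              sigma: float, t: float, steps: int = 500) -> float:
    """Option value in a Cox-Ross-Rubinstein binomial tree (no dividends).

    kind: "call" or "put"; style: "european" or "american".
    """
    if kind not in ("call", "put") or style not in ("european", "american"):
        raise ValueError("kind must be call/put and style european/american")
    dt = t / steps
    u = math.exp(sigma * math.sqrt(dt))      # up factor
    d = 1.0 / u                              # down factor
    growth = math.exp(r * dt)
    p_up = (growth - d) / (u - d)            # risk-neutral probability of an up move
    disc = 1.0 / growth

    def payoff(price: float) -> float:
        return max(price - k, 0.0) if kind == "call" else max(k - price, 0.0)

    # Option values at expiry; index j = number of up moves.
    values = [payoff(s * u**j * d**(steps - j)) for j in range(steps + 1)]
    # Roll back: values[j] at step i only needs entries j and j+1 from step i+1.
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            cont = disc * (p_up * values[j + 1] + (1.0 - p_up) * values[j])
            if style == "american":
                cont = max(cont, payoff(s * u**j * d**(i - j)))  # early exercise check
            values[j] = cont
    return values[0]


args = dict(s=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0)  # hypothetical inputs
for style in ("european", "american"):
    print(style, crr_price("call", style, **args), crr_price("put", style, **args))
```

As the number of steps grows, the European values approach the Black-Scholes values. The American put comes out above the European put, while the American and European calls coincide because the underlying pays no dividends.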

Distribution-free, no-arbitrage relationships

A call option cannot be worth more than its underlying asset. Suppose the underlying is trading at €80 today and someone offers a call option on it for €90. Nobody would buy this option, because the underlying itself is cheaper to acquire and is obviously worth more than the option. A share, for example, carries no obligations, so it can be bought, held in a securities account and taken out again when needed. Buying the share for €80 and holding it thus works like a perpetual option for which the full price of €80 has already been paid and which can be "exercised" at any time at no further cost. No option can be more valuable than that, so a call option can never be worth more than its underlying.

This does not apply if the underlying causes considerable storage costs. In that case, the call option can be worth more than the underlying by up to the storage costs expected until expiry.

A put option cannot be worth more than the present value of its exercise price: nobody would pay more than €80 for the right to sell something for €80. Strictly speaking, these €80 must be discounted to their present value today.

These value bounds are the starting point for put-call parity, which links the values of European calls and puts.
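The two upper bounds can be checked mechanically against quoted prices. This Python sketch assumes European options, no storage costs and continuous discounting at the risk-free rate; apart from the underlying at 80 and the call at 90 taken from the example, the inputs are hypothetical.

```python
import math


def upper_bound_violations(call_price: float, put_price: float, underlying_price: float,
                           strike: float, r: float, t: float) -> list:
    """List the simple no-arbitrage upper bounds that quoted European prices violate.

    Assumes no storage costs and continuous discounting at the risk-free rate r.
    """
    violations = []
    if call_price > underlying_price:
        violations.append("call is worth more than the underlying")
    pv_strike = strike * math.exp(-r * t)   # present value of the exercise price
    if put_price > pv_strike:
        violations.append("put is worth more than the present value of the strike")
    return violations


# Underlying at 80, call offered at 90 as in the text; other inputs hypothetical.
print(upper_bound_violations(90.0, 5.0, 80.0, 80.0, 0.05, 1.0))
```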

Put-call parity

Put-call parity is a relationship between the price of a European call and the price of a European put with the same strike price and the same expiry date:

$$ p + S_0 = c + D + K e^{-rT} $$

where

- p: price of the European put option

- S_0: current share price

- c: price of the European call option

- K: strike price of the call and the put

- r: risk-free interest rate (continuously compounded)

- T: time to expiry in years

- D: present value of the dividend payments over the life of the options

If the put-call parity were violated, risk-free arbitrage profits would be possible.
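Put-call parity can be used to test quoted prices for consistency or to derive one price from the other. In this Python sketch, the function names are hypothetical and the example prices 10.4506 and 5.5735 are the Black-Scholes call and put values for S_0 = K = 100, r = 5%, σ = 20%, T = 1 without dividends; any gap then reflects only rounding.

```python
import math


def parity_gap(call: float, put: float, s0: float, k: float, r: float, t: float,
               pv_dividends: float = 0.0) -> float:
    """(c + D + K e^{-rT}) - (p + S0); zero when put-call parity holds."""
    return (call + pv_dividends + k * math.exp(-r * t)) - (put + s0)


def put_from_parity(call: float, s0: float, k: float, r: float, t: float,
                    pv_dividends: float = 0.0) -> float:
    """European put price implied by a call price via put-call parity."""
    return call + pv_dividends + k * math.exp(-r * t) - s0


gap = parity_gap(10.4506, 5.5735, 100.0, 100.0, 0.05, 1.0)
print(f"gap={gap:.4f}")
# A clearly positive gap would mean the call side (call plus cash) is expensive
# relative to the put side (put plus share); ignoring costs, selling the former
# and buying the latter would lock in the difference.
```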

Put-call parity also shows the equivalence between option strategies and simple option positions:

- A covered call corresponds to a short put. Rearranging the parity gives $S_0 - c = K e^{-rT} + D - p$, i.e. a long share plus a short call (covered call) equals a short put plus an amount of money.

- The counter position (reverse hedge) of the covered call, a short share plus a long call, corresponds to a long put.

- A protective put (long share plus long put) corresponds to a long call: $S_0 + p = c + K e^{-rT} + D$.

- The opposite position to the protective put corresponds to a short call.

Black-Scholes

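The Black-Scholes formulas for the value of European calls and puts on underlying assets without dividend payments are

$$ c = S\,N(d_1) - X e^{-rT} N(d_2) $$

$$ p = X e^{-rT} N(-d_2) - S\,N(-d_1) $$

where

$$ d_1 = \frac{\ln(S/X) + (r + \sigma^2/2)\,T}{\sigma\sqrt{T}}, \qquad d_2 = d_1 - \sigma\sqrt{T} $$

In these formulas, $S$ is today's price of the underlying asset, $X$ the exercise price, $r$ the (continuously compounded) risk-free interest rate, $T$ the time to expiry of the option in years, $\sigma$ the volatility of $S$, and $N(x)$ the cumulative probability that a standard normally distributed variable is less than $x$.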
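The Black-Scholes formulas translate directly into code. This Python sketch computes the standard normal distribution function from `math.erf` and uses the symbols of the formulas (S, X, r, σ, T); the inputs in the usage line are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    """N(x): cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes(s: float, x: float, r: float, sigma: float, t: float) -> tuple:
    """European call and put values on a non-dividend-paying underlying."""
    if s <= 0 or x <= 0 or sigma <= 0 or t <= 0:
        raise ValueError("S, X, sigma and T must be positive")
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / x) + (r + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    call = s * norm_cdf(d1) - x * math.exp(-r * t) * norm_cdf(d2)
    put = x * math.exp(-r * t) * norm_cdf(-d2) - s * norm_cdf(-d1)
    return call, put


call, put = black_scholes(100.0, 100.0, 0.05, 0.2, 1.0)  # hypothetical inputs
print(f"call={call:.4f} put={put:.4f}")                 # roughly 10.45 and 5.57
```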

If the underlying pays no dividends, an American call option has the same price as a European call option. The formula for c therefore also gives the value of an American call option with the same parameters, provided the underlying pays no dividends. For the value of an American put option, there is no analytical closed-form solution.

Consideration of interest

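The profit or loss of an option at expiry, taking interest on the option price into account, can be determined as:

$$ G_{\text{call}} = \max(S_T - X, 0) - c\,(1 + rT), \qquad G_{\text{put}} = \max(X - S_T, 0) - p\,(1 + rT) $$

where $S_T$ is the price of the underlying at expiry and the interest factor $(1 + rT)$ is linear because the money-market interest rate (simple interest) is used here. $\max$ denotes the maximum function.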
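Accounting for the financing cost of the option price can be sketched in Python as follows. The sketch assumes the premium is paid up front and charged simple money-market interest over the term, i.e. an interest factor of 1 + r·T; the function name and inputs are hypothetical.

```python
def profit_with_interest(kind: str, price_at_expiry: float, strike: float,
                         premium: float, r: float, t: float) -> float:
    """Profit of a long option at expiry, charging simple money-market interest on the premium."""
    if kind == "call":
        payoff = max(price_at_expiry - strike, 0.0)
    elif kind == "put":
        payoff = max(strike - price_at_expiry, 0.0)
    else:
        raise ValueError(f"unknown option kind: {kind!r}")
    return payoff - premium * (1.0 + r * t)   # linear (simple) interest factor 1 + r*t


# Hypothetical: premium 10, strike 100, 5 % money-market rate, one year.
print(profit_with_interest("call", 120.0, 100.0, 10.0, 0.05, 1.0))  # 20 - 10.5
print(profit_with_interest("put", 120.0, 100.0, 10.0, 0.05, 1.0))   # 0 - 10.5
```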

Protection against dilution

The valuation methods implicitly assume that the option right cannot lose value (be diluted) as a result of corporate actions by the company. In options trading, this is ensured by so-called protection against dilution.

Optimal exercise

American options can be exercised at any time up to expiry, so the holder has to decide when exercise is optimal. The exercise decision is influenced by three factors: interest on the exercise price, a flexibility effect and dividends. Calls and puts must be distinguished.

A positive effect favors early exercise; a negative effect means it is more worthwhile to wait.

Interest on the exercise price has a negative effect for calls, since the call holder would pay the exercise price earlier than necessary, but a positive effect for puts, since the put holder receives it earlier and can invest it. The flexibility effect is negative for both calls and puts. Dividend payments have a positive effect for calls but a negative effect for puts.

Dividends

- If no dividend is paid, it is always optimal to hold a call until the end of the term rather than exercise it early.

- For puts, dividend payments make waiting more attractive, since the dividend effect works against early exercise.
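The interaction of these effects can be made visible in a binomial tree by counting the nodes at which immediate exercise is worth more than waiting. This Python sketch assumes a Cox-Ross-Rubinstein tree without dividends, so only the interest and flexibility effects are at work; the function name, inputs and the default of 200 steps are hypothetical.

```python
import math


def early_exercise_nodes(kind: str, s: float, k: float, r: float, sigma: float,
                         t: float, steps: int = 200) -> int:
    """Count CRR-tree nodes (no dividends) where exercising an American option early beats waiting."""
    dt = t / steps
    u = math.exp(sigma * math.sqrt(dt))
    d = 1.0 / u
    growth = math.exp(r * dt)
    p_up = (growth - d) / (u - d)            # risk-neutral probability of an up move

    def payoff(price: float) -> float:
        return max(price - k, 0.0) if kind == "call" else max(k - price, 0.0)

    values = [payoff(s * u**j * d**(steps - j)) for j in range(steps + 1)]
    count = 0
    for i in range(steps - 1, -1, -1):
        for j in range(i + 1):
            wait = (p_up * values[j + 1] + (1.0 - p_up) * values[j]) / growth
            exercise = payoff(s * u**j * d**(i - j))
            if exercise > wait:
                count += 1                   # early exercise is strictly better here
            values[j] = max(exercise, wait)
    return count


args = dict(s=100.0, k=100.0, r=0.05, sigma=0.2, t=1.0)
print("call:", early_exercise_nodes("call", **args))  # 0 without dividends
print("put:", early_exercise_nodes("put", **args))    # > 0: interest favors exercise
```

Without dividends, no call node favors early exercise, while deep in-the-money put nodes do, because receiving the exercise price early earns interest.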

Criticism of the standard evaluation methods

The valuation methods are usually based on the assumption that price changes are normally distributed and independent of one another. According to Benoît Mandelbrot, all models and valuation formulas based on this assumption (for example the Black-Scholes formula above) are wrong. His investigations showed that price changes follow heavy-tailed (power-law) distributions and depend on one another, and thus lead to much more violent price fluctuations than the standard models predict.

Literature

- John C. Hull : Options, Futures, and Other Derivatives. 7th, updated edition. Pearson Studium, Munich et al. 2009, ISBN 978-3-8273-7281-9 .

- Michael Bloss, Dietmar Ernst, Joachim Häcker : Derivatives. An authoritative guide to derivatives for financial intermediaries and investors. Oldenbourg Verlag, Munich 2008, ISBN 978-3-486-58632-9 .

- Ingo Zahn: Option Price Theory. Verlag Dr. Kovač, Hamburg 2019, ISBN 978-3-339-10622-3.

References

- Masters of Finance: Paul A. Samuelson on YouTube. Interview with Robert Merton (minute 11:00).

- Igor Uszczpowski: Understanding Options and Futures. 6th edition, Beck-Wirtschaftsberater im dtv, ISBN 978-3-423-05808-7.

- Benoît Mandelbrot: The Variation of Certain Speculative Prices. In: Journal of Business 36, 1963, pp. 394-419.