The Newton method , also Newton-Raphson method (named after Sir Isaac Newton 1669 and Joseph Raphson 1690), is a frequently used approximation algorithm in mathematics for the numerical solution of nonlinear equations and systems of equations. In the case of an equation with one variable, approximate values for solutions of the equation can be used for a given continuously differentiable function , i.e. H. Find approximations of the zeros of this function. The basic idea of this procedure is to linearize the function in a starting point, i.e. H. determine its tangent and use the zero of the tangent as an improved approximation of the zero of the function. The approximation obtained serves as the starting point for a further improvement step. This iteration takes place until the change in the approximate solution has fallen below a fixed limit. In the best case , the iteration method converges asymptotically with the quadratic order of convergence , the number of correct decimal places then doubles in each step.

Expressed formally, the iteration

is based on a start value

repeated until sufficient accuracy is achieved.

Newton's method for real functions of a variable

Historical facts about the Newton method

Isaac Newton wrote the work "Methodus fluxionum et serierum infinitarum" (Latin for: From the method of fluxions and infinite consequences ) between 1664 and 1671 . In it he explains a new algorithm for solving a polynomial equation using the example . One can easily guess the point as a first approximation. Newton made the approach with what was assumed to be "small" and put it in the equation:

According to the binomial formulas ,

-

.

.

Since “small” is supposed to be, the higher order terms can be neglected compared to the linear and constant ones, which leaves or remains. We can now repeat this procedure and start , insert it into the second equation, omit higher terms and keep.

Joseph Raphson described this calculation process formally in 1690 in the work "Analysis Aequationum universalis" and illustrated the formalism using the general equation of the third degree, where he found the following iteration rule.

The abstract form of the procedure using the derivative comes from Thomas Simpson .

Construction on the graph

Animation: Iteration with the Newtonian method

One arrives at this procedure clearly as follows: Let be a continuously differentiable real function, of which we know a place in the domain of definition with a “small” function value. We want to find a point near that is an improved approximation of the zero. To do this, we linearize the function at the point , i.e. H. we replace it with its tangent at the point with a rise .

The tangent is given by the function . If we use , we receive

-

.

.

We choose as the only zero of this linear function,

-

.

.

If we apply this construction several times, we get an infinite sequence of positions from a first position , which is determined by the recursion rule

is defined. This rule is also known as Newton iteration refers to the function as Newton operator . The Newton iteration is a special case of a fixed point iteration , if the sequence converges to , then and therefore .

The art of using Newton's method is to find suitable starting values . The more is known about the function , the smaller the required set of start values can be.

Many nonlinear equations have multiple solutions, so a -th degree polynomial has up to zeros. If you want to determine all zeros in a certain range , a suitable start value in must be found for each zero , for which the Newton iteration converges. You could z. B. determine sufficiently small isolating intervals for each zero by bisection .

First example

The square root of a number is the positive zero of the function . This function has the derivative , so the Newton iteration is carried out according to the rule

The advantage of this rule compared to the Heron root extraction (see below) is that it is division-free as soon as the reciprocal of has been determined. The starting value was selected in the table . The iterates were cut off at the first imprecise point. It can be seen that after a few steps the number of valid digits increases rapidly.

| n |

at at

|

at at

|

at at

|

| 0 |

|

|

|

| 1 |

|

|

|

| 2 |

|

|

|

| 3 |

|

|

|

| 4th |

|

|

|

| 5 |

|

|

|

| 6th |

|

|

|

| 7th |

|

|

|

| 8th |

|

|

|

If we consider the difference to the limit value in the -th step, the difference in the -th step can be split off twice using the binomial formulas :

-

According to the inequality of the arithmetic and geometric mean, the following applies , so that the second factor can sensibly be limited by. If the difference in the -th step is a small number, the difference in the -th step is proportional to the square of it, i.e. much smaller. Squaring an error produces an error estimate proportional to . That is why it is said that the number of valid digits roughly doubles in each step of the Newton iteration.

Convergence considerations

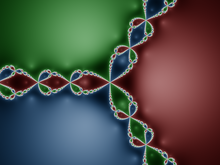

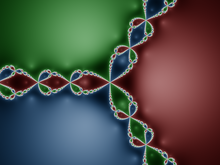

The Newton method for the polynomial over the complex numbers converges to one of the three zeros of the polynomial for starting values from the red, green and blue areas. The method does not converge for starting values from the light structure, the Newton fractal.

The Newton method is a so-called locally convergent method. Convergence of the Newton iteration generated sequence to zero is therefore only guaranteed if the start value d. H. the 0 th member of the sequence is already "sufficiently close" to the zero. If the starting value is too far away, the convergence behavior is not fixed, i.e. both a divergence of the sequence and an oscillation (in which a finite number of function values alternate) or a convergence to another zero of the function under consideration is possible.

Dynamics of the Newton method for the function with starting values between −4 and 4: Each color-coded line shows the result of one step of the process, applied to the line above. The start values are in the top line. Many starting values converge to the (only real-valued) zero of the polynomial at approx. −1.769, the color of which is medium blue. However, there are also many starting values for which the method does not converge and oscillates back and forth between zero (black) and one (red). The set of these starting values that do not lead to the zero position contains open intervals, so it is a set of positive dimensions and therefore in particular not a

zero set .

If the start value is chosen so that the Newton method converges, the convergence is, however, quadratic, i.e. with the order of convergence 2 (if the derivative at the zero point does not disappear). The set of starting points for which the Newton method converges to a certain zero point forms the catchment area of this zero point. If you color the catchment areas of different zeros in the complex plane differently for a polynomial function with real or complex coefficients, a Newton fractal results . In this it can be seen that the catchment areas basins, i. H. Contain circular disks around the zeros from which the Newton iteration converges stably towards the zero in the center. But it can also be seen that the edges of the catchment areas are “frayed”, they even have a fractal structure. Small deviations in the starting point can therefore lead to different zeros.

If, however, there is exactly one zero in the interval , in consistently as well as and and the starting value is chosen to the left of the zero , then the sequence always converges in Newton's method, strictly increasing monotonically (see figure below or the table above ).

![{\ displaystyle I = {] a, b [}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/0b87023fb36a814cff30f5ba8d17d80e852fc0b9)

Examples of non-convergence

- Oscillating behavior results u. a. for the polynomial with . The point with and is mapped to the point using the Newton operator , the point in turn, with and , is mapped to, so that the Newton iteration with one of these points as a starting value results in a periodic sequence , these two points alternate cyclically. This cycle is stable, it forms an attractor of the Newton iteration. This means that there are surroundings around both points, so that starting points from these surroundings converge towards the cycle and thus each have one of the points 0 and 1 as the limit value of the subsequence with an even index and that with an odd index.

- Divergence or any distance from the starting point results for with and . There is a place with d. H. You convince yourself that then applies. This behavior is not stable, because with a slight variation of the initial value, as it occurs for example through the numerical calculation, the Newton iteration moves further and further away from the ideal diverging sequence. Even with eventual convergence, the zero found will be very far from the starting value.

Local quadratic convergence

Let be a twice continuously differentiable real function and a simple zero of , in which the derivative thus has no zero. This means that the graph of the function is transversal, i.e. H. non-touching, the -axis intersects. Be a point close to . Then the Taylor formula of the second degree (with Lagrange remainder term )

-

lies between and ,

lies between and ,

be adjusted according to the difference ,

-

.

.

It is now rearranged so that the Newton operator appears on the right side,

-

.

.

Let be an interval around without zero of the derivative and and bounds of the derivative of . Then the estimation

follows for all

-

.

.

With the constant factor is referred. In each step of the Newton iteration the size will be smaller than the square of the same size in the previous step . After complete induction, it results

-

.

.

So can the estimate be guaranteed for the starting point of the iteration , e.g. B. if the interval length is smaller than , then the sequence of the Newton iteration converges towards the zero , because the sequence and thus is a zero sequence according to the given estimate . The shortening of the interval can be achieved by a few iterations of a slower zero-restriction method, e.g. B. the bisection process or the Regula falsi .

The speed of convergence resulting from these estimates is called quadratic , the (logarithmic) accuracy or number of valid digits doubles in each step. The estimate of the distance to the zero point is often given linearly in . B.

-

if the length of the interval is less than . This gives an estimate of the valid digits in the binary system.

if the length of the interval is less than . This gives an estimate of the valid digits in the binary system.

-

if the length of the interval is less than , d. H. close enough to the zero there is a doubling of the valid decimal places in each step.

if the length of the interval is less than , d. H. close enough to the zero there is a doubling of the valid decimal places in each step.

Local quadratic convergence with multiple zeros through modification

In the event that at a multiple zero has finite degree, also can estimate the convergence speed and force again square by a slight modification of convergence.

Has at a -fold zero, can be written as with .

Then according to the product rule and with it the expression

-

.

.

If you insert this into the iteration, you get

and from it after subtracting both sides from the iteration error

-

![(x _ {\ text {new}} - a) = (xa) - (xa) {\ frac {g (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} = (xa) \ left [1 - {\ frac {g (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} \ right]](https://wikimedia.org/api/rest_v1/media/math/render/svg/0edb3af9242a98e29c32a4f3d94546f15a71111d) .

.

If the expression has now become very small, the summand in the denominator becomes much smaller than , so that the parentheses in the back approaches the value more and more . For the simple zero with one has a small value that becomes almost 0, so that becomes. For becomes about 0.5, so that the distance to the zero is only halved from step to step and after about 10 steps the accuracy has only been increased in another three decimal places. When is about 0.67, so that after about 16 steps, the accuracy for another three decimal places increases, etc.

One can therefore estimate the multiplicity of the zero from the convergence behavior, if one does not know it for other reasons, and - as will now be described - optimize the method.

In the case of a -fold zero, the Newtonian approximation method is modified with a factor :

-

(Newton method with -fold zero)

(Newton method with -fold zero)

This then becomes

![{\ displaystyle {\ begin {aligned} (x _ {\ text {new}} - a) & = (xa) \ left [1 - {\ frac {k \ cdot g (x)} {k \ cdot g (x ) + (xa) \ cdot g '(x)}} \ right] \\ & = (xa) \ left [{\ frac {k \ cdot g (x) + (xa) \ cdot g' (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} - {\ frac {k \ cdot g (x)} {k \ cdot g (x) + (xa) \ cdot g' (x)}} \ right] \\ & = (xa) {\ frac {(xa) \ cdot g '(x)} {k \ cdot g (x) + (xa) \ cdot g' (x)} } \\ & = (xa) ^ {2} {\ frac {g '(x)} {k \ cdot g (x) + (xa) \ cdot g' (x)}} \ end {aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/5f1c38c84e7d192079e376bbf7e046389222342a)

If it is now very small again, the summand in the denominator becomes much smaller than , and one obtains

-

,

,

where the right factor converges to a fixed value due to. As you can see, there is now quadratic convergence here too.

An example shows the convergence behavior very nicely. The function has the simple zero . The left column of the table shows the rapid convergence for the starting value 1, after 4 steps the accuracy can no longer be increased, in the event of an error the number of zeros behind the decimal point doubles (at least). If you now square the function (middle column), the zero becomes double, and now the behavior explained above is shown that, without modification, the error is only halved in each step. If you then modify this case with the factor , the same behavior occurs as with the simple zero (right column).

| n |

For For

|

error |

for no factor for no factor

|

error |

for with factor for with factor

|

error

|

| 0 |

|

|

|

|

|

|

| 1 |

|

|

|

|

|

|

| 2 |

|

|

|

|

|

|

| 3 |

|

|

|

|

|

|

| 4th |

|

|

|

|

|

|

| 5 |

|

|

|

|

|

|

| 6th |

|

|

|

|

|

|

| 7th |

|

|

|

|

|

|

| 8th |

|

|

|

|

|

|

| 9 |

|

|

|

|

|

|

| 10 |

|

|

|

|

|

|

Remarks

- The local proof of convergence can also be carried out in the same way in the multi-dimensional case, but then it is technically somewhat more difficult, since two- and three-level tensors are used for the first and second derivative. Essentially, the constant K has to be replaced by, with suitable induced operator norms .

- The local proof of convergence presupposes that an interval containing a zero is known. However, there is no way to test this quickly from his evidence. A proof of convergence, which also provides a criterion for this, was first given by Leonid Kantorowitsch and is known as the Kantorowitsch theorem.

- In order to find a suitable starting point, other (“coarser”) methods are occasionally used. For example, you can use the gradient method to determine an approximate solution and then refine it using the Newton method.

- If the starting point is unknown, a homotopy can be used to deform the function for which one is looking for a zero into a simpler function of which (at least) one zero is known. One then runs through the deformation backwards in the form of a finite sequence of only “slightly” differing functions. The first function has a zero. As the starting value of the Newton iteration for the current function of the sequence, the approximation of a zero of the function preceding in the sequence is used. For the exact procedure, see homotopy procedure .

- The “flooding homotopy” may serve as an example: we use an arbitrary one to form the output function with a known zero . We have flooded the "water level" from the "zero level" to the top . Now, we gradually reduce the water level , , . In each step, an approximation of a zero is determined, and is set. It is and therefore one of the approximate solutions we are looking for.

The Newtonian approximation method

Termination criteria

Possible termination criteria with regard to a residual quantity (e.g. computer arithmetic) are:

Whereby the quality of the "zero point" determines. In both cases it can happen that the termination criterion is fulfilled at a "bad" point in time.

Further application examples

Calculating the square root

A special case of the Newtonian approximation method is the Babylonian root extraction , also known as Heron method after Heron of Alexandria :

If you apply the iteration formula for determining zeros to the function

the approximation method

is obtained because of the derivative function for the solution

-

.

.

This procedure converges for any and any initial value .

Calculating the cube root

If you apply the iteration formula for determining zeros to the function

the approximation method

is obtained because of the derivative function for the solution

![{\ sqrt [{3}] {a}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/895424fc79dfd221f984d973ac95ca277bd0e60c)

-

.

.

For negative radicands, we recommend converting with .

![{\ displaystyle {\ sqrt [{3}] {x}} = - {\ sqrt [{3}] {- x}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d1b0364516c96bec2de010817fdbff921251457d)

This procedure converges for and initial value if and have the same sign.

Intersection of two functions

The value of the intersection of two functions and can be determined in a similar way :

Since the two functions for solving the problem are equated, the following form can always be determined by transformation, to which the Newtonian approximation method can be applied:

Mixed goniometric function

Find the positive solution of the equation . The problem can be rephrased as . So we are looking for zeros of .

We have now . As for all valid and for we know that the zero point between 0 and 1. We start the iteration with the value .

The first twelve digits of the zero are now known.

Newton's method in the multidimensional

Newton's method can also be used to determine zeros of multidimensional functions . A specific application is the combination with the Gaussian least squares method in the Gauss-Newton method . For the general case, the starting point is a Taylor expansion of the function :

where the Jacobian matrix, i.e. the matrix of the partial derivatives of , is:

Instead of looking for zeros of the non-linear function, one looks for zeros of the linear fit of in the point :

For and this inspires the Newton method :

Since solving

Using the calculation of the inverse of a matrix and subsequent multiplication with is more complex and numerically less favorable, the linear system of equations is used instead

solved. Then we get from:

There are various solution methods for solving the system (see list of numerical methods ). If the Jacobian matrix is invertible in the zero and is Lipschitz continuous in the vicinity of the zero , then the method converges locally quadratically.

Variants of the Newton method

The biggest problem with using Newton's method is that you need the first derivative of the function. Their calculation is usually time-consuming, and in many applications a function is not given analytically, but only, for example, by a computer program (see also Automatic Differentiation ). In the one-dimensional, the Regula falsi is preferable, in which the secant and not the tangent is used. In the multidimensional one has to look for other alternatives. Here the problem is also more dramatic, since the derivation is a matrix with entries, so the calculation effort increases quadratically with the dimension.

Simplified Newton method

Instead of calculating the derivative in every Newton step, it is also possible to only calculate it in every nth step. This drastically lowers the cost of an iteration step, the price is a loss of convergence speed. The convergence is then no longer quadratic, but superlinear convergence can still be achieved.

Inexact Newton method

A similar idea is to calculate an approximation of the derivative in each step , for example using finite differences. A quantitative statement of convergence is difficult in this case, but as a rule of thumb it can be said that the worse the approximation of the derivative, the worse the convergence. An example of such a process is the secant process .

Newton-Krylow method

For the numerical solution of non-linear partial differential equations , Newton's method is basically the basic solver. The corresponding Jacobian matrix is always sparsely populated , and therefore Krylow subspace methods are suitable for solving the linear systems of equations. One then speaks of the Newton-Krylow method. In the Krylow method itself, the Jacobian matrix occurs only in matrix-vector products, which can be interpreted as directional derivatives. If these are approximated by finite differences, matrix-free methods are obtained.

literature

- P. Deuflhard, A. Hohmann: Numerical Mathematics I. An algorithmic introduction. 3rd revised and expanded edition. de Gruyter, Berlin, New York 2002, ISBN 3-11-017182-1

- P. Deuflhard: Newton Methods for Nonlinear Problems. Affine Invariance and Adaptive Algorithms. , Springer, Berlin 2004, ISBN 3-540-21099-7 (Series: Springer Series in Computational Mathematics , Vol. 35)

- JM Ortega, WC Rheinboldt: Iterative Solution of Nonlinear Equations in Several Variables. , Society for Industrial & Applied Mathematics, 2000, ISBN 0-89871-461-3 ( Classics in Applied Mathematics series )

Individual evidence

-

↑ Xavier Gourdon : Newton's method and high order iterations , error-free representation in the Postscript file

-

↑ JH Hubbard, D. Schleicher, S. Sutherland : How to Find All Roots of Complex Polynomials by Newton's Method Preprint (2000), Inventiones Mathematicae vol. 146 (2001)

![{\ displaystyle I = {] a, b [}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/0b87023fb36a814cff30f5ba8d17d80e852fc0b9)

![(x _ {\ text {new}} - a) = (xa) - (xa) {\ frac {g (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} = (xa) \ left [1 - {\ frac {g (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} \ right]](https://wikimedia.org/api/rest_v1/media/math/render/svg/0edb3af9242a98e29c32a4f3d94546f15a71111d)

![{\ displaystyle {\ begin {aligned} (x _ {\ text {new}} - a) & = (xa) \ left [1 - {\ frac {k \ cdot g (x)} {k \ cdot g (x ) + (xa) \ cdot g '(x)}} \ right] \\ & = (xa) \ left [{\ frac {k \ cdot g (x) + (xa) \ cdot g' (x)} {k \ cdot g (x) + (xa) \ cdot g '(x)}} - {\ frac {k \ cdot g (x)} {k \ cdot g (x) + (xa) \ cdot g' (x)}} \ right] \\ & = (xa) {\ frac {(xa) \ cdot g '(x)} {k \ cdot g (x) + (xa) \ cdot g' (x)} } \\ & = (xa) ^ {2} {\ frac {g '(x)} {k \ cdot g (x) + (xa) \ cdot g' (x)}} \ end {aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/5f1c38c84e7d192079e376bbf7e046389222342a)

![{\ sqrt [{3}] {a}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/895424fc79dfd221f984d973ac95ca277bd0e60c)

![{\ displaystyle {\ sqrt [{3}] {x}} = - {\ sqrt [{3}] {- x}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d1b0364516c96bec2de010817fdbff921251457d)