Differential (math)

In analysis, a differential describes the linear part of the increment of a variable or a function and represents an infinitely small segment on the axis of a coordinate system. Historically, the concept was at the core of the development of calculus in the 17th and 18th centuries. From the 19th century onward, Augustin Louis Cauchy and Karl Weierstrass rebuilt analysis on a mathematically rigorous foundation based on the concept of the limit, and the concept of the differential lost its significance for elementary differential and integral calculus.

If there is a functional dependency $y = f(x)$ with a differentiable function $f$, then the fundamental relationship between the differential $dy$ of the dependent variable and the differential $dx$ of the independent variable is

$$dy = f'(x)\,dx,$$

where $f'(x)$ denotes the derivative of $f$ at the point $x$. Instead of $f'(x)$ one also writes $\frac{dy}{dx}$ or $\frac{df}{dx}(x)$. This relationship can be generalized to functions of several variables with the help of partial derivatives and then leads to the concept of the total differential.

Today differentials are used in various applications, with different meanings and with different degrees of mathematical rigor. The differentials appearing in standard notations such as $\int f(x)\,dx$ or $\frac{dy}{dx}$ are nowadays usually regarded as a mere part of the notation with no independent meaning.

A rigorous definition is provided by the theory of differential forms used in differential geometry, where differentials are interpreted as exact 1-forms. A different approach is provided by nonstandard analysis, which revives the historical concept of the infinitesimal number and makes it precise in the sense of modern mathematics.

Classification

In his "Lectures on Differential and Integral Calculus", first published in 1924, Richard Courant writes that the idea of the differential as an infinitely small quantity has no meaning, and that it is therefore useless to define the derivative as the quotient of two such quantities. He adds, however, that one can nevertheless try to define the expression $f'(x) = \frac{dy}{dx}$ as an actual quotient of two quantities $dy$ and $dx$. To do this, one first defines $f'(x)$ as usual as $\lim_{h \to 0} \frac{f(x+h) - f(x)}{h}$ and then, for fixed $x$, regards the increment $h$ as an independent variable, denoted $dx = h$. One then defines $dy = f'(x)\,h$, from which one tautologically obtains $f'(x) = \frac{dy}{dx}$.

In more modern terminology, one can understand the differential at $x$ as a linear map from the tangent space $T_x\mathbb{R} \cong \mathbb{R}$ into the real numbers. The "tangent vector" $h$ is assigned the real number $f'(x)\cdot h$, and this linear map is by definition the differential $df(x)$. Thus $df(x)(h) = f'(x)\,h$, and in particular $dx(h) = h$, from which the relationship $df(x) = f'(x)\,dx$ follows tautologically.

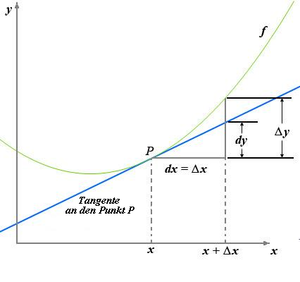

The differential as a linearized increment

If $f$ is a real function of a real variable, changing the argument from $x$ to $x + \Delta x$ changes the function value from $y = f(x)$ to $y + \Delta y = f(x + \Delta x)$. For the increment of the function value we have

$$\Delta y = f(x + \Delta x) - f(x).$$

For example, if $f$ is an (affine) linear function, i.e. $f(x) = mx + b$, then it follows that $\Delta y = m\,\Delta x$. This means that in this simple case the increment of the function value is directly proportional to the increment of the argument, and the ratio $\Delta y / \Delta x$ is just the constant slope $m$ of $f$.

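The situation is more complicated for functions whose slope is not constant. If $f$ is differentiable at the point $x$, then the slope there is given by the derivative $f'(x)$, which is defined as the limit of the difference quotient:

$$f'(x) = \lim_{\Delta x \to 0} \frac{\Delta y}{\Delta x} = \lim_{\Delta x \to 0} \frac{f(x + \Delta x) - f(x)}{\Delta x}.$$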

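Now consider, for $\Delta x \neq 0$, the difference between the difference quotient and the derivative,

$$\varepsilon(\Delta x) = \frac{\Delta y}{\Delta x} - f'(x).$$

For the increment of the function value it then follows that

$$\Delta y = f'(x)\,\Delta x + \varepsilon(\Delta x)\,\Delta x.$$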

In this representation, $\Delta y$ is split into a part $f'(x)\,\Delta x$ that depends linearly on $\Delta x$ and a remainder $\varepsilon(\Delta x)\,\Delta x$ that vanishes to higher than linear order, in the sense that $\lim_{\Delta x \to 0} \varepsilon(\Delta x) = 0$. The linear part of the increment generally gives a good approximation of $\Delta y$ for small values of $\Delta x$. It is called the differential of $y$ and is denoted by $dy$.
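A minimal numerical sketch of this decomposition follows. It assumes, for illustration only, the function $f(x) = x^3$ at $x = 2$, where $f'(2) = 12$. The names `f`, `f_prime` and `rows` are hypothetical. For each $\Delta x$ the sketch compares the actual increment $\Delta y$ with the differential $dy = f'(x)\,\Delta x$ and prints $\varepsilon(\Delta x)$:

```python
# Illustrative function (an assumption of this sketch): f(x) = x**3, f'(x) = 3*x**2.
def f(x):
    return x ** 3


def f_prime(x):
    return 3 * x ** 2


x0 = 2.0
rows = []
for dx in (1e-1, 1e-2, 1e-3):
    delta_y = f(x0 + dx) - f(x0)        # actual increment of the function value
    dy = f_prime(x0) * dx               # differential: the linear part f'(x) * dx
    eps = delta_y / dx - f_prime(x0)    # epsilon(dx) = difference quotient - derivative
    rows.append((dx, delta_y, dy, eps))
    print(f"dx={dx:g}  delta_y={delta_y:.9f}  dy={dy:.9f}  eps={eps:.6f}")
```

For this cubic one can check by hand that $\Delta y = 12\,\Delta x + 6\,\Delta x^2 + \Delta x^3$. Hence $\varepsilon(\Delta x) = 6\,\Delta x + \Delta x^2$ tends to $0$, and the remainder $\varepsilon(\Delta x)\,\Delta x$ shrinks like $\Delta x^2$.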

Definition

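Let $f\colon D \to \mathbb{R}$ be a function with domain $D \subseteq \mathbb{R}$. If $f$ is differentiable at the point $x_0 \in D$ and $h \in \mathbb{R}$, then

$$df(x_0, h) := f'(x_0)\,h$$

is called the differential of $f$ at the point $x_0$ for the argument increment $h$. Instead of $h$ one often writes $dx$. If $y = f(x)$, one also writes $dy$ instead of $df(x_0, h)$.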

For a fixed $x_0$, the differential $df(x_0, \cdot)$ is a linear function that assigns the value $f'(x_0)\,h$ to each argument $h \in \mathbb{R}$.

For example, for the identity function $f(x) = x$ we have $f'(x) = 1$ and therefore $df(x_0, h) = h$. In this example the differential is simply $dx = h$.

Higher order differentials

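If $f$ is $n$ times differentiable at the point $x_0$ ($n \in \mathbb{N}$) and $h \in \mathbb{R}$, then

$$d^nf(x_0, h) := f^{(n)}(x_0)\,h^n$$

is called the $n$-th order differential of $f$ at the point $x_0$ for the argument increment $h$. In this product, $f^{(n)}(x_0)$ is the $n$-th derivative of $f$ at $x_0$ and $h^n$ is the $n$-th power of the number $h$.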

Courant explains the meaning of this definition as follows. Suppose $h$ is held fixed, i.e. the same value $h = dx$ is chosen for different $x$. Then $dy = f'(x)\,dx$ is a function of $x$, from which one can again form the differential (see figure). The result is the second differential $d^2y = d(dy)$. It is obtained by replacing the term in brackets in $\Delta(dy) = [f'(x + h) - f'(x)]\,h$ (the increment of $f'$) with its linear part $f''(x)\,h$, which means that $d^2y = f''(x)\,h^2 = f''(x)\,dx^2$. The definition of higher-order differentials can be motivated analogously. Accordingly, for example, $d^3y = f'''(x)\,dx^3$ and in general $d^ny = f^{(n)}(x)\,dx^n$.

For a fixed $x_0$, the differential $d^nf(x_0, \cdot)$ is again a function, nonlinear for $n > 1$, that assigns the value $f^{(n)}(x_0)\,h^n$ to each argument $h \in \mathbb{R}$.
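Courant's reading of $d^2y$ can be checked numerically with a small sketch. It assumes, for illustration only, $y = \sin x$ at $x = 1$, so that $y' = \cos x$ and $y'' = -\sin x$. The helper names `increment_of_dy` and `second_differential` are hypothetical. With $dx = h$ held fixed, the ratio of the increment $\Delta(dy)$ to $d^2y = f''(x)\,h^2$ should approach 1 as $h$ shrinks:

```python
import math


def increment_of_dy(f_prime, x, h):
    """Delta(dy) = dy(x + h) - dy(x), where dy(x) = f'(x) * h and dx = h stays fixed."""
    return (f_prime(x + h) - f_prime(x)) * h   # the bracket is the increment of f'


def second_differential(f_second, x, h):
    """d^2 y = f''(x) * h**2: the bracket replaced by its linear part f''(x) * h."""
    return f_second(x) * h ** 2


def minus_sin(t):
    return -math.sin(t)


# Illustrative choice (an assumption): y = sin(x), y' = cos(x), y'' = -sin(x).
x0 = 1.0
ratios = []
for h in (1e-1, 1e-2, 1e-3):
    ratio = increment_of_dy(math.cos, x0, h) / second_differential(minus_sin, x0, h)
    ratios.append(ratio)
    print(f"h={h:g}  Delta(dy) / d2y = {ratio:.6f}")
```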

Calculation rules

Regardless of the definition used, the following calculation rules apply to differentials. In what follows, $x$ denotes the independent variable, $u$ and $v$ dependent variables or functions of $x$, and $c$ any real constant. The derivative of $u$ with respect to $x$ is written $u'$. The following calculation rules then result from the relationship

$$du = u'\,dx$$

and the differentiation rules. The rules below for calculating differentials of functions are to be understood in the sense that the functions obtained after inserting the arguments agree. The rule $d(uv) = v\,du + u\,dv$, for example, states that the identity $d(uv)(x) = v(x)\,du(x) + u(x)\,dv(x)$ holds at every $x$. By definition, this means that the equation $(uv)'(x)\,h = v(x)\,u'(x)\,h + u(x)\,v'(x)\,h$ holds for all real numbers $h$.

Constant and constant factor

- $dc = 0$ and $d(cu) = c\,du$

Addition and subtraction

- $d(u + v) = du + dv$ and $d(u - v) = du - dv$

Multiplication

- $d(uv) = v\,du + u\,dv$

Division

- $d\left(\frac{u}{v}\right) = \frac{v\,du - u\,dv}{v^2}$

Chain rule

- If $z$ depends on $y$ and $y$ depends on $x$, i.e. $z = g(y)$ and $y = f(x)$, then

- $dz = g'(y)\,dy = g'(f(x))\,f'(x)\,dx$.
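As a sanity check that is not part of the original text, the product, quotient and chain rules can be compared numerically. The sketch approximates each $f'(x)$ by a central difference, which is an assumption. Because of this approximation, both sides agree only up to a small numerical error. The functions $u = \sin$, $v(x) = x^2 + 1$, $g = \exp$ and the point $x = 0.7$ with $dx = 0.3$ are arbitrary illustrative choices:

```python
import math


def differential(f, x, dx, step=1e-6):
    """Approximate df = f'(x) * dx, using a central difference for f'(x)."""
    return (f(x + step) - f(x - step)) / (2 * step) * dx


# Hypothetical test functions and point (assumptions of this sketch).
u = math.sin
g = math.exp


def v(t):
    return t ** 2 + 1


x, dx = 0.7, 0.3
checks = {
    # d(uv) = v du + u dv
    "product": (differential(lambda t: u(t) * v(t), x, dx),
                v(x) * differential(u, x, dx) + u(x) * differential(v, x, dx)),
    # d(u/v) = (v du - u dv) / v^2
    "quotient": (differential(lambda t: u(t) / v(t), x, dx),
                 (v(x) * differential(u, x, dx) - u(x) * differential(v, x, dx)) / v(x) ** 2),
    # z = g(y), y = v(x): dz = g'(y) dy with dy = v'(x) dx
    "chain": (differential(lambda t: g(v(t)), x, dx),
              differential(g, v(x), differential(v, x, dx))),
}
for name, (lhs, rhs) in checks.items():
    print(f"{name:8s} lhs={lhs:.9f} rhs={rhs:.9f}")
```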

Examples

- For and applies or . It follows

- .

- For and applies and , thus

- .

Extensions and variants

Instead of $d$, the following symbols are also used to denote differentials:

- $\partial$ (introduced by Condorcet, Legendre and then Jacobi; it can be read as a d in old French cursive or as a variant of the italic Cyrillic d) denotes a partial differential.

- $\delta$ (the small Greek delta) denotes a virtual displacement, i.e. the variation $\delta\vec r$ of a position vector. It is therefore related to the partial differentials with respect to the individual spatial dimensions of the position vector.

- đ (a d with a stroke) denotes an inexact differential.

Total differential

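The total differential or complete differential of a differentiable function $f(x_1, \dots, x_n)$ of $n$ variables is defined by

$$df = \frac{\partial f}{\partial x_1}\,dx_1 + \dots + \frac{\partial f}{\partial x_n}\,dx_n = \sum_{i=1}^{n} \frac{\partial f}{\partial x_i}\,dx_i.$$

This can again be interpreted as the linear part of the increment. A change of the argument by $\Delta x = (\Delta x_1, \dots, \Delta x_n)$ causes a change of the function value by $\Delta f = f(x + \Delta x) - f(x)$, which can be decomposed as

$$\Delta f = \sum_{i=1}^{n} \frac{\partial f}{\partial x_i}(x)\,\Delta x_i + r(\Delta x).$$

Here the first summand is the scalar product of the two $n$-element vectors $\operatorname{grad} f(x)$ and $\Delta x$. The remainder $r(\Delta x)$ vanishes to higher order, that is, $\lim_{\Delta x \to 0} \frac{r(\Delta x)}{\|\Delta x\|} = 0$.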
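The following sketch illustrates this decomposition for two variables. It assumes the illustrative function $f(x, y) = x^2 y$ at the point $(1, 2)$, where $\operatorname{grad} f = (2xy, x^2) = (4, 1)$. The increment $\Delta x$ is scaled along the fixed unit direction $(0.6, -0.8)$, so its norm is $t$. The printed ratio $r(\Delta x)/\|\Delta x\|$ should tend to $0$:

```python
# Illustrative function (an assumption): f(x, y) = x**2 * y, grad f = (2xy, x**2).
def f(x, y):
    return x ** 2 * y


def grad_f(x, y):
    return (2 * x * y, x ** 2)


p = (1.0, 2.0)
gx, gy = grad_f(*p)
ratios = []
for t in (1e-1, 1e-2, 1e-3):
    dx, dy = 0.6 * t, -0.8 * t               # increment Delta x with norm t
    delta_f = f(p[0] + dx, p[1] + dy) - f(*p)
    df = gx * dx + gy * dy                   # total differential: grad f . Delta x
    ratios.append((delta_f - df) / t)        # r(Delta x) / |Delta x|
    print(f"|dx|={t:g}  delta_f={delta_f:.9f}  df={df:.9f}  r/|dx|={ratios[-1]:.2e}")
```

Here the remainder can also be computed by hand. With $\Delta x = (0.6t, -0.8t)$ one gets $r(\Delta x) = -0.24\,t^2 - 0.288\,t^3$, which is of higher than linear order in $t$.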

Virtual displacement

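A virtual displacement $\delta\vec r_i$ is a fictitious infinitesimal displacement of the $i$-th particle that is compatible with the constraints. The dependence on time is not considered. The desired virtual change arises from the total differential of the function $\vec r_i = \vec r_i(q_1, \dots, q_n, t)$ with time held fixed:

$$\delta\vec r_i = \sum_{j=1}^{n} \frac{\partial \vec r_i}{\partial q_j}\,\delta q_j.$$

In this way the notion of "instantaneous" is given a mathematical form.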

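The holonomic constraints $f_k(\vec r_1, \dots, \vec r_N, t) = 0$, $k = 1, \dots, s$, are satisfied by using so-called generalized coordinates $q_1, \dots, q_n$:

$$\vec r_i = \vec r_i(q_1, \dots, q_n, t), \quad i = 1, \dots, N.$$

The holonomic constraints are therefore eliminated explicitly by choosing the generalized coordinates appropriately and reducing their number accordingly.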

Stochastic Analysis

In stochastic analysis, differential notation is often used, for example to write stochastic differential equations. It is then always to be understood as shorthand for a corresponding equation of Itō integrals. Suppose, for example, that $H$ is a stochastic process that is Itō-integrable with respect to a Wiener process $W$. Then the equation

$$X_t = X_0 + \int_0^t H_s\,dW_s, \quad t \ge 0,$$

given for a process $X$, is written in differential form as $dX_t = H_t\,dW_t$. In the case of stochastic processes with non-vanishing quadratic variation, however, the calculation rules for differentials given above have to be modified according to Itō's lemma.
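The following sketch illustrates why the rules need modifying. It simulates one Wiener path on a grid; the step count, the seed and the name `ito_check` are arbitrary assumptions. It then compares $W_T^2$ with the Itō sum $\sum_k 2W_{t_k}\,\Delta W_k$, which approximates $\int_0^T 2W_t\,dW_t$. The naive rule $d(W^2) = 2W\,dW$ would predict a difference of $0$. Itō's lemma, $d(W_t^2) = 2W_t\,dW_t + dt$, predicts a difference close to $T$:

```python
import math
import random


def ito_check(T=1.0, n=100_000, seed=0):
    """Simulate W on [0, T] and return (W_T**2, Ito sum of 2 * W * dW)."""
    rng = random.Random(seed)
    dt = T / n
    w = 0.0
    ito_sum = 0.0
    for _ in range(n):
        dw = rng.gauss(0.0, math.sqrt(dt))   # Wiener increment ~ N(0, dt)
        ito_sum += 2.0 * w * dw              # integrand at the left endpoint (Ito convention)
        w += dw
    return w * w, ito_sum


w_sq, ito = ito_check()
gap = w_sq - ito
print(f"W_T^2 = {w_sq:.4f}, Ito sum = {ito:.4f}, difference = {gap:.4f}")
```

On the grid the difference equals exactly $\sum_k (\Delta W_k)^2$. This is a discrete version of the quadratic variation, and it is close to $T = 1$ for a fine grid.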

Today's approach: differentials as 1-forms

In today's terminology, the definition of the differential given above corresponds to the concept of an exact 1-form.

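Let $U$ be an open subset of $\mathbb{R}^n$. A 1-form, or Pfaffian form, $\omega$ assigns to each point $p \in U$ a linear form $\omega_p\colon T_pU \to \mathbb{R}$. Such linear forms are called cotangent vectors; they are elements of the dual space $T_p^*U$ of the tangent space $T_pU$. A Pfaffian form $\omega$ is therefore a map

$$\omega\colon U \to \bigcup_{p \in U} T_p^*U, \quad p \mapsto \omega_p \in T_p^*U.$$

The total differential, or exterior derivative, $df$ of a differentiable function $f\colon U \to \mathbb{R}$ is the Pfaffian form defined as follows. If $v \in T_pU$ is a tangent vector, then $df_p(v) := D_vf(p)$, i.e. $df_p(v)$ is the directional derivative of $f$ at $p$ in the direction $v$. So if $\gamma\colon (-\varepsilon, \varepsilon) \to U$ is a path with $\gamma(0) = p$ and $\gamma'(0) = v$, then

$$df_p(v) = \left.\frac{d}{dt}\right|_{t=0} f(\gamma(t)) = (f \circ \gamma)'(0).$$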

Using the gradient and the standard scalar product, the total differential of $f$ can be represented as

$$df_p(v) = \langle \operatorname{grad} f(p), v \rangle.$$

In particular, for $n = 1$ one obtains the differential $df_p(v) = f'(p)\,v$ of functions $f\colon U \subseteq \mathbb{R} \to \mathbb{R}$.
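The equality of the derivative along a curve and the gradient formula can be checked numerically. This sketch rests on illustrative assumptions: $f(x, y) = y \sin x$ on $U = \mathbb{R}^2$, a point $p$, a tangent vector $v$, and a deliberately curved path `gamma` with $\gamma(0) = p$ and $\gamma'(0) = v$. The derivative $(f \circ \gamma)'(0)$ is approximated by a central difference:

```python
import math


# Illustrative function on U = R^2 (an assumption): f(x, y) = sin(x) * y.
def f(p):
    x, y = p
    return math.sin(x) * y


def grad_f(p):
    x, y = p
    return (math.cos(x) * y, math.sin(x))


def gamma(t, p, v):
    """A curved path with gamma(0) = p and gamma'(0) = v; the t**2 terms bend it."""
    return (p[0] + t * v[0] + t ** 2, p[1] + t * v[1] - 3 * t ** 2)


p, v = (0.4, 1.5), (2.0, -1.0)
s = 1e-5
along_curve = (f(gamma(s, p, v)) - f(gamma(-s, p, v))) / (2 * s)   # (f o gamma)'(0)
gx, gy = grad_f(p)
via_gradient = gx * v[0] + gy * v[1]                                # <grad f(p), v>
print(along_curve, via_gradient)
```

The result does not depend on the quadratic terms of the path, only on $\gamma'(0) = v$. This is why $df_p$ is a well-defined linear form on $T_pU$.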

Differentials in integral calculus

Intuitive explanation

To calculate the area of the region enclosed by the graph of a function $f$, the $x$-axis and the two perpendicular straight lines $x = a$ and $x = b$, the region was divided into rectangles of width $dx$, which were made "infinitely narrow", and of height $f(x)$. The area of each rectangle is the "product"

$$f(x)\cdot dx,$$

and the total area is the sum

$$\sum f(x)\cdot dx,$$

where $dx$ is again a finite quantity corresponding to a subdivision of the interval $[a, b]$. For a more precise statement, see the mean value theorem of integral calculus. For a continuous function $f$ there is a value $\xi$ in the interval $[a, b]$ whose function value, multiplied by the sum $b - a$ of the finite values $dx$, gives the value of the integral:

$$\int_a^b f(x)\,dx = f(\xi)\,(b - a).$$

The interval of integration does not have to be divided into equal parts. The differentials at the different subdivision points can be chosen to be of different sizes, and the choice of subdivision often depends on the type of integration problem. A function value within each "differential" interval (or the maximum and minimum value within it, corresponding to the upper and lower sums) gives an area. One then passes to the limit by choosing ever finer subdivisions of $[a, b]$. The integral is thus a definition of the area bounded by a curve segment.
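The limit process described above can be sketched with Riemann sums over deliberately unequal subdivisions. The sketch assumes $f = \sin$ on $[0, \pi]$, random subdivision points and random tag points $\xi_k$. These are all illustrative choices, and `riemann_sum` and `random_partition` are hypothetical helpers. The result is compared with $F(b) - F(a) = 2$ for the antiderivative $F = -\cos$:

```python
import math
import random


def random_partition(a, b, n, rng):
    """Subdivide [a, b] into n pieces of unequal width using n - 1 random interior points."""
    inner = sorted(rng.uniform(a, b) for _ in range(n - 1))
    return [a] + inner + [b]


def riemann_sum(f, points, rng):
    """Sum of f(xi_k) * dx_k, with a random tag point xi_k in each subinterval."""
    total = 0.0
    for left, right in zip(points, points[1:]):
        xi = rng.uniform(left, right)        # any point of the "differential" interval
        total += f(xi) * (right - left)      # rectangle of height f(xi) and width dx_k
    return total


rng = random.Random(1)                       # fixed seed: arbitrary, for reproducibility
a, b = 0.0, math.pi
exact = -math.cos(b) + math.cos(a)           # F(b) - F(a) with F = -cos, equals 2
errors = []
for n in (10, 100, 10_000):
    s = riemann_sum(math.sin, random_partition(a, b, n, rng), rng)
    errors.append(abs(s - exact))
    print(f"n={n:>6}  sum={s:.6f}  error={errors[-1]:.2e}")
```

Because $|\sin'| \le 1$, the error of each sum is at most $\sum_k (\Delta x_k)^2$. The error therefore shrinks as the subdivision is refined, regardless of where the tag points are chosen.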

Formal explanation

Let $f\colon [a, b] \to \mathbb{R}$ be an integrable function with an antiderivative $F$. The differential

$$dF = f(x)\,dx$$

is a 1-form, which can be integrated according to the rules for integrating differential forms. The result of the integration over the interval $[a, b]$ is exactly the Lebesgue integral

$$\int_{[a,b]} f\,d\lambda = F(b) - F(a).$$

Historical

Gottfried Wilhelm Leibniz first used the integral sign in 1675 in a manuscript, the treatise Analysis tetragonistica; at first he did not yet write the differential after it. On November 11, 1675, Leibniz wrote an essay with the title "Examples of the inverse method of tangents". Here the integral sign appears for the first time together with the differential, as does the notation dx in place of x/d.

In the modern version of this approach to integral calculus, due to Bernhard Riemann, the "integral" is the limit of the area of finitely many rectangles of finite width for ever finer subdivisions of the "area".

Hence the first symbol in the integral is a stylized S for "summa". Leibniz wrote on October 29, 1675, in an investigation in which he used Cavalieri's totalities: "Utile erit scribi ∫ pro omn. (it will be useful to write ∫ instead of omn.), so that ∫ l denotes the sum of all the l ... Here a new kind of calculus appears; if, on the other hand, ∫ l = ya is given, there is an opposite calculus with the notation l = ya/d. Namely, just as ∫ increases the dimensions, d decreases them. But ∫ means the sum, d the difference." In the later manuscript of November 11, 1675, he changes from the notation x/d to dx and notes in a footnote "dx is equal to x/d"; a formula in the new notation also appears in the same calculation. "Omn." stands for "omnia l" (all the l) and comes from the geometrically oriented method of area calculation of Bonaventura Cavalieri. Leibniz's corresponding printed publication is De geometria recondita of 1686. With this notation Leibniz tried "to make the calculation calculatively simple and inevitable."

Blaise Pascal's reflections on the quarter circle (Quarts de cercle)

When Leibniz was in Paris as a young man in 1673, he received a decisive stimulus from an argument of Pascal in his 1659 treatise Traité des sinus du quart de cercle (Treatise on the sines of a quarter circle). Leibniz says he saw a light in it that the author himself had not noticed. The argument is as follows (written in modern terminology, see figure):

Consider the static moment

$$M_x = \int y\,ds$$

of the quarter-circle arc with respect to the x-axis. Pascal starts from the similarity of the triangles with sides

$$ds,\quad dx,\quad dy$$

and

$$a,\quad y,\quad x.$$

From this similarity he concludes that their side ratios are equal,

$$\frac{ds}{dx} = \frac{a}{y},$$

and thus

$$y\,ds = a\,dx,$$

so that

$$M_x = \int_0^{\pi a/2} y\,ds = a \int_0^a dx = a^2$$

holds. Leibniz now noticed that this procedure is not restricted to the circle but applies in general to every (smooth) curve, provided that the circle radius a is replaced by the length of the curve normal (the segment of the normal between the curve and the x-axis; for the circle it coincides with the radius). This was the "light" that he saw. The infinitesimal triangle

$$ds,\quad dx,\quad dy$$

is the characteristic triangle; it can also be found in Isaac Barrow's work on the determination of tangents. It is remarkable that Leibniz's later symbolism of the differential calculus (dx, dy, ds) corresponds precisely to the standpoint of this "improved concept of individuality".
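Pascal's computation can be replayed numerically in a short sketch. The quarter arc is split into many small pieces, $y\,ds$ is summed, and the result is compared with $a \sum dx = a^2$. The radius $a = 3$, the number of pieces and the helper name are assumptions made for illustration:

```python
import math


def quarter_arc_sums(a, n=100_000):
    """Return (sum of y*ds, a * sum of dx) over the quarter circle x = a cos(phi), y = a sin(phi)."""
    dphi = (math.pi / 2) / n
    moment = 0.0
    a_times_dx = 0.0
    for k in range(n):
        phi = (k + 0.5) * dphi                 # midpoint of the k-th arc piece
        y = a * math.sin(phi)                  # ordinate
        ds = a * dphi                          # length of the arc piece
        dx = a * math.sin(phi) * dphi          # |dx| = a sin(phi) dphi
        moment += y * ds                       # piece by piece, y*ds equals a*dx (Pascal)
        a_times_dx += a * dx
    return moment, a_times_dx


a = 3.0
moment, a_times_dx = quarter_arc_sums(a)
print(moment, a_times_dx, a * a)
```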

Similarity

Consider the triangles formed by a piece of the tangent together with the pieces dx and dy parallel to the x- and y-axes. All of them are similar to the triangle formed by the radius of curvature a, the subnormal and the ordinate y. They keep their proportions, which are determined by the slope of the tangent to the circle of curvature at this point, even when the passage to the limit is made. The ratio dy/dx is exactly the slope of the tangent. Therefore, for each circle of curvature at a point of the curve, its characteristic proportions in the coordinate system can be transferred to the differentials there, especially if these are understood as infinitesimal quantities.

Nova methodus 1684

A new method for maxima and minima, as well as tangents, which is not hindered by fractional or irrational quantities, and a singular kind of calculus for them. (Leibniz (GGL), Acta Eruditorum 1684)

Leibniz explains his method very briefly, on four pages. He chooses an arbitrary fixed differential of the independent variable (here dx, see figure). He then gives the calculation rules for the differentials, as below, and describes how they are formed.

Then he gives the chain rule:

- "So it happens that one can write down the differential equation for every given equation. This is done by simply substituting, for each term, the differential of that term (i.e. of every component that contributes to the formation of the equation through mere addition or subtraction). For any other quantity (which is not itself a term but contributes to forming a term), one uses its differential to form the differential of the term itself, and not arbitrarily, but according to the algorithm prescribed above."

From today's point of view, this is unusual because he treats independent and dependent differentials equally and individually. He does not, as is ultimately required, work with the differential quotient of dependent and independent quantities. Conversely, once he has given a solution, the differential quotient can be formed. His method covers the full range of rational functions. There follow a formal, complicated example; a dioptric example dealing with the refraction of light (a minimum problem); an easily solvable geometric example with intricate distance relationships; and an example dealing with the logarithm.

Further connections emerge historically from earlier and later works on the topic, some of which exist only in manuscript form or in letters and were never published. In Nova methodus 1684, for example, it is not stated that dx = const and ddx = 0 hold for the independent variable. In further contributions he extends the topic to "roots" and to the quadrature of infinite series.

Leibniz describes the relationship between the infinitely small and the known differential (i.e. a quantity):

- "It is also clear that our method masters the transcendental curves, which cannot be reduced to algebraic calculation or are of no particular degree. This holds quite generally, without any special prerequisites that are not always applicable. One only has to state once and for all that finding a tangent amounts to drawing a straight line connecting two curve points at an infinitely small distance, or an extended side of the infinite-sided polygon, which for us is synonymous with the curve. But that infinitely small distance can always be expressed by some known differential, such as dv, or by a relation to it, i.e. by a certain known tangent."

As an example of a transcendental curve, he uses the cycloid.

As an appendix in 1684, he explains the solution to a problem that Florimond de Beaune had posed to Descartes, who did not solve it. The problem asks for a function (w, the line WW in Table XII) whose tangent (WC) always intersects the x-axis in a particular way. The segment between this intersection point and the foot of the ordinate at the associated abscissa x must always have the same length, which he calls a here. He always chooses dx equal to a constant b. He compares this proportionality with the arithmetic and the geometric series and obtains the logarithms as the abscissas and the numbers as the ordinates: "The ordinates w are proportional to the dw, their increments or differences, ..." He gives the logarithmic function as the solution: "... if the w are the numbers, then the x are the logarithms": w = (a/b) dw, or w dx = a dw. This is satisfied by

$$w = C\,e^{x/a}$$

or, equivalently,

$$x = a \ln \frac{w}{C}$$

for a positive constant C.
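Leibniz's comparison of an arithmetic with a geometric progression can be imitated in a short sketch; this is a modern illustration, not Leibniz's own procedure. The sketch uses the hypothetical values $a = 2$ and a constant step $dx = b = 0.001$. At each step, the rule $w\,dx = a\,dw$ multiplies $w$ by the fixed factor $1 + b/a$ while $x$ grows by $b$, so $x$ behaves like $a \ln w$:

```python
import math

# Hypothetical values: constant segment a and constant step dx = b.
a, b = 2.0, 0.001
steps = 2000

w, x = 1.0, 0.0                  # start the ordinate at w = 1 (so C = 1) and x at 0
for _ in range(steps):
    dw = w * b / a               # from w * dx = a * dw with dx = b
    w += dw                      # w is multiplied by (1 + b/a): geometric progression
    x += b                       # x increases by b: arithmetic progression

print(f"x = {x:.4f}, a*ln(w) = {a * math.log(w):.4f}")
```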

Cauchy's concept of the differential

In the 1980s a dispute took place in Germany over the extent to which Cauchy's foundations of analysis were logically sound. Detlef Laugwitz tried, through a historical reading of Cauchy, to make the notion of infinitely small quantities fruitful for his own numbers, but in doing so he found discrepancies in Cauchy. Detlef Spalt corrected this (first!) historical reading of Cauchy's work. He called for proving Cauchy's theorems with the concepts of Cauchy's own time rather than today's. He concluded that Cauchy's foundations of analysis are logically sound, although questions about the treatment of infinitely small quantities remain open.

In Cauchy, the differentials dx are finite and constant (dx = h, with h finite). The value of the constant is not specified.

In Cauchy, Δx = i is infinitely small and variable.

The relationship between i and h is i = αh, where h is finite and α is infinitesimal (infinitely small).

Their geometric ratio is determined as

$$\frac{\Delta y}{\Delta x} = \frac{f(x + i) - f(x)}{i} = \frac{f(x + \alpha h) - f(x)}{\alpha h}.$$

Cauchy can transfer this ratio of infinitely small quantities to finite quantities. More precisely, what he transfers is a quotient: the limit of the geometric difference ratios of dependent number quantities.

Differentials are finite number quantities. Their geometric ratios are strictly equal to the limits of the geometric ratios formed from the infinitely small increments of the given independent variable or of the variables of the functions. Cauchy considers it important to regard differentials as finite quantities.

The calculator uses the infinitely small as intermediaries that must lead him to knowledge of the relationship existing between the finite quantities. In Cauchy's opinion, infinitely small quantities must never be admitted into the final equations, where their presence would be pointless and useless. In addition, if one regarded the differentials consistently as very small quantities, one would give up an advantage: one of the differentials of several variables can be taken as the unit. To form a clear idea of any number, it is important to relate it to the unit of its kind. So it is important to choose a unit among the differentials.

In particular, Cauchy has no difficulty in defining higher differentials. After obtaining the calculation rules for differentials by passing to the limit, Cauchy sets $dy = f'(x)\,dx$. Since the differential of a function of the variable is again a function of this variable, he can differentiate several times and in this way obtains the differentials of different orders.

- ...

Remarks

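- ↑ If you divide by $uv$, the symmetry of plus versus minus (compared with the division rule) becomes clearer: $\frac{d(uv)}{uv} = \frac{du}{u} + \frac{dv}{v}$

- ↑ If you divide by $\frac{u}{v}$, the symmetry of minus versus plus (compared with the multiplication rule) becomes clearer: $\frac{d(u/v)}{u/v} = \frac{du}{u} - \frac{dv}{v}$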

- ↑ Graphic panel XII, center left

- ↑ Graphic panel XII lower left

See also

Literature

- Gottfried Leibniz, Sir Isaac Newton: On the analysis of the infinite - treatise on the quadrature of the curves . Ostwald's Classics of Exact Sciences, Volume 162, Verlag Harri Deutsch, ISBN 3-8171-3162-3

- Oskar Becker: Fundamentals of Mathematics . Suhrkamp Verlag, ISBN 3-518-07714-7

- Detlef Spalt: Reason in the Cauchy Myth. Verlag Harri Deutsch, ISBN 3-8171-1480-X (Spalt questions the transfer of modern concepts to earlier analysis, states that Cauchy's construction of analysis is logically flawless, discusses neighboring concepts, and has Cauchy hold virtual discussions about their conceptual precision with much younger mathematicians, e.g. Abel.)

- K. Popp, E. Stein (ed.): Gottfried Wilhelm Leibniz, philosopher, mathematician, physicist, technician . Schlütersche GmbH & Co. KG, publishing house and printer, Hanover 2000, ISBN 3-87706-609-7

- Henk Bos: Differentials, Higher-Order Differentials and the Derivative in the Leibnizian Calculus. Archive for History of Exact Sciences 14, 1974, pp. 1-90. The much-discussed publication from the 1970s on continuum and infinity.

- Richard Courant: Lectures on Differential and Integral Calculus. Springer, 1971

- Joos/Kaluza: Higher Mathematics for the Practitioner, in older editions, e.g. 1942, Johann Ambrosius Barth.

- Duden arithmetic and mathematics , Dudenverlag 1989

References

- ^ Jürgen Schmidt: Basic knowledge of mathematics. Springer-Verlag, 2014, ISBN 978-3-662-43545-8, p. 307.

- ↑ limited preview in the Google book search

- ^ Herbert Dallmann, Karl-Heinz Elster: Introduction to higher mathematics. Volume 1. 3rd edition. Gustav Fischer Verlag, Jena 1991, ISBN 3-334-00409-0 , p. 370.

- ^ Herbert Dallmann, Karl-Heinz Elster: Introduction to higher mathematics. Volume 1. 3rd edition. Gustav Fischer Verlag, Jena 1991, ISBN 3-334-00409-0 , p. 371.

- ^ Herbert Dallmann, Karl-Heinz Elster: Introduction to higher mathematics. Volume 1. 3rd edition. Gustav Fischer Verlag, Jena 1991, ISBN 3-334-00409-0 , p. 381.

- ↑ Courant, op. cit., p. 107.

- ↑ Kowalewski's remarks on On the Analysis of the Infinite by Leibniz.

- ^ Karlson: Vom Zauber der Zahlen. Ullstein, 1954, p. 574

- ↑ K. Popp, E. Stein, Gottfried Wilhelm Leibniz. Philosopher, mathematician, physicist, technician. Schlütersche, Hannover 2000, ISBN 3-87706-609-7 , p. 50

- ↑ Thomas Sonar: 3000 Years of Analysis: History - Cultures - People. 2nd edition. Springer-Verlag, 2016, ISBN 978-3-662-48917-8, p. 408.

- ↑ limited preview in the Google book search

- ↑ French text; Fig. 29 is at the end of the book.

- ↑ At constant density, the mass element coincides with the arc element ds at this point, and the static moment is accordingly ∫ y ds.

- ↑ πa/2 is the limit for the independent variable s; a is the correspondingly converted limit for the "parameter" x. The figure also clearly shows that traversing the quarter arc covers one radius length on the x-axis, and vice versa.

- ↑ Barrow. In: Heinrich August Pierer, Julius Löbe (eds.): Universal Lexicon of the Present and the Past. 4th edition. Vol. 2. Altenburg 1857, pp. 349-350 (zeno.org).

- ↑ Oskar Becker, Fundamentals of Mathematics , Suhrkamp

- ↑ Reinhard Finster, Gerd van den Heuvel: Gottfried Wilhelm Leibniz, monograph, Rowohlt

- ↑ a b On the Analysis of the Infinite, German translation by G. Kowalewski, Ostwald's Classics of Exact Natural Sciences, p. 7

- ↑ Detlef Spalt: Reason in the Cauchy Myth. Verlag Harri Deutsch, ISBN 3-8171-1480-X (on problems of modern terminology, on whether Cauchy understood it or not, and on a few other topics, including virtual discussions with the deceased mathematician Abel, etc.)
