This article covers the multiplication of two vectors, the result of which is a scalar. For the multiplication of vectors by scalars, the result of which is a vector, see scalar multiplication.

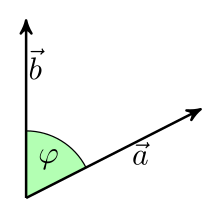

The scalar product of two vectors in Euclidean space depends on the lengths of the vectors and the enclosed angle.

The scalar product (also inner product or dot product) is a mathematical operation that assigns a number (scalar) to two vectors. It is a subject of analytic geometry and linear algebra. Historically, it was first introduced in Euclidean space. Geometrically, the scalar product of two vectors $\vec a$ and $\vec b$ is calculated according to the formula

$$\vec a \cdot \vec b = |\vec a|\,|\vec b|\,\cos\angle(\vec a, \vec b).$$

Here $|\vec a|$ and $|\vec b|$ denote the lengths (magnitudes) of the vectors, and $\cos\angle(\vec a, \vec b) = \cos\varphi$ is the cosine of the angle $\varphi$ enclosed by the two vectors. The scalar product of two vectors of given lengths is therefore zero if they are perpendicular to one another and maximal if they have the same direction.

In a Cartesian coordinate system, the scalar product of two vectors $\vec a = (a_1, a_2, a_3)$ and $\vec b = (b_1, b_2, b_3)$ is calculated as

$$\vec a \cdot \vec b = a_1 b_1 + a_2 b_2 + a_3 b_3.$$

If you know the Cartesian coordinates of the vectors, you can use this formula to calculate the scalar product and then use the formula from the previous paragraph to calculate the angle between the two vectors by solving it for $\varphi$:

$$\varphi = \arccos \frac{\vec a \cdot \vec b}{|\vec a|\,|\vec b|}.$$

In linear algebra, this concept is generalized. A scalar product is a function that assigns a scalar to two elements of a real or complex vector space, more precisely a (positive definite) Hermitian sesquilinear form, or more specifically in real vector spaces a (positive definite) symmetric bilinear form. In general, a vector space does not come with a scalar product defined from the start. A space together with a scalar product is called an inner product space or a pre-Hilbert space. These vector spaces generalize Euclidean space and thus enable the application of geometric methods to abstract structures.

In Euclidean space

Geometric definition and notation

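Vectors in three-dimensional Euclidean space or in the two-dimensional Euclidean plane can be represented as arrows. Arrows that are parallel, equally long, and equally oriented represent the same vector. The dot product $\vec a \cdot \vec b$ of two vectors $\vec a$ and $\vec b$ is a scalar, that is, a real number. Geometrically, it can be defined as follows:

If $|\vec a|$ and $|\vec b|$ denote the lengths of the vectors $\vec a$ and $\vec b$, and $\varphi = \angle(\vec a, \vec b)$ denotes the angle enclosed by $\vec a$ and $\vec b$, then

$$\vec a \cdot \vec b = |\vec a|\,|\vec b|\,\cos\varphi.$$

As with ordinary multiplication (but less often than there), the multiplication sign is sometimes left out when it is clear what is meant:

$$\vec a\,\vec b = \vec a \cdot \vec b.$$

In this case one occasionally writes $\vec a^{\,2}$ instead of $\vec a \cdot \vec a$.

Other common notations are $\vec a \circ \vec b$, $\vec a \bullet \vec b$ and $\langle \vec a, \vec b \rangle$.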

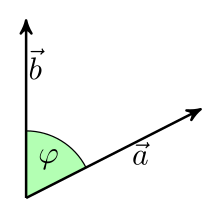

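Illustration

To illustrate the definition, consider the orthogonal projection $\vec b_{\vec a}$ of the vector $\vec b$ onto the direction given by $\vec a$, and set

$$b_a = \begin{cases} |\vec b_{\vec a}| & \text{if } \vec b_{\vec a} \text{ and } \vec a \text{ have the same orientation,} \\ -|\vec b_{\vec a}| & \text{if they have opposite orientation.} \end{cases}$$

Then $b_a = |\vec b| \cos\angle(\vec a, \vec b)$, and for the scalar product of $\vec a$ and $\vec b$ we have:

$$\vec a \cdot \vec b = |\vec a|\, b_a.$$

This relationship is sometimes used to define the scalar product.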

Examples

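In all three examples, $|\vec a| = 5$ and $|\vec b| = 3$. The scalar products result from the special cosine values $\cos 0^\circ = 1$, $\cos 60^\circ = \tfrac12$ and $\cos 90^\circ = 0$:

$$5 \cdot 3 \cdot 1 = 15, \qquad 5 \cdot 3 \cdot \tfrac12 = 7.5, \qquad 5 \cdot 3 \cdot 0 = 0.$$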

In Cartesian coordinates

If you introduce Cartesian coordinates in the Euclidean plane or in Euclidean space, each vector has a coordinate representation as a 2- or 3-tuple, which is usually written as a column.

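In the Euclidean plane, the scalar product of the vectors

$$\vec a = \begin{pmatrix} a_1 \\ a_2 \end{pmatrix} \quad\text{and}\quad \vec b = \begin{pmatrix} b_1 \\ b_2 \end{pmatrix}$$

then has the representation

$$\vec a \cdot \vec b = a_1 b_1 + a_2 b_2.$$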

Canonical unit vectors in the Euclidean plane

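For the canonical unit vectors $\vec e_1 = \begin{pmatrix} 1 \\ 0 \end{pmatrix}$ and $\vec e_2 = \begin{pmatrix} 0 \\ 1 \end{pmatrix}$ the following applies:

$$\vec e_1 \cdot \vec e_1 = \vec e_2 \cdot \vec e_2 = 1 \quad\text{and}\quad \vec e_1 \cdot \vec e_2 = \vec e_2 \cdot \vec e_1 = 0.$$

It follows from this (anticipating the properties of the scalar product explained below):

$$\vec a \cdot \vec b = (a_1 \vec e_1 + a_2 \vec e_2) \cdot (b_1 \vec e_1 + b_2 \vec e_2) = a_1 b_1\, \vec e_1 \cdot \vec e_1 + a_1 b_2\, \vec e_1 \cdot \vec e_2 + a_2 b_1\, \vec e_2 \cdot \vec e_1 + a_2 b_2\, \vec e_2 \cdot \vec e_2 = a_1 b_1 + a_2 b_2.$$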

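In three-dimensional Euclidean space one obtains correspondingly for the vectors

$$\vec a = \begin{pmatrix} a_1 \\ a_2 \\ a_3 \end{pmatrix} \quad\text{and}\quad \vec b = \begin{pmatrix} b_1 \\ b_2 \\ b_3 \end{pmatrix}$$

the representation

$$\vec a \cdot \vec b = a_1 b_1 + a_2 b_2 + a_3 b_3.$$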

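For example, the scalar product of the two vectors

$$\vec a = \begin{pmatrix} 1 \\ 2 \\ 3 \end{pmatrix} \quad\text{and}\quad \vec b = \begin{pmatrix} -7 \\ 8 \\ 9 \end{pmatrix}$$

is calculated as follows:

$$\vec a \cdot \vec b = 1 \cdot (-7) + 2 \cdot 8 + 3 \cdot 9 = 36.$$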
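
The coordinate formula can be sketched in a few lines of Python. This is only an illustration: the helper name `dot` and the sample vectors $(1, 2, 3)$ and $(-7, 8, 9)$ are assumptions made for this example.

```python
def dot(a, b):
    """Scalar product of two coordinate vectors of equal dimension."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    # Multiply matching coordinates and add up the products.
    return sum(x * y for x, y in zip(a, b))


a = (1, 2, 3)
b = (-7, 8, 9)
result = dot(a, b)  # 1*(-7) + 2*8 + 3*9
print(result)
```

The same function works for vectors of any dimension, since it only relies on pairing up the coordinates.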

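Properties

From the geometric definition it follows directly:

- If $\vec a$ and $\vec b$ are parallel and equally oriented ($\varphi = 0^\circ$), then $\vec a \cdot \vec b = |\vec a|\,|\vec b|$.

- In particular, the scalar product of a vector with itself is the square of its length: $\vec a \cdot \vec a = |\vec a|^2$.

- If $\vec a$ and $\vec b$ are parallel and oppositely oriented ($\varphi = 180^\circ$), then $\vec a \cdot \vec b = -|\vec a|\,|\vec b|$.

- If $\vec a$ and $\vec b$ are orthogonal ($\varphi = 90^\circ$), then $\vec a \cdot \vec b = 0$.

- If $\varphi$ is an acute angle, then $\vec a \cdot \vec b > 0$.

- If $\varphi$ is an obtuse angle, then $\vec a \cdot \vec b < 0$.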

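As a function that assigns the real number $\vec a \cdot \vec b$ to every ordered pair $(\vec a, \vec b)$ of vectors, the scalar product has the following properties that one expects from a multiplication:

- It is symmetric (commutative law): $\vec a \cdot \vec b = \vec b \cdot \vec a$ for all vectors $\vec a$ and $\vec b$.

- It is homogeneous in every argument (mixed associative law): $(r \vec a) \cdot \vec b = r\,(\vec a \cdot \vec b) = \vec a \cdot (r \vec b)$ for all vectors $\vec a$ and $\vec b$ and all scalars $r \in \mathbb{R}$.

- It is additive in every argument (distributive law): $\vec a \cdot (\vec b + \vec c) = \vec a \cdot \vec b + \vec a \cdot \vec c$ and $(\vec a + \vec b) \cdot \vec c = \vec a \cdot \vec c + \vec b \cdot \vec c$ for all vectors $\vec a$, $\vec b$ and $\vec c$.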

Properties 2 and 3 are also summarized by saying that the scalar product is bilinear.
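
The three properties can be spot-checked numerically. The following sketch assumes the standard coordinate formula for the scalar product; the helper names `dot`, `add`, `scale` and the sample values are chosen for this illustration only. A check on sample vectors is of course not a proof.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def scale(r, a):
    return tuple(r * x for x in a)


# Integer sample data, so all comparisons below are exact.
a, b, c, r = (1, 2, 3), (-7, 8, 9), (4, 0, -2), 5

# Property 1: symmetry.
symmetric = dot(a, b) == dot(b, a)
# Property 2: homogeneity in each argument.
homogeneous = dot(scale(r, a), b) == r * dot(a, b) == dot(a, scale(r, b))
# Property 3: additivity in each argument.
additive = (dot(a, add(b, c)) == dot(a, b) + dot(a, c)
            and dot(add(a, b), c) == dot(a, c) + dot(b, c))

print(symmetric, homogeneous, additive)
```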

The designation "mixed associative law" for the second property makes it clear that a scalar and two vectors are combined in such a way that the brackets can be moved as in the associative law. Since the scalar product is not an internal operation (its result is not a vector), a scalar product of three vectors is not defined, so the question of genuine associativity does not arise. In the expression $(\vec a \cdot \vec b)\,\vec c$, only the first multiplication is a scalar product of two vectors; the second is the product of a scalar with a vector (scalar multiplication). The expression represents a vector, a multiple of the vector $\vec c$. On the other hand, the expression $\vec a\,(\vec b \cdot \vec c)$ represents a multiple of $\vec a$. In general, then,

$$(\vec a \cdot \vec b)\,\vec c \neq \vec a\,(\vec b \cdot \vec c).$$
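
A small counterexample shows that the brackets cannot be moved when three vectors are involved. The vectors and helper names below are assumptions made for this sketch.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def scale(r, a):
    return tuple(r * x for x in a)


a, b, c = (1, 0), (1, 1), (0, 1)

left = scale(dot(a, b), c)   # (a . b) c is a multiple of c
right = scale(dot(b, c), a)  # a (b . c) is a multiple of a

print(left, right)
```

Here both scalar factors equal 1, yet the two results point in different directions because one is a multiple of `c` and the other a multiple of `a`.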

Neither the geometric definition nor the definition in Cartesian coordinates is arbitrary. Both follow from the geometrically motivated requirement that the scalar product of a vector with itself is the square of its length, and the algebraically motivated requirement that the scalar product fulfills the above properties 1-3.

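Length of vectors and enclosed angle

With the help of the scalar product it is possible to calculate the length (magnitude) of a vector from its coordinate representation:

For a vector $\vec a = \begin{pmatrix} a_1 \\ a_2 \end{pmatrix}$ of two-dimensional space,

$$|\vec a| = \sqrt{\vec a \cdot \vec a} = \sqrt{a_1^2 + a_2^2}.$$

You can recognize the Pythagorean theorem here. The same applies in three-dimensional space:

$$|\vec a| = \sqrt{\vec a \cdot \vec a} = \sqrt{a_1^2 + a_2^2 + a_3^2}.$$

By combining the geometric definition with the coordinate representation, the angle enclosed by two vectors can be calculated from their coordinates. From

$$\vec a \cdot \vec b = |\vec a|\,|\vec b|\,\cos\varphi$$

follows

$$\cos\varphi = \frac{\vec a \cdot \vec b}{|\vec a|\,|\vec b|}.$$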

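The lengths of the two vectors

$$\vec a = \begin{pmatrix} 1 \\ 2 \\ 3 \end{pmatrix} \quad\text{and}\quad \vec b = \begin{pmatrix} -7 \\ 8 \\ 9 \end{pmatrix}$$

thus amount to

$$|\vec a| = \sqrt{1^2 + 2^2 + 3^2} = \sqrt{14} \quad\text{and}\quad |\vec b| = \sqrt{(-7)^2 + 8^2 + 9^2} = \sqrt{194}.$$

With $\vec a \cdot \vec b = 1 \cdot (-7) + 2 \cdot 8 + 3 \cdot 9 = 36$, the cosine of the angle enclosed by the two vectors is calculated as

$$\cos\varphi = \frac{36}{\sqrt{14}\,\sqrt{194}} \approx 0.69.$$

So $\varphi \approx 46^\circ$.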
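
The length and angle formulas translate directly into code. The following is a minimal sketch using only the Python standard library; the function names `norm` and `angle` and the sample vectors $(1, 2, 3)$ and $(-7, 8, 9)$ are assumptions of this example.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Length of a vector: square root of its scalar product with itself."""
    return math.sqrt(dot(a, a))

def angle(a, b):
    """Enclosed angle in radians; undefined (division by zero) for a zero vector."""
    cos_phi = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] so rounding errors cannot push acos out of its domain.
    cos_phi = max(-1.0, min(1.0, cos_phi))
    return math.acos(cos_phi)


phi_deg = math.degrees(angle((1, 2, 3), (-7, 8, 9)))
print(round(phi_deg, 1))
```

The clamping step is a defensive choice for floating-point input; mathematically the quotient always lies between -1 and 1.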

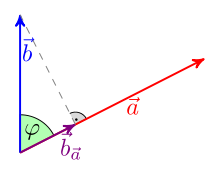

Orthogonality and Orthogonal Projection

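Two vectors $\vec a$ and $\vec b$ are orthogonal if and only if their scalar product is zero, that is,

$$\vec a \perp \vec b \iff \vec a \cdot \vec b = 0.$$

The orthogonal projection of $\vec b$ onto the direction given by the vector $\vec a \neq \vec 0$ is the vector $\vec b_{\vec a} = k\,\vec a$ with

$$k = \frac{\vec b \cdot \vec a}{\vec a \cdot \vec a} = \frac{\vec b \cdot \vec a}{|\vec a|^2},$$

so

$$\vec b_{\vec a} = \frac{\vec b \cdot \vec a}{|\vec a|^2}\,\vec a.$$

The projection is the vector whose end point is the foot of the perpendicular dropped from the end point of $\vec b$ onto the straight line through the origin determined by $\vec a$. The vector $\vec b - \vec b_{\vec a}$ is perpendicular to $\vec a$.

If $\vec a$ is a unit vector (i.e., $|\vec a| = 1$), the formula simplifies to

$$\vec b_{\vec a} = (\vec b \cdot \vec a)\,\vec a.$$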
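
As a hedged sketch, the orthogonal projection of a vector `b` onto the direction of a nonzero vector `a` can be computed as follows. The function name `project` and the sample vectors are assumptions made for this illustration.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def project(b, a):
    """Orthogonal projection of b onto the direction given by a (a must be nonzero)."""
    k = dot(b, a) / dot(a, a)
    return tuple(k * x for x in a)


b = (3, 4)
a = (2, 0)
b_a = project(b, a)
# The remainder b - b_a should be perpendicular to a.
remainder = tuple(x - y for x, y in zip(b, b_a))
print(b_a, remainder, dot(remainder, a))
```

Checking that the remainder has scalar product zero with `a` is a quick way to validate a projection routine.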

Relation to the cross product

Another way of multiplying two vectors $\vec a$ and $\vec b$ in three-dimensional space is the outer product or cross product $\vec a \times \vec b$. In contrast to the scalar product, the result of the cross product is not a scalar but again a vector. This vector is perpendicular to the plane spanned by the two vectors $\vec a$ and $\vec b$, and its length corresponds to the area of the parallelogram spanned by them.

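The following calculation rules, among others, connect the cross product and the scalar product:

$$(\vec a \times \vec b) \cdot \vec c = (\vec b \times \vec c) \cdot \vec a = (\vec c \times \vec a) \cdot \vec b$$

$$(\vec a \times \vec b) \cdot \vec c = -(\vec b \times \vec a) \cdot \vec c$$

$$(\vec a \times \vec b) \cdot \vec a = 0$$

$$|\vec a \times \vec b|^2 = |\vec a|^2\,|\vec b|^2 - (\vec a \cdot \vec b)^2$$

The combination of cross product and scalar product appearing in the first two rules is also called the triple product; it gives the oriented volume of the parallelepiped spanned by the three vectors.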
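
The triple product, that is, the scalar product of a cross product with a third vector, can be sketched as follows. The helper names `cross` and `triple` and the sample vectors are assumptions of this example, which only spot-checks well-known identities on sample data.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    """Cross product of two vectors in three-dimensional space."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def triple(a, b, c):
    """Triple product (a x b) . c, the oriented volume of the parallelepiped."""
    return dot(cross(a, b), c)


a, b, c = (1, 0, 0), (0, 2, 0), (0, 0, 3)
volume = triple(a, b, c)  # box with edge lengths 1, 2, 3
print(volume, triple(b, c, a), triple(b, a, c))
```

Swapping two vectors flips the sign, which is why the volume is called oriented.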

Applications

In geometry

Cosine theorem with vectors

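The dot product makes it possible to prove, in a simple way, otherwise complicated theorems that involve angles.

Claim (law of cosines): $c^2 = a^2 + b^2 - 2ab\cos\gamma$.

Proof: With the help of the vectors drawn along the sides of the triangle it follows that $\vec c = \vec a - \vec b$. (The direction of $\vec c$ is irrelevant.) Squaring the magnitude gives

$$|\vec c|^2 = (\vec a - \vec b) \cdot (\vec a - \vec b) = \vec a \cdot \vec a - 2\,\vec a \cdot \vec b + \vec b \cdot \vec b$$

and thus, since $\vec a \cdot \vec b = ab\cos\gamma$,

$$c^2 = a^2 + b^2 - 2ab\cos\gamma.$$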
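
The law of cosines can be checked numerically on a concrete triangle. In this sketch the side lengths 3 and 2 and the angle of 60 degrees are arbitrary assumptions; the third side is built as the difference of the two side vectors.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


gamma = math.radians(60)                              # angle between sides a and b
a = (3.0, 0.0)                                        # side of length 3
b = (2.0 * math.cos(gamma), 2.0 * math.sin(gamma))    # side of length 2
c = tuple(x - y for x, y in zip(a, b))                # third side: c = a - b

lhs = dot(c, c)                                       # c^2 via the scalar product
rhs = 3.0 ** 2 + 2.0 ** 2 - 2 * 3.0 * 2.0 * math.cos(gamma)
print(lhs, rhs)
```

Both values agree up to floating-point rounding.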

In physics

In physics, many quantities, such as work $W$, are defined by scalar products:

$$W = \vec F \cdot \vec s = |\vec F|\,|\vec s|\,\cos\angle(\vec F, \vec s) = F_s\, s = F\, s_F$$

with the vector quantities force $\vec F$ and path $\vec s$. Here $\angle(\vec F, \vec s)$ denotes the angle between the direction of the force and the direction of the path. $F_s$ is the component of the force in the direction of the path, and $s_F$ is the component of the path in the direction of the force.

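Example: A wagon of weight $\vec F_G$ is transported over an inclined plane from a point $A$ to a point $B$. With the displacement $\vec s = \overrightarrow{AB}$, the lifting work is calculated as $W = -\vec F_G \cdot \vec s$.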
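
A minimal numerical sketch of work as a scalar product follows. The force of $(3, 4)$ newtons and the displacement of $(5, 0)$ metres are hypothetical values chosen for this illustration and are not taken from the example above.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


force = (3.0, 4.0)         # hypothetical force in newtons
displacement = (5.0, 0.0)  # hypothetical path in metres

work = dot(force, displacement)              # W = F . s, in joules
path_length = math.sqrt(dot(displacement, displacement))
force_along_path = work / path_length        # component F_s of the force along the path
print(work, force_along_path)
```

Only the component of the force along the path contributes; the perpendicular component of 4 newtons does no work here.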

In general real and complex vector spaces

The above properties are taken as the starting point for generalizing the concept of the scalar product to arbitrary real and complex vector spaces. A scalar product is then a function that assigns an element of the underlying field (a scalar) to two vectors and fulfills the properties mentioned. In the complex case, the conditions of symmetry and bilinearity are modified in order to preserve positive definiteness (which is never fulfilled for complex symmetric bilinear forms).

In the general theory, the variables for vectors, i.e. elements of an arbitrary vector space, are generally not marked with arrows. The scalar product is usually not denoted by a multiplication dot but by a pair of angle brackets. For the scalar product of the vectors $x$ and $y$ we write $\langle x, y \rangle$. Other common notations are $\langle x | y \rangle$ (especially in quantum mechanics in the form of bra-ket notation), $(x, y)$ and $(x | y)$.

Definition (axiomatic)

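A scalar product or inner product on a real vector space $V$ is a positive definite symmetric bilinear form $\langle \cdot, \cdot \rangle \colon V \times V \to \mathbb{R}$, that is, for $x, y, z \in V$ and $\lambda \in \mathbb{R}$ the following conditions apply:

- linear in each of the two arguments: $\langle x + y, z \rangle = \langle x, z \rangle + \langle y, z \rangle$, $\langle x, y + z \rangle = \langle x, y \rangle + \langle x, z \rangle$, $\langle \lambda x, y \rangle = \lambda \langle x, y \rangle$ and $\langle x, \lambda y \rangle = \lambda \langle x, y \rangle$

- symmetric: $\langle x, y \rangle = \langle y, x \rangle$

- positive definite: $\langle x, x \rangle \geq 0$, and $\langle x, x \rangle = 0$ exactly when $x = 0$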

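A scalar product or inner product on a complex vector space $V$ is a positive definite Hermitian sesquilinear form $\langle \cdot, \cdot \rangle \colon V \times V \to \mathbb{C}$, that is, for $x, y, z \in V$ and $\lambda \in \mathbb{C}$ the following conditions apply:

- sesquilinear: $\langle \lambda x, y \rangle = \bar\lambda \langle x, y \rangle$ and $\langle x + y, z \rangle = \langle x, z \rangle + \langle y, z \rangle$ (semilinear in the first argument); $\langle x, \lambda y \rangle = \lambda \langle x, y \rangle$ and $\langle x, y + z \rangle = \langle x, y \rangle + \langle x, z \rangle$ (linear in the second argument)

- Hermitian: $\langle x, y \rangle = \overline{\langle y, x \rangle}$

- positive definite: $\langle x, x \rangle \geq 0$ (that $\langle x, x \rangle$ is real follows from condition 2), and $\langle x, x \rangle = 0$ exactly when $x = 0$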

A real or complex vector space in which a scalar product is defined is called an inner product space or pre-Hilbert space. A finite-dimensional real vector space with a scalar product is also called a Euclidean vector space; in the complex case one speaks of a unitary vector space. Correspondingly, the scalar product in a Euclidean vector space is sometimes referred to as the Euclidean scalar product, and that in a unitary vector space as the unitary scalar product. The term "Euclidean scalar product" is, however, also used specifically for the geometric scalar product described above or for the standard scalar product described below.

Remarks

- Often every symmetric bilinear form or every Hermitian sesquilinear form is referred to as a scalar product; with this usage the above definitions describe positive definite scalar products.

- The two axiom systems given are not minimal. In the real case, due to the symmetry, the linearity in the first argument follows from the linearity in the second argument (and vice versa). Similarly, in the complex case, due to the hermiticity, the semilinearity in the first argument follows from the linearity in the second argument (and vice versa).

- In the complex case, the scalar product is sometimes defined alternatively, namely as linear in the first and semilinear in the second argument. This version is preferred in mathematics and especially in analysis, while in physics the above version is predominantly used (see bra and ket vectors). The difference between the two versions lies in the effect of scalar multiplication with regard to homogeneity. In the alternative version, for $x, y \in V$ and $\lambda \in \mathbb{C}$ we have $\langle \lambda x, y \rangle = \lambda \langle x, y \rangle$ and $\langle x, \lambda y \rangle = \bar\lambda \langle x, y \rangle$. Additivity is understood in the same way in both versions. The norms obtained from the scalar product according to both versions are also identical.

- A pre-Hilbert space that is complete with respect to the norm induced by the scalar product is called a Hilbert space.

- The distinction between real and complex vector spaces when defining the scalar product is not strictly necessary, since in the real case a Hermitian sesquilinear form is the same as a symmetric bilinear form.

Examples

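Standard scalar product in $\mathbb{R}^n$ and in $\mathbb{C}^n$

Based on the representation of the Euclidean scalar product in Cartesian coordinates, one defines the standard scalar product in the $n$-dimensional coordinate space $\mathbb{R}^n$ for $x, y \in \mathbb{R}^n$ by

$$\langle x, y \rangle = \sum_{i=1}^n x_i y_i = x_1 y_1 + x_2 y_2 + \dotsb + x_n y_n.$$

The "geometric" scalar product in Euclidean space treated above corresponds to the special case $n = 3$ (or $n = 2$ in the plane). In the case of the $n$-dimensional complex vector space $\mathbb{C}^n$, the standard scalar product for $x, y \in \mathbb{C}^n$ is defined by

$$\langle x, y \rangle = \sum_{i=1}^n \bar x_i\, y_i = \bar x_1 y_1 + \bar x_2 y_2 + \dotsb + \bar x_n y_n,$$

where the overline denotes complex conjugation. In mathematics, the alternative version is also often used, in which the second argument is conjugated instead of the first.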

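The standard scalar product in $\mathbb{R}^n$ or $\mathbb{C}^n$ can also be written as a matrix product by interpreting each vector as an $n \times 1$ matrix (column vector). In the real case, the following applies:

$$\langle x, y \rangle = x^T y,$$

where $x^T$ is the row vector that results from the column vector $x$ by transposition. In the complex case (for the version that is semilinear in the first and linear in the second argument),

$$\langle x, y \rangle = x^H y,$$

where $x^H$ is the row vector that is the Hermitian adjoint of $x$.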
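
The complex standard scalar product, with the first argument conjugated, can be sketched with Python's built-in complex numbers. The function name `cdot` and the sample vectors are assumptions made for this illustration.

```python
def cdot(x, y):
    """Standard scalar product on C^n: conjugate the FIRST argument."""
    return sum(xi.conjugate() * yi for xi, yi in zip(x, y))


x = (1 + 2j, 3j)
y = (2 - 1j, 1 + 1j)
lam = 2j

xy = cdot(x, y)
yx = cdot(y, x)        # Hermitian symmetry: the complex conjugate of xy
xx = cdot(x, x)        # real and non-negative: |1+2j|^2 + |3j|^2
scaled_first = cdot(tuple(lam * xi for xi in x), y)   # conj(lam) * xy
scaled_second = cdot(x, tuple(lam * yi for yi in y))  # lam * xy
print(xy, yx, xx)
```

Conjugating the second argument instead would give the alternative convention mentioned above; the induced norm is the same in both cases.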

General scalar products in $\mathbb{R}^n$ and in $\mathbb{C}^n$

More generally, in the real case, every symmetric and positive definite matrix $A \in \mathbb{R}^{n \times n}$ defines a scalar product via

$$\langle x, y \rangle_A := \langle x, A y \rangle = x^T A\, y;$$

likewise, in the complex case, every positive definite Hermitian matrix $A \in \mathbb{C}^{n \times n}$ defines a scalar product via

$$\langle x, y \rangle_A := \langle x, A y \rangle = x^H A\, y.$$

Here, the angle brackets without an index denote the standard scalar product, and the angle brackets with the index $A$ denote the scalar product defined by the matrix $A$.

Every scalar product on $\mathbb{R}^n$ or $\mathbb{C}^n$ can be represented in this way by a positive definite symmetric matrix (or positive definite Hermitian matrix, respectively).
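
A scalar product defined by a matrix can be sketched as follows for the real case. The matrix `A` below is a sample symmetric positive definite matrix chosen for this illustration, and the helper names are assumptions.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(A, y):
    """Matrix-vector product A y for a matrix given as a list of rows."""
    return tuple(dot(row, y) for row in A)

def dot_A(x, y, A):
    """Scalar product x^T A y defined by a symmetric positive definite matrix A."""
    return dot(x, matvec(A, y))


A = [[2, 1],
     [1, 2]]   # symmetric, eigenvalues 1 and 3, hence positive definite

x, y = (1, 0), (0, 1)
print(dot_A(x, y, A), dot_A(y, x, A), dot_A(x, x, A))
```

With this matrix the two canonical unit vectors are no longer orthogonal, which shows that orthogonality depends on the chosen scalar product.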

$L^2$ scalar product for functions

On the infinite-dimensional vector space $C^0([a,b], \mathbb{R})$ of continuous real-valued functions on the interval $[a,b]$, the $L^2$ scalar product is defined by

$$\langle f, g \rangle_{L^2} = \int_a^b f(x)\, g(x)\, dx$$

for all $f, g \in C^0([a,b], \mathbb{R})$.

For generalizations of this example see pre-Hilbert space and Hilbert space.
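
The integral of the product of two functions can be approximated numerically. The sketch below uses a composite midpoint rule, which is a choice made for this illustration; the function name `l2_inner` and the number of subintervals are assumptions.

```python
import math

def l2_inner(f, g, a, b, n=1000):
    """Approximate the integral of f(x) * g(x) over [a, b] with the midpoint rule."""
    h = (b - a) / n
    total = 0.0
    for i in range(n):
        x = a + (i + 0.5) * h   # midpoint of the i-th subinterval
        total += f(x) * g(x)
    return h * total


# The integral of x * x over [0, 1] is 1/3.
square_norm = l2_inner(lambda x: x, lambda x: x, 0.0, 1.0)
# sin and cos are orthogonal on [0, pi]: the integral of sin(x) cos(x) vanishes.
sin_cos = l2_inner(math.sin, math.cos, 0.0, math.pi)
print(square_norm, sin_cos)
```

The result is only an approximation whose accuracy depends on `n` and on the smoothness of the functions.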

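Frobenius scalar product for matrices

On the matrix space $\mathbb{R}^{m \times n}$ of real $(m \times n)$ matrices, for $A, B \in \mathbb{R}^{m \times n}$,

$$\langle A, B \rangle = \operatorname{tr}(A^T B) = \sum_{i=1}^m \sum_{j=1}^n a_{ij}\, b_{ij}$$

defines a scalar product. Correspondingly, on the space $\mathbb{C}^{m \times n}$ of complex matrices, for $A, B \in \mathbb{C}^{m \times n}$,

$$\langle A, B \rangle = \operatorname{tr}(A^H B) = \sum_{i=1}^m \sum_{j=1}^n \bar a_{ij}\, b_{ij}$$

defines a scalar product. This scalar product is called the Frobenius scalar product, and the associated norm is called the Frobenius norm.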
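
For real matrices, the Frobenius scalar product is simply the sum of the entrywise products, which equals the trace of $A^T B$. The sketch below computes it both ways; the function names and sample matrices are assumptions of this example.

```python
def frobenius(A, B):
    """Sum of entrywise products of two real matrices of the same shape."""
    return sum(a * b for row_a, row_b in zip(A, B) for a, b in zip(row_a, row_b))

def trace_AtB(A, B):
    """Trace of A^T B, computed from the diagonal entries only."""
    n_cols = len(A[0])
    return sum(sum(A[i][j] * B[i][j] for i in range(len(A))) for j in range(n_cols))


A = [[1, 2],
     [3, 4]]
B = [[5, 6],
     [7, 8]]

value = frobenius(A, B)          # 1*5 + 2*6 + 3*7 + 4*8
norm_squared = frobenius(A, A)   # squared Frobenius norm of A
print(value, trace_AtB(A, B), norm_squared)
```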

Norm, angle and orthogonality

The length of a vector in Euclidean space corresponds in general inner product spaces to the norm induced by the scalar product. This norm is defined by transferring the formula for the length from Euclidean space, as the square root of the scalar product of the vector with itself:

$$\|x\| = \sqrt{\langle x, x \rangle}.$$

This is possible because $\langle x, x \rangle$ is non-negative due to positive definiteness. The triangle inequality required as a norm axiom follows from the Cauchy-Schwarz inequality

$$|\langle x, y \rangle| \leq \|x\|\,\|y\|.$$

If $x, y \neq 0$, this inequality can be rewritten in the real case as

$$-1 \leq \frac{\langle x, y \rangle}{\|x\|\,\|y\|} \leq 1.$$

Therefore, in general real vector spaces, one can also use

$$\varphi = \arccos \frac{\langle x, y \rangle}{\|x\|\,\|y\|}$$

to define the angle between two vectors. The angle defined in this way lies between 0° and 180°, i.e. between $0$ and $\pi$. There are a number of different definitions for angles between complex vectors.

In the general case, too, vectors whose scalar product is zero are called orthogonal:

$$x \perp y \iff \langle x, y \rangle = 0.$$
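
Norm, Cauchy-Schwarz inequality and angle can be illustrated with a scalar product other than the standard one. The sketch below assumes the scalar product $x^T A y$ for a sample symmetric positive definite matrix `A`; the matrix and all function names are chosen for this illustration only.

```python
import math

def inner_A(x, y, A):
    """Scalar product x^T A y for a symmetric positive definite matrix A."""
    return sum(x[i] * A[i][j] * y[j] for i in range(len(x)) for j in range(len(y)))

def norm_A(x, A):
    """Norm induced by the scalar product."""
    return math.sqrt(inner_A(x, x, A))

def angle_A(x, y, A):
    """Angle in radians with respect to the scalar product; x and y must be nonzero."""
    c = inner_A(x, y, A) / (norm_A(x, A) * norm_A(y, A))
    return math.acos(max(-1.0, min(1.0, c)))  # clamp against rounding errors


A = [[2, 1],
     [1, 2]]
x, y = (1, 0), (0, 1)

cauchy_schwarz_holds = abs(inner_A(x, y, A)) <= norm_A(x, A) * norm_A(y, A)
phi_deg = math.degrees(angle_A(x, y, A))
print(cauchy_schwarz_holds, phi_deg)
```

With respect to this scalar product the two canonical unit vectors enclose an angle of about 60 degrees rather than 90 degrees.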

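Matrix representation

If $V$ is an $n$-dimensional vector space and $B = \{b_1, \dotsc, b_n\}$ is a basis of $V$, then every scalar product $\langle \cdot, \cdot \rangle$ on $V$ can be described by an $(n \times n)$ matrix $G$, the Gram matrix of the scalar product. Its entries are the scalar products of the basis vectors:

$$G = (g_{ij}) \quad\text{with}\quad g_{ij} = \langle b_i, b_j \rangle \quad\text{for } i, j = 1, \dotsc, n.$$

The scalar product can then be represented with the help of the basis: if the vectors $x, y \in V$ have the representations

$$x = \sum_{i=1}^n x_i b_i \quad\text{and}\quad y = \sum_{j=1}^n y_j b_j$$

with respect to the basis $B$, then in the real case

$$\langle x, y \rangle = \sum_{i=1}^n \sum_{j=1}^n x_i\, y_j\, g_{ij}.$$

If one denotes by $x_B, y_B \in \mathbb{R}^n$ the coordinate vectors

$$x_B = \begin{pmatrix} x_1 \\ \vdots \\ x_n \end{pmatrix} \quad\text{and}\quad y_B = \begin{pmatrix} y_1 \\ \vdots \\ y_n \end{pmatrix},$$

then

$$\langle x, y \rangle = x_B^T\, G\, y_B,$$

where the matrix product yields a $1 \times 1$ matrix, i.e. a real number. Here $x_B^T$ denotes the row vector formed from the column vector $x_B$ by transposition.

In the complex case, correspondingly,

$$\langle x, y \rangle = \sum_{i=1}^n \sum_{j=1}^n \bar x_i\, y_j\, g_{ij} = x_B^H\, G\, y_B,$$

where the overline denotes complex conjugation and $x_B^H$ is the row vector adjoint to $x_B$.

If $B$ is an orthonormal basis, that is, $\langle b_i, b_i \rangle = 1$ for all $i$ and $\langle b_i, b_j \rangle = 0$ for all $i \neq j$, then $G$ is the identity matrix and

$$\langle x, y \rangle = \sum_{i=1}^n x_i y_i = x_B^T\, y_B$$

in the real case and

$$\langle x, y \rangle = \sum_{i=1}^n \bar x_i\, y_i = x_B^H\, y_B$$

in the complex case. With respect to an orthonormal basis, the scalar product of $x$ and $y$ thus corresponds to the standard scalar product of the coordinate vectors $x_B$ and $y_B$.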
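
The role of the Gram matrix can be sketched for a non-orthonormal basis of the plane. In this illustration the basis vectors $(1, 0)$ and $(1, 1)$, the coordinate vectors and all function names are assumptions; the underlying scalar product is the standard one.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def from_coords(coords, basis):
    """Vector with the given coordinates relative to the basis."""
    dim = len(basis[0])
    return tuple(sum(c * b[k] for c, b in zip(coords, basis)) for k in range(dim))

def gram_inner(x_B, y_B, G):
    """Scalar product computed from coordinate vectors and the Gram matrix."""
    return sum(x_B[i] * G[i][j] * y_B[j]
               for i in range(len(x_B)) for j in range(len(y_B)))


basis = [(1, 0), (1, 1)]
# Gram matrix: scalar products of all pairs of basis vectors.
G = [[dot(bi, bj) for bj in basis] for bi in basis]

x_B, y_B = (2, 1), (-1, 3)                 # coordinates relative to the basis
x, y = from_coords(x_B, basis), from_coords(y_B, basis)

via_gram = gram_inner(x_B, y_B, G)
direct = dot(x, y)
print(G, via_gram, direct)
```

Both routes give the same number, whereas the plain coordinate formula applied to `x_B` and `y_B` would not, because the basis is not orthonormal.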

Web links

- Information and materials on the scalar product for the upper school level. Landesbildungsserver Baden-Württemberg

- Joachim Mohr: Introduction to the scalar product

- Video: dot product. Jörn Loviscach 2010, made available by the Technical Information Library (TIB), doi:10.5446/9742.

- Video: dot product and vector product. Jörn Loviscach 2011, made available by the Technical Information Library (TIB), doi:10.5446/9929.

- Video: dot product, part 1. Jörn Loviscach 2011, made available by the Technical Information Library (TIB), doi:10.5446/10212.

- Video: Dot product part 2, orthogonality. Jörn Loviscach 2011, made available by the Technical Information Library (TIB), doi:10.5446/10213.

- Video: Of vectors and their scalar product - vector calculation part 1. Jakob Günter Lauth (SciFox) 2013, made available by the Technical Information Library (TIB), doi:10.5446/17886.
