The cross product, also called the vector product or outer product, is an operation in three-dimensional Euclidean vector space that assigns a vector to two vectors. To distinguish it from other products, in particular the scalar product, it is written in German- and English-speaking countries with a cross as the multiplication symbol (see the section on notation below). The terms cross product and vector product go back to the physicist Josiah Willard Gibbs; the term outer product was coined by the mathematician Hermann Graßmann.

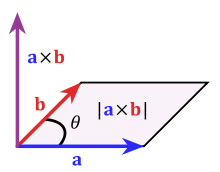

The cross product $\vec{a} \times \vec{b}$ of the vectors $\vec{a}$ and $\vec{b}$ is a vector that is perpendicular to the plane spanned by the two vectors and forms a right-handed system with them. The length of this vector equals the area of the parallelogram spanned by $\vec{a}$ and $\vec{b}$.

The cross product occurs in many places in physics, for example in electromagnetism when calculating the Lorentz force or the Poynting vector. In classical mechanics it is used for rotational quantities such as torque and angular momentum, and for fictitious forces such as the Coriolis force.

Geometric definition

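The cross product $\vec{a} \times \vec{b}$ of two vectors $\vec{a}$ and $\vec{b}$ in three-dimensional physical space is a vector that is orthogonal to $\vec{a}$ and $\vec{b}$, and thus orthogonal to the plane spanned by $\vec{a}$ and $\vec{b}$.

This vector is oriented in such a way that $\vec{a}$, $\vec{b}$ and $\vec{a} \times \vec{b}$, in this order, form a right-handed system. Mathematically this means that the three vectors $\vec{a}$, $\vec{b}$ and $\vec{a} \times \vec{b}$ are oriented in the same way as the vectors $\vec{e}_1$, $\vec{e}_2$ and $\vec{e}_3$ of the standard basis. In physical terms, it means that they behave like the thumb, index finger and splayed middle finger of the right hand (right-hand rule). Rotating the first vector $\vec{a}$ into the second vector $\vec{b}$ gives the positive direction of $\vec{a} \times \vec{b}$ in the sense of a right-handed screw (the rotation appears clockwise when viewed along $\vec{a} \times \vec{b}$).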

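The magnitude of $\vec{a} \times \vec{b}$ is the area of the parallelogram spanned by $\vec{a}$ and $\vec{b}$. Expressed in terms of the angle $\theta$ enclosed by $\vec{a}$ and $\vec{b}$:

$$|\vec{a} \times \vec{b}| = |\vec{a}|\,|\vec{b}|\,\sin\theta .$$

Here $|\vec{a}|$ and $|\vec{b}|$ denote the lengths of the vectors $\vec{a}$ and $\vec{b}$, and $\sin\theta$ is the sine of the angle $\theta$ they enclose.

In summary, then,

$$\vec{a} \times \vec{b} = \left(|\vec{a}|\,|\vec{b}|\,\sin\theta\right)\vec{n},$$

where $\vec{n}$ is the unit vector that is perpendicular to $\vec{a}$ and to $\vec{b}$ and completes them to a right-handed system.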
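As a minimal sketch of the geometric definition, the following Python snippet (standard library only) checks for one example pair that the cross product is orthogonal to both factors and that its length equals $|\vec{a}|\,|\vec{b}|\,\sin\theta$. The helper names `cross`, `dot` and `norm` and the sample vectors are assumptions made for illustration; `cross` uses the standard component formula in a right-handed Cartesian system.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors given as tuples (component formula)."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Standard scalar product."""
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean length of a vector."""
    return math.sqrt(dot(a, a))

# Illustrative vectors lying in the x-y plane (assumed, not from the article).
a = (3.0, 0.0, 0.0)
b = (1.0, 2.0, 0.0)
c = cross(a, b)

# Angle enclosed by a and b, and the parallelogram area |a||b|sin(theta).
theta = math.acos(dot(a, b) / (norm(a) * norm(b)))
area = norm(a) * norm(b) * math.sin(theta)

print(c)                       # (0.0, 0.0, 6.0), along the positive z-axis
print(dot(a, c), dot(b, c))    # both zero: c is orthogonal to a and b
print(math.isclose(norm(c), area))
```

Because `a` and `b` lie in the x-y plane and the rotation from `a` to `b` is counterclockwise when seen from above, the result points along the positive z-axis, as the right-hand rule predicts.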

Notation

Depending on the country, different notations are used for the vector product. In English- and German-speaking countries, the notation $\vec{a} \times \vec{b}$ is usually used for the vector product of two vectors $\vec{a}$ and $\vec{b}$, whereas in France and Italy the notation $\vec{a} \wedge \vec{b}$ is preferred. In Russia, the vector product is often written as $[\vec{a}\ \vec{b}]$ or $[\vec{a}, \vec{b}]$.

The notation $\vec{a} \wedge \vec{b}$ and the term outer product are used not only for the vector product but also for the operation that assigns a so-called bivector to two vectors; see Graßmann algebra.

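Component-wise calculation

In a right-handed Cartesian coordinate system, or in real coordinate space $\mathbb{R}^3$ with the standard scalar product and the standard orientation, the cross product is given by:

$$\vec{a} \times \vec{b} = \begin{pmatrix} a_1 \\ a_2 \\ a_3 \end{pmatrix} \times \begin{pmatrix} b_1 \\ b_2 \\ b_3 \end{pmatrix} = \begin{pmatrix} a_2 b_3 - a_3 b_2 \\ a_3 b_1 - a_1 b_3 \\ a_1 b_2 - a_2 b_1 \end{pmatrix}.$$

A numerical example:

$$\begin{pmatrix} 1 \\ 2 \\ 3 \end{pmatrix} \times \begin{pmatrix} -7 \\ 8 \\ 9 \end{pmatrix} = \begin{pmatrix} 2 \cdot 9 - 3 \cdot 8 \\ 3 \cdot (-7) - 1 \cdot 9 \\ 1 \cdot 8 - 2 \cdot (-7) \end{pmatrix} = \begin{pmatrix} -6 \\ -30 \\ 22 \end{pmatrix}.$$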

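A mnemonic for this formula is based on a symbolic determinant. One writes down a $3 \times 3$ matrix whose first column contains the symbols $\vec{e}_1$, $\vec{e}_2$ and $\vec{e}_3$ for the standard basis. The second column is formed by the components of the vector $\vec{a}$ and the third by those of the vector $\vec{b}$. This determinant is evaluated according to the usual rules, for example by expanding along the first column,

$$\vec{a} \times \vec{b} = \det \begin{pmatrix} \vec{e}_1 & a_1 & b_1 \\ \vec{e}_2 & a_2 & b_2 \\ \vec{e}_3 & a_3 & b_3 \end{pmatrix} = \vec{e}_1 \begin{vmatrix} a_2 & b_2 \\ a_3 & b_3 \end{vmatrix} - \vec{e}_2 \begin{vmatrix} a_1 & b_1 \\ a_3 & b_3 \end{vmatrix} + \vec{e}_3 \begin{vmatrix} a_1 & b_1 \\ a_2 & b_2 \end{vmatrix} = (a_2 b_3 - a_3 b_2)\,\vec{e}_1 + (a_3 b_1 - a_1 b_3)\,\vec{e}_2 + (a_1 b_2 - a_2 b_1)\,\vec{e}_3,$$

or using the rule of Sarrus:

$$\vec{a} \times \vec{b} = \vec{e}_1 a_2 b_3 + a_1 b_2 \vec{e}_3 + b_1 \vec{e}_2 a_3 - \vec{e}_3 a_2 b_1 - a_3 b_2 \vec{e}_1 - b_3 \vec{e}_2 a_1 .$$

With the Levi-Civita symbol $\varepsilon_{ijk}$, the cross product is written as

$$\vec{a} \times \vec{b} = \sum_{i,j,k=1}^{3} \varepsilon_{ijk}\, a_i\, b_j\, \vec{e}_k .$$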
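The two ways of computing the components can be compared directly. The sketch below implements the explicit component formula and the Levi-Civita sum and checks that they agree on a sample pair. The function names and the sample vectors are illustrative assumptions, and the code uses zero-based indices 0, 1, 2 instead of 1, 2, 3.

```python
def levi_civita(i, j, k):
    """Levi-Civita symbol for indices in {0, 1, 2}: +1, -1 or 0."""
    # The product is +2 for even permutations, -2 for odd ones, 0 otherwise.
    return (i - j) * (j - k) * (k - i) // 2

def cross_components(a, b):
    """Cross product via the explicit component formula."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def cross_levi_civita(a, b):
    """Cross product as the sum over eps_ijk * a_i * b_j * e_k."""
    result = [0, 0, 0]
    for i in range(3):
        for j in range(3):
            for k in range(3):
                result[k] += levi_civita(i, j, k) * a[i] * b[j]
    return tuple(result)

a = (1, 2, 3)
b = (-7, 8, 9)
print(cross_components(a, b))   # (-6, -30, 22)
print(cross_levi_civita(a, b))  # (-6, -30, 22)
```

The triple loop visits all 27 index combinations although only six terms are non-zero; it is written this way to mirror the formula, not for efficiency.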

Properties

Bilinearity

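The cross product is bilinear, that is, for all real numbers $\alpha$, $\beta$ and $\gamma$ and all vectors $\vec{a}$, $\vec{b}$ and $\vec{c}$,

$$\vec{a} \times (\beta\,\vec{b} + \gamma\,\vec{c}) = \beta\,(\vec{a} \times \vec{b}) + \gamma\,(\vec{a} \times \vec{c}),$$

$$(\alpha\,\vec{a} + \beta\,\vec{b}) \times \vec{c} = \alpha\,(\vec{a} \times \vec{c}) + \beta\,(\vec{b} \times \vec{c}).$$

Bilinearity implies in particular the following behavior with respect to scalar multiplication:

$$\alpha\,(\vec{a} \times \vec{b}) = (\alpha\,\vec{a}) \times \vec{b} = \vec{a} \times (\alpha\,\vec{b}).$$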

Alternating map

The cross product of a vector with itself or with a collinear vector is the zero vector:

$$\vec{a} \times r\,\vec{a} = \vec{0} \quad \text{for every real number } r .$$

Bilinear maps that satisfy this equation are called alternating.

Anti-commutativity

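The cross product is anti-commutative. This means that swapping the arguments changes the sign:

$$\vec{a} \times \vec{b} = -\,\vec{b} \times \vec{a} .$$

This follows from the cross product being (1) alternating and (2) bilinear, since

$$\vec{0} \overset{(1)}{=} (\vec{a} + \vec{b}) \times (\vec{a} + \vec{b}) \overset{(2)}{=} \vec{a} \times \vec{a} + \vec{a} \times \vec{b} + \vec{b} \times \vec{a} + \vec{b} \times \vec{b} \overset{(1)}{=} \vec{a} \times \vec{b} + \vec{b} \times \vec{a}$$

holds for all $\vec{a}$, $\vec{b}$.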

Jacobi identity

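The cross product is not associative. Instead, the Jacobi identity holds, i.e. the cyclic sum of repeated cross products vanishes:

$$\vec{a} \times (\vec{b} \times \vec{c}) + \vec{b} \times (\vec{c} \times \vec{a}) + \vec{c} \times (\vec{a} \times \vec{b}) = \vec{0} .$$

Because of this property and those mentioned above, $\mathbb{R}^3$ together with the cross product forms a Lie algebra.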
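The algebraic properties above can be spot-checked numerically. The following sketch verifies anti-commutativity, shows that the two bracketings of a triple product differ, and confirms that the cyclic sum in the Jacobi identity vanishes. It uses integer vectors so that all comparisons are exact; the helper names and sample vectors are assumptions for illustration, and a check on one example is of course not a proof.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def add(a, b):
    """Component-wise sum of two vectors."""
    return tuple(x + y for x, y in zip(a, b))

def neg(a):
    """Negative of a vector."""
    return tuple(-x for x in a)

a, b, c = (1, 2, 3), (4, 5, 6), (7, 8, 10)

# Anti-commutativity: a x b = -(b x a)
anti_commutative = cross(a, b) == neg(cross(b, a))

# Non-associativity: a x (b x c) differs from (a x b) x c for these vectors
left = cross(a, cross(b, c))
right = cross(cross(a, b), c)

# Jacobi identity: the cyclic sum is the zero vector
jacobi = add(add(cross(a, cross(b, c)),
                 cross(b, cross(c, a))),
             cross(c, cross(a, b)))

print(anti_commutative)  # True
print(left, right)       # (-12, 9, -2) (84, 9, -66)
print(jacobi)            # (0, 0, 0)
```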

Relationship to the determinant

For every vector $\vec{v}$:

$$\vec{v} \cdot (\vec{a} \times \vec{b}) = \det(\vec{v}, \vec{a}, \vec{b}) .$$

The centered dot denotes the scalar product. The cross product is uniquely determined by this condition: given two vectors $\vec{a}$ and $\vec{b}$, there is exactly one vector $\vec{c}$ such that $\vec{v} \cdot \vec{c} = \det(\vec{v}, \vec{a}, \vec{b})$ holds for all vectors $\vec{v}$. This vector $\vec{c}$ is $\vec{a} \times \vec{b}$.

Graßmann identity

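For the repeated cross product of three vectors (also called the double vector product), the Graßmann identity holds (also called the Graßmann expansion theorem, after Hermann Graßmann). It reads

$$\vec{a} \times (\vec{b} \times \vec{c}) = (\vec{a} \cdot \vec{c})\,\vec{b} - (\vec{a} \cdot \vec{b})\,\vec{c}$$

or, respectively,

$$(\vec{a} \times \vec{b}) \times \vec{c} = (\vec{a} \cdot \vec{c})\,\vec{b} - (\vec{b} \cdot \vec{c})\,\vec{a},$$

where the centered dots denote the scalar product. In physics the form

$$\vec{a} \times (\vec{b} \times \vec{c}) = \vec{b}\,(\vec{a} \cdot \vec{c}) - \vec{c}\,(\vec{a} \cdot \vec{b})$$

is often used. Because of this form, the formula is also called the BAC-CAB formula. In index notation, the Graßmann identity reads:

$$\sum_{k} \varepsilon_{ijk}\, \varepsilon_{klm} = \delta_{il}\,\delta_{jm} - \delta_{im}\,\delta_{jl} .$$

Here $\varepsilon_{ijk}$ is the Levi-Civita symbol and $\delta_{ij}$ the Kronecker delta.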
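A short numerical check of both forms of the Graßmann identity may help: the vector form (BAC-CAB) on one sample triple, and the index form over all index combinations. The helper names and sample vectors below are illustrative assumptions, and indices run over 0, 1, 2 instead of 1, 2, 3.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Standard scalar product."""
    return sum(x * y for x, y in zip(a, b))

def levi_civita(i, j, k):
    """Levi-Civita symbol for indices in {0, 1, 2}."""
    return (i - j) * (j - k) * (k - i) // 2

def kronecker(i, j):
    """Kronecker delta."""
    return 1 if i == j else 0

a, b, c = (1, 2, 3), (4, 5, 6), (7, 8, 10)

# Vector form: a x (b x c) = b (a.c) - c (a.b)
lhs = cross(a, cross(b, c))
rhs = tuple(dot(a, c) * bi - dot(a, b) * ci for bi, ci in zip(b, c))
print(lhs, rhs)  # (-12, 9, -2) (-12, 9, -2)

# Index form: sum_k eps_ijk eps_klm = d_il d_jm - d_im d_jl for all i, j, l, m
index_form_holds = all(
    sum(levi_civita(i, j, k) * levi_civita(k, l, m) for k in range(3))
    == kronecker(i, l) * kronecker(j, m) - kronecker(i, m) * kronecker(j, l)
    for i in range(3) for j in range(3)
    for l in range(3) for m in range(3)
)
print(index_form_holds)  # True
```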

Lagrange identity

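For the scalar product of two cross products,

$$(\vec{a} \times \vec{b}) \cdot (\vec{c} \times \vec{d}) = (\vec{a} \cdot \vec{c})(\vec{b} \cdot \vec{d}) - (\vec{b} \cdot \vec{c})(\vec{a} \cdot \vec{d}) .$$

For the square of the norm this gives

$$|\vec{a} \times \vec{b}|^2 = |\vec{a}|^2 |\vec{b}|^2 - (\vec{a} \cdot \vec{b})^2 = |\vec{a}|^2 |\vec{b}|^2 (1 - \cos^2\theta) = |\vec{a}|^2 |\vec{b}|^2 \sin^2\theta,$$

so the magnitude of the cross product satisfies

$$|\vec{a} \times \vec{b}| = |\vec{a}|\,|\vec{b}|\,|\sin\theta| .$$

Since $\theta$, the angle between $\vec{a}$ and $\vec{b}$, always lies between 0° and 180°, $\sin\theta \geq 0$, and therefore

$$|\vec{a} \times \vec{b}| = |\vec{a}|\,|\vec{b}|\,\sin\theta .$$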
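The Lagrange identity and its special case for the squared norm can be checked exactly with integer vectors. The sketch below does this for one arbitrarily chosen set of vectors; the names `cross` and `dot` and the sample data are assumptions for illustration.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Standard scalar product."""
    return sum(x * y for x, y in zip(a, b))

a, b, c, d = (1, 2, 3), (4, 5, 6), (7, 8, 10), (2, -1, 5)

# (a x b).(c x d) = (a.c)(b.d) - (b.c)(a.d)
lhs = dot(cross(a, b), cross(c, d))
rhs = dot(a, c) * dot(b, d) - dot(b, c) * dot(a, d)
print(lhs == rhs)  # True

# Special case c = a, d = b: |a x b|^2 = |a|^2 |b|^2 - (a.b)^2
norm_sq = dot(cross(a, b), cross(a, b))
print(norm_sq, dot(a, a) * dot(b, b) - dot(a, b) ** 2)  # 54 54
```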

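Cross product of two cross products

$$(\vec{a} \times \vec{b}) \times (\vec{c} \times \vec{d}) = \vec{b}\,\det(\vec{a}, \vec{c}, \vec{d}) - \vec{a}\,\det(\vec{b}, \vec{c}, \vec{d}) = \vec{c}\,\det(\vec{a}, \vec{b}, \vec{d}) - \vec{d}\,\det(\vec{a}, \vec{b}, \vec{c})$$

Special cases:

$$(\vec{a} \times \vec{b}) \times (\vec{a} \times \vec{c}) = \vec{a}\,\det(\vec{a}, \vec{b}, \vec{c}), \qquad (\vec{a} \times \vec{b}) \times (\vec{b} \times \vec{c}) = \vec{b}\,\det(\vec{a}, \vec{b}, \vec{c})$$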

Cross product matrix

For a fixed vector $\vec{w}$, the cross product defines a linear map that sends a vector $\vec{v}$ to the vector $\vec{w} \times \vec{v}$. This map can be identified with a skew-symmetric second-order tensor. With respect to the standard basis, the linear map corresponds to a matrix operation. The skew-symmetric matrix

$$W = \begin{pmatrix} 0 & -w_3 & w_2 \\ w_3 & 0 & -w_1 \\ -w_2 & w_1 & 0 \end{pmatrix} \quad \text{with} \quad \vec{w} = \begin{pmatrix} w_1 \\ w_2 \\ w_3 \end{pmatrix}$$

does the same as the cross product with $\vec{w}$, i.e. $W \vec{v} = \vec{w} \times \vec{v}$:

$$\begin{pmatrix} 0 & -w_3 & w_2 \\ w_3 & 0 & -w_1 \\ -w_2 & w_1 & 0 \end{pmatrix} \begin{pmatrix} v_1 \\ v_2 \\ v_3 \end{pmatrix} = \begin{pmatrix} w_2 v_3 - w_3 v_2 \\ w_3 v_1 - w_1 v_3 \\ w_1 v_2 - w_2 v_1 \end{pmatrix} = \vec{w} \times \vec{v} .$$

The matrix $W$ is called the cross product matrix. It is also denoted by $[\vec{w}]_{\times}$.

A skew-symmetric matrix $W$ satisfies

$$W^{\mathsf{T}} = -W,$$

where $W^{\mathsf{T}}$ is the transpose of $W$, and the associated vector is obtained from

$$\vec{w} = \begin{pmatrix} W_{32} \\ W_{13} \\ W_{21} \end{pmatrix} .$$

If $\vec{w}$ has the form $\vec{w} = \vec{b} \times \vec{a}$, then the corresponding cross product matrix satisfies

$$W = [\vec{w}]_{\times} = \vec{a} \otimes \vec{b} - \vec{b} \otimes \vec{a} \quad \text{and} \quad W_{ij} = a_i b_j - b_i a_j \ \text{for all } i, j .$$

Here "$\otimes$" denotes the dyadic product.
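The correspondence between a vector and its cross product matrix can be sketched in a few lines. The snippet below builds the skew-symmetric matrix for a vector, applies it to a second vector, recovers the vector from the matrix, and checks the dyadic-product form for a vector of the form $\vec{b} \times \vec{a}$. All function names and sample vectors are illustrative assumptions; matrices are plain nested lists with zero-based indices.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def cross_matrix(w):
    """Skew-symmetric matrix W with W v = w x v."""
    w1, w2, w3 = w
    return [[0, -w3, w2],
            [w3, 0, -w1],
            [-w2, w1, 0]]

def matvec(M, v):
    """Matrix-vector product for a 3x3 nested list and a 3-tuple."""
    return tuple(sum(M[i][j] * v[j] for j in range(3)) for i in range(3))

def vector_from_matrix(W):
    """Recover w from its cross product matrix: (W_32, W_13, W_21)."""
    return (W[2][1], W[0][2], W[1][0])

w = (1, 2, 3)
v = (4, 5, 6)
W = cross_matrix(w)

print(matvec(W, v), cross(w, v))   # (-3, 6, -3) (-3, 6, -3)
print(vector_from_matrix(W) == w)  # True

# For w = b x a the matrix equals a (x) b - b (x) a, entry-wise a_i b_j - b_i a_j.
a, b = (1, 2, 3), (4, 5, 6)
dyadic = [[a[i] * b[j] - b[i] * a[j] for j in range(3)] for i in range(3)]
print(cross_matrix(cross(b, a)) == dyadic)  # True
```

The matrix form is convenient when the same vector is crossed with many others, since the operation then becomes an ordinary matrix-vector product.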

Polar and axial vectors

When the cross product is applied to vector-valued physical quantities, the distinction between polar vectors (those that behave like differences of two position vectors, for example velocity, acceleration, force, electric field strength) and axial vectors or rotation vectors, also called pseudovectors (those that behave like axes of rotation, for example angular velocity, torque, angular momentum, magnetic flux density), plays an important role.

Polar vectors are assigned the signature (or parity) −1, axial vectors the signature +1 (a polar vector changes sign under a point reflection, an axial vector does not). When two vectors are multiplied by the cross product, these signatures multiply: two vectors with the same signature give an axial vector product, two with different signatures give a polar one. In operational terms: a vector passes its signature on to the cross product with another vector if that other vector is axial; if the other vector is polar, the cross product gets the opposite signature.

Operations derived from the cross product

Triple product

The combination of cross product and scalar product in the form

$$(\vec{a}, \vec{b}, \vec{c}) = (\vec{a} \times \vec{b}) \cdot \vec{c}$$

is called the scalar triple product. The result is a number that equals the oriented volume of the parallelepiped spanned by the three vectors. The triple product can also be written as the determinant of the three vectors:

$$(\vec{a}, \vec{b}, \vec{c}) = \det(\vec{a}, \vec{b}, \vec{c}) .$$
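The equality of the triple product and the determinant can be illustrated with a small sketch. The function names `triple_product` and `det3` and the sample vectors are assumptions for illustration; `det3` expands the determinant of the matrix whose columns are the three vectors.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Standard scalar product."""
    return sum(x * y for x, y in zip(a, b))

def triple_product(a, b, c):
    """Scalar triple product (a x b) . c."""
    return dot(cross(a, b), c)

def det3(a, b, c):
    """Determinant of the 3x3 matrix with columns a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - b[0] * (a[1] * c[2] - a[2] * c[1])
            + c[0] * (a[1] * b[2] - a[2] * b[1]))

a, b, c = (1, 2, 3), (4, 5, 6), (7, 8, 10)
print(triple_product(a, b, c), det3(a, b, c))  # -3 -3

# Swapping two vectors flips the orientation and hence the sign.
print(triple_product(b, a, c))  # 3
```

The negative sign of the first result indicates that `a`, `b`, `c` in this order form a left-handed system; the absolute value 3 is the volume of the parallelepiped.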

Curl

In vector analysis, the cross product is used together with the nabla operator $\nabla$ to denote the differential operator curl (written $\operatorname{rot}$, for "rotation"). If $\vec{V}$ is a vector field in $\mathbb{R}^3$, then

$$\operatorname{rot} \vec{V} = \nabla \times \vec{V} = \begin{pmatrix} \frac{\partial}{\partial x_1} \\[.5em] \frac{\partial}{\partial x_2} \\[.5em] \frac{\partial}{\partial x_3} \end{pmatrix} \times \begin{pmatrix} V_1 \\[.5em] V_2 \\[.5em] V_3 \end{pmatrix} = \begin{pmatrix} \frac{\partial}{\partial x_2} V_3 - \frac{\partial}{\partial x_3} V_2 \\[.5em] \frac{\partial}{\partial x_3} V_1 - \frac{\partial}{\partial x_1} V_3 \\[.5em] \frac{\partial}{\partial x_1} V_2 - \frac{\partial}{\partial x_2} V_1 \end{pmatrix} = \begin{pmatrix} \frac{\partial V_3}{\partial x_2} - \frac{\partial V_2}{\partial x_3} \\[.5em] \frac{\partial V_1}{\partial x_3} - \frac{\partial V_3}{\partial x_1} \\[.5em] \frac{\partial V_2}{\partial x_1} - \frac{\partial V_1}{\partial x_2} \end{pmatrix}$$

is again a vector field, the curl of $\vec{V}$.

Formally, this vector field is calculated as the cross product of the nabla operator and the vector field $\vec{V}$. However, the expressions $\frac{\partial}{\partial x_i} V_j$ that occur here are not products but applications of the differential operator $\frac{\partial}{\partial x_i}$ to the function $V_j$. Therefore the calculation rules listed above, such as the Graßmann identity, are not valid in this case. Instead, special calculation rules apply to double cross products with the nabla operator.
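To make the component formula for the curl concrete, the following sketch approximates the partial derivatives by central finite differences and evaluates the curl of a simple sample field at one point. The function `curl`, the step size `h` and the field `V(x, y, z) = (-y, x, 0)` are assumptions chosen for illustration; for this field the exact curl is (0, 0, 2) everywhere, so the numerical result should be close to that.

```python
def curl(V, p, h=1e-5):
    """Approximate curl of the vector field V at point p by central differences."""
    def partial(component, axis):
        # d V_component / d x_axis at p
        forward = list(p)
        backward = list(p)
        forward[axis] += h
        backward[axis] -= h
        return (V(*forward)[component] - V(*backward)[component]) / (2 * h)

    return (partial(2, 1) - partial(1, 2),   # dV3/dx2 - dV2/dx3
            partial(0, 2) - partial(2, 0),   # dV1/dx3 - dV3/dx1
            partial(1, 0) - partial(0, 1))   # dV2/dx1 - dV1/dx2

def V(x, y, z):
    """Sample field describing a rigid rotation about the z-axis."""
    return (-y, x, 0.0)

result = curl(V, (1.0, 2.0, 3.0))
print(result)  # approximately (0.0, 0.0, 2.0)
```

Central differences are exact for linear fields up to rounding error; for general fields the result is only an approximation whose quality depends on `h`.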

Cross product in n-dimensional space

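The cross product can be generalized to the n-dimensional space $\mathbb{R}^n$ for any dimension $n \geq 2$. The cross product in $\mathbb{R}^n$ is not a product of two factors but of $n-1$ factors.

The cross product $\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1}$ of the vectors $\vec{a}_1, \dots, \vec{a}_{n-1} \in \mathbb{R}^n$ is characterized by the fact that for every vector $\vec{v} \in \mathbb{R}^n$

$$\vec{v} \cdot (\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1}) = \det(\vec{v}, \vec{a}_1, \dots, \vec{a}_{n-1}) .$$

In coordinates, the cross product can be calculated as follows. Let $\vec{e}_i$ be the $i$-th canonical unit vector. For $n-1$ vectors

$$\vec{a}_1 = \begin{pmatrix} a_{11} \\ a_{21} \\ \vdots \\ a_{n1} \end{pmatrix}, \quad \vec{a}_2 = \begin{pmatrix} a_{12} \\ a_{22} \\ \vdots \\ a_{n2} \end{pmatrix}, \quad \dots, \quad \vec{a}_{n-1} = \begin{pmatrix} a_{1(n-1)} \\ a_{2(n-1)} \\ \vdots \\ a_{n(n-1)} \end{pmatrix}$$

the following holds:

$$\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1} = \det \begin{pmatrix} \vec{e}_1 & a_{11} & \cdots & a_{1(n-1)} \\ \vec{e}_2 & a_{21} & \cdots & a_{2(n-1)} \\ \vdots & \vdots & \ddots & \vdots \\ \vec{e}_n & a_{n1} & \cdots & a_{n(n-1)} \end{pmatrix},$$

analogous to the calculation with a symbolic determinant described above.

The vector $\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1}$ is orthogonal to $\vec{a}_1, \vec{a}_2, \dots, \vec{a}_{n-1}$. The orientation is such that the vectors $\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1}, \vec{a}_1, \vec{a}_2, \dots, \vec{a}_{n-1}$ in this order form a right-handed system. The magnitude of $\vec{a}_1 \times \vec{a}_2 \times \cdots \times \vec{a}_{n-1}$ equals the $(n-1)$-dimensional volume of the parallelotope spanned by $\vec{a}_1, \vec{a}_2, \dots, \vec{a}_{n-1}$.

For $n = 2$ one does not obtain a product but only a linear map

$$\mathbb{R}^2 \to \mathbb{R}^2; \quad \begin{pmatrix} a_1 \\ a_2 \end{pmatrix} \mapsto \begin{pmatrix} a_2 \\ -a_1 \end{pmatrix},$$

the rotation by 90° clockwise.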

This also shows that the factor vectors of the cross product together with the result vector, in this order, do not in general form a right-handed system, unlike what is customary in $\mathbb{R}^3$. They do so only in real vector spaces of odd dimension $n$; for even $n$ the result vector forms a left-handed system with the factor vectors. This in turn is because, in spaces of even dimension, the basis $(\vec{a}_1, \dots, \vec{a}_{n-1}, \vec{a}_1 \times \cdots \times \vec{a}_{n-1})$ does not have the same orientation as the basis $(\vec{a}_1 \times \cdots \times \vec{a}_{n-1}, \vec{a}_1, \dots, \vec{a}_{n-1})$, which by definition (see above) is a right-handed system. A small change in the definition would make the vectors in the first-mentioned order always form a right-handed system, namely if the column of unit vectors in the symbolic determinant were placed at the far right; however, this definition has not become established.
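The symbolic determinant can be turned into a small routine by expanding along the column of unit vectors: the $i$-th component of the result is the signed minor obtained by deleting row $i$. The sketch below does this with a naive recursive determinant, which is adequate only for small $n$. The names `det` and `cross_n` and the sample vectors are assumptions for illustration, and indices are zero-based.

```python
def det(M):
    """Determinant by Laplace expansion along the first row (small matrices only)."""
    n = len(M)
    if n == 1:
        return M[0][0]
    total = 0
    for j in range(n):
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * M[0][j] * det(minor)
    return total

def cross_n(*vectors):
    """Generalized cross product of n-1 vectors in n-dimensional space."""
    n = len(vectors) + 1
    assert all(len(v) == n for v in vectors), "need n-1 vectors of length n"
    # Row i of A holds the i-th components of all vectors (the vectors are columns).
    A = [[v[i] for v in vectors] for i in range(n)]
    # Expansion along the unit-vector column: cofactor sign (-1)^i, minor without row i.
    return tuple((-1) ** i * det(A[:i] + A[i + 1:]) for i in range(n))

def dot(a, b):
    """Standard scalar product."""
    return sum(x * y for x, y in zip(a, b))

print(cross_n((1, 0)))                    # (0, -1): rotation by 90 degrees clockwise
print(cross_n((1, 2, 3), (-7, 8, 9)))     # (-6, -30, 22): the usual 3D cross product
print(cross_n((1, 0, 0, 0), (0, 1, 0, 0), (0, 0, 1, 0)))  # (0, 0, 0, -1)

# Orthogonality in four dimensions for an arbitrary integer example.
a1, a2, a3 = (1, 2, 3, 4), (0, 1, -1, 2), (2, 0, 1, 1)
c = cross_n(a1, a2, a3)
print([dot(c, v) for v in (a1, a2, a3)])  # [0, 0, 0]
```

The four-dimensional example with the first three unit vectors returns the negative of the fourth unit vector, which illustrates the orientation remark above for even $n$.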

An even further generalization leads to the Graßmann algebras. These algebras are used, for example, in formulations of differential geometry that allow a rigorous description of classical mechanics (symplectic manifolds), quantum geometry and, first and foremost, general relativity. In the literature, the cross product in higher-dimensional and possibly curved space is usually written out in index notation using the Levi-Civita symbol.

Applications

The cross product is used in many areas of mathematics and physics, including the following topics:
