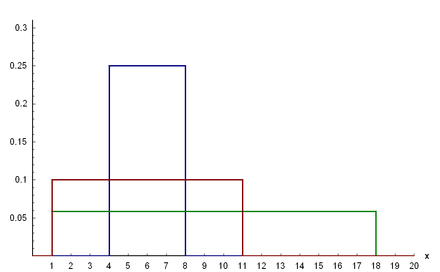

The continuous uniform distribution, also called the rectangular distribution or simply the uniform distribution, is a continuous probability distribution. It has a constant probability density on an interval $[a, b]$. Equivalently, all sub-intervals of $[a, b]$ of the same length have the same probability.


Being able to simulate the continuous uniform distribution on the interval from 0 to 1 is the basis for generating random numbers with many other distributions, using the inversion method or the rejection method.

Definition

A continuous random variable $X$ is called uniformly distributed on the interval $[a, b]$, with $a < b$, if its density function and distribution function are given by

$$f(x) = \begin{cases} \dfrac{1}{b-a} & a \le x \le b \\ 0 & \text{otherwise} \end{cases} \qquad F(x) = \begin{cases} 0 & x \le a \\ \dfrac{x-a}{b-a} & a < x < b \\ 1 & x \ge b \end{cases}$$
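The two piecewise definitions translate directly into code. The following is a minimal sketch; the function names `uniform_pdf` and `uniform_cdf` are hypothetical, chosen for illustration, and the check `a < b` reflects the assumption stated in the definition.

```python
def uniform_pdf(x: float, a: float, b: float) -> float:
    """Density of U(a, b): 1/(b - a) on [a, b], 0 elsewhere."""
    if not a < b:
        raise ValueError("require a < b")
    return 1.0 / (b - a) if a <= x <= b else 0.0


def uniform_cdf(x: float, a: float, b: float) -> float:
    """Distribution function of U(a, b): linear ramp from 0 at a to 1 at b."""
    if not a < b:
        raise ValueError("require a < b")
    if x <= a:
        return 0.0
    if x >= b:
        return 1.0
    return (x - a) / (b - a)


print(uniform_pdf(3.0, 2.0, 6.0))  # 0.25
print(uniform_cdf(3.0, 2.0, 6.0))  # 0.25
```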

The continuous uniform distribution is often abbreviated as $U(a, b)$ or $\mathcal{U}(a, b)$. Some formulas also use other designations for the distribution. The continuous uniform distribution is completely determined by its expected value and its variance; that is, all higher moments can be calculated from these two quantities.

Properties

Probabilities

The probability that a uniformly distributed random variable lies in a sub-interval $[c, d] \subseteq [a, b]$ equals the ratio of the interval lengths:

$$P(c \le X \le d) = \frac{d-c}{b-a}.$$
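As a quick sanity check of the ratio-of-lengths rule, the sketch below compares the closed form with the fraction of simulated draws that fall into $[c, d]$. The names `prob_subinterval` and `estimate_subinterval` are hypothetical; the simulation uses Python's standard `random.Random.uniform`.

```python
import random


def prob_subinterval(c, d, a, b):
    """P(c <= X <= d) for X ~ U(a, b), assuming [c, d] is a subset of [a, b]."""
    if not (a <= c <= d <= b):
        raise ValueError("need a <= c <= d <= b")
    return (d - c) / (b - a)


def estimate_subinterval(c, d, a, b, n=100_000, seed=0):
    """Monte Carlo estimate: fraction of U(a, b) draws that land in [c, d]."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n) if c <= rng.uniform(a, b) <= d)
    return hits / n


print(prob_subinterval(1.0, 2.0, 0.0, 4.0))  # 0.25
print(estimate_subinterval(1.0, 2.0, 0.0, 4.0))  # close to 0.25
```

Only the length $d - c$ enters the formula, so any two sub-intervals of the same length get the same probability, wherever they sit inside $[a, b]$.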

Expected value and median

The expected value and the median of the continuous uniform distribution are both equal to the midpoint of the interval $[a, b]$:

$$\operatorname{E}(X) = \int_a^b \frac{x}{b-a}\,dx = \frac{a+b}{2}, \qquad \tilde{m} = \frac{a+b}{2}.$$

Variance

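The variance of the continuous uniform distribution is

$$\operatorname{Var}(X) = \operatorname{E}(X^2) - \operatorname{E}(X)^2 = \frac{a^2+ab+b^2}{3} - \left(\frac{a+b}{2}\right)^2 = \frac{(b-a)^2}{12}.$$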

Standard deviation and other measures of dispersion

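The standard deviation follows from the variance:

$$\sigma_X = \sqrt{\operatorname{Var}(X)} = \frac{b-a}{\sqrt{12}} = \frac{b-a}{2\sqrt{3}} \approx 0.289\,(b-a).$$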

The mean absolute deviation is $\operatorname{E}\lvert X - \operatorname{E}(X)\rvert = \frac{b-a}{4}$, and the interquartile range $\frac{b-a}{2}$ is exactly twice as large. The uniform distribution is the only symmetric distribution with monotone density that has this property.

Coefficient of variation

The coefficient of variation is

$$\operatorname{CV}(X) = \frac{\sigma_X}{\operatorname{E}(X)} = \frac{b-a}{\sqrt{3}\,(a+b)}, \qquad a + b \neq 0.$$
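The closed-form summary statistics above can be collected in one place and compared against sample statistics from simulated data. This is a sketch using only the standard `statistics` module; the function names `uniform_summary` and `empirical_summary` are hypothetical, and the coefficient of variation is returned as `nan` when $a + b = 0$, where it is undefined.

```python
import math
import random
import statistics


def uniform_summary(a, b):
    """Closed-form summary statistics of U(a, b)."""
    width = b - a
    mean = (a + b) / 2
    sd = width / math.sqrt(12)
    return {
        "mean": mean,
        "median": mean,
        "variance": width ** 2 / 12,
        "sd": sd,
        "mad": width / 4,   # mean absolute deviation E|X - E(X)|
        "iqr": width / 2,   # Q3 - Q1, twice the mean absolute deviation
        "cv": sd / mean if mean != 0 else math.nan,
    }


def empirical_summary(a, b, n=200_000, seed=1):
    """The same quantities estimated from n simulated U(a, b) draws."""
    rng = random.Random(seed)
    xs = [rng.uniform(a, b) for _ in range(n)]
    m = statistics.fmean(xs)
    q1, _, q3 = statistics.quantiles(xs, n=4)
    return {
        "mean": m,
        "variance": statistics.pvariance(xs, mu=m),
        "mad": statistics.fmean(abs(x - m) for x in xs),
        "iqr": q3 - q1,
    }


print(uniform_summary(2.0, 6.0))
print(empirical_summary(2.0, 6.0))
```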

Symmetry

The continuous uniform distribution is symmetric about $\frac{a+b}{2}$.

Skewness

Because the distribution is symmetric, its skewness is

$$\gamma_1 = 0.$$

Kurtosis and excess kurtosis

The kurtosis and the excess kurtosis can also be given in closed form:

$$\beta_2 = \frac{9}{5} = 1.8 \qquad \text{and} \qquad \gamma_2 = \beta_2 - 3 = -\frac{6}{5} = -1.2.$$

Moments

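$k$-th moment:

$$m_k = \operatorname{E}(X^k) = \frac{b^{k+1} - a^{k+1}}{(k+1)(b-a)}$$

$k$-th central moment:

$$\mu_k = \operatorname{E}\big((X - \operatorname{E}(X))^k\big) = \begin{cases} 0 & k \text{ odd} \\ \dfrac{(b-a)^k}{2^k\,(k+1)} & k \text{ even} \end{cases}$$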
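With exact rational arithmetic the moment formulas can be cross-checked against each other and used to recover the variance, skewness and kurtosis stated earlier. This sketch uses `fractions.Fraction` and `math.comb` from the standard library; the function names are hypothetical. `central_from_raw` expands $\operatorname{E}\big((X-\mu)^k\big)$ binomially in the raw moments, illustrating that all moments follow from the two parameters.

```python
from fractions import Fraction
from math import comb


def raw_moment(k, a, b):
    """k-th moment E[X^k] = (b^(k+1) - a^(k+1)) / ((k+1)(b-a)) of U(a, b), exact."""
    a, b = Fraction(a), Fraction(b)
    return (b ** (k + 1) - a ** (k + 1)) / ((k + 1) * (b - a))


def central_moment(k, a, b):
    """k-th central moment: 0 for odd k, ((b-a)/2)^k / (k+1) for even k."""
    if k % 2 == 1:
        return Fraction(0)
    half_width = (Fraction(b) - Fraction(a)) / 2
    return half_width ** k / (k + 1)


def central_from_raw(k, a, b):
    """Same central moment via the binomial expansion of E[(X - mu)^k] in raw moments."""
    mu = raw_moment(1, a, b)
    return sum(comb(k, j) * raw_moment(j, a, b) * (-mu) ** (k - j) for j in range(k + 1))


def shape_measures(a, b):
    """Variance, skewness, kurtosis and excess kurtosis from the central moments."""
    m2, m3, m4 = (central_moment(k, a, b) for k in (2, 3, 4))
    skewness = float(m3) / float(m2) ** 1.5  # m3 is exactly 0 by symmetry
    kurtosis = m4 / m2 ** 2                   # exact Fraction
    return m2, skewness, kurtosis, kurtosis - 3


print(shape_measures(0, 1))  # (Fraction(1, 12), 0.0, Fraction(9, 5), Fraction(-6, 5))
```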

Sum of uniformly distributed random variables

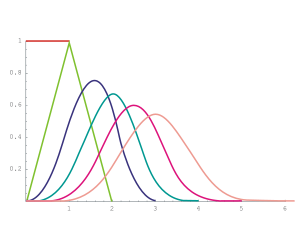

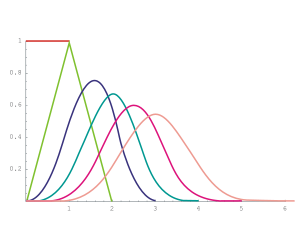

Distribution densities of the sum of up to 6 uniform distributions U(0,1)

The sum of two independent continuously uniformly distributed random variables whose supports have the same width is triangularly distributed; otherwise, the result is a trapezoidal distribution. More precisely:

Let two independent random variables be continuously uniformly distributed, one on the interval $[a, b]$ and the other on the interval $[c, d]$. Let $\alpha = \min(b-a,\, d-c)$ and $\beta = \max(b-a,\, d-c)$. Then their sum has the following trapezoidal density:

$$f \colon \mathbb{R} \to \mathbb{R},\quad x \longmapsto \begin{cases} 0 & x \notin [a+c,\, b+d] \\ \dfrac{x}{\alpha\beta} - \dfrac{a+c}{\alpha\beta} & x \in [a+c,\, a+c+\alpha] \\ \dfrac{1}{\beta} & x \in [a+c+\alpha,\, a+c+\beta] \\ \dfrac{b+d}{\alpha\beta} - \dfrac{x}{\alpha\beta} & x \in [a+c+\beta,\, b+d] \end{cases}$$
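The trapezoidal density can be coded piece by piece and compared with simulated sums. In this sketch `sum_density` and `simulate_sum` are hypothetical names; `alpha` and `beta` are taken as the shorter and the longer of the two support widths, which is what makes the flat top have height $1/\beta$ and the total area equal 1.

```python
import random


def sum_density(x, a, b, c, d):
    """Density of X + Y for independent X ~ U(a, b), Y ~ U(c, d) (trapezoid)."""
    alpha = min(b - a, d - c)  # shorter support width
    beta = max(b - a, d - c)   # longer support width
    lo, hi = a + c, b + d
    if x < lo or x > hi:
        return 0.0
    if x <= lo + alpha:        # rising edge
        return (x - lo) / (alpha * beta)
    if x <= lo + beta:         # flat top at height 1/beta
        return 1.0 / beta
    return (hi - x) / (alpha * beta)  # falling edge


def simulate_sum(a, b, c, d, n=100_000, seed=2):
    """Draw n samples of X + Y for comparison with sum_density."""
    rng = random.Random(seed)
    return [rng.uniform(a, b) + rng.uniform(c, d) for _ in range(n)]


print(sum_density(1.0, 0, 1, 0, 1))  # 1.0: peak of the triangle when widths are equal
```

For $U(0,1) + U(0,3)$ the rising edge gives $P(X + Y \le 1) = \int_0^1 \frac{x}{3}\,dx = \frac{1}{6}$, which the simulated sums should reproduce approximately.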

The sum of $n$ independent random variables uniformly distributed on the interval $[0, 1]$ follows the Irwin-Hall distribution; as $n$ grows, it approaches the normal distribution (central limit theorem).

An occasionally used method (the rule of twelve) for approximately generating standard normally distributed random numbers works as follows: add 12 independent random numbers uniformly distributed on $[0, 1]$ and subtract 6. This gives the correct moments, because a $U(0, 1)$-distributed random variable has expected value $1/2$ and variance $1/12$, so the sum of twelve has expected value $6$ and variance $1$.
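A minimal sketch of the rule of twelve, using only `random.Random.random` and the `statistics` module. The function name `rule_of_twelve` is hypothetical. Each draw is an Irwin-Hall variable with $n = 12$ shifted by 6, so the values are confined to $[-6, 6]$ and the extreme tails of the normal distribution are never produced.

```python
import random
import statistics


def rule_of_twelve(rng: random.Random) -> float:
    """Approximate N(0, 1) draw: sum of 12 U(0, 1) numbers minus 6."""
    return sum(rng.random() for _ in range(12)) - 6.0


rng = random.Random(42)
samples = [rule_of_twelve(rng) for _ in range(50_000)]
print(statistics.fmean(samples), statistics.pstdev(samples))  # close to 0 and 1
```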

Characteristic function

The characteristic function is

$$\varphi_X(t) = \frac{e^{itb} - e^{ita}}{it\,(b-a)} \quad (t \neq 0), \qquad \varphi_X(0) = 1,$$

where $i$ denotes the imaginary unit.
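The closed form can be checked numerically by approximating $\operatorname{E}(e^{itX}) = \frac{1}{b-a}\int_a^b e^{itx}\,dx$ with the midpoint rule. This sketch uses the standard `cmath` module; `char_fn` and `char_fn_numeric` are hypothetical names, and the step count `n` is an arbitrary choice that is accurate enough for moderate $|t|$.

```python
import cmath


def char_fn(t, a, b):
    """phi(t) = (e^{itb} - e^{ita}) / (it(b - a)), with phi(0) = 1."""
    if t == 0:
        return 1 + 0j
    return (cmath.exp(1j * t * b) - cmath.exp(1j * t * a)) / (1j * t * (b - a))


def char_fn_numeric(t, a, b, n=10_000):
    """Midpoint-rule approximation of the integral of e^{itx}/(b-a) over [a, b]."""
    h = (b - a) / n
    return sum(cmath.exp(1j * t * (a + (k + 0.5) * h)) for k in range(n)) * h / (b - a)


print(char_fn(2.0, -1.0, 2.0), char_fn_numeric(2.0, -1.0, 2.0))
```

For a symmetric interval $[-1, 1]$ the formula reduces to the real-valued $\sin(t)/t$.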

Moment generating function

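The moment-generating function of the continuous uniform distribution is

$$m_X(t) = \begin{cases} \dfrac{e^{tb} - e^{ta}}{t\,(b-a)} & t \neq 0 \\ 1 & t = 0 \end{cases}$$

and in particular, for $a = 0$ and $b = 1$,

$$m_X(t) = \begin{cases} \dfrac{e^{t} - 1}{t} & t \neq 0 \\ 1 & t = 0. \end{cases}$$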
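As a worked check, expanding the moment-generating function as a power series in $t$ (for $t \neq 0$; the $j = 0$ term of the exponential series cancels) recovers the $k$-th moments $\operatorname{E}(X^k) = \frac{b^{k+1}-a^{k+1}}{(k+1)(b-a)}$ as the coefficients of $t^k/k!$:

$$m_X(t) = \frac{e^{tb}-e^{ta}}{t\,(b-a)} = \frac{1}{t\,(b-a)} \sum_{j=1}^{\infty} \frac{t^{j}\,(b^{j}-a^{j})}{j!} = \sum_{k=0}^{\infty} \frac{t^{k}}{k!} \cdot \frac{b^{k+1}-a^{k+1}}{(k+1)(b-a)}.$$

The $k = 0$ term equals 1, which agrees with $m_X(0) = 1$.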

Relationship to other distributions

Relationship to the triangular distribution

The sum of two independent random variables that are continuously uniformly distributed on intervals of the same length has a triangular distribution.

Relationship to beta distribution

If $X_1, \dots, X_n$ are independent random variables, each continuously uniformly distributed on $[0, 1]$, then the order statistics $X_{(1)}, \dots, X_{(n)}$ have beta distributions. More precisely,

$$X_{(k)} \sim \operatorname{Beta}(k,\, n-k+1)$$

for $k = 1, \dots, n$.
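One consequence that is easy to check by simulation: the mean of $\operatorname{Beta}(k, n-k+1)$ is $\frac{k}{n+1}$, so the $k$-th smallest of $n$ uniform numbers should average $\frac{k}{n+1}$. The sketch below (hypothetical function name `order_statistic_means`, standard library only) sorts repeated samples and averages each position.

```python
import random


def order_statistic_means(n, trials=50_000, seed=3):
    """Average of each sorted position over many samples of n iid U(0, 1) values."""
    rng = random.Random(seed)
    totals = [0.0] * n
    for _ in range(trials):
        for k, x in enumerate(sorted(rng.random() for _ in range(n))):
            totals[k] += x
    return [t / trials for t in totals]


# If X_(k) ~ Beta(k, n - k + 1), the expected values are k / (n + 1): 0.2, 0.4, 0.6, 0.8 for n = 4.
print(order_statistic_means(4))
```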

Simulation of distributions from the continuous uniform distribution

With the inversion method, uniformly distributed random numbers can be transformed into random numbers with other distributions. For example, if $U$ is a $U(0, 1)$-distributed random variable, then

$$X = -\frac{1}{\lambda} \ln(U)$$

follows the exponential distribution with parameter $\lambda$.
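A minimal sketch of this inversion, using `random.Random.random` and `math.log` from the standard library. Because `random()` returns values in $[0, 1)$, the sketch uses $1 - $ `random()` as $U$, which lies in $(0, 1]$ and is still uniformly distributed, so the logarithm is always finite. The function name `exponential_by_inversion` is hypothetical.

```python
import math
import random


def exponential_by_inversion(lam, rng):
    """X = -ln(U) / lam with U ~ U(0, 1); uses 1 - random() so that U lies in (0, 1]."""
    u = 1.0 - rng.random()  # in (0, 1], so log(u) is finite
    return -math.log(u) / lam


rng = random.Random(7)
xs = [exponential_by_inversion(2.0, rng) for _ in range(100_000)]
print(sum(xs) / len(xs))  # close to 1 / lambda = 0.5
```

A quick distributional check is the tail probability: for an exponential distribution, $P(X > 1) = e^{-\lambda}$.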

Generalization to higher dimensions

The continuous uniform distribution can be generalized from the interval $[a, b]$ to any measurable subset $\Omega \subseteq \mathbb{R}^n$ with Lebesgue measure $0 < \lambda^n(\Omega) < \infty$. One then sets

$$P(A) = \frac{\lambda^n(A)}{\lambda^n(\Omega)}$$

for measurable $A \subseteq \Omega$.
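For $\Omega$ the unit disk in $\mathbb{R}^2$, uniform points can be produced with the rejection method mentioned in the introduction: draw uniformly from the enclosing square $[-1, 1]^2$ and keep only points inside the disk. This sketch (hypothetical function name `uniform_in_unit_disk`) then checks $P(A) = \lambda^2(A)/\lambda^2(\Omega)$ for $A$ the disk of radius $1/2$, where the ratio of areas is $\frac{1}{4}$.

```python
import random


def uniform_in_unit_disk(rng):
    """Draw a point uniformly from the unit disk by rejection from the square [-1, 1]^2."""
    while True:
        x, y = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
        if x * x + y * y <= 1.0:  # accept only points inside the disk
            return x, y


rng = random.Random(11)
pts = [uniform_in_unit_disk(rng) for _ in range(50_000)]
inner = sum(1 for x, y in pts if x * x + y * y <= 0.25) / len(pts)
print(inner)  # close to (pi * 0.5**2) / (pi * 1**2) = 0.25
```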

Discrete case

The uniform distribution is also defined on finite sets; it is then called the discrete uniform distribution.

Example for the interval [0, 1]

Often $a = 0$ and $b = 1$ are assumed, that is, $U(0, 1)$ is considered. The density function is then constant and equal to 1 on the interval $[0, 1]$, and the distribution function there is $F(x) = x$. Accordingly, the expected value is $\operatorname{E}(X) = \frac{1}{2}$, the variance is $\operatorname{Var}(X) = \frac{1}{12}$ and the standard deviation is $\sigma_X = \frac{1}{\sqrt{12}} \approx 0.289$; the latter two values also hold for any interval $[a, a+1]$ of length 1. See also the section Sum of uniformly distributed random variables above.

If $X$ is a $U(0, 1)$-distributed random variable, then

$$Y = a + (b-a)\,X$$

is $U(a, b)$-distributed.
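The affine map is how a $U(a, b)$ sample is usually obtained from a $U(0, 1)$ generator; Python's own `random.uniform` is documented in terms of the expression `a + (b-a) * random()`. The sketch below (hypothetical function name `uniform_ab_from_standard`) applies the map explicitly and checks the resulting mean $\frac{a+b}{2}$ and variance $\frac{(b-a)^2}{12}$.

```python
import random
import statistics


def uniform_ab_from_standard(a, b, u):
    """Map u from U(0, 1) to a + (b - a) * u, which is U(a, b)-distributed."""
    return a + (b - a) * u


rng = random.Random(5)
ys = [uniform_ab_from_standard(-2.0, 3.0, rng.random()) for _ in range(100_000)]
print(statistics.fmean(ys), statistics.pvariance(ys))  # close to 0.5 and 25/12
```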
