Negative binomial distribution

| Negative binomial distribution | |

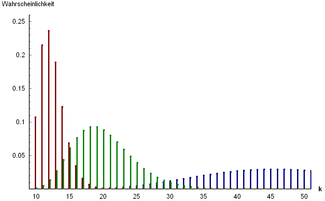

Probability distribution expected value = 10 orange; green the standard deviation |

|

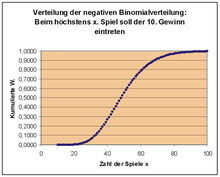

| Distribution function |

|

| parameter |

r > 0 - number of successes until termination p ∈ (0.1) - single success probability |

|---|---|

| carrier | k ∈ {0, 1, 2, 3,…} - number of failures |

| Probability function | |

| Distribution function | Euler's beta function |

| Expected value | |

| mode | |

| Variance | |

| Crookedness | |

| Bulge | |

| Moment generating function | |

| Characteristic function | |

The negative binomial distribution (also called the Pascal distribution) is a univariate probability distribution. It is a discrete probability distribution and one of the three Panjer distributions.

It describes the number of trials required to achieve a given number of successes in a Bernoulli process.

Alongside the Poisson distribution, the negative binomial distribution is the most important claim count distribution in actuarial mathematics. There it is used mainly to model the number of claims in health insurance, and less often in motor liability or comprehensive motor insurance.

Derivation of the negative binomial distribution

This distribution can be described with the urn model with replacement: an urn contains two kinds of balls (a dichotomous population). The proportion of balls of the first kind is $p$, so the probability of drawing a ball of the first kind is $p$.

A ball is drawn and put back, repeatedly, until exactly $r$ balls of the first kind have been drawn. Define the random variable $X$: "number of trials until $r$ successes have occurred for the first time". The number of trials depends on $r$. $X$ has countably infinitely many possible values.

The probability $P(X = n)$ that $n$ trials were needed to achieve $r$ successes follows from this consideration:

Immediately before the last draw, $n-1$ trials have taken place and a total of $r-1$ balls of the first kind have been drawn. The probability of this is given by the binomial distribution of the random variable $Y$: "number of balls of the first kind in $n-1$ trials":

$$P(Y = r-1) = \binom{n-1}{r-1} p^{r-1} (1-p)^{n-r}$$

The probability that one more ball of the first kind is then drawn is

$$P(X = n) = \binom{n-1}{r-1} p^{r-1} (1-p)^{n-r} \cdot p = \binom{n-1}{r-1} p^{r} (1-p)^{n-r}.$$

A random variable $X$ is said to follow the negative binomial distribution $NB(r, p)$ with parameters $r$ (number of successful trials) and $p$ (probability of success in a single trial) if its probability function is

$$P(X = n) = \binom{n-1}{r-1} p^{r} (1-p)^{n-r}, \quad n = r, r+1, r+2, \dots$$

This variant is called variant A here to avoid confusion.
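The two-step argument of the derivation (a binomial probability for the first trials, then one final success) can be sketched in Python. This is a minimal illustration, assuming the variant A probability function $\binom{n-1}{r-1} p^{r} (1-p)^{n-r}$ for $n \ge r$; the function name `pmf_variant_a` and the parameter values are hypothetical choices for the example.

```python
from math import comb

def pmf_variant_a(n: int, r: int, p: float) -> float:
    """P(X = n): the r-th success occurs exactly on trial n (assumed variant A)."""
    if n < r:
        return 0.0  # r successes need at least r trials
    # r - 1 successes somewhere among the first n - 1 trials (binomial part) ...
    binomial_part = comb(n - 1, r - 1) * p ** (r - 1) * (1 - p) ** (n - r)
    # ... followed by a success on trial n itself
    return binomial_part * p

# The probabilities over n = r, r + 1, ... should add up to (almost) 1.
total = sum(pmf_variant_a(n, 3, 0.4) for n in range(3, 400))
print(total)
```

Truncating the sum at 400 trials is an arbitrary cut-off; the remaining tail is negligible for these parameters.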

Alternative definition

A discrete random variable $Z$ follows the negative binomial distribution $NB(r, p)$ with parameters $r$ and $p$ if it has the probabilities

$$P(Z = k) = \binom{k+r-1}{k} p^{r} (1-p)^{k}$$

for $k \in \mathbb{N}_0$.

The two definitions are related by $Z = X - r$: the first definition counts the number of trials (successful and unsuccessful) until the $r$-th success occurs, whereas the alternative counts only the failures before the $r$-th success. The successes are not counted, so the random variable $Z$ describes only the number of unsuccessful trials.

This variant is called variant B here.
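The shift between the two variants can be checked numerically. The sketch below assumes the two probability functions in their usual form (trials until the $r$-th success for variant A, failures before the $r$-th success for variant B); the helper names `pmf_trials` and `pmf_failures` are illustrative only.

```python
from math import comb

def pmf_trials(n, r, p):
    """Variant A (assumed form): the r-th success occurs on trial n."""
    return comb(n - 1, r - 1) * p**r * (1 - p)**(n - r) if n >= r else 0.0

def pmf_failures(k, r, p):
    """Variant B (assumed form): k failures occur before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k if k >= 0 else 0.0

r, p = 4, 0.3  # illustrative parameter values
# k failures plus r successes make n = k + r trials, so both values must agree.
max_gap = max(abs(pmf_trials(k + r, r, p) - pmf_failures(k, r, p)) for k in range(50))
print(max_gap)
```

The agreement rests on the identity $\binom{k+r-1}{k} = \binom{n-1}{r-1}$ for $n = k + r$.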

Properties of the negative binomial distribution

Expected value

- Variant A

The expected value is

$$\operatorname{E}(X) = \frac{r}{p}.$$

- Variant B

With the alternative definition, the expected value is smaller by $r$:

$$\operatorname{E}(Z) = \frac{r(1-p)}{p}.$$

Variance

The variance of the negative binomial distribution is the same for both definitions:

$$\operatorname{Var}(X) = \operatorname{Var}(Z) = \frac{r(1-p)}{p^{2}}.$$

With the alternative definition, the variance is always greater than the expected value (overdispersion).
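The expected value and variance can be verified by summing over the probability function. The following sketch assumes the variant B probability function $\binom{k+r-1}{k} p^{r} (1-p)^{k}$ and the relation $X = Z + r$ between the variants; the parameter values and the truncation of the sum at 2000 terms are arbitrary choices for the illustration.

```python
from math import comb

def pmf_failures(k, r, p):
    """Variant B (assumed form): k failures before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k

r, p = 5, 0.25
ks = range(2000)  # truncated support; the neglected tail is negligible here

mean_b = sum(k * pmf_failures(k, r, p) for k in ks)
var_b = sum((k - mean_b) ** 2 * pmf_failures(k, r, p) for k in ks)
mean_a = mean_b + r  # X = Z + r shifts the mean but leaves the variance unchanged

print(mean_a, r / p)                 # variant A: r / p
print(mean_b, r * (1 - p) / p)       # variant B: r (1 - p) / p
print(var_b, r * (1 - p) / p ** 2)   # both variants: r (1 - p) / p^2
```

Since the variance equals the variant B expected value divided by $p$, and $p < 1$, the overdispersion is visible directly in the output.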

Coefficient of variation

- Variant A

The expected value and variance immediately give the coefficient of variation:

$$\operatorname{CV}(X) = \sqrt{\frac{1-p}{r}}.$$

- Variant B

In the alternative representation the result is

$$\operatorname{CV}(Z) = \frac{1}{\sqrt{r(1-p)}}.$$

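Skewness

The skewness is the same for both variants:

$$\operatorname{v}(X) = \frac{2-p}{\sqrt{r(1-p)}}.$$

Kurtosis

The excess kurtosis for both variants is

$$\gamma = \frac{6}{r} + \frac{p^{2}}{r(1-p)}.$$

The kurtosis is therefore

$$\beta_2 = 3 + \frac{6}{r} + \frac{p^{2}}{r(1-p)}.$$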
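The skewness and excess kurtosis can be checked against central moments computed from the probability function. This is a sketch that assumes the closed forms $(2-p)/\sqrt{r(1-p)}$ and $6/r + p^{2}/(r(1-p))$ together with the variant B probability function; all names and parameter values are illustrative.

```python
from math import comb, sqrt

def pmf_failures(k, r, p):
    """Variant B (assumed form): k failures before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k

r, p = 5, 0.25
weights = [pmf_failures(k, r, p) for k in range(2000)]  # truncated support

mean = sum(k * w for k, w in enumerate(weights))
# second, third and fourth central moments
m2, m3, m4 = (sum((k - mean) ** j * w for k, w in enumerate(weights)) for j in (2, 3, 4))

skew_numeric = m3 / m2 ** 1.5
excess_numeric = m4 / m2 ** 2 - 3

skew_formula = (2 - p) / sqrt(r * (1 - p))
excess_formula = 6 / r + p ** 2 / (r * (1 - p))

print(skew_numeric, skew_formula)
print(excess_numeric, excess_formula)
```

Because both quantities are built from central moments, shifting the variable by $r$ (variant A) gives the same values.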

Characteristic function

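- Variant A

The characteristic function has the form

$$\varphi_X(s) = \left(\frac{p\,e^{is}}{1-(1-p)e^{is}}\right)^{r}.$$

- Variant B

For the alternative definition it is

$$\varphi_Z(s) = \left(\frac{p}{1-(1-p)e^{is}}\right)^{r}.$$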

Probability generating function

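- Variant A

The probability generating function is

$$m_X(s) = \left(\frac{p\,s}{1-(1-p)s}\right)^{r}$$

with $|s| < \frac{1}{1-p}$.

- Variant B

Analogously,

$$m_Z(s) = \left(\frac{p}{1-(1-p)s}\right)^{r}.$$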
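A closed form for the probability generating function can be compared with the defining power series $\sum_k P(Z = k)\, s^{k}$. The sketch below assumes the variant B form $\left(p / (1-(1-p)s)\right)^{r}$, valid where $(1-p)\,|s| < 1$; function names, parameters, and the number of series terms are choices made for this example.

```python
from math import comb

def pgf_series(s, r, p, terms=500):
    """Truncated power series sum_k P(Z = k) * s**k for variant B (assumed form)."""
    return sum(comb(k + r - 1, k) * p**r * (1 - p)**k * s**k for k in range(terms))

def pgf_closed(s, r, p):
    """Assumed closed form (p / (1 - (1 - p) s))**r."""
    return (p / (1 - (1 - p) * s)) ** r

r, p = 3, 0.4
gaps = []
for s in (0.0, 0.5, 1.0, 1.2):  # all satisfy (1 - p) * |s| < 1
    series, closed = pgf_series(s, r, p), pgf_closed(s, r, p)
    gaps.append(abs(series - closed))
    print(s, series, closed)
```

Multiplying the variant B result by $s^{r}$ gives the variant A function, which reflects the shift $X = Z + r$.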

Moment generating function

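- Variant A

The moment generating function of the negative binomial distribution is

$$M_X(s) = \left(\frac{p\,e^{s}}{1-(1-p)e^{s}}\right)^{r}$$

with $s < -\ln(1-p)$.

- Variant B

For the alternative definition it is

$$M_Z(s) = \left(\frac{p}{1-(1-p)e^{s}}\right)^{r}.$$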

Sums of negative binomial random variables

If $X$ and $Y$ are two independent negative binomial random variables with parameters $r_X$, $p$ and $r_Y$, $p$, then $X + Y$ is again negative binomially distributed, with parameters $r_X + r_Y$ and $p$. The negative binomial distribution is thus reproductive; for the convolution,

$$NB(r_1, p) * NB(r_2, p) = NB(r_1 + r_2, p),$$

so it forms a convolution semigroup.
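The reproductive property can be illustrated by convolving two probability functions directly. This is a minimal sketch assuming the variant B probability function $\binom{k+r-1}{k} p^{r} (1-p)^{k}$ and a common $p$; the helper names and parameter values are hypothetical.

```python
from math import comb

def pmf(k, r, p):
    """Variant B (assumed form): k failures before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k

def convolve_at(k, r1, r2, p):
    """P(X + Y = k) for independent X ~ NB(r1, p) and Y ~ NB(r2, p)."""
    return sum(pmf(j, r1, p) * pmf(k - j, r2, p) for j in range(k + 1))

r1, r2, p = 2, 3, 0.35
# The convolution should coincide with NB(r1 + r2, p) at every point.
max_gap = max(abs(convolve_at(k, r1, r2, p) - pmf(k, r1 + r2, p)) for k in range(40))
print(max_gap)
```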

Generalization to real parameters

The derivation and interpretation above, based on the urn model, are only possible for $r \in \mathbb{N}$. However, the negative binomial distribution can be generalized to real $r > 0$. For this purpose, consider a Poisson distribution whose intensity $\lambda$ is itself random and follows a gamma distribution with shape parameter $r$ and rate parameter $\frac{p}{1-p}$. Forming the mixture of these two distributions gives the so-called Poisson-gamma distribution. Its probability function is

$$f(k \mid r, p) = \frac{\Gamma(r+k)}{k!\,\Gamma(r)}\, p^{r} (1-p)^{k}.$$

For $r \in \mathbb{N}$ this is exactly the probability function of the negative binomial distribution, so the negative binomial distribution can also be given a meaningful interpretation for real $r > 0$: the probability of $k$ events equals the probability of $k$ events under a Poisson distribution with a random, gamma-distributed parameter $\lambda$. The gamma functions in the probability function can also be replaced by generalized binomial coefficients.

This construction corresponds to variant B defined above. All characteristics, such as expected value and variance, remain unchanged. In addition, the variant for real $r$ is infinitely divisible.
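The mixture construction can be simulated and compared with the gamma-function form of the probability function. The sketch below rests on several assumptions: a gamma distribution with shape $r$ and rate $p/(1-p)$ (which is scale $(1-p)/p$ in Python's `random.gammavariate`), the probability function $\frac{\Gamma(r+k)}{k!\,\Gamma(r)} p^{r} (1-p)^{k}$, and Knuth's multiplication method as a simple Poisson sampler. All function names are hypothetical helpers.

```python
import random
from math import exp, lgamma, log

def pmf_real_r(k, r, p):
    """Gamma(r + k) / (k! * Gamma(r)) * p**r * (1 - p)**k for real r > 0."""
    log_coeff = lgamma(r + k) - lgamma(k + 1) - lgamma(r)
    return exp(log_coeff + r * log(p) + k * log(1 - p))

def sample_poisson(lam, rng):
    """Knuth's multiplication method; adequate for moderate intensities."""
    threshold, k, product = exp(-lam), 0, rng.random()
    while product > threshold:
        k += 1
        product *= rng.random()
    return k

def sample_mixture(r, p, rng):
    # gammavariate expects shape and SCALE, so the rate p/(1-p) becomes (1-p)/p
    lam = rng.gammavariate(r, (1 - p) / p)
    return sample_poisson(lam, rng)

rng = random.Random(0)   # fixed seed so the run is repeatable
r, p = 2.5, 0.4          # non-integer r, chosen only for illustration
draws = [sample_mixture(r, p, rng) for _ in range(20000)]

for k in range(4):
    empirical = draws.count(k) / len(draws)
    print(k, round(empirical, 3), round(pmf_real_r(k, r, p), 3))
```

Working with `lgamma` rather than the gamma function itself avoids overflow for large $k$; the empirical frequencies should match the formula only up to sampling noise.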

Relationships with other distributions

Relationship to the geometric distribution

For $r = 1$ the negative binomial distribution reduces to the geometric distribution. Conversely, the sum $X_1 + \dots + X_r$ of $r$ mutually independent geometrically distributed random variables with the same parameter $p$ is negative binomially distributed with parameters $r$ and $p$. Here too, the chosen parameterization variant must be taken into account.
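Both directions of this relationship can be illustrated in Python. The sketch assumes the failure-counting parameterization (variant B) for both distributions, so a geometric variable counts failures before the first success; the helper names, seed, and parameters are illustrative.

```python
import random
from math import comb

def pmf_failures(k, r, p):
    """Variant B (assumed form): k failures before the r-th success."""
    return comb(k + r - 1, k) * p**r * (1 - p)**k

def geometric_failures(p, rng):
    """Number of failures before the first success in Bernoulli(p) trials."""
    failures = 0
    while rng.random() >= p:  # a draw below p counts as a success
        failures += 1
    return failures

r, p = 3, 0.5

# r = 1: the probability function coincides with the geometric one, p (1 - p)^k.
r1_gap = max(abs(pmf_failures(k, 1, p) - p * (1 - p) ** k) for k in range(30))

# Sum of r independent geometric variables, compared with NB(r, p).
rng = random.Random(1)  # fixed seed so the run is repeatable
sums = [sum(geometric_failures(p, rng) for _ in range(r)) for _ in range(20000)]
for k in range(4):
    print(k, round(sums.count(k) / len(sums), 3), round(pmf_failures(k, r, p), 3))
```

With the trial-counting parameterization (variant A), each geometric variable would count its own success as well, and the sum would follow variant A instead.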

Relationship to the composite Poisson distribution

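The negative binomial distribution arises from the compound Poisson distribution when the summands follow the logarithmic distribution. Writing $\lambda$ for the Poisson intensity and $q$ for the parameter of the logarithmic distribution, the result is variant B with $r = -\frac{\lambda}{\ln(1-q)}$ and $p = 1 - q$.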
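This relationship can be traced with probability generating functions. The following is a sketch under assumed notation that does not come from the text above: $N$ is Poisson distributed with intensity $\lambda$, the summands $Y_i$ are independent and logarithmically distributed with parameter $q \in (0, 1)$, that is $P(Y_i = k) = -\frac{q^{k}}{k \ln(1-q)}$ for $k \ge 1$, and $S = Y_1 + \dots + Y_N$.

$$\begin{aligned}
G_Y(s) &= \frac{\ln(1 - qs)}{\ln(1 - q)}, \\
G_S(s) &= \exp\bigl(\lambda\,(G_Y(s) - 1)\bigr)
        = \exp\!\left(\frac{\lambda}{\ln(1-q)}\,\bigl(\ln(1-qs) - \ln(1-q)\bigr)\right) \\
       &= \left(\frac{1-q}{1-qs}\right)^{-\lambda / \ln(1-q)}.
\end{aligned}$$

Setting $r = -\lambda / \ln(1-q)$, which is positive because $\ln(1-q) < 0$, and $p = 1 - q$ turns the last expression into $\left(\frac{p}{1-(1-p)s}\right)^{r}$, the probability generating function of a negative binomial distribution that counts failures.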

Example

The student Paula is playing skat tonight. She knows from long experience that she wins one game in five. Winning is defined as follows: she must first get the game in the bidding and then win it.

Since she has a statistics lecture at eight o'clock tomorrow morning, the evening shouldn't be too long. She has therefore decided to go home after winning her 10th game. Let us assume that a game lasts about 4 minutes (a generous estimate). What is the probability that she can go home after two hours, that is, after exactly 30 games?

We proceed in the same way as in the derivation above:

What is the probability that she has won 9 times in 29 games? We calculate this probability with the binomial distribution, in terms of the urn model with 29 trials and 9 balls of the first kind:

$$P(Y = 9) = \binom{29}{9} \cdot 0.2^{9} \cdot 0.8^{20} \approx 0.0591$$

The probability of scoring the 10th win in the 30th game is then

$$P(X = 30) = P(Y = 9) \cdot 0.2 \approx 0.0118$$

This probability is very small. The graph of the negative binomial random variable $X$ shows that the individual probabilities remain very small throughout. How is poor Paula ever supposed to get to bed? We can reassure her: it is enough to ask how many games Paula needs at most; it doesn't have to be exactly 30.

The probability that at most 30 games are necessary is the distribution function $F(x)$ of the negative binomial distribution at $x = 30$, which here is the sum of the probabilities $P(X = 10) + P(X = 11) + \dots + P(X = 30)$, since fewer than 10 games cannot yield 10 wins. A look at the graph of the distribution function shows: if Paula is satisfied with a 50% probability, she would have to play at most about 50 games, that is 50 · 4 min = 200 min = 3 h 20 min. To get her 10 wins with an 80% probability, she would have to play at most about 60 games, i.e. roughly 4 hours. Maybe Paula should reconsider her strategy after all.
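The quantities in this example can be recomputed with a few lines of Python. This is a sketch assuming the trial-counting probability function $\binom{n-1}{r-1} p^{r} (1-p)^{n-r}$ with $r = 10$ wins and $p = 0.2$; the helper names `pmf_trials`, `cdf_trials`, and `games_needed` are hypothetical.

```python
from math import comb

def pmf_trials(n, r, p):
    """P(X = n): the r-th win happens exactly in game n (assumed variant A)."""
    return comb(n - 1, r - 1) * p**r * (1 - p)**(n - r) if n >= r else 0.0

def cdf_trials(n, r, p):
    """P(X <= n): at most n games are needed for r wins."""
    return sum(pmf_trials(m, r, p) for m in range(r, n + 1))

def games_needed(target, r, p):
    """Smallest n with P(X <= n) >= target."""
    n, cumulative = r, pmf_trials(r, r, p)
    while cumulative < target:
        n += 1
        cumulative += pmf_trials(n, r, p)
    return n

r, p = 10, 0.2       # 10 wins, one win in five games
minutes_per_game = 4

print("P(X = 30)  =", pmf_trials(30, r, p))
print("P(X <= 30) =", cdf_trials(30, r, p))
for target in (0.5, 0.8):
    n = games_needed(target, r, p)
    print(f"{target:.0%}: {n} games, about {n * minutes_per_game} minutes")
```

Reading quantiles off the cumulative sum replaces the look at the graph of the distribution function; the exact game counts depend on the assumed win probability of 0.2.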


Derivation of the Poisson-gamma probability function, obtained by integrating the Poisson probability over the gamma-distributed intensity $\lambda$:

$$\begin{aligned}
f(k \mid r, p) &= \int_{0}^{\infty} f_{\text{Poi}}(k \mid \lambda) \cdot f_{\text{Gamma}}\!\left(\lambda \,\Big|\, r, \frac{p}{1-p}\right) \mathrm{d}\lambda \\[8pt]
&= \int_{0}^{\infty} \frac{\lambda^{k}}{k!}\, e^{-\lambda} \cdot \lambda^{r-1}\, \frac{e^{-\lambda p/(1-p)}}{\left(\frac{1-p}{p}\right)^{r} \Gamma(r)} \,\mathrm{d}\lambda \\[8pt]
&= \frac{p^{r} (1-p)^{-r}}{k!\,\Gamma(r)} \int_{0}^{\infty} \lambda^{r+k-1}\, e^{-\lambda/(1-p)} \,\mathrm{d}\lambda \\[8pt]
&= \frac{p^{r} (1-p)^{-r}}{k!\,\Gamma(r)}\, (1-p)^{r+k}\, \Gamma(r+k) \\[8pt]
&= \frac{\Gamma(r+k)}{k!\,\Gamma(r)}\, (1-p)^{k} p^{r}.
\end{aligned}$$