Subspace

A subspace, also called a vector subspace or linear subspace, is in mathematics a subset of a vector space that is itself a vector space. The subspace inherits the vector space operations of vector addition and scalar multiplication from the ambient space. Every vector space contains itself and the zero vector space as trivial subspaces.

Every subspace is spanned by a linearly independent subset of vectors of the ambient space, namely a basis. The sum and the intersection of two subspaces are again subspaces, whose dimensions can be determined with the dimension formula. Every subspace has at least one complementary space, so that the ambient space is the direct sum of the subspace and its complement. Furthermore, every subspace can be assigned a factor space, which arises by projecting all elements of the ambient space in parallel along the subspace.

In linear algebra, subspaces are used, among other things, to characterize the kernel and image of linear maps, the solution sets of linear equations and the eigenspaces of eigenvalue problems. In functional analysis, subspaces of Hilbert spaces, Banach spaces and dual spaces are studied in particular. Subspaces have diverse applications, for example in numerical methods for large systems of linear equations and for partial differential equations, in optimization problems, in coding theory and in signal processing.

Definition

If $V$ is a vector space over a field $K$, then a subset $U \subseteq V$ is a subspace of $V$ if and only if it is nonempty and closed under vector addition and scalar multiplication. That is,

- $U \neq \emptyset$,
- $u + v \in U$,
- $\alpha u \in U$

must hold for all vectors $u, v \in U$ and all scalars $\alpha \in K$. Vector addition and scalar multiplication in the subspace are the restrictions of the corresponding operations of the ambient space.

Instead of the first condition, one can equivalently require that the zero vector $0$ of $V$ be contained in $U$. If $U$ contains at least one element $u$, then by closure under scalar multiplication (set $\alpha = 0$) it also contains the zero vector $0 \cdot u = 0$. Conversely, if $U$ contains the zero vector, it is not empty.

With these three criteria one can check whether a given subset of a vector space is itself a vector space without having to verify all of the vector space axioms. A linear subspace is often just called a “subspace” when it is clear from context that a linear subspace, and not some more general kind of subspace, is meant.
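Because the criteria only quantify over vectors of $U$ and scalars of $K$, they can be checked exhaustively when both are finite. The following Python sketch assumes the two-element field $\mathbb{F}_2 = \{0, 1\}$ with arithmetic mod 2; the function name `is_subspace_gf2` is illustrative and not taken from any library.

```python
from itertools import product

def is_subspace_gf2(subset, n):
    """Check the three subspace criteria for a set of vectors in F_2^n.

    Over F_2 the only scalars are 0 and 1, so every criterion can be
    checked exhaustively instead of by spot tests.
    """
    vecs = {tuple(v) for v in subset}
    if any(len(v) != n for v in vecs):
        raise ValueError("every vector must have length n")
    if not vecs:  # criterion 1: U must be nonempty
        return False
    for u in vecs:
        # criterion 3: closed under scalar multiplication by 0 and 1
        for alpha in (0, 1):
            if tuple((alpha * x) % 2 for x in u) not in vecs:
                return False
        # criterion 2: closed under vector addition (mod 2)
        for v in vecs:
            if tuple((x + y) % 2 for x, y in zip(u, v)) not in vecs:
                return False
    return True


even_weight = [(0, 0, 0), (1, 1, 0), (0, 1, 1), (1, 0, 1)]
print(is_subspace_gf2(even_weight, 3))      # True
print(is_subspace_gf2(even_weight[1:], 3))  # False: zero vector missing

# Count the subspaces of F_2^2 by testing all 16 subsets of its 4 vectors.
all_vecs = list(product((0, 1), repeat=2))
count = sum(
    is_subspace_gf2([v for v, keep in zip(all_vecs, mask) if keep], 2)
    for mask in product((False, True), repeat=len(all_vecs))
)
print(count)  # 5: {0}, three lines through the origin, and F_2^2 itself
```

Over an infinite field such as $\mathbb{R}$ no such enumeration is possible, and the criteria have to be verified symbolically.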

Examples

Concrete examples

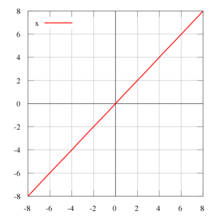

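The set of all vectors $(x_1, x_2)$ of the real plane $\mathbb{R}^2$ forms a vector space with the usual componentwise vector addition and scalar multiplication. The subset $U$ of the vectors for which $x_1 = x_2$ holds forms a subspace of $\mathbb{R}^2$, because for all $a, b, c \in \mathbb{R}$:

- the coordinate origin $(0, 0)$ lies in $U$,
- $(a, a) + (b, b) = (a + b, a + b) \in U$,
- $c \cdot (a, a) = (ca, ca) \in U$.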

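As a further example, consider the vector space of all real functions $f\colon \mathbb{R} \to \mathbb{R}$ with the usual pointwise addition and scalar multiplication. In this vector space the set $U$ of linear functions $f(x) = ax$ forms a subspace, because for $f(x) = ax$, $g(x) = bx$ and $a, b, c \in \mathbb{R}$:

- the zero function $f(x) = 0$ lies in $U$,

- $(f + g)(x) = ax + bx = (a + b)x$, consequently $f + g \in U$,

- $(c f)(x) = c(ax) = (ca)x$, consequently $c f \in U$.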

More general examples

- Every vector space contains itself and the zero vector space $\{0\}$, which consists only of the zero vector, as trivial subspaces.

- In the vector space of real numbers $\mathbb{R}$ (over $\mathbb{R}$), the set $\{0\}$ and $\mathbb{R}$ itself are the only subspaces.

- In the vector space of complex numbers $\mathbb{C}$, regarded as a vector space over $\mathbb{R}$, the set of real numbers $\mathbb{R}$ and the set of purely imaginary numbers $i\mathbb{R}$ are subspaces.

- In the Euclidean plane $\mathbb{R}^2$, all straight lines through the origin are subspaces.

- In Euclidean space $\mathbb{R}^3$, all straight lines through the origin and all planes through the origin are subspaces.

- In the vector space of all polynomials, the polynomials of degree at most $n$ form a subspace for every natural number $n$.

- In the vector space of square matrices, the symmetric and the skew-symmetric matrices each form subspaces.

- In the vector space of real functions on an interval, the integrable functions, the continuous functions and the differentiable functions each form subspaces.

- In the vector space of all maps between two vector spaces over the same field, the linear maps form a subspace.

Properties

Vector space axioms

The three subspace criteria are indeed necessary and sufficient for all vector space axioms to hold. Since $U$ is closed under scalar multiplication, setting $\alpha = 0$ gives for all vectors $u \in U$

- $0 = 0 \cdot u \in U$

and further, setting $\alpha = -1$,

- $-u = (-1) \cdot u \in U$.

Thus $U$ contains in particular the zero vector and the additive inverse $-u$ of every element $u$. Together with closure under addition, $U$ is therefore a subgroup of $(V, +)$ and in particular an abelian group. The associative law, the commutative law, the distributive laws and the neutrality of the scalar $1$ carry over directly from the ambient space. Hence $U$ satisfies all vector space axioms and is itself a vector space. Conversely, every subspace must satisfy the three criteria, since its vector addition and scalar multiplication are the restrictions of the corresponding operations of $V$.

Representation

Every subset $S$ of vectors of a vector space $V$ spans, by forming all possible linear combinations

$$\operatorname{span}(S) = \{\, \alpha_1 v_1 + \dots + \alpha_n v_n \mid n \in \mathbb{N},\ v_1, \dots, v_n \in S,\ \alpha_1, \dots, \alpha_n \in K \,\},$$

a subspace of $V$, which is called the linear span (linear hull) of $S$. The linear span is the smallest subspace that contains the set $S$, and it equals the intersection of all subspaces of $V$ that contain $S$. Conversely, every subspace $U$ is spanned by some subset $S$ of $V$, that is,

$$U = \operatorname{span}(S),$$

where the set $S$ is called a generating set of $U$. A minimal generating set consists of linearly independent vectors and is called a basis of the vector space. The number of elements of a basis is the dimension of the vector space.
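A basis of $\operatorname{span}(S)$ for finitely many vectors in $K^n$ can be computed by Gaussian elimination: row operations do not change the span of the rows, and the nonzero rows of the reduced row echelon form are linearly independent. The sketch below works over the rationals $\mathbb{Q}$ with Python's `fractions` module to avoid rounding; the helpers `rref` and `span_basis` are hypothetical names.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def span_basis(vectors):
    """Basis of span(vectors): the nonzero rows of the RREF."""
    m, pivots = rref(vectors)
    return m[:len(pivots)]


S = [(1, 2, 3), (2, 4, 6), (0, 1, 1)]   # the second vector is twice the first
basis = span_basis(S)
print([[int(x) for x in row] for row in basis])  # [[1, 0, 1], [0, 1, 1]]
print(len(basis))                                # 2 = dim span(S)
```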

Operations

Intersection and union

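The intersection of two subspaces $U_1, U_2$ of a vector space $V$

$$U_1 \cap U_2 = \{\, v \in V \mid v \in U_1 \text{ and } v \in U_2 \,\}$$

is always itself a subspace.

The union of two subspaces

$$U_1 \cup U_2 = \{\, v \in V \mid v \in U_1 \text{ or } v \in U_2 \,\},$$

however, is a subspace only if $U_1 \subseteq U_2$ or $U_2 \subseteq U_1$ holds. Otherwise the union is closed under scalar multiplication, but not under vector addition.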
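A minimal illustration uses the two coordinate axes of $\mathbb{R}^2$, with integer coordinates for exact comparisons; all names are illustrative. Each axis is a subspace and neither contains the other, so their union keeps all scalar multiples but loses $e_1 + e_2$.

```python
# U1 = x-axis and U2 = y-axis in R^2, tested by membership predicates
def in_x_axis(v):
    return v[1] == 0

def in_y_axis(v):
    return v[0] == 0

def in_union(v):
    return in_x_axis(v) or in_y_axis(v)

e1, e2 = (1, 0), (0, 1)

# Closed under scalar multiplication: each multiple stays on its own axis.
assert all(in_union((c * e1[0], c * e1[1])) for c in range(-3, 4))
assert all(in_union((c * e2[0], c * e2[1])) for c in range(-3, 4))

# Not closed under addition: e1 + e2 lies on neither axis.
total = (e1[0] + e2[0], e1[1] + e2[1])
print(in_union(total))  # False
```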

Sum

The sum of two subspaces $U_1, U_2$ of a vector space $V$

$$U_1 + U_2 = \{\, u_1 + u_2 \mid u_1 \in U_1,\ u_2 \in U_2 \,\}$$

is again a subspace, namely the smallest subspace that contains $U_1 \cup U_2$. For the sum of two finite-dimensional subspaces, the dimension formula holds:

$$\dim(U_1 + U_2) = \dim U_1 + \dim U_2 - \dim(U_1 \cap U_2),$$

from which, conversely, the dimension of the intersection of two subspaces can also be read off. Bases of the intersection and of the sum of finite-dimensional subspaces can be computed with the Zassenhaus algorithm.
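The Zassenhaus algorithm row-reduces the block matrix whose rows are $(u \mid u)$ for the spanning vectors $u$ of $U_1$ and $(w \mid 0)$ for the spanning vectors $w$ of $U_2$. In the resulting echelon form, the left halves of the rows with a nonzero left half form a basis of $U_1 + U_2$, and the right halves of the remaining nonzero rows form a basis of $U_1 \cap U_2$. The following is a sketch over $\mathbb{Q}$ with exact fractions; the function names are assumptions for illustration.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def zassenhaus(U, W):
    """Bases of U + W and U ∩ W from spanning sets U, W of subspaces of Q^n."""
    n = len((U or W)[0])
    # Rows (u | u) for u in U and (w | 0) for w in W.
    block = [list(u) + list(u) for u in U] + [list(w) + [0] * n for w in W]
    m, pivots = rref(block)
    sum_basis, meet_basis = [], []
    for row in m[:len(pivots)]:          # the nonzero rows
        left, right = row[:n], row[n:]
        if any(x != 0 for x in left):
            sum_basis.append(left)       # left halves span U + W
        else:
            meet_basis.append(right)     # these right halves span U ∩ W
    return sum_basis, meet_basis


U = [(1, 0, 0), (0, 1, 0)]   # the x1-x2 plane (a basis, so dim U = 2)
W = [(0, 1, 0), (0, 0, 1)]   # the x2-x3 plane (a basis, so dim W = 2)
s, i = zassenhaus(U, W)
print(len(s), len(i))                       # 3 1
print(len(s) == len(U) + len(W) - len(i))   # True: the dimension formula
```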

Direct sum

If the intersection of two subspaces consists only of the zero vector, i.e. $U_1 \cap U_2 = \{0\}$, then the sum is called an (internal) direct sum

$$U_1 \oplus U_2,$$

because it is isomorphic to the external direct sum of the two vector spaces. In this case, for every $v \in U_1 \oplus U_2$ there are uniquely determined vectors $u_1 \in U_1$ and $u_2 \in U_2$ with $v = u_1 + u_2$. Since the zero vector space is zero-dimensional, the dimension formula then gives for the dimension of the direct sum

$$\dim(U_1 \oplus U_2) = \dim U_1 + \dim U_2,$$

which also holds in the infinite-dimensional case.
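For two lines in $K^2$ the unique decomposition $v = u_1 + u_2$ can be written down with Cramer's rule. This sketch assumes $U_1 = \operatorname{span}\{p\}$ and $U_2 = \operatorname{span}\{q\}$ with linearly independent $p, q$, so that $K^2 = U_1 \oplus U_2$, and works over $\mathbb{Q}$; the function `decompose` is a hypothetical helper.

```python
from fractions import Fraction

def decompose(v, p, q):
    """Split v in Q^2 as v = u + w with u in span{p} and w in span{q}.

    Requires p and q to be linearly independent (nonzero determinant),
    i.e. span{p} ∩ span{q} = {0}; Cramer's rule then yields the unique
    coefficients a, b with v = a*p + b*q.
    """
    det = p[0] * q[1] - p[1] * q[0]
    if det == 0:
        raise ValueError("span{p} and span{q} do not form a direct sum")
    a = Fraction(v[0] * q[1] - v[1] * q[0], det)
    b = Fraction(p[0] * v[1] - p[1] * v[0], det)
    u = (a * p[0], a * p[1])   # component in U1
    w = (b * q[0], b * q[1])   # component in U2
    return u, w


u, w = decompose((3, 5), p=(1, 0), q=(1, 1))
print(u == (-2, 0), w == (5, 5))  # True True
```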

Multiple operands

The preceding operations can also be generalized to more than two operands. If $(U_i)_{i \in I}$ is a family of subspaces of $V$, where $I$ is an arbitrary index set, then the intersection of these subspaces

$$\bigcap_{i \in I} U_i$$

is again a subspace of $V$. Likewise, the sum of several subspaces

$$\sum_{i \in I} U_i = \Big\{\, \sum_{i \in I} u_i \;\Big|\; u_i \in U_i,\ u_i \neq 0 \text{ for only finitely many } i \,\Big\}$$

is again a subspace of $V$; if the index set has infinitely many elements, only finitely many of the summands may be different from the zero vector. Such a sum is called direct, and is then denoted by

$$\bigoplus_{i \in I} U_i,$$

if the intersection of each subspace with the sum of the remaining subspaces is the zero vector space, i.e. $U_j \cap \sum_{i \neq j} U_i = \{0\}$ for all $j \in I$. This is equivalent to every vector of the sum having a unique representation as a sum of elements of the subspaces.

Derived spaces

Complementary space

For every subspace $U$ of $V$ there is at least one complementary space $W$ such that

$$V = U \oplus W$$

holds. Each such complementary space corresponds to exactly one projection onto the subspace $U$, i.e. an idempotent linear map $P\colon V \to V$, $P^2 = P$, for which

$$P(V) = U \quad\text{and}\quad (\mathrm{id}_V - P)(V) = W$$

hold, where $\mathrm{id}_V$ is the identity map. In general, a subspace has several complementary spaces, none of which is distinguished by the vector space structure. In inner product spaces, however, one can speak of mutually orthogonal subspaces. If $V$ is finite-dimensional, then for every subspace $U$ there is a uniquely determined orthogonal complementary space, namely the orthogonal complement $U^\perp$ of $U$, and then

$$V = U \oplus U^\perp.$$
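In $\mathbb{Q}^n$ with the standard dot product (an assumption made here so that all arithmetic is exact), the orthogonal projection onto a line $U = \operatorname{span}\{a\}$ is $P = a a^\top / (a^\top a)$, and $\mathrm{id} - P$ is the complementary projection, whose image lies in $U^\perp$. The following Python sketch checks these properties for one vector $a$; the helper names are illustrative.

```python
from fractions import Fraction

def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def projection_onto_line(a):
    """Orthogonal projection onto U = span{a}: P = a a^T / (a^T a)."""
    norm2 = sum(x * x for x in a)
    return [[Fraction(ai * aj, norm2) for aj in a] for ai in a]


a = (1, 2, 2)
n = len(a)
P = projection_onto_line(a)
identity = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
Q = [[identity[i][j] - P[i][j] for j in range(n)] for i in range(n)]  # id - P
zero = [[0] * n for _ in range(n)]

print(matmul(P, P) == P)     # True: P is idempotent
print(matmul(Q, Q) == Q)     # True: id - P is idempotent as well
print(matmul(P, Q) == zero)  # True: im(id - P) lies in ker P
# Each column of id - P is orthogonal to a, so im(id - P) lies in U^⊥.
print(all(sum(a[k] * Q[k][j] for k in range(n)) == 0 for j in range(n)))  # True
```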

Factor space

To every subspace $U$ of a vector space $V$ one can assign a factor space (quotient space) $V/U$, which arises by identifying all elements of the subspace with one another, so that the elements of the vector space are projected in parallel along the subspace. Formally, the factor space is defined as the set of equivalence classes

$$V/U = \{\, [v] \mid v \in V \,\}$$

of vectors in $V$, where the equivalence class of a vector $v$,

$$[v] = v + U = \{\, v + u \mid u \in U \,\},$$

is the set of vectors in $V$ that differ from $v$ only by an element of the subspace $U$. The factor space is a vector space when the vector space operations are defined on representatives, $[v] + [w] = [v + w]$ and $\alpha [v] = [\alpha v]$, but it is not itself a subspace of $V$. The dimension of the factor space satisfies

$$\dim V = \dim U + \dim(V/U).$$

The subspaces of $V/U$ are exactly the factor spaces $W/U$, where $W$ is a subspace of $V$ with $U \subseteq W$.
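Over a finite field the equivalence classes $[v] = v + U$ can be listed explicitly. The sketch assumes $V = \mathbb{F}_2^3$ and takes for $U$ the two-dimensional subspace of vectors with an even number of ones; there are then $2^{3-2} = 2$ classes, in line with the dimension formula for the factor space. The function `cosets_gf2` is illustrative.

```python
from itertools import product

def cosets_gf2(U, n):
    """List the classes [v] = v + U of F_2^n, assuming U is a subspace."""
    U = {tuple(u) for u in U}
    classes, seen = [], set()
    for v in product((0, 1), repeat=n):
        if v in seen:  # v already lies in a class found earlier
            continue
        coset = frozenset(tuple((x + y) % 2 for x, y in zip(v, u)) for u in U)
        classes.append(coset)
        seen |= coset
    return classes


U_even = [(0, 0, 0), (1, 1, 0), (0, 1, 1), (1, 0, 1)]   # dim U = 2
classes = cosets_gf2(U_even, 3)
print(len(classes))  # 2 = 2 ** (3 - 2)
for c in classes:
    print(sorted(c))
# [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]   (the class [0] = U)
# [(0, 0, 1), (0, 1, 0), (1, 0, 0), (1, 1, 1)]
```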

Annihilator

The dual space $V^*$ of a vector space $V$ over a field $K$ is the space of linear maps from $V$ to $K$ and is thus itself a vector space. For a subset $S$ of $V$, the set of all functionals that vanish on $S$ forms a subspace of the dual space, the so-called annihilator

$$S^0 = \{\, f \in V^* \mid f(s) = 0 \text{ for all } s \in S \,\}.$$

If $V$ is finite-dimensional, then the dimension of the annihilator of a subspace $U$ of $V$ is

$$\dim U^0 = \dim V - \dim U.$$

The dual space $U^*$ of a subspace $U$ is isomorphic to the factor space $V^*/U^0$.
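In $K^n$ a functional can be identified with its coefficient vector with respect to the dual basis, $f(x) = f_1 x_1 + \dots + f_n x_n$. Under this identification (an assumption of the sketch), the annihilator of $U = \operatorname{span}\{u_1, \dots, u_k\}$ is the null space of the matrix with rows $u_1, \dots, u_k$. The Python sketch below computes it over $\mathbb{Q}$; the helper names are hypothetical.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def null_space(rows, n):
    """Basis of {x in Q^n : row . x = 0 for every row}, one vector per free column."""
    m, pivots = rref(rows)
    free = [j for j in range(n) if j not in pivots]
    basis = []
    for j in free:
        x = [Fraction(0)] * n
        x[j] = Fraction(1)
        for r, p in enumerate(pivots):
            x[p] = -m[r][j]
        basis.append(x)
    return basis


U = [(1, 1, 0)]                 # U = span{(1, 1, 0)} in Q^3, so dim U = 1
annihilator = null_space(U, 3)  # coefficient vectors of a basis of U^0
print([[int(c) for c in f] for f in annihilator])  # [[-1, 1, 0], [0, 0, 1]]
print(len(annihilator) == 3 - len(U))              # True: dim U^0 = dim V - dim U
```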

Subspaces in linear algebra

Linear maps

If $f\colon V \to W$ is a linear map between two vector spaces $V$ and $W$ over the same field, then the kernel of the map

$$\ker f = \{\, v \in V \mid f(v) = 0 \,\}$$

is a subspace of $V$, and the image of the map

$$\operatorname{im} f = \{\, f(v) \mid v \in V \,\}$$

is a subspace of $W$. Furthermore, the graph of a linear map is a subspace of the product space $V \times W$. If $V$ is finite-dimensional, the rank-nullity theorem holds for the dimensions of the spaces involved:

$$\dim V = \dim(\ker f) + \dim(\operatorname{im} f).$$

The dimension of the image is also called the rank, and the dimension of the kernel the defect (or nullity) of the linear map. By the homomorphism theorem, the image is isomorphic to the factor space $V/\ker f$.
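For a matrix $A \in \mathbb{Q}^{m \times n}$, viewed as the linear map $x \mapsto Ax$, the rank-nullity theorem can be checked with one row reduction: the columns of $A$ at the pivot positions form a basis of the image, and each free column yields one kernel vector. This sketch uses exact fractions; the example matrix and the function names are illustrative.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def null_space(rows, n):
    """Basis of ker A = {x in Q^n : A x = 0}, one vector per free column."""
    m, pivots = rref(rows)
    free = [j for j in range(n) if j not in pivots]
    basis = []
    for j in free:
        x = [Fraction(0)] * n
        x[j] = Fraction(1)
        for r, p in enumerate(pivots):
            x[p] = -m[r][j]
        basis.append(x)
    return basis

def image_basis(A):
    """Columns of A at the pivot columns of rref(A) form a basis of im A."""
    _, pivots = rref(A)
    return [[row[j] for row in A] for j in pivots]


A = [[1, 2, 0, 1],
     [0, 0, 1, 1],
     [1, 2, 1, 2]]   # third row = first row + second row
n = len(A[0])
image = image_basis(A)
kernel = null_space(A, n)
print(len(image), len(kernel))        # 2 2
print(len(image) + len(kernel) == n)  # True: rank + nullity = dim V
```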

Linear equations

If again $f\colon V \to W$ is a linear map between two vector spaces over the same field, then the solution set of the homogeneous linear equation

$$f(x) = 0$$

is a subspace of $V$, namely the kernel of $f$. The solution set of an inhomogeneous linear equation

$$f(x) = b$$

with $b \neq 0$, on the other hand, is, if it is not empty, an affine subspace of $V$, namely $x_0 + \ker f$ for any particular solution $x_0$; this is a consequence of the superposition principle. The dimension of this solution space then also equals the dimension of the kernel of $f$.
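The structure $x_0 + \ker f$ of the solution set can be observed for a matrix equation $Ax = b$ over $\mathbb{Q}$: row-reduce the augmented matrix $(A \mid b)$, read off one particular solution (free variables set to zero), and add arbitrary kernel vectors. A pivot in the last column signals that the solution set is empty. The function names and the example data are assumptions for illustration.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def null_space(rows, n):
    """Basis of ker A, one vector per free column."""
    m, pivots = rref(rows)
    basis = []
    for j in [j for j in range(n) if j not in pivots]:
        x = [Fraction(0)] * n
        x[j] = Fraction(1)
        for r, p in enumerate(pivots):
            x[p] = -m[r][j]
        basis.append(x)
    return basis

def particular_solution(A, b):
    """One solution of A x = b (free variables set to 0), or None if none exists."""
    n = len(A[0])
    m, pivots = rref([list(row) + [bi] for row, bi in zip(A, b)])
    if n in pivots:  # a row (0 ... 0 | 1): the system is inconsistent
        return None
    x = [Fraction(0)] * n
    for r, p in enumerate(pivots):
        x[p] = m[r][n]
    return x

def apply(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


A = [[1, 2, 0, 1],
     [0, 0, 1, 1],
     [1, 2, 1, 2]]
b = [1, 2, 3]
x_p = particular_solution(A, b)
kernel = null_space(A, len(A[0]))

# Any x_p + t1*k1 + t2*k2 with k1, k2 in ker A solves A x = b again.
t1, t2 = Fraction(3), Fraction(-5, 2)
x = [p + t1 * k1 + t2 * k2 for p, k1, k2 in zip(x_p, kernel[0], kernel[1])]
print(apply(A, x) == b)                    # True
print(particular_solution(A, [1, 2, 4]))  # None: inconsistent right-hand side
```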

Eigenvalue problems

If now $f\colon V \to V$ is a linear map of a vector space into itself, i.e. an endomorphism, with associated eigenvalue problem

$$f(v) = \lambda v,$$

then every eigenspace belonging to an eigenvalue $\lambda$,

$$E_\lambda = \{\, v \in V \mid f(v) = \lambda v \,\} = \ker(f - \lambda \, \mathrm{id}_V),$$

is a subspace of $V$ whose elements other than the zero vector are exactly the associated eigenvectors. The dimension of the eigenspace is the geometric multiplicity of the eigenvalue; it is at most as large as the algebraic multiplicity of the eigenvalue.
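An eigenspace is the kernel of $A - \lambda I$, so it can be computed with the same null-space routine as any other kernel. The sketch below compares geometric and algebraic multiplicity for a Jordan block and for a multiple of the identity. To stay short it assumes upper triangular matrices, for which the algebraic multiplicity of $\lambda$ is the number of diagonal entries equal to $\lambda$; general matrices would require the characteristic polynomial. All names are illustrative.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def null_space(rows, n):
    """Basis of the kernel, one vector per free column."""
    m, pivots = rref(rows)
    basis = []
    for j in [j for j in range(n) if j not in pivots]:
        x = [Fraction(0)] * n
        x[j] = Fraction(1)
        for r, p in enumerate(pivots):
            x[p] = -m[r][j]
        basis.append(x)
    return basis

def eigenspace(A, lam):
    """Basis of E_lam = ker(A - lam * I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    return null_space(shifted, n)

def algebraic_multiplicity_triangular(A, lam):
    """Algebraic multiplicity of lam, valid only for triangular A."""
    return sum(1 for i in range(len(A)) if A[i][i] == lam)


J = [[2, 1],
     [0, 2]]   # a Jordan block
D = [[2, 0],
     [0, 2]]   # 2 * identity
for name, M in (("J", J), ("D", D)):
    print(name, len(eigenspace(M, 2)), algebraic_multiplicity_triangular(M, 2))
# J 1 2   (geometric multiplicity < algebraic multiplicity)
# D 2 2
```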

Invariant subspaces

If $f\colon V \to V$ is again an endomorphism, then a subspace $U$ of $V$ is called invariant under $f$, or $f$-invariant for short, if

$$f(U) \subseteq U$$

holds, that is, if the image $f(u)$ also lies in $U$ for every $u \in U$. The image $f(U)$ of $U$ under $f$ is then a subspace of $U$. The trivial subspaces $\{0\}$ and $V$, but also $\ker f$, $\operatorname{im} f$ and all eigenspaces of $f$, are always invariant under $f$. Another important example of invariant subspaces are the generalized eigenspaces, which are used, for example, to determine the Jordan normal form.
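For a matrix $A$ and a subspace $U = \operatorname{span}\{u_1, \dots, u_k\}$ of $\mathbb{Q}^n$, the subspace $U$ is $A$-invariant exactly when adding the images $Au_1, \dots, Au_k$ to the spanning set does not increase its rank. The following sketch applies this test to a matrix containing a Jordan block; the helper names are hypothetical.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form over Q; returns (rows, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for c in range(len(m[0]) if m else 0):
        # first row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(len(m)):  # clear column c in every other row
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == len(m):
            break
    return m, pivots

def rank(rows):
    """Dimension of the span of the given rows."""
    return len(rref(rows)[1]) if rows else 0

def apply(A, v):
    """Matrix-vector product A v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def is_invariant(A, basis):
    """True iff span(basis) is mapped into itself by A."""
    images = [apply(A, u) for u in basis]
    return rank(list(basis) + images) == rank(list(basis))


A = [[2, 1, 0],
     [0, 2, 0],
     [0, 0, 3]]
e1, e2, e3 = (1, 0, 0), (0, 1, 0), (0, 0, 1)
print(is_invariant(A, [e1]))      # True: eigenspace for 2
print(is_invariant(A, [e2]))      # False: A e2 = (1, 2, 0)
print(is_invariant(A, [e1, e2]))  # True: generalized eigenspace for 2
print(is_invariant(A, [e3]))      # True: eigenspace for 3
```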

Subspaces in functional analysis

Sub-Hilbert spaces

In Hilbert spaces, i.e. complete inner product spaces, one considers in particular sub-Hilbert spaces, i.e. subspaces that are still complete with respect to the restriction of the inner product. This property is equivalent to the subspace being closed with respect to the norm topology induced by the inner product. Not every subspace of a Hilbert space is complete, but from every incomplete subspace a sub-Hilbert space can be obtained by taking its closure, in which the subspace is then dense. By the projection theorem, every sub-Hilbert space $U$ also has a uniquely determined orthogonal complementary space $U^\perp$, which is always closed.

Sub-Hilbert spaces play an important role in quantum mechanics and in the Fourier and multiscale analysis of signals.

Sub-Banach spaces

In Banach spaces, i.e. complete normed spaces, one can analogously consider sub-Banach spaces, i.e. subspaces that are complete with respect to the restriction of the norm. As in the Hilbert space case, a subspace of a Banach space is a sub-Banach space if and only if it is closed. Furthermore, from every incomplete subspace of a Banach space a sub-Banach space can be obtained by completion (taking the closure), in which the subspace lies dense. In general, however, a sub-Banach space need not have a complementary sub-Banach space.

In a seminormed space, the vectors with seminorm zero form a subspace. A normed space is obtained from a seminormed space as the factor space modulo this subspace, i.e. by identifying vectors whose difference has seminorm zero. If the seminormed space is complete, this factor space is a Banach space. This construction is used in particular for $L^p$ spaces and related function spaces.

In the numerical solution of partial differential equations by the finite element method, the solution is approximated in suitable finite-dimensional subspaces of the underlying Sobolev space.

Topological dual spaces

In functional analysis, besides the algebraic dual space, one also considers the topological dual space $V'$ of a vector space $V$, which consists of the continuous linear maps from $V$ to $K$. For a topological vector space, the topological dual space is a subspace of the algebraic dual space. By the Hahn-Banach theorem, a linear functional on a subspace of a real or complex vector space that is bounded by a sublinear function has a linear extension to the whole space that is also bounded by this sublinear function. As a consequence, the topological dual space of a normed space contains sufficiently many functionals, which forms the basis of a rich duality theory.

Other uses

Other important applications of subspaces are:

- The Gram-Schmidt process for constructing orthogonal bases

- Krylov subspace methods for solving large sparse systems of linear equations

- Solution methods for optimization problems

- Linear codes in coding theory

- The representation of projective spaces in projective geometry

