Hard disk drive

| Storage medium Hard disk drive

|

|

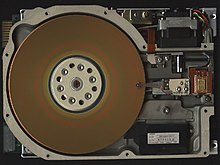

Open hard disk: three magnetic disks, read / write head, mechanics |

|

| General | |

|---|---|

| Type | magnetic |

| capacity | up to 20 terabytes (2020) |

| origin | |

| developer | IBM |

| performance | 1956 |

| predecessor | Drum storage (partly magnetic tape ) |

A hard disk drive (abbreviated HDD), often also referred to as a hard disk or hard drive (abbreviated HD), is a magnetic storage medium in computer technology in which data is written to the surface of rotating disks (also called "platters"). For writing, the hard-magnetic coating of the disk surface is magnetized without contact in accordance with the information to be recorded. The information is retained through remanence (remaining magnetization). It is read by contactless scanning of the magnetization of the disk surface.

Unlike sequentially addressed storage media such as magnetic tape or paper tape, hard disks are classed as direct access storage devices (DASD), because no linear pass is required to move to a specific location. Before they came into use in the PC sector in the 1980s, hard disks were mainly used in the mainframe sector. Data can be stored on hard disks in different organizational forms. CKD (count key data) organized hard disks contain data blocks of different lengths depending on the record format. FBA (fixed block architecture) organized hard disks contain data blocks of the same length, usually 512 or 4096 bytes in size. An access must always comprise an integer number of blocks.

Since 2007, flash memories (so-called solid-state drives, or SSDs for short) and hybrid drives (combinations of SSD and conventional hard disk) have also been offered in the consumer market. They are addressed via the same interfaces (specified according to SATA, etc.) and are also loosely referred to as "hard drives". A cheap desktop SSD (as of August 2020) is around three times as expensive per terabyte as a cheap conventional desktop hard drive, but achieves significantly lower access times and higher write and read speeds. Because of their lower price per unit of storage, conventional hard disks are still widely used, especially in applications that require high capacity but not high speed (e.g. in a NAS for media storage in a private setting).

The term "hard disk" describes, on the one hand, that the magnetic disk, in contrast to the "removable disk", is permanently connected to the drive or the computer. On the other hand, it corresponds to the English term "hard disk": in contrast to the flexible disks in floppy disks, the disk consists of a rigid material. Accordingly, the term "rigid disk" was also in use until the 1990s.

General technical data

Hard disks are given an access structure through (so-called "low-level") formatting. Since the advent of IDE hard drives at the beginning of the 1990s, this has been done by the manufacturer and can only be done by the manufacturer. The term "formatting" is also used for creating a file system ("high-level formatting").

With low-level formatting, various markers and the sector headers are written, which contain track and sector numbers for navigation. The servo information required by current hard disks with linear motors cannot be written by the user. Servo information is necessary so that the head can reliably follow the track. Purely mechanical guidance is too imprecise and no longer possible at higher track densities: at a track density of 5.3 tracks/µm, a track is only about 190 nm wide.

Storage capacity

The storage capacity of a hard disk is calculated as the size of a data block (256, 512, 2048 or 4096 bytes) multiplied by the number of available blocks. The capacity of the first hard disks was given in megabytes, from around 1997 in gigabytes, and since around 2008 there have been disks in the terabyte range.
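The capacity calculation above can be sketched in a few lines of Python. The function name and the block counts are illustrative assumptions and are not taken from a particular data sheet.

```python
def capacity_bytes(block_size: int, block_count: int) -> int:
    """Capacity = size of one data block multiplied by the number of blocks."""
    if block_size <= 0 or block_count < 0:
        raise ValueError("block_size must be positive, block_count non-negative")
    return block_size * block_count


# Hypothetical drive described once with 512-byte and once with 4096-byte blocks.
BLOCKS_512 = 1_953_525_168
BLOCKS_4096 = BLOCKS_512 // 8

if __name__ == "__main__":
    print(capacity_bytes(512, BLOCKS_512))    # capacity in bytes
    print(capacity_bytes(4096, BLOCKS_4096))  # same capacity, larger blocks
```

Both calls describe the same amount of storage; only the granularity of the blocks differs.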

While the way in which data was stored on the first disks was still "visible from the outside" (the hard disk controller, the firmware such as the BIOS on an IBM PC-compatible computer, and the operating system had to know the sectors per track, number of tracks, number of heads, and MFM or RLL modulation), this changed with the introduction of IDE disks in the early 1990s. Less and less of how the data is stored internally was visible; the disk is addressed via an interface that hides internals from the outside. Sometimes the hard disk reported "wrong" values for the number of tracks, sectors and heads in order to circumvent system limitations: firmware and operating system worked with these "wrong" values, and the hard disk logic then converted them into internal values that actually corresponded to its own geometry.

The development of maximum hard drive capacity over time follows an almost exponential course, comparable to the development of computing power according to Moore's law. Capacity has doubled about every 16 months with slightly falling prices, although the increase in capacity has been slowing since about 2005 (January 2007: 1 terabyte, September 2011: 4 terabytes, December 2019: 16 terabytes).

Hard disk manufacturers use prefixes in their SI-compliant decimal meaning for storage capacities. A capacity of one terabyte thus denotes 10^12 bytes. The Microsoft Windows operating system and some other older operating systems use the same prefixes when reporting the capacity of hard disks but, following historical usage and contrary to the IEC standards in force since 1998, in their binary meaning. This leads to an apparent contradiction between the size specified by the manufacturer and that reported by the operating system. For example, for a hard drive with a manufacturer-specified capacity of one terabyte, the operating system reports a capacity of only 931 "gigabytes", since a "terabyte" there denotes 2^40 bytes (the IEC-compliant name for 2^40 bytes is tebibyte). This effect does not occur under the IEC-compliant systems OS X (from version 10.6) and Unix or most Unix-like operating systems.
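The 931 "gigabyte" figure follows directly from dividing the decimal capacity by the binary unit. A minimal sketch, with function names chosen here purely for illustration:

```python
TERABYTE = 10**12   # SI (decimal) terabyte, as used by manufacturers
GIBIBYTE = 2**30    # binary unit often labelled "GB" by operating systems
TEBIBYTE = 2**40    # binary unit often labelled "TB" by operating systems


def bytes_to_gib(n_bytes: int) -> float:
    """Express a byte count in gibibytes (2**30 bytes)."""
    return n_bytes / GIBIBYTE


def bytes_to_tib(n_bytes: int) -> float:
    """Express a byte count in tebibytes (2**40 bytes)."""
    return n_bytes / TEBIBYTE


if __name__ == "__main__":
    print(f"{bytes_to_gib(TERABYTE):.2f} GiB")
    print(f"{bytes_to_tib(TERABYTE):.4f} TiB")
```

A drive sold as 1 TB therefore shows up as roughly 931 binary "gigabytes", or about 0.91 binary "terabytes", without any storage being lost.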

Sizes (mechanical)

The dimensions of hard drives are traditionally given in inches. This is not an exact size specification but a form factor. Common form factors for the width are 5.25″, 3.5″, 2.5″ and 1.8″; for the height, e.g. 1″, 1⁄2″ and 3⁄8″. The inch figure usually corresponds roughly to the diameter of the platter, not the width of the drive housing. In some cases, however, smaller platters are used to enable higher rotational speeds.

In the course of technical advancement, sizes were repeatedly discontinued in favor of smaller ones, as these, in addition to the smaller space requirement, are less susceptible to vibrations and have a lower power consumption . Admittedly, less space initially means that a drive has smaller platters and thus provides less storage space. Experience shows, however, that the rapid development of technology in the direction of higher data densities compensates for this limitation in the short term.

The first hard disk drive, the IBM 350 from 1956, was a cabinet with a 24″ disk stack. In the mid-1970s, models with a size of 8″ appeared, which were replaced relatively quickly by much more manageable and, above all, lighter 5.25″ hard disk drives. In between, there were also sizes of 14″ and 9″.

5.25″ hard disks were introduced in 1980 by Seagate (then Shugart Technology); their platters are about the size of a CD, DVD or Blu-ray disc. This size has not been manufactured since 1997. Some SCSI server drives and the low-cost ATA drive Quantum Bigfoot were the last representatives of this format. The size of these drives is based on that of 5.25″ floppy disk drives: the width is 5 3⁄4″ (146 mm), the height of full-height drives is 3 1⁄4″ (82.6 mm), and that of half-height drives is 1 5⁄8″ (41.3 mm). There were models with an even lower overall height: the models in the Bigfoot series had an overall height of 3⁄4″ (19 mm) or 1″ (25.4 mm). The depth of 5.25″ hard drives is not specified, but should not be significantly more than 200 mm.

3.5″ hard drives were introduced by IBM in 1987 with the PS/2 series and were standard in desktop computers for a long time. The size of these drives is based on that of 3.5″ floppy disk drives: the width is 4″ (101.6 mm), the height is usually 1″ (25.4 mm). With the ST1181677, Seagate brought out a hard drive with twelve disks and a height of 1.6″ (40.64 mm); Fujitsu also offered drives of this height. The depth of 3.5″ hard drives is 5 3⁄4″ (146 mm).

In many areas, 3.5 ″ hard drives have largely been replaced by 2.5 ″ models or SSDs.

2.5″ hard drives were originally developed for notebooks but are now also used in servers and special devices (multimedia players, USB hard drives), among others; they are now widespread. The width is 70 mm, the depth 100 mm. The traditional height was 1⁄2″ (12.7 mm); there are now heights between 5 mm and 15 mm, with 5 mm, 7 mm and 9.5 mm being common. The permitted height depends on the device in which the hard disk is to be installed. The interface connector is modified compared to the larger designs: with ATA, the pin spacing is reduced from 2.54 mm to 2 mm. There are also four additional pins (43 pins in total), which replace the separate power supply connector of the larger models. 2.5″ hard drives require only an operating voltage of 5 V; the second operating voltage of 12 V needed by larger drives is not required. 2.5″ SATA hard disks have the same connectors as 3.5″ drives; only some of the 5 mm high drives have a special SFF-8784 connector because of the low height available.

Seagate, Toshiba, Hitachi and Fujitsu have been offering 2.5″ hard disk drives for use in servers since 2006. Since April 2008, Western Digital has been marketing the Velociraptor, a 2.5″ hard disk drive (with a height of 15 mm) in a 3.5″ mounting frame, as a desktop hard disk drive.

| Form factor | Height (mm) | Width (mm) | Depth (mm) |
|---|---|---|---|
| 5.25″ | ≤ 82.6 | 146 | > 200 |
| 3.5″ | 25.4 | 101.6 | 146 |
| 2.5″ | 12.7 | 70 | 100 |
| 1.8″ | 8 | 54 | 71–78.5 |

1.8 ″ hard drives have been used in subnotebooks , various industrial applications and large MP3 players since 2003 . The width is 54 mm, the depth between 71 and 78.5 mm, the height 8 mm.

Even smaller sizes with 1.3 ″, 1 ″ and 0.85 ″ hardly play a role. Microdrives were an exception in the early days of digital photography - with a size of 1 ″, they enabled comparatively high-capacity and inexpensive memory cards in CompactFlash Type II format for digital cameras , but have since been replaced by flash memories . In 2005, Toshiba offered hard disk drives with a size of 0.85 ″ and a capacity of 4 gigabytes for applications such as MP3 players.

Layout and function

Physical structure of the unit

A hard disk consists of the following assemblies:

- one or more rotatably attached discs ( Platter , plural: Platters ),

- an axis, also called a spindle , on which the disks are mounted one above the other,

- an electric motor as a drive for the spindle (and thus the disk (s)),

- movable read/write heads (heads),

- one bearing each for the spindle (mostly hydrodynamic plain bearings) and for the actuator axis (and thus the read/write heads) (also magnetic bearings),

- a drive for the actuator axis (and thus for the read/write heads), the actuator,

- the control electronics for motor and head control,

- a DSP for administration, operation of the interface, and control of the read/write heads. Modulation and demodulation of the signals from the read/write heads is carried out by integrated special-purpose hardware rather than directly by the DSP. The processing power required for demodulation is in the range of ~10^7 MIPS.

- ( Flash ) ROM and DDR-RAM for firmware , temporary data and hard disk cache . Currently, 2 to 64 MiB are common .

- the interface for addressing the hard disk from the outside and

- a sturdy housing .

Technical structure and material of the data disks

Since the magnetizable layer should be particularly thin, the disks consist of a non-magnetizable base material with a thin magnetizable cover layer. Surface-treated aluminum alloys are often used as the base material. For disks with a high data density, however, magnesium alloys, glass or glass composites are primarily used, as these materials show less diffusion. They must be as dimensionally stable as possible (under both mechanical and thermal stress) and have a low electrical conductivity in order to keep eddy currents small.

An iron oxide or cobalt top layer approximately one micrometer thick is applied to the disks. Today's hard disks are produced by sputtering so-called "high storage density media" such as CoCrPt. The magnetic layer is additionally covered with a protective layer of diamond-like carbon ("carbon overcoat") in order to avoid mechanical damage. On top of this there is a 0.5–1.5 nm thick lubricant layer. The future miniaturization of the magnetic "bits" requires research into "ultra high storage density media" as well as alternative concepts, as the superparamagnetic limit is slowly being approached. In addition, an increase in data density was achieved through better carrier material and through optimization of the writing process.

Glass was used as the material for the disks in IBM desktop hard drives from 2000 to 2002 (Deskstar 75GXP/40GV DTLA-30xxxx, Deskstar 60GXP/120GXP IC35Lxxxx). However, newer models from IBM's hard disk division (taken over by Hitachi in 2003) use aluminum again, with the exception of server hard disks. One or more rotating disks lying one above the other are located in the hard disk housing. Up to now, hard disks with up to twelve disks have been built; one to four are currently common. Energy consumption and noise generation within a hard drive family increase with the number of disks. It is common to use all surfaces of the platters (n disks, 2n surfaces and read/write heads). Some drive sizes (e.g. a 320 GB drive with 250 GB per disk) manage with an odd number of read/write heads (here: 3) and leave one surface unused.
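The head count in the 320 GB example can be reproduced with a short calculation. This is a sketch that assumes both surfaces of a platter hold the same amount of data; the function names are illustrative.

```python
import math


def heads_needed(drive_capacity_gb: float, capacity_per_platter_gb: float) -> int:
    """Number of surfaces (and thus read/write heads) needed for a capacity.

    Assumption: each platter surface stores half of the platter capacity.
    """
    per_surface_gb = capacity_per_platter_gb / 2
    return math.ceil(drive_capacity_gb / per_surface_gb)


def platters_needed(heads: int) -> int:
    """Each platter offers two surfaces, so an odd head count leaves one unused."""
    return math.ceil(heads / 2)


if __name__ == "__main__":
    heads = heads_needed(320, 250)
    print(heads, platters_needed(heads))
```

For the example from the text this gives three heads on two platters, so one of the four available surfaces stays unused.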


With the replacement of longitudinal magnetic recording by perpendicular magnetic recording (PMR), a storage principle known since the 1970s but not mastered at the time, intensive research since 2000 succeeded in further increasing the data density. The first hard disk with this storage technology came from Hitachi in 2005: a 1.8″ hard disk with 60 gigabytes. Most hard drives have been using this technology since 2008 (from 200 GB per disk at 3.5″). Since around 2014, some drives have been using shingled magnetic recording (SMR), in which a data track extends into its two neighboring tracks; in this case, several parallel tracks may have to be written together, or neighboring tracks have to be repaired after writing, which leads to a lower effective write data rate. This enables 3.5″ hard drives with a capacity of over 12 TB (as of December 2017). By using two-dimensional magnetic recording (TDMR), the data density can be increased by another 10%, but this requires several read heads per side and more complex electronics, which is why its use is reserved for expensive drives with very large capacities.

Axis bearings and speeds

Hard drives used in personal computers or home PCs, currently mostly disks with ATA, SATA, SCSI or SAS interface, rotate at speeds of 5,400 to 10,000 min⁻¹. In high-performance computers and servers, hard disks that reach 10,000 or 15,000 min⁻¹ are mainly used. For 2.5-inch hard disks for mobile devices, spindle speeds are in the range of 5,400 to 7,200 min⁻¹, with 5,400 min⁻¹ being very common. The speed must be maintained with high precision, as it determines the duration of a bit; as with head positioning, a control loop (servo) is used here as well.
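How the spindle speed fixes the duration of a bit can be illustrated with a rough calculation. The number of bits per track used below is a purely hypothetical value, and the sketch ignores gaps, servo fields and zone differences.

```python
def revolution_time_ms(rpm: float) -> float:
    """Time for one full revolution in milliseconds."""
    if rpm <= 0:
        raise ValueError("rpm must be positive")
    return 60_000.0 / rpm


def bit_time_ns(rpm: float, bits_per_track: int) -> float:
    """Duration of one bit passing under the head, in nanoseconds.

    bits_per_track is a hypothetical figure chosen only for illustration.
    """
    if bits_per_track <= 0:
        raise ValueError("bits_per_track must be positive")
    return revolution_time_ms(rpm) * 1e6 / bits_per_track


if __name__ == "__main__":
    for rpm in (5_400, 7_200, 10_000, 15_000):
        print(rpm, round(revolution_time_ms(rpm), 2), "ms per revolution")
```

Because the bit duration scales inversely with the speed, any drift in rotation shifts the timing of every bit on the track, which is why the speed is regulated in a control loop.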

The spindles of earlier hard drives (up to 2000) ran on ball bearings; more recently, hydrodynamic plain bearings ("fluid dynamic bearing", FDB) have been predominantly used. These are characterized by a longer service life, lower noise and lower manufacturing costs.

The read / write head unit

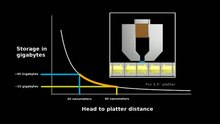

The read/write head (magnetic head) on the head arm, in principle a tiny electromagnet, magnetizes tiny areas of the disk surface in different directions and thus writes the data to the hard drive. The read/write heads float on an air cushion created by the friction of the air on the rotating disk surface (see ground effect). In 2000 the flying height was in the range of about 20 nm. Because of this short distance, the air inside the hard drive housing must not contain any impurities. With newer hard disks using perpendicular recording technology, this distance shrinks to 5 to 6 nm. Disks announced in 2011 with 1 terabyte per platter allow flying heights of no more than 3 nm, so that the signal is not weakened too much by spacing loss. Like semiconductors, hard drives are therefore manufactured in clean rooms. In this context, the ground effect is very useful for maintaining the correct flying height of the read/write head over the rotating disk.

Until about 1994, data was read out through the voltage that the magnetic field of the magnetized disk surface induced in the coil of the read/write head. Over the years, however, the areas on which individual bits are stored became smaller and smaller because of the increasing data density. To read this data, smaller and more sensitive read heads were required. These were developed after 1994: MR read heads and, a few years later, GMR read heads (giant magnetoresistance). The GMR read head is an application of spintronics.

Head positioning

In the early days of hard disks, the read/write heads were positioned by stepper motors, just as in floppy disk drives; the track spacing was still large (see also actuator). Moving coil (voice coil) systems, which were controlled in a control loop via magnetic information on a dedicated disk surface (dedicated servo), achieved greater positioning accuracy and thus a higher track density, but, like stepper motor systems, they were sensitive to differing thermal expansion and mechanical inaccuracies.

Later systems, which are still common today, use magnetic position information that is embedded at regular intervals between the data sectors on each of the surfaces (embedded servo). This method is electronically more complex, but mechanically simpler and very insensitive to disruptive influences. The servo information is usually preceded by a special marker that switches the head signal from data mode to servo mode; the information is read and passed on to the positioning system, and a final marker switches back to data mode. The positioning accuracy achieved in this way is well below 1 µm. The track density of the Hitachi Deskstar 7K500 from 2005 is 5.3 tracks/µm and the bit density is 34.3 bits/µm. That is 182 bits/µm².
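The areal density quoted for the Deskstar 7K500 is simply the product of track density and linear bit density, and the track pitch is the reciprocal of the track density. A minimal sketch using the figures from the text (function names are illustrative):

```python
TRACKS_PER_UM = 5.3   # track density from the text
BITS_PER_UM = 34.3    # linear bit density along a track, from the text


def areal_density_bits_per_um2(tracks_per_um: float, bits_per_um: float) -> float:
    """Bits per square micrometer = tracks per µm times bits per µm."""
    return tracks_per_um * bits_per_um


def track_pitch_nm(tracks_per_um: float) -> float:
    """Center-to-center track spacing in nanometers."""
    return 1000.0 / tracks_per_um


if __name__ == "__main__":
    print(round(areal_density_bits_per_um2(TRACKS_PER_UM, BITS_PER_UM)))
    print(round(track_pitch_nm(TRACKS_PER_UM)))
```

The track pitch comes out at a little under 190 nm, which shows why positioning has to be far more precise than 1 µm.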

One-piece head support systems are limited in precision by the inertia of the head arm. Multi-stage positioning (e.g. "Triple Stage Actuator" at Western Digital) enables higher accuracy and thus higher data density.

Parking the heads

To protect the disk surfaces from the read/write heads touching down (the so-called head crash), the heads move into the landing zone before the hard disk is switched off, where they are fixed in place. If the supply voltage suddenly fails, the spindle motor is switched to act as a generator, and the energy obtained in this way produces a swivel pulse for the head arm. This parking increases the shock resistance of hard drives during transport or modification. The parking position can be outside the disks or on the inner part of the disks. In the latter case, the read/write head is placed on a predefined area of the disk that does not contain any data. The surface of this area has been specially pretreated to prevent the head from sticking and to allow the hard disk to start up again later. The head is held in place, for example, by a magnet.

In older hard disks, the read/write heads were withdrawn from the disk stack on almost all models. Later (1990s, 2000s) an inner parking position was increasingly preferred. As of 2008, both variants are in use. In the case of notebook disks, the parking position outside the disk stack offers additional protection against damage to the disk surfaces during transport (vibration) of the hard disk.

With older hard disks, the heads had to be parked explicitly by command from the operating system before being switched off - stepper motors required many coordinated impulses for parking, which were very difficult or impossible to generate after the supply voltage was lost. The heads of modern hard disks can also be parked explicitly, since the described automatic parking mechanism can lead to increased wear and tear after the supply voltage has been cut off. The park command is now automatically issued by the device driver when the system is shut down.

In modern laptops, an acceleration sensor ensures that the head arm is parked during a possible free fall, in order to limit the damage if the computer is dropped.

Hard drive enclosures

The housing of a hard drive is very solid. It is usually a cast part made of an aluminum alloy, fitted with a stainless steel sheet metal cover. If a hard disk is opened in normal, unfiltered air, even the smallest dust or smoke particles, fingerprints, etc. can lead to irreparable damage to the disk surfaces and the read/write heads.

The housing is dust-tight, but in the case of air-filled drives it is usually not hermetically sealed. A small opening fitted with a filter allows air to enter or exit when the air pressure fluctuates (for example, because of changes in temperature or atmospheric pressure) in order to equalize the pressure difference. This opening (see adjacent figure) must not be closed. Since the air pressure in the housing decreases with increasing altitude above sea level, but a minimum pressure is required for operation, these hard drives may only be operated up to a certain maximum altitude. This is usually noted in the associated data sheet. The air is required to prevent direct contact between the read/write head and the disk surface (head crash); see also the section The read/write head unit above. In newer drives, an elastic membrane is used instead of the filter, which allows the system to adapt to changing pressure conditions by bulging in one direction or the other.

Some hard drive models are filled with helium and, unlike the air-filled drives, are hermetically sealed. Compared to air, helium has a lower density and higher thermal conductivity . The lower density of helium results in fewer disruptive flow effects, which lead to reduced forces acting on the motor. Vibrations caused by the positioning of the support arms are also reduced. As a result, the distances between the individual disks can be reduced and more of them can be integrated with the same overall height, which leads to a higher storage density of these disk drives.

Installation

Up until around the 1990s, hard disks had to be installed in a defined position; as a rule, only horizontal operation (but not "overhead") or a vertical position ("on edge") was permitted. This is no longer required for today's drives and is no longer specified; they can be operated in any position. All hard drives are sensitive to vibration during operation, as this can disturb the positioning of the heads. If a hard disk is mounted elastically, this point must be given particular consideration.

Saving and reading of bits

The disk with the magnetic layer in which the information is stored rotates past the read/write heads (see above). When reading, changes in the magnetization of the surface cause a voltage pulse in the read head through electromagnetic induction. Until the beginning of the 21st century, longitudinal recording was used almost exclusively; only then was perpendicular recording introduced, which allows much higher writing densities but produces smaller signals during reading, which makes it more difficult to handle. When writing, the same head is used to write the information into the magnetic layer. A magnetic head would ideally be designed differently for reading than for writing, for example with regard to the width of the magnetic gap; if it is used for both, compromises must be made that in turn limit performance. There are newer approaches that use special geometries and coil windings to make these compromises more efficient.

A read pulse is only generated when the magnetization changes (mathematically, the read head "sees" only the derivative of the magnetization with respect to the position coordinate). These pulses form a serial data stream that is evaluated by the read electronics as with a serial interface. If, for example, the data happens to have the logic level "0" over a long stretch, no change occurs for that long and thus no signal arrives at the read head. The read electronics can then get out of step and read incorrect values. Various methods are used to remedy this, which insert additional bits with reversed polarity into the data stream in order to avoid excessively long stretches of uniform magnetization. Examples of these methods are MFM and RLL; the general technique is explained under line code.
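As an illustration of such a line code, the following is a minimal sketch of MFM encoding. It models the encoded stream as a list in which a 1 stands for a flux reversal; a clock reversal is inserted only between two consecutive 0 data bits. The function names and the list representation are assumptions made for this example, not the interface of any real controller.

```python
from typing import List


def mfm_encode(data_bits: List[int], prev_bit: int = 0) -> List[int]:
    """Encode data bits as MFM cells (clock bit followed by data bit)."""
    encoded: List[int] = []
    for bit in data_bits:
        # A clock reversal is written only if the previous and the current
        # data bit are both 0, so long runs of zeros still produce pulses.
        clock = 1 if (prev_bit == 0 and bit == 0) else 0
        encoded += [clock, bit]
        prev_bit = bit
    return encoded


def mfm_decode(encoded: List[int]) -> List[int]:
    """Recover the data bits by dropping the clock bits."""
    return encoded[1::2]


def longest_zero_run(bits: List[int]) -> int:
    """Longest stretch without a flux reversal in the encoded stream."""
    longest = current = 0
    for b in bits:
        current = current + 1 if b == 0 else 0
        longest = max(longest, current)
    return longest


if __name__ == "__main__":
    zeros = [0] * 8
    print(mfm_encode(zeros))                    # reversals despite all-zero data
    print(longest_zero_run(mfm_encode(zeros)))  # short gaps only
```

Even an all-zero data stream now produces regular pulses, so the read electronics can stay synchronized; the price is that two cells are written for every data bit.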

During the write process, depending on the logic level of the bit, a current of different polarity is fed into the magnetic coil of the write head (opposite polarity, but the same strength). The current creates a magnetic field, the field is bundled and guided by the magnetic core of the head. In the gap of the write head, the magnetic field lines then pass into the surface of the hard disk and magnetize it in the desired direction.

Saving and reading of byte-organized data

Today's magnetic disks organize their data, in contrast to random access memories (which arrange them in bytes or in small groups of 2 to 8 bytes), in data blocks (e.g. 512, 2048 or 4096 bytes), which is why this method is called block-based addressing. The interface can only read and write entire data blocks or sectors. (With earlier SCSI hard drives, the drive electronics enabled byte-by-byte access.)

Blocks are read by specifying the linear sector number. The hard disk “knows” where this block is located and reads or writes it on request.

When writing blocks:

- these are first provided with error correction information ( forward error correction ),

- they are subjected to a modulation: In the past GCR , MFM , RLL were common, nowadays PRML and more recently EPRML have replaced them, then

- the read / write head carrier is driven near the track that is to be written,

- the read / write head assigned to the information-carrying surface reads the track signal and carries out the fine positioning. This includes, on the one hand, finding the right track, and on the other hand, hitting this track exactly in the middle.

- If the read/write head is stable on the track and the correct sector is under the read/write head, the modulated block is written.

- If an incorrect position is suspected, the writing process must be stopped immediately so that no neighboring tracks are destroyed (sometimes irreparably).

When reading, these steps are reversed:

- Move the read / write head carrier near the track that is to be read.

- the read / write head, which is assigned to the information-carrying surface, reads the track signal and carries out the fine positioning.

- The track is then read until the desired sector has been found (or a little longer).

- Sectors found in this process are first demodulated and then subjected to error correction using the forward error correction information generated during writing.

- Usually far more sectors are read than the requested sector. These usually end up in the hard drive cache (if not already there), as there is a high probability that they will be needed shortly.

- If a sector was difficult to read (several read attempts necessary, error correction showed a number of correctable errors), it is usually reassigned, i.e. stored in a different location.

- If the sector was no longer readable, a so-called CRC error is reported.

Logical structure of the slices

(A) track (also cylinder), (B) sector, (C) block, (D) cluster . Note: The grouping to the cluster has nothing to do with MFM, but takes place at the level of the file system .

The magnetization of the coating on the disks is the actual information carrier. It is generated by the read/write head on circular, concentric tracks while the disk rotates. A disk typically contains a few thousand such tracks, mostly on both sides. The totality of all identical tracks, i.e. the tracks of the individual platters (surfaces) lying one above the other, is called a cylinder. Each track is divided into small logical units called blocks. A block traditionally contains 512 bytes of user data. Each block has control information (checksums) that ensures that the information was written or read correctly. The entirety of all blocks that have the same angular coordinates on the disks is called a sector (in MFM). The layout of a particular hard disk type, i.e. the number of cylinders (tracks per surface), heads (surfaces) and sectors, is called the hard disk geometry.

With the division into sectors, only a small area of magnetic layer is available for the inner blocks, which is nevertheless sufficient to store a data block. The outer blocks, however, are much larger and take up much more magnetic layer area than necessary. Since RLL, this space on the outside is no longer wasted: data is written just as densely there as on the inside. A track in the outer area now contains more blocks than one in the inner area, so a division into sectors is no longer possible. At constant rotational speed, the hard disk electronics can and must read and write faster on the outside than on the inside. As a result of this development, the term sector lost its original meaning and is now often used synonymously with block (contrary to its actual meaning).

When the numbering of the blocks exceeded the word limit (16 bit) with increasing hard disk capacities, some operating systems reached their limits too early, so clusters were introduced. These are groups of a fixed number of blocks each (e.g. 32) that are, sensibly, physically adjacent. The operating system then no longer addresses individual blocks, but uses these clusters as the smallest allocation unit at its (higher) level. This relationship is only resolved at the hardware driver level.
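The effect of clustering on the addressable capacity can be sketched as follows. The 16-bit address width and the cluster size of 32 blocks come from the text; the function names and the 512-byte block size used in the example are assumptions.

```python
def cluster_to_blocks(cluster: int, blocks_per_cluster: int = 32) -> range:
    """Block numbers that belong to a given cluster (resolved at driver level)."""
    if cluster < 0 or blocks_per_cluster <= 0:
        raise ValueError("cluster must be >= 0 and blocks_per_cluster > 0")
    first = cluster * blocks_per_cluster
    return range(first, first + blocks_per_cluster)


def max_addressable_bytes(address_bits: int, blocks_per_cluster: int,
                          block_size: int = 512) -> int:
    """Largest capacity reachable with a fixed-width allocation-unit number."""
    return (2 ** address_bits) * blocks_per_cluster * block_size


if __name__ == "__main__":
    print(max_addressable_bytes(16, 1))    # addressing single blocks
    print(max_addressable_bytes(16, 32))   # addressing clusters of 32 blocks
    print(list(cluster_to_blocks(2))[:3])  # first blocks of cluster 2
```

With the same 16-bit numbers, grouping 32 blocks per cluster raises the reachable capacity by a factor of 32, at the cost of a coarser smallest allocation unit.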

With modern hard drives, the true geometry, i.e. the number of sectors, heads and cylinders managed by the hard drive controller, is usually not visible from the outside (i.e. to the computer or the hard drive driver). In the past, a virtual hard disk with completely different geometry data was presented to the computer in order to overcome the limitations of PC-compatible hardware. For example, a hard drive that actually had only four heads could appear to the computer as having 255 heads. Today a hard drive usually simply reports the number of its blocks in LBA mode.
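The relationship between a cylinder/head/sector address and a linear block number can be sketched with the conventional conversion formula. The geometries used below (4 or 255 heads, 63 sectors per track) are hypothetical values chosen to mirror the example in the text.

```python
from typing import Tuple


def chs_to_lba(cylinder: int, head: int, sector: int,
               heads: int, sectors_per_track: int) -> int:
    """Convert a CHS address to a linear block address (sectors count from 1)."""
    if cylinder < 0 or not 0 <= head < heads or not 1 <= sector <= sectors_per_track:
        raise ValueError("CHS address outside the given geometry")
    return (cylinder * heads + head) * sectors_per_track + (sector - 1)


def lba_to_chs(lba: int, heads: int, sectors_per_track: int) -> Tuple[int, int, int]:
    """Convert a linear block address back to CHS for a given geometry."""
    if lba < 0:
        raise ValueError("lba must be non-negative")
    cylinder, rest = divmod(lba, heads * sectors_per_track)
    head, sector_index = divmod(rest, sectors_per_track)
    return cylinder, head, sector_index + 1


if __name__ == "__main__":
    block = 100_000
    print(lba_to_chs(block, heads=4, sectors_per_track=63))    # "real" geometry
    print(lba_to_chs(block, heads=255, sectors_per_track=63))  # reported geometry
```

The same block is described by two different CHS tuples depending on the geometry assumed, which is why reporting a plain block count in LBA mode is the simpler interface.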

Today's hard disks internally divide the cylinders radially into zones, whereby the number of blocks per track is the same within a zone but increases from zone to zone going from the inside to the outside (zone bit recording). The innermost zone has the fewest blocks per track and the outermost zone the most, which is why the sustained transfer rate decreases when moving from the outer zones to the inner ones.
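Why the sustained transfer rate falls towards the inner zones can be shown with a simple model: at constant rotational speed, the rate is proportional to the number of blocks per track. The zone table below is purely hypothetical, and the sketch ignores head switches, seeks and servo overhead.

```python
def sustained_rate_bytes_per_s(blocks_per_track: int, block_size: int,
                               rpm: float) -> float:
    """Bytes passing under the head per second on one track."""
    return blocks_per_track * block_size * rpm / 60


# Hypothetical zones: (name, blocks per track), ordered from outside to inside.
ZONES = [("outer", 2000), ("middle", 1500), ("inner", 1000)]

if __name__ == "__main__":
    for name, blocks in ZONES:
        rate = sustained_rate_bytes_per_s(blocks, 512, 7200)
        print(f"{name}: {rate / 1e6:.1f} MB/s")
```

In this model the outermost zone delivers twice the rate of the innermost one simply because twice as many blocks pass under the head per revolution.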

The hard disk controller can hide defective blocks in the so-called hot-fix area and substitute a block from a reserve area. To the computer it always appears as if all blocks are free of defects and usable. However, this process can be traced via SMART using the Reallocated Sector Count (RSC) parameter. A hard drive whose RSC value increases noticeably within a short period of time is likely to fail soon.

Advanced format

Since 2010, hard disk models have increasingly used a sectoring scheme with larger sectors, almost exclusively 4096 bytes ("4K"). The larger data blocks allow greater redundancy and thus a lower block error rate (BER) and/or less overall overhead relative to the amount of user data. To avoid compatibility problems after decades of (almost) exclusive use of 512-byte blocks, most drives emulate a block size of 512 bytes ("512e") at their interface. A physical block of 4096 bytes is presented as eight logical blocks of 512 bytes; the drive firmware then independently carries out the additional read and write operations required. In principle, this ensures that the drives can be used with existing operating systems and drivers.

The 512e emulation keeps Advanced Format drives compatible with existing operating systems, but there may be a performance penalty when physical blocks are only partially written (the firmware then has to read, modify and write back the physical block). As long as the allocation units (clusters) of the file system line up exactly with the physical sectors, this is not a problem; it becomes one when the structures are offset from one another. Current Linux versions, Windows from Vista SP1 and macOS from Snow Leopard create new partitions with an alignment suited to Advanced Format drives; this was not the case with Windows XP, which remained relatively widespread even after its end of support in 2014. Under Windows XP, partitions aligned to "4K" can be created with various additional tools, which are usually provided by the drive manufacturer.
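The alignment problem can be made concrete with a small check. The sketch below assumes a 512e drive (512-byte logical, 4096-byte physical blocks); the start sectors 63 and 2048 are used as illustrative examples of an older and a newer partitioning convention, and the function names are hypothetical.

```python
LOGICAL = 512     # bytes per emulated (512e) logical block
PHYSICAL = 4096   # bytes per physical block

def is_aligned(start_lba: int) -> bool:
    """True if a partition starting at this logical block begins on a physical block boundary."""
    return (start_lba * LOGICAL) % PHYSICAL == 0

def physical_blocks_touched(start_lba: int, offset_bytes: int, length: int) -> int:
    """Number of physical blocks covered by a write of `length` bytes at `offset_bytes` into the partition."""
    first = start_lba * LOGICAL + offset_bytes
    last = first + length - 1
    return last // PHYSICAL - first // PHYSICAL + 1

for start in (63, 2048):
    print(start, is_aligned(start), physical_blocks_touched(start, 0, 4096))
```

For a misaligned start, every 4 KiB cluster straddles two physical blocks, so the firmware has to read, modify and write back both; with an aligned start, each cluster maps to exactly one physical block.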

Although 48-bit LBA can address up to 128 PiB with 512-byte sectors, using the MBR as the partition table already imposes limits on hard disks larger than 2 TiB. Because its fields are only 32 bits wide, the maximum partition size is limited to 2 TiB (2^32 = 4,294,967,296 sectors at a block/sector size of 512 bytes); furthermore, the first block of a partition must lie within the first 2 TiB, which limits the maximum usable hard disk size to 4 TiB. Unrestricted use of hard disks larger than 2 TiB requires an operating system that supports GPT. Booting from hard disks with GPT is subject to further restrictions depending on the operating system and firmware (BIOS, EFI, EFI + CSM).
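The limits quoted above follow directly from the field widths. The following sketch recomputes them; it rounds 2^32 − 1 up to 2^32 for readability, and the helper name is chosen for illustration.

```python
TiB = 2**40
PiB = 2**50

def mbr_partition_limit(sector_size: int, field_bits: int = 32) -> int:
    """Largest partition (in bytes) expressible with a 32-bit sector count."""
    return 2**field_bits * sector_size

lba48_limit = 2**48 * 512                       # 48-bit LBA with 512-byte sectors
max_partition = mbr_partition_limit(512)        # MBR length field
max_usable = max_partition + max_partition      # start within 2 TiB + 2 TiB length
max_partition_4kn = mbr_partition_limit(4096)   # same field, native 4K sectors

print(lba48_limit // PiB, "PiB addressable with 48-bit LBA")
print(max_partition // TiB, "TiB per MBR partition (512-byte sectors)")
print(max_usable // TiB, "TiB usable via MBR (512-byte sectors)")
print(max_partition_4kn // TiB, "TiB per MBR partition (4096-byte sectors)")
```

The last line shows why drives with native 4096-byte addressing can go further with an MBR: the same 32-bit fields count sectors that are eight times larger.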

An emulation is increasingly no longer necessary ("4K native" or "4Kn"). For example, external hard drives (such as the Elements series from Western Digital) are not restricted by any incompatible drive controllers. Due to the native addressing, these disks can also be used with an MBR partition table beyond the 4-TiB limit. Booting from such data media is supported by Windows from Windows 8 / Windows Server 2012.

Speed

The hard drive is one of the slowest components of a PC's core system, so the speeds of its individual functions are of particular importance. The most important technical parameters are the continuous transfer rate (sustained data rate) and the average access time (data access time). The values can be found in the manufacturers' data sheets.

The continuous transfer rate is the amount of data per second that the hard disk transfers on average when reading successive blocks. The values when writing are mostly similar and are therefore usually not given. In earlier hard drives, the drive electronics needed more time to process a block than the pure hardware read time. Therefore, "logically consecutive" blocks were not physically stored consecutively on the platter, but with one or two blocks offset. In order to read all blocks of a track "consecutively", the platter had to rotate two or three times ( interleave factor 2 or 3). Today's hard disks have sufficiently fast electronics and store logically consecutive blocks also physically consecutively.
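The interleaving described above can be sketched as a mapping from physical slots on a track to logical block numbers. This is an illustrative reconstruction of the general idea, not the layout routine of any particular controller; the function name is hypothetical.

```python
def interleaved_layout(sectors_per_track: int, interleave: int) -> list[int]:
    """Return a list where index = physical slot and value = logical block number."""
    layout = [-1] * sectors_per_track
    slot = 0
    for logical in range(sectors_per_track):
        while layout[slot] != -1:            # slot already taken: slide to the next free one
            slot = (slot + 1) % sectors_per_track
        layout[slot] = logical
        slot = (slot + interleave) % sectors_per_track
    return layout

print(interleaved_layout(8, 1))  # no interleave: blocks in physical order
print(interleaved_layout(8, 2))  # one foreign block between consecutive logical blocks
```

With an interleave factor of 2, logically consecutive blocks are one slot apart, so the electronics have the time of one block to finish processing, and a full track takes two revolutions to read.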

Both when writing and when reading, the read / write head of the disk must be moved to the desired track before accessing a certain block and then it must be waited until the correct block is passed under the head by the rotation of the disk. As of 2009, these mechanically caused delays are around 6–20 ms, which is a small eternity by the standards of other computer hardware. This results in the extremely high latency of hard disks compared to RAM , which still has to be taken into account at the software development and algorithmic level .

The access time is made up of several parts:

- the seek time (time to change tracks),

- the rotational latency and

- the command latency (controller overhead).

The seek time is determined by the strength of the drive that moves the read/write head (servo). It varies with the distance the head has to cover. Usually only the mean value for a change from one random track to another random track is given (weighted according to the number of blocks on the tracks).

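The rotational latency is a direct consequence of the rotational speed. On average, it takes half a revolution for a given sector to pass under the head. This results in the fixed relationship:

$$t_\text{latency} = \frac{1}{2\,n}$$

where $n$ is the rotational speed, or, as a numerical-value equation for the latency in milliseconds and the rotational speed in revolutions per minute:

$$t_\text{latency}\,[\text{ms}] = \frac{30\,000}{n\,[\text{min}^{-1}]}$$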

The command latency is the time the hard disk controller spends interpreting the command and coordinating the necessary actions. This time is negligible nowadays.
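The half-revolution rule for the rotational latency can be checked numerically. The sketch below only applies that rule to a few common rotational speeds; the function name is chosen for illustration.

```python
def rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency: the time for half a revolution, in milliseconds."""
    return 30_000 / rpm   # 60,000 ms per minute, divided by 2, divided by rpm

for rpm in (3600, 4200, 5400, 7200, 15000):
    print(rpm, "rpm:", round(rotational_latency_ms(rpm), 1), "ms")
```

The results agree with the latency values that data sheets typically quote for these speeds, for example 5.6 ms at 5,400 rpm and 2.0 ms at 15,000 rpm.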

The significance of these technical parameters for overall system speed is limited. For this reason, another key figure is used in the professional sector: input/output operations per second (IOPS). With small block sizes, IOPS are dominated mainly by the access time. It follows from the definition that two disks of half the size and the same speed hold the same amount of data as one large disk but deliver twice the IOPS.
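A rough upper bound for random IOPS with small blocks can be derived from the access time alone. This is a simplified estimate that ignores transfer time, caching and command queuing; the function name and the example figures are assumptions for illustration.

```python
def random_iops(seek_ms: float, rpm: float, overhead_ms: float = 0.0) -> float:
    """Estimate random small-block IOPS from the mean access time."""
    access_ms = seek_ms + 30_000 / rpm + overhead_ms   # seek + half a revolution + controller
    return 1000 / access_ms

one_disk = random_iops(seek_ms=3.0, rpm=15000)
two_disks = 2 * random_iops(seek_ms=3.0, rpm=15000)   # two half-size disks working independently

print(round(one_disk), "IOPS for one disk")
print(round(two_disks), "IOPS for two disks of the same speed")
```

Because each disk positions its heads independently, spreading the same data over two drives doubles the number of requests that can be served per second.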

| Category | Year | Model | Size (GB) | Rotational speed (min⁻¹) | Data rate (MB/s) | Seek time (ms) | Latency (ms) | Mean access time (ms) |
|---|---|---|---|---|---|---|---|---|
| Server | 1993 | IBM 0662 | 1 | 5,400 | 5 | 8.5 | 5.6 | 15.4 |
| Server | 2002 | Seagate Cheetah X15 36LP | 18–36 | 15,000 | 52–68 | 3.6 | 2.0 | 5.8 |
| Server | 2007 | Seagate Cheetah 15k.6 | 146–450 | 15,000 | 112–171 | 3.4 | 2.0 | 5.6 |
| Server | 2017 | Seagate Exos E 2.5″ | 300–900 | 15,000 | 210–315 | | 2.0 | |
| Desktop | 1989 | Seagate ST296N | 0.080 | 3,600 | 0.5 | 28 | 8.3 | 40 |
| Desktop | 1993 | Seagate Marathon 235 | 0.064–0.210 | 3,450 | | 16 | 8.7 | 24 |
| Desktop | 1998 | Seagate Medalist 2510-10240 | 2.5–10 | 5,400 | | 10.5 | 5.6 | 16.3 |
| Desktop | 2000 | IBM Deskstar 75GXP | 20–40 | 5,400 | 32 | 9.? | 5.6 | 15.3 |
| Desktop | 2009 | Seagate Barracuda 7200.12 | 160–1000 | 7,200 | 125 | 8.5 | 4.2 | 12.9 |
| Desktop | 2019 | Western Digital Black WD6003FZBX | 6,000 | 7,200 | 201 | | 4.2 | 14.4 |
| Notebook | 1998 | Hitachi DK238A | 3.2–4.3 | 4,200 | 8.7–13.5 | 12 | 7.1 | 19.3 |
| Notebook | 2008 | Seagate Momentus 5400.6 | 120–500 | 5,400 | 39–83 | 14 | 5.6 | 18 |

The development of hard disk access time can no longer keep pace with that of other PC components such as CPU , RAM or graphics card , which is why it has become a bottleneck . In order to achieve high performance , a hard disk must, as far as possible, always read or write large amounts of data in successive blocks, because the read / write head does not have to be repositioned.

This is achieved, among other things, by performing as many operations as possible in the RAM and coordinating the positioning of the data on the disk with the access pattern. A large cache in the computer's main memory, which is made available by all modern operating systems, is used for this. In addition, the hard disk electronics have a cache (as of 2012 for disks from 1 to 2 TB mostly 32 or 64 MiB), which is mainly used to decouple the interface transfer rate from the unchangeable transfer rate of the read / write head.

In addition to using a cache, there are other software strategies for increasing performance. They are particularly effective in multitasking systems, where the hard disk subsystem is confronted with several or many read and write requests at the same time. It is then usually more efficient to rearrange these requests into a more favorable order. This is controlled by a hard disk scheduler, often in conjunction with Native Command Queuing (NCQ) or Tagged Command Queuing (TCQ). The simplest principle follows the same strategy as an elevator control: the tracks are initially approached in one direction, and the requests are processed, for example, in order of monotonically increasing track numbers. Only when all of these have been processed does the movement reverse and continue in the direction of monotonically decreasing track numbers, and so on.
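The elevator principle described above can be sketched in a few lines. This is a minimal illustration and not the implementation of any real scheduler or of NCQ/TCQ; the function names and the request list are assumptions chosen for the example.

```python
def elevator_order(pending: list[int], head: int) -> list[int]:
    """Serve tracks at or above the head in ascending order, then the rest in descending order."""
    upward = sorted(t for t in pending if t >= head)
    downward = sorted((t for t in pending if t < head), reverse=True)
    return upward + downward

def head_travel(order: list[int], head: int) -> int:
    """Total number of tracks the head crosses when serving requests in the given order."""
    total = 0
    for track in order:
        total += abs(track - head)
        head = track
    return total

requests = [98, 183, 37, 122, 14, 124, 65, 67]   # hypothetical pending track numbers
start = 53                                        # hypothetical current head position

print("arrival order:", head_travel(requests, start), "tracks")
print("elevator order:", head_travel(elevator_order(requests, start), start), "tracks")
```

In this example the reordered sequence needs less than half the head movement of the arrival order, which is the gain the scheduler is after.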

Until around 1990, hard disks usually had so little cache (0.5 to a maximum of 8 KiB ) that they could not buffer a complete track (at that time 8.5 KiB or 13 KiB). Therefore, the data access had to be slowed down or optimized by interleaving . This was not necessary for disks with high-quality SCSI or ESDI controllers or for the IDE disks emerging at the time.

SSDs ("solid-state disks"; they work with semiconductor technology, a sub-area of solid-state physics), which have been in use since around 2008, have significantly shorter access times due to their operating principle. Since 2011 there have also been combined drives that, transparently to the computer, implement part of the capacity as an SSD that serves as a buffer for the conventional disk.

Partitions

From the point of view of the operating system, hard disks can be divided into several areas by partitioning . These are not real drives, they are only represented as such by the operating system. They can be thought of as virtual hard disks, which are represented by the hard disk driver as separate devices to the operating system. The hard disk itself does not “know” these partitions, it is a matter of the higher-level operating system.

Each partition is usually formatted with a file system by the operating system . Under certain circumstances, depending on the file system used, several blocks are combined into clusters , which are then the smallest logical unit for data that is written to the disk. The file system ensures that data can be stored on the disk in the form of files. A table of contents in the file system ensures that files can be found again and stored in a hierarchical manner. The file system driver manages the used, available and defective clusters. Well-known examples of file systems are FAT , NTFS (Windows), HFS Plus (Mac OS), and ext4 (Linux).

Disk arrays

If large amounts of data are to be stored on several inexpensive, small, well-proven disks instead of a single large, expensive one, the disks can be combined as JBOD using a Logical Volume Manager, or additionally protected against failures with RAID and combined into disk arrays. This can be done in a server or in a network-attached storage device that serves several users, or even in a dedicated storage area network. Alternatively, (mostly external) cloud computing can be used, which itself relies on the techniques mentioned so far.

Noise avoidance

In order to reduce the noise of the drives when accessing data, most ATA and SATA hard disks intended for desktop use have supported Automatic Acoustic Management (AAM) since around 2003; that is, they offer a configuration option that trades longer access times for a lower noise level. If the hard disk is operated in a quiet mode, the read/write heads are accelerated less strongly so that accesses are quieter. The running noise of the platter stack and the data transfer rate are not changed, but the access time increases. Mountings with elastic elements, known as "decoupling", serve the same purpose: they are intended to prevent the vibrations of the hard disk from being transmitted to the housing components.

Interfaces, bus system and jumpers

Originally (until 1990/91), what is now understood as the hard disk interface was not located on the drive itself in consumer disks. A controller in the form of an ISA plug-in card was required instead. Among other things, this controller addressed the disk via an ST506 interface (with the modulation standards MFM, RLL or ARLL). The capacity of the disk also depended on the controller, as did the data reliability. A 20 MB MFM disk could store 30 MB on an RLL controller, but with a higher error rate.

Due to the separation of controller and medium, the latter had to be low-level formatted (sectored) before use. In contrast to these earlier hard disks with stepper motors, more modern hard disks are equipped with linear motors, which require the sectoring and, above all, the servo information to be written during manufacture, and which can no longer be low-level formatted afterwards.

With ESDI , part of the controller has been integrated into the drive to increase speed and reliability. With SCSI and IDE disks, the separation of controller and storage device ended. Instead of the earlier controllers, they use host bus adapters , which provide a much more universal interface. HBAs exist as separate plug-in cards as well as integrated on motherboards or in chipsets and are often still referred to as "controllers".

Serial ATA interfaces are almost exclusively used as interfaces for internal hard drives in the desktop area today . Until a few years ago, parallel ATA (or IDE, EIDE) interfaces were still common. However, the IDE interface is still widely used in game consoles and hard drive recorders .

In addition to SATA, SAS and Fibre Channel have become established for servers and workstations. For a long time, mainboards were usually provided with two ATA interfaces (for a maximum of four drives), but these have now been almost completely replaced by (up to 10) SATA interfaces.

A fundamental problem with parallel transmissions is that, as the speed increases, it becomes more and more difficult to manage the different transit times of the individual bits through the cable as well as crosstalk . As a result, the parallel interfaces were increasingly reaching their limits. Serial lines, especially in connection with differential line pairs, now allow significantly higher transmission rates.

ATA (IDE)

With an ATA hard drive, jumpers determine whether it is the drive with address 0 or 1 of the ATA interface (device 0 or 1, often referred to as master or slave ). Some models allow the capacity of the drive reported to the operating system or BIOS to be restricted , so that the hard drive can still be used in the event of incompatibilities ; however, the unreported disk space is given away.

By defining the ATA bus address, two hard drives can be connected to an ATA interface on the mainboard . Most mainboards have two ATA interfaces, called primary ATA and secondary ATA , ie “first” and “second ATA interface”. Therefore, a total of up to four hard drives can be connected to both ATA interfaces on the motherboard. Older motherboard BIOS only allow the computer to be started from the first ATA interface, and only if the hard disk is jumpered as the master .

The ATA interfaces are used not only by hard drives but also by CD-ROM and DVD drives. This means that, without an additional card, the total number of hard disks plus removable-media drives (CD-ROM, DVD) is limited to four (floppy disk drives use a different interface). CompactFlash cards can be connected via an adapter and used like a hard disk.

There are a few things to consider when expanding:

- The first drive is to be jumpered as the “master” - usually the default setting for drives; only a possibly second drive on a cable is jumpered to "slave". Some drives also have the third option, “Single Drive”. This is used when the drive is alone on the cable; if a "slave" drive is added, you have to jumper the first one as the "master". This option is often called “Master with Slave present” for explanation.

- Where master and slave are located (at the end of the cable or "in the middle") does not matter (unless both drives are jumpered to Cable Select). "Slave alone" works most of the time, but is not considered a proper configuration and is often prone to failure. Exception: with the newer 80-conductor cables, the slave should be connected to the middle connector; the plugs are labeled accordingly.

The ideal distribution of the drives across the individual connections is debatable. Traditionally, two devices on the same cable share the bandwidth, and the slower device occupies the bus longer and can therefore slow down the faster one. In the common configuration with one hard disk and one CD/DVD drive, it is therefore advantageous to connect each of the two devices with its own cable to a separate interface on the motherboard. In addition to the jumpers, there is an automatic mode for determining the addresses ("cable select"), which, however, requires suitable connection cables; these were not widely used in the past but have been standard since ATA-5 (80-conductor cable).

ESDI

Parallel SCSI

Unlike IDE hard disks, which can take only one of two addresses, parallel SCSI hard disks can be set to one of 7 or 15 addresses, depending on the controller used. For this purpose, older SCSI drives have three or four jumpers that define the address, called the SCSI ID, allowing up to 7 or 15 devices to be addressed individually per SCSI bus. The maximum number of devices follows from the number of ID bits (three for SCSI, four for Wide SCSI), less the one address occupied by the controller itself. Occasionally, the address was set with a small rotary switch instead of jumpers. In modern systems, the IDs are assigned automatically (according to the sequence on the cable), and the jumpers are only relevant if this assignment is to be influenced.

There are also other jumpers such as the (optional) write protection jumper, which allows a hard disk to be locked against writing. Furthermore, depending on the model, switch-on delays or the start behavior can be influenced.

SATA

SATA power connector wire colors: black = ground (0 V), orange = 3.3 V, red = 5 V, yellow = 12 V.

Hard disks with Serial ATA (S-ATA or SATA) interfaces have been available since 2002. The advantages over ATA are the higher possible data throughput and the simplified cable routing. Extended versions of SATA have additional functions that are particularly relevant for professional applications, such as the ability to exchange drives during operation (hot-plug). SATA has since become the de facto standard; the latest hard disks are no longer offered in IDE versions, since the transfer rates theoretically possible with IDE have almost been reached.

In 2005, the first hard drives with Serial Attached SCSI (SAS) were presented as a potential successor to SCSI for the server and storage area. This standard is partially backwards compatible with SATA.

Serial Attached SCSI (SAS)

The SAS technology is based on the established SCSI technology, but sends the data serially and does not connect the devices via a common bus, but individually via dedicated ports (or port multipliers). In addition to the higher speed compared to conventional SCSI technology, over 16,000 devices can theoretically be addressed in a network. Another advantage is the maximum cable length of 10 meters. SATA connectors are compatible with SAS, as are SATA hard drives; However, SAS hard drives require a SAS controller.

Fibre Channel interface

Communication via the Fibre Channel interface is even more powerful; it was originally developed primarily for use in storage subsystems. As with USB, the hard disks are not addressed directly, but via an FC controller, FC hubs or FC switches.

Queuing in SCSI, SATA or SAS data transfer

So-called queues are used primarily with SCSI disks and newer SATA hard disks. These are software processes within the firmware that manage the data between the request from the computer and the physical access to the storage disk and, if necessary, store it temporarily. When queuing, they place the requests to the drive in a list and sort them according to their physical position on the disk and the current position of the read/write heads, in order to read as much data as possible with as few rotations and head movements as possible. The hard drive's own cache plays a major role here, as the queues are stored in it (see also: Tagged Command Queuing, Native Command Queuing).

Forerunner of the serial high-speed interfaces

The first widespread serial interfaces for hard disks were SSA (Serial Storage Architecture, developed by IBM) and Fibre Channel in the variant FC-AL (Fibre Channel Arbitrated Loop). SSA hard drives are virtually no longer produced today, but Fibre Channel hard drives continue to be built for use in large storage systems. Fibre Channel describes the protocol used, not the transmission medium. Therefore, despite their name, these hard drives have an electrical rather than an optical interface.

External hard drives

External hard drives are available as local mass storage devices (block devices) or as network mass storage devices ( NAS ). In the first case, the hard disk is connected as a local drive via hardware interface adapters; in the second case, the drive is a remote resource offered by the NAS (a computer connected via a network).

Universal interfaces such as FireWire, USB or eSATA are used to connect external hard drives. For this purpose, a bridge sits between the hard drive and the interface; it has a PATA or SATA connection on one side for the actual hard drive and, on the other side, a USB, FireWire or eSATA connection (or several of these) for connection to the computer. Such external enclosures sometimes contain two hard disks that appear to the computer as a single drive (RAID system).

A network connection is available for NAS systems .

Data protection and data safety

Data protection and data security are extremely sensitive issues, especially for authorities and companies . Authorities and companies must apply security standards, especially in the field of external hard drives, which are used as mobile data storage devices. If sensitive data gets into unauthorized hands, this usually results in irreparable damage. To prevent this and to guarantee the highest level of data security for mobile data transport, the following main criteria must be observed:

- encryption,

- access control and

- management of the cryptographic key.

Encryption

Data can be encrypted by the operating system or directly by the drive ( Microsoft eDrive , Self-Encrypting Drive ). Typical storage locations for the crypto key are USB sticks or smart cards. When stored within the computer system (on the hard drive, in the disk controller, in a Trusted Platform Module ), the actual access key is only generated by combining it with a password or similar (passphrase) to be entered.

Malicious programs (viruses) can cause massive damage to the user by encrypting data or by setting the hardware password to an unknown value. The user then has no way of accessing the contents of the hard disk without knowing the password; a ransom is usually demanded for its (supposed) disclosure (ransomware).

Access control

Various hard disks offer the option of protecting the entire hard disk content with a password directly on the hardware level. However, this basically useful property is little known. An ATA password can be assigned to access a hard disk .

Causes of failure and service life

Typical causes of failure include:

- Thermal problems, to which hard disks are particularly vulnerable, especially the newer, very fast-rotating models.

- If the read/write head touches down on the platter, the hard disk can be damaged (head crash). During operation, the head hovers on an air cushion above the platter and is kept from touching down only by this cushion. The hard disk should therefore not be moved during operation and should not be exposed to vibrations.

- External magnetic fields can impair and even destroy the sectoring of the hard disk.

- Errors in the control electronics or wear and tear of the mechanics lead to failures.

- Conversely, prolonged downtime can also result in the mechanics getting stuck in lubricants and the disk not even starting up (“ sticky disk ”).

The average number of operating hours before a hard disk fails is called MTTF (Mean Time To Failure) for non-repairable disks. For hard disks that can be repaired, an MTBF value (Mean Time Between Failures) is given. All figures on service life are purely statistical values. The lifespan of an individual hard drive therefore cannot be predicted, as it depends on many factors:

- Vibrations and shocks: Strong vibrations can lead to premature (bearing) wear and should therefore be avoided.

- Differences between model series of a manufacturer: depending on the model, certain series can be identified that are considered particularly reliable or error-prone. Statistically sound statements about reliability require a large number of identical drives operated under similar conditions. System administrators who look after many systems can thus gain some experience over the years as to which hard drives tend to behave abnormally and fail prematurely.

- Number of accesses (read head movements): frequent access causes the mechanics to wear out faster than when the drive is idle and only the platter stack is rotating. However, this influence is only minor.

- If the hard disk is operated above the operating temperature specified by the manufacturer, usually 40–55 ° C, the service life suffers. According to a study by Google Inc. (which analyzed internal hard drive failures), there are no increased failures at the upper end of the permissible range.

In general, high-speed server hard disks are designed for a higher MTTF than typical desktop hard disks, so that theoretically a longer service life can be expected. However, continuous operation and frequent accesses can put this into perspective and the hard drives have to be replaced after a few years.

Notebook hard disks are particularly stressed by frequent transports and are accordingly specified with a smaller MTTF than desktop hard disks despite their more robust design.

The manufacturers do not specify the exact shelf life of the stored data. Only magneto-optical processes achieve a persistence of 50 years and more.

Preventive measures against data loss

As a preventive measure against data loss, the following measures are therefore often taken:

- There should always be a backup copy of important data on another data carrier (note the note on failure above under partitioning ).

- Systems that must be highly available, and in which a hard drive failure must not cause business interruption or loss of data, usually use RAID. One configuration is, for example, the mirror set (RAID 1), in which the data is mirrored on two hard drives, thus increasing reliability. More efficient configurations are RAID 5 and higher. A stripe set (RAID 0) of two hard drives increases the speed, but the risk of failure also increases. RAID 0 is therefore not a measure to prevent data loss or to increase the availability of the system.

- ATA hard drives have had SMART, an internal monitoring of the hard drive's reliability, since the late 1990s. The status can be queried from outside. One disadvantage is that SMART is not a standard. Each manufacturer defines its own fault tolerance, i.e. SMART should only be viewed as a general guide. In addition, there are hard disks whose SMART function does not warn of problems even when these have already become noticeable during operation as blocks that are no longer readable.

- Most hard drives can be mounted in any position; in case of doubt, the manufacturer's specifications should be observed. Furthermore, the hard disk must be screwed tightly in order to prevent natural oscillation.

- During installation, measures to protect against ESD should be taken .
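The different failure risks of RAID 0 and RAID 1 mentioned in the list above can be illustrated with a simple probability estimate. The sketch assumes that the disks fail independently with the same probability in a given period and that no failed disk is replaced in that time; the probability value is a made-up example.

```python
def raid0_loss_probability(p: float, disks: int = 2) -> float:
    """Stripe set: data is lost as soon as any one disk fails."""
    return 1 - (1 - p) ** disks

def raid1_loss_probability(p: float, disks: int = 2) -> float:
    """Mirror set: data is lost only if every mirror fails."""
    return p ** disks

p = 0.05  # hypothetical failure probability of one disk in the period considered
print("single disk:", p)
print("RAID 0:", round(raid0_loss_probability(p), 4))
print("RAID 1:", round(raid1_loss_probability(p), 4))
```

Under these assumptions a two-disk stripe set is almost twice as likely to lose data as a single disk, while a two-disk mirror is far less likely to do so.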

Reliable deletion

Regardless of the storage medium used (in this case a hard drive), when a file is deleted, it is usually only noted in the file system that the corresponding data area is now free. However, the data itself remains physically on the hard drive until the corresponding area is overwritten with new data. With data recovery programs, deleted data can often be restored at least in part. This is often used in the preservation of evidence, for example by the investigative authorities (police, etc.).

When partitioning or (quick) formatting , the data area is not overwritten, only the partition table or the description structure of the file system. Formatting tools generally also offer the option of completely overwriting the data carrier.

In order to guarantee that sensitive data is securely deleted, various manufacturers offer software, so-called erasers, which can overwrite the data area several times when deleting. However, even a single overwrite leaves no usable information behind. Alternatively, one of the numerous free Unix distributions that can be started directly from CD can be used (e.g. Knoppix). In addition to universal programs such as dd and shred, there are various open-source programs made specifically for deletion, such as Darik's Boot and Nuke (DBAN). In Apple's Mac OS X, the corresponding functions ("Securely delete trash" and "Overwrite volume with zeros") are already included. The entire free space on the hard disk can also be overwritten in order to prevent deleted but not yet overwritten data (or fragments or copies of it) from being restored. To prevent data from being restored with special hardware, e.g. by data recovery companies or authorities, a procedure was introduced in 1996 (the "Gutmann method") that overwrites the data several times to guarantee secure deletion. However, with today's hard drives, it is sufficient to overwrite the data once.
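A single overwrite pass of the kind described above can be sketched as follows. This is only an illustration on a temporary file and not a substitute for tools such as dd or shred; the function name is hypothetical, and the sketch assumes a file system that writes in place (copy-on-write or journaling file systems and flash-based drives may keep older copies elsewhere).

```python
import os
import tempfile

def overwrite_with_zeros(path: str, chunk_size: int = 1024 * 1024) -> int:
    """Overwrite an existing file in place with zero bytes and return the number of bytes written."""
    size = os.path.getsize(path)
    written = 0
    with open(path, "r+b") as f:            # open for update without truncating
        while written < size:
            n = min(chunk_size, size - written)
            f.write(b"\x00" * n)
            written += n
        f.flush()
        os.fsync(f.fileno())                # ask the OS to push the data to the device
    return written

# Demonstration on a throwaway temporary file
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"sensitive data" * 1000)
    demo_path = tmp.name

bytes_written = overwrite_with_zeros(demo_path)
with open(demo_path, "rb") as f:
    data_after = f.read()
os.remove(demo_path)

print(bytes_written, "bytes overwritten")
```

Only after such an overwrite is the file removed, so that the blocks released to the file system no longer contain the original content.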

Alternatively, when scrapping the computer, the mechanical destruction of the hard drive or the disks is a good option, a method recommended by the Federal Office for Information Security . That is why in some companies when switching to a new generation of computers, all hard drives are ground up in a shredder and the data is destroyed in this way.

If the entire hard disk is effectively encrypted, the effective discarding of the access key is largely equivalent to deletion.

Long-term archiving

History

The forerunner of the hard disk was the magnetic drum from 1932. Outside of universities and research institutions, this memory was used as “main memory” with 8192 32-bit words in the Zuse Z22 from 1958 . Tape devices provided experience with magnetic coatings . The first commercially available hard disk, the IBM 350 , was announced by IBM in 1956 as part of the IBM 305 RAMAC computer ("Random Access Method of Accounting and Control").

Chronological overview

- September 1956: IBM presents the first magnetic hard disk drive, designated "IBM 350" (5 MB, where 1 byte here comprises 6 bits, i.e. 30 Mbit in total; 24 inches, 600 ms access time, 1200 min⁻¹, 500 kg, 10 kW). The read/write heads were controlled electronically and pneumatically, which is why the cabinet-sized unit contained an air compressor. The drive was not sold but rented for $650 a month. One IBM 350 is in the museum of the IBM Club in Sindelfingen. Louis Stevens, William A. Goddard and John Lynott played a key role in its development at the research center in San José (led by Reynold B. Johnson).

- 1973: IBM starts the "Winchester" project, which was concerned with developing a rotating memory with a permanently mounted medium ( IBM 3340 , 30 MB storage capacity, 30 ms access time). When starting and stopping the medium, the heads should rest on the medium, which made a loading mechanism superfluous. It was named after the Winchester rifle . This technique prevailed in the following years. Until the 1990s, the term Winchester drive was therefore occasionally used for hard drives .

- 1979: Presentation of the first 8 ″ Winchester drives. However, these were very heavy and expensive; nevertheless, sales rose continuously.

- 1980: Sale of the first 5.25 "-Winchester drives by the company Seagate Technology (" ST506 ", 6 MB, 3600 min -1 , selling price about 1000 US dollars). For many years this model designation ( ST506 ) became the name for this new interface, which all other companies had adopted as the new standard in the PC area. At the same time, in addition to the already existing Apple microcomputers, the first PC from IBM came onto the market, which caused the demand for these hard drives, which were compact compared to the Winchester drives, to rise rapidly.

- 1986: Specification of SCSI, one of the first standardized protocols for a hard disk interface.

- 1989: Standardization of IDE , also known as AT-Bus.

- 1991: first 2.5-inch hard disk with 100 MB storage capacity

- 1997: First use of the giant magnetoresistance (GMR) effect in hard disks, which allowed storage capacity to be increased considerably. IBM brought out one of the first hard drives with GMR read heads in November 1997 (IBM Deskstar 16GP DTTA-351680, 3.5″, 16.8 GB, 0.93 kg, 9.5 ms, 5400 min⁻¹).

- 2004: First SATA drives with Native Command Queuing from Seagate.

- 2005: Prototype of a 2.5-inch hybrid hard disk (abbreviated H-HDD), which combines a magnetic-mechanical part with additional NAND flash memory that serves as a buffer for the data. Only when the buffer is full is the data written from the buffer to the hard disk's magnetic medium.

- 2006: First 2.5-inch notebook drives from Seagate with perpendicular recording technology (Momentus 5400.3, 2.5″, 160 GB, 0.1 kg, 5.6 ms, 5400 min⁻¹, 2 watts). With the same recording technology, 3.5-inch hard drives reached a capacity of 750 GB in April.

- 2007: First terabyte hard drive, from Hitachi (3.5″, 1 TB, 0.7 kg, 8.5 ms, 7200 min⁻¹, 11 watts).

- 2009: First 2 TB hard drive from Western Digital (Caviar Green, 5400 min⁻¹).

- 2010: First 3 TB hard drive from Western Digital (Caviar Green). Systems without UEFI cannot access this hard drive. WD supplies a special controller card with which PCs with an older BIOS can fully address the 2.5 TB and 3 TB hard disks as secondary disks. Seagate avoids this problem by using larger sectors (Advanced Format).

- 2011: Floods destroy a number of factories in Thailand and make clear the global dependencies of, among others, the hard disk industry: delivery failures lead to a shortage of components, and hard disk prices on the end consumer market in Germany skyrocket. Hitachi GST and Western Digital deliver the first 4 TB 3.5″ hard drives, the Deskstar 5K4000 (internal) and Touro Desk (external USB variant), with 1 TB per platter, in small quantities. Samsung also announces corresponding models with a capacity of 1 TB per platter; the first models are to be the Spinpoint F6 with 2 TB and 4 TB.

- 2014: First 6 and 8 TB hard drives with helium filling from HGST.

- 2015: First 10 TB hard drive with helium filling and Shingled Magnetic Recording from HGST.
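The 2010 entry in the list above notes that PCs with an older BIOS could not fully address 3 TB drives and that larger sectors avoid the problem. The list does not give the reason; a common explanation, assumed here, is that the classic MBR partition scheme stores sector numbers as 32-bit values. Under that assumption, the addressable capacity for a given sector size can be worked out as follows (the names in the sketch are illustrative):

```python
# Assumption: sector numbers are limited to 32 bits, as in the classic
# MBR partition table. The list above does not state this explicitly.
SECTOR_COUNT_LIMIT = 2 ** 32


def max_addressable_bytes(sector_size: int) -> int:
    """Largest capacity reachable with 32-bit sector numbers."""
    return SECTOR_COUNT_LIMIT * sector_size


for sector_size in (512, 4096):
    limit = max_addressable_bytes(sector_size)
    print(f"{sector_size:>4}-byte sectors: {limit / 10**12:.2f} TB")

# Output:
#  512-byte sectors: 2.20 TB
# 4096-byte sectors: 17.59 TB
```

With 512-byte sectors the limit falls below 3 TB, while 4096-byte sectors raise it well above the drive sizes of that time.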

Development of the storage capacities of the different sizes

| Year | 5.25″ | 3.5″ | 2.5″ | 1.8″ | 1.0″ | 0.85″ | Other size | Typical model(s) with high capacity |
|---|---|---|---|---|---|---|---|---|
| 1956 | | | | | | | 3.75 MB | IBM 350 (1200 min⁻¹, 61 cm diameter, mass 1 t) |
| 1962-1964 | | | | | | | ≈ 25/28 MB | IBM Ramac 1301 (1800 min⁻¹) |
| 1980 | 5 MB | | | | | | | Seagate ST-506, first 5.25″ disk |
| 1981 | 10 MB | | | | | | | Seagate ST-412 (5.25″ FH) (only in IBM PC XT) |
| 1983 | - | 10 MB | | | | | | Rodime RO352 (3.5″ HH) |
| 1984 | 20 MB | - | | | | | | Seagate ST-225 (5.25″ HH) |
| 1987 | 300 MB | - | | | | | | Maxtor with 300 MB (5.25″) for 16,800 DM (PC/AT) or 17,260 DM (PC/XT), January 1987 |
| 1988 | 360 MB | 60 MB | | | | | | Maxtor XT-4380E (5.25″ FH) or IBM WD-387T (3.5″ HH, e.g. installed in the IBM PS/2 series) |
| 1990 | 676 MB | 213 MB | | | | | | Maxtor XT-8760E (5.25″ FH) or Conner CP3200 (3.5″ HH) |
| 1991 | 1.3 GB | 130 MB | 40 MB | | | | | Seagate ST41600N (5.25″ FH), Maxtor 7131 (3.5″), Conner CP2044PK (2.5″) |
| 1992 | 2 GB | 525 MB | 120 MB | - | - | - | 20 MB (1.3″) | Digital DSP-5200S ("RZ73", 5.25″), Quantum Corporation ProDrive LPS 525S (3.5″ LP) or Conner CP2124 (2.5″), Hewlett-Packard HP3013 "Kittyhawk" (1.3″) |
| 1993 | - | 1.06 GB | - | - | - | - | | Digital RZ26 (3.5″) |
| 1994 | - | 2.1 GB | - | - | - | - | | Digital RZ28 (3.5″) |
| 1995 | 9.1 GB | 2.1 GB | 422 MB | - | - | - | | Seagate ST410800N (5.25″ FH), Quantum Corporation Atlas XP32150 (3.5″ LP), Seagate ST12400N or Conner CFL420A (2.5″) |
| 1997 | 12 GB | 16.8 GB | 4.8 GB | - | - | - | | Quantum Bigfoot (12 GB, 5.25″), Nov. 1997, IBM Deskstar 16GP (3.5″) or Fujitsu MHH2048AT (2.5″) |
| 1998 | 47 GB | - | - | - | - | - | | Seagate ST446452W (47 GB, 5.25″), Q1 1998 |
| 2001 | † | 180 GB | 40 GB | - | 340 MB | - | | Seagate Barracuda 180 (ST1181677LW) |
| 2002 | † | 320 GB | 60 GB | - | - | - | | Maxtor MaXLine-Plus-II (320 GB, 3.5″), end of 2002; IBM IC25T060 AT-CS |
| 2005 | † | 500 GB | 120 GB | 60 GB | 8 GB | 6 GB | | Hitachi Deskstar 7K500 (500 GB, 3.5″), July 2005 |
| 2006 | † | 750 GB * | 200 GB | 80 GB | 8 GB | † | | Western Digital WD7500KS, Seagate Barracuda 7200.10 750 GB, and others |
| 2007 | † | 1 TB * | 320 GB * | 160 GB | 8 GB | † | | Hitachi Deskstar 7K1000 (1000 GB, 3.5″), January 2007 |
| 2008 | † | 1.5 TB * | 500 GB * | 250 GB * | † | † | | Seagate ST31500341AS (1500 GB, 3.5″), July 2008; Samsung Spinpoint M6 HM500LI (500 GB, 2.5″), June 2008; Toshiba MK2529GSG (250 GB, 1.8″), September 2008; LaCie LF (40 GB, 1.3″), December 2008 |
| 2009 | † | 2 TB * | 1 TB * | 250 GB * | † | † | | Western Digital Caviar Green WD20EADS (2000 GB, 3.5″), January 2009; Seagate Barracuda LP ST32000542AS (2 TB, 3.5″, 5900 min⁻¹); Western Digital Scorpio Blue WD10TEVT (1000 GB, 2.5″, height 12.5 mm), July 2009, as well as WD Caviar Black WD2001FASS and RE4 (both 2 TB, September 2009); Hitachi Deskstar 7K2000 (2000 GB, 3.5″), August 2009 |
| 2010 | † | 3 TB * | 1.5 TB * | 320 GB * | † | † | | Hitachi Deskstar 7K3000 & Western Digital Caviar Green (3.5″); Seagate FreeAgent GoFlex Desk (2.5″), June 2010; Toshiba MK3233GSG (1.8″) |
| 2011 | † | 4 TB * | 1.5 TB * | 320 GB * | † | † | | Seagate FreeAgent® GoFlex™ Desk (4 TB, 3.5″), September 2011 |
| 2012 | † | 4 TB * | 2 TB * | 320 GB * | † | † | | Western Digital Scorpio Green 2000 GB, SATA II (WD20NPVT), August 2012 |
| 2013 | † | 6 TB * | 2 TB * | 320 GB * | † | † | | HGST Travelstar 5K1500, 1.5 TB, SATA 6 Gbit/s, 9.5 mm, 2.5″ (0J28001), August 2013; Samsung Spinpoint M9T, 2 TB, SATA 6 Gbit/s, 9.5 mm, 2.5″, November 2013; HGST Ultrastar He6, 6 TB, 3.5″, seven platters with helium filling, November 2013 |
| 2014 | † | 8 TB * | 2 TB * | 320 GB * | † | † | | HGST Ultrastar He8, 8 TB, 3.5″, seven platters with helium filling, October 2014; Seagate Archive HDD v2, 8 TB, 3.5″, six platters with air filling, using Shingled Magnetic Recording, December 2014 |
| 2015 | † | 10 TB ** | 4 TB * | † | † | † | | HGST Ultrastar Archive Ha10, 10 TB, 3.5″, seven platters with helium filling, June 2015; Toshiba Stor.E Canvio Basics, 3 TB, 15 mm, 2.5″, four platters, April 2015; Samsung Spinpoint M10P, 4 TB, 15 mm, 2.5″, five platters, June 2015 |
| 2016 | † | 12 TB * | 5 TB ** | † | † | † | | Seagate Barracuda Compute, 5 TB, 15 mm, 2.5″, five platters, October 2016; HGST Ultrastar He10, 12 TB, 3.5″, eight helium-filled platters, December 2016 |
| 2017 | † | 14 TB * | 5 TB ** | † | † | † | | Toshiba Enterprise MG06ACA, 10 TB, 3.5″, seven platters with air filling, September 2017; Toshiba Enterprise MG07ACA, 14 TB, 3.5″, nine platters with helium filling, December 2017 |
| 2019 | † | 16 TB * | 5 TB ** | † | † | † | | Seagate Exos X16, 16 TB, 3.5″, nine platters with TDMR and helium filling, June 2019 |
| 2020 | † | 18 TB * | 5 TB ** | † | † | † | | Western Digital Ultrastar DC HC550, 18 TB, 3.5″, nine platters with TDMR, EAMR and helium filling, July 2020 |

Remarks:

- The capacity information always relates to the largest hard disk drive available for sale in the respective year, regardless of its speed or interface.

- Hard disk manufacturers use SI prefixes (1000 B = 1 kB etc.) for storage capacities. This corresponds to the recommendation of the International Electrotechnical Commission (IEC), but differs from the convention used by some software, which can lead to apparent size differences. See the Storage Capacity section for details.

- †: size outdated; no longer in use

- *: using perpendicular recording

- **: using Shingled Magnetic Recording
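The apparent size difference mentioned in the remarks can be made concrete with a short calculation. The sketch below assumes that the software in question counts in binary units (1 TiB = 2^40 bytes) while the manufacturer counts in decimal units (1 TB = 10^12 bytes); the function name is illustrative.

```python
def advertised_tb_to_tib(terabytes: float) -> float:
    """Convert a capacity in decimal terabytes to binary tebibytes."""
    size_in_bytes = terabytes * 10**12   # manufacturer figure, SI prefix
    return size_in_bytes / 2**40         # binary unit some software reports


for tb in (1, 4, 18):
    print(f"{tb:>2} TB is about {advertised_tb_to_tib(tb):.2f} TiB")

# Output:
#  1 TB is about 0.91 TiB
#  4 TB is about 3.64 TiB
# 18 TB is about 16.37 TiB
```

The drive holds the same number of bytes in both cases; only the unit used for display differs.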

Manufacturers

In the second quarter of 2013, 133 million hard disk drives with a total capacity of 108 exabytes (108 million terabytes) were sold worldwide. In 2014, 564 million hard disk drives with a total capacity of 529 exabytes were produced worldwide. In the fourth quarter of 2018, 87.7 million hard disk drives were sold worldwide.
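The unit counts and total capacities quoted above imply an average capacity per drive. As a rough worked example, assuming decimal units (1 exabyte = 10^18 bytes) and using a helper name invented for this sketch:

```python
def average_capacity_gb(total_exabytes: float, drives_millions: float) -> float:
    """Average capacity per drive in gigabytes (decimal units)."""
    total_bytes = total_exabytes * 10**18
    drives = drives_millions * 10**6
    return total_bytes / drives / 10**9


# Figures taken from the paragraph above.
print(round(average_capacity_gb(108, 133)))  # Q2 2013 sales -> 812
print(round(average_capacity_gb(529, 564)))  # 2014 production -> 938
```

The two figures cover different periods (one quarter of sales versus a full year of production), so they are only loosely comparable.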

| Name | Market share |
|---|---|
| Seagate | 40% |
| Western Digital | 37% |
| Toshiba / Kioxia | 23% |

Former manufacturers:

- Seagate acquired Conner Peripherals in 1996.

- Quantum was acquired by Maxtor in 2000 and Maxtor by Seagate in 2005.

- IBM's hard drive division was taken over by Hitachi in 2002.

- Fujitsu's hard drive division was taken over by Toshiba in 2010.

- ExcelStor produced some models for IBM and later for HGST / Hitachi.

- Samsung's hard drive division was acquired by Seagate in October 2011.

- HGST (Hitachi) was taken over by Western Digital in March 2012, with WD having to sell part of the 3.5 ″ production facilities to Toshiba under pressure from the antitrust authorities.


Web links

- 50 years hard drive . tecchannel.de, September 13, 2006

- HDD from inside: Main parts - photo series with description based on a 3.5 ″ SATA hard drive (English)

- Overview of hard drive interfaces

- A brief History of Areal Density

- Technical data of a cinema hard drive at the film blog Kinogucker
