Elector

The electors elect Heinrich of Luxembourg as king. Recognizable by their coats of arms, they are (from left to right) the Archbishops of Cologne, Mainz and Trier, the Count Palatine of the Rhine, the Duke of Saxony, the Margrave of Brandenburg and the King of Bohemia, who was in fact not present at Heinrich's election.

An elector (Latin princeps elector imperii or elector; German Kurfürst) was one of the originally seven, later nine and finally ten highest-ranking princes of the Holy Roman Empire who, from the 13th century, held the exclusive right to elect the Roman-German king. This royal title traditionally carried with it the claim to be crowned Roman-German Emperor by the Pope.

The term Kurfürst goes back to the Middle High German word kur or kure, meaning "choice" or "election", from which the New High German verb küren ("to choose") derives.

The heir of an elector was called the electoral prince (Kurprinz); a prince acting as regent for an elector was called the electoral administrator (Kuradministrator).

Composition of the Electoral College

In the Middle Ages and the early modern period, the Electoral College consisted of seven, later nine, imperial princes. Each elector was assigned one of the imperial arch-offices. The original college comprised:

three ecclesiastical princes:

- the Archbishop of Mainz as Imperial Arch-Chancellor for Germany

- the Archbishop of Cologne as Imperial Arch-Chancellor for Italy

- the Archbishop of Trier as Imperial Arch-Chancellor for Burgundy

as well as four secular princes:

- the King of Bohemia as Arch-Cupbearer

- the Count Palatine of the Rhine as Arch-Steward

- the Duke of Saxony as Arch-Marshal

- the Margrave of Brandenburg as Arch-Chamberlain

In the 17th century, two more imperial princes obtained the electoral dignity:

- 1623 the Duke of Bavaria, in place of the Count Palatine, who in 1648 received a new, eighth electoral vote together with the newly created office of Arch-Treasurer, and

- 1692 the Duke of Braunschweig-Lüneburg (Hanover) as Arch-Bannerbearer.

After Bavaria passed by inheritance to the Count Palatine of the Rhine in 1777, the Palatine electoral dignity lapsed, while the Bavarian one continued. The Reichsdeputationshauptschluss of 1803 abolished the two ecclesiastical electorates of Cologne and Trier; the Elector Arch-Chancellor (Kurerzkanzler) received the newly created Principality of Regensburg as a replacement for Mainz, which had been lost to France. Four imperial princes, on the other hand, newly received the electoral dignity: the rulers of Salzburg, Württemberg, Baden and Hesse-Kassel.

After the Duchy of Salzburg fell to the Austrian Empire in the Peace of Pressburg in 1805, the electoral dignity attached to it was transferred to the newly created Grand Duchy of Würzburg. However, all changes made since 1803 became moot as early as 1806 with the dissolution of the Holy Roman Empire of the German Nation.

History of the Electoral College

From the origins to the double election in 1198

The tradition of the free election of kings in East Francia, later the Holy Roman Empire, began in 911 after the last king of the East Frankish Carolingian line had died. At that time the imperial princes, the so-called magnates of the realm, did not choose the Carolingian ruler of West Francia, who was the legitimate heir under hereditary law, as his successor, but instead chose one of their own, Konrad I. This was not unusual at the time, because in West Francia too the king had been elected by the magnates since 888. A strong king was usually able to have his son accepted as successor during his own lifetime. Since the kings of the Capetian dynasty, who ruled from 987, left sons as successors for centuries, the kingdom of France eventually developed into a hereditary monarchy. In East Francia, by contrast, there were repeated changes of dynasty, as many kings left no direct male heir. In 1002, 1024, 1125, 1138 and 1152 kings were elected who, although mostly closely related to their predecessors, were not their sons. As early as 1002, Duke Heinrich of Bavaria, of the Liudolfing family, faced other competitors who had similar family ties to his predecessor Otto III. After 1024 the Salian dynasty, with four successive kings, seemed to establish itself as the only family entitled to inherit, until it too died out in the male line in 1125. In the ensuing election of King Lothar of Supplinburg, the pure elective principle prevailed for the first time. When Lothar died in 1137, his son-in-law Heinrich the Proud was his closest relative under hereditary law. Instead of him, however, the choice fell on the Staufer Konrad III. In 1152 it was not Konrad's son who was elected, but his nephew Friedrich Barbarossa. With each election, the tradition of free choice was strengthened and the hereditary principle weakened.

Originally, from 911 onwards, all imperial princes, the magnates of the realm, were entitled to take part in the election of a king. Although it was not precisely defined who belonged to this group, there was always a small number of pre-electors (laudatores) who were entitled to make a preliminary decision. These were not necessarily the most powerful but the most distinguished princes of the empire, those who came closest to the king in rank and dignity. Among them were the three Archbishops of Mainz, Cologne and Trier as well as the Count Palatine of the Rhine, because their territories lay on old Frankish tribal soil. An election was legitimate only if the pre-electors had also approved it. The later electoral college probably developed from this group of pre-electors.

Gradual formation of the electoral college

With the death of Emperor Heinrich VI (1165–1197), his plan for hereditary succession (Erbreichsplan) also failed; it was the last attempt to transform the empire into a hereditary monarchy. In the ensuing German throne dispute between the Staufers and the Welfs, two candidates for the throne were chosen in a double election. The Staufer candidate Philipp of Swabia could point to the larger number of electors. The Archbishop of Cologne, Adolf von Altena, opposed him and was determined to push through his own candidate, Otto of Braunschweig. Otto, who was initially at a disadvantage, asked Pope Innocent III for an arbitral ruling. Since the German kingship had been linked to the Roman imperial dignity ever since Otto the Great's coronation in 962, the popes had always had a strong interest in securing a say in the German election. But as long as the outcome of the conflict was open, the Pope held back so as not to end up on the losing side.

According to recent research, a Welf group of princes around Archbishop Adolf of Cologne proposed, in order to give his decision more weight, that two ecclesiastical and two secular princes (the Archbishops of Cologne and Mainz, the Count Palatine of the Rhine and the Duke of Saxony) should form the decisive electoral body, on the model of an arbitration panel with equal representation. At the beginning of the 13th century, one further ecclesiastical and one further secular prince joined these four: the Archbishop of Trier and the Margrave of Brandenburg. According to older scholarship, Innocent III took the view that the consent of the three Rhenish archbishops and the Count Palatine of the Rhine was essential for a legitimate election, and the Duke of Saxony and the Margrave of Brandenburg were added at the beginning of the 13th century.

Around 1230, the Sachsenspiegel of Eike von Repgow stated: "In the election of the emperor, the first shall be the Bishop of Mainz, the second the Bishop of Trier, the third the Bishop of Cologne." The three secular princes then follow. The work expressly denies the King of Bohemia the right to vote "because he is not a German". More recent theories assume that he was counted among the royal electors only from 1252, when the electoral college (Kurkollegium) had established itself as the sole electoral body and deadlocks were to be avoided.

The Kurkollegium appeared for the first time in 1257, after the death of King Wilhelm of Holland, as an exclusive institution that shut all other imperial princes out of the election. In a double election, Alfonso of Castile and Richard of Cornwall were both chosen as Wilhelm's successor. Each candidate received three votes; Ottokar II, King of Bohemia, gave his vote to both. Neither of the two was able to exercise effective rule, so that the interregnum lasted until Rudolf von Habsburg was elected in 1273. The interregnum considerably strengthened the position of the electors, as became especially apparent in the 14th century. The King of Bohemia also took part in the election of Rudolf I, but he was able to secure his permanent membership in the college only in 1289. During the Hussite Wars in the 15th century, the Bohemian electoral dignity was suspended again.

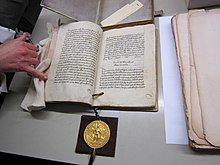

With the election of 1308, in which all six electors present chose Heinrich of Luxembourg as Roman-German king, the new self-understanding of the electoral college became apparent. The college and the new king merely notified Pope Clement V of the decision, without asking for papal approbation. This made it clear that the college's decision was sufficient for a valid royal election and that no further confirmation was required. The election also showed that the electors, after their experiences with Adolf von Nassau and Albrecht I, both of whom had pursued a dynastic power policy (Hausmachtpolitik) partly directed against the electors, strictly guarded their rights and required the new king to respect them. The kingship's scope for action was thereby considerably restricted, even though Heinrich VII tried to strengthen his power by securing Bohemia as a dynastic power base and by striving to renew the empire in Italy.

Kurverein zu Rhense 1338

In 1338 the electors joined together in the Kurverein zu Rhense in order to coordinate with one another before royal elections. The Electoral Council of the Reichstag later emerged from the Kurverein. In addition, the electors at Rhens determined that the Pope had no right of approbation and that the person they had elected king did not need his consent. The so-called Rhenser Weistum of July 16, 1338 states:

"According to the law and the custom of the empire, approved since time immemorial, someone who is elected Roman king by the electors of the empire or, in the event of disagreement, by the majority of them, requires no nomination, approval, confirmation, consent or authority of the Apostolic See for the administration of the goods and rights of the empire or for the assumption of the royal title."

This development reached its conclusion in 1508, when Maximilian I styled himself "Elected Roman Emperor" with the consent of the Pope, but without being crowned by him. The title "Roman King", which the rulers of the empire had borne since 1125 between their election as king and their coronation as emperor, was from then on reserved for a successor elected during the lifetime of an emperor.

After Charles V, no emperor was crowned by the Pope. From 936 to 1531 the coronation of the Roman-German kings was performed in Aachen by the Archbishop of Cologne. From the election of Maximilian II as king in 1562 until the end of the old empire, both election and coronation were usually held in Frankfurt, most recently in 1792. At the coronation the electors, later only their deputies, exercised the so-called arch-offices (Erzämter, archiofficia), which were permanently attached to the electoral dignity.

Provisions of the Golden Bull 1356

After the death of the Hohenstaufen emperor Frederick II, the electors moved away from the dynastic principle, i.e. the election of a member of the ruling dynasty, to so-called "leaping elections" (springende Wahlen), in which the crown passed from one dynasty to another. This meant that practically every imperial prince was a possible candidate for the throne. Claimants to the crown had to buy their election through extensive concessions, such as grants of privileges to the electors, which were precisely recorded in electoral capitulations. In addition, from the end of the 12th century candidates had to make sometimes immense payments to the electors. All of this strengthened the power and independence of the territorial princes at the expense of the royal central authority and resulted in the progressive territorial fragmentation of Germany.

In order to prevent future succession disputes and the setting up of anti-kings, Emperor Charles IV had the rights and duties of the electors and the procedure for electing German kings, which had developed under customary law, fixed precisely in law in the Golden Bull of 1356. The bull achieved its intended effect and formed the basis of the constitutional order of the old empire until 1806.

It stipulated that the Archbishop of Mainz, as Arch-Chancellor for Germany, had to summon the electors to Frankfurt am Main within 30 days of the death of the last king. Before they proceeded to choose a successor there, in the Imperial Cathedral of St. Bartholomew, they had to swear to make their decision "without any secret agreement, reward or remuneration". The electoral regulations, which were modeled on the conclave for papal elections, continued:

"When the electors or their envoys have taken this oath in the form and manner described above, they shall proceed to the election and henceforth not leave the aforesaid city of Frankfurt until the majority of them have elected a secular head of the world or of Christendom, namely a Roman king and future emperor. If, however, they have not done so within thirty days from the day on which the oath was taken, then after these thirty days they shall have only bread and water and under no circumstances leave the city until they, or the majority of them, have chosen a ruler or secular head of the faithful, as stated above."

The electors cast their votes in order of rank. The Archbishop of Trier voted first. The Archbishop of Cologne voted second; he also held the right to perform the coronation as long as Aachen, which lay in his archdiocese, was the coronation city. Third was the King of Bohemia as a crowned secular prince. Fourth was the Count Palatine of the Rhine, who was imperial vicar during a vacancy of the throne or in the absence of the Emperor from Germany, i.e. the king's representative in all lands where Frankish law applied. In addition, he acted as judge in cases where the ruler himself had violated the law. The Duke of Saxony followed in fifth place as imperial vicar for all lands under Saxon law, and the Margrave of Brandenburg in sixth. Although he was the highest-ranking elector, the Archbishop of Mainz voted last, so that his vote could be decisive in the event of a tie.

Like the Kurverein of Rhense, the Golden Bull declared the election of a king legally valid without the consent of the Pope. It also reaffirmed the majority principle established by the Kurverein in place of the unanimity previously considered necessary.

The Golden Bull also established an annual meeting of all electors at which they were to consult with the emperor. Further provisions concerned the special privileges and rights of the electors: they received immunity, the right of coinage, the right to levy tolls and the Judenregal (rights over the protection and taxation of Jews), as well as the Privilegium de non evocando and the Privilegium de non appellando. That is, the emperor could not draw to himself a lawsuit that fell under the jurisdiction of an elector, nor could an elector's subjects appeal against judgments of his highest courts to the imperial courts, not even to the Reichskammergericht (Imperial Chamber Court) and the Reichshofrat (Aulic Council), which were established at the turn of the 16th century. An elector came of age at 18, and attacks on him were treated as lèse-majesté.

In order to prevent a fragmentation or multiplication of the votes, the electorates were declared indivisible territories (Kurpräzipuum). In the narrower sense, the electorate means only the Kurpräzipuum, i.e. the territory to which the electoral dignity was bound. Thus, for example, the Electorate of Saxony in the strict sense meant only the small Duchy of Saxony-Wittenberg, the so-called Kurkreis. Because of the prestige of the electoral title, however, the term electorate was regularly extended to the entire area ruled by an elector. From the 15th century onwards, the Electorate of Saxony consisted essentially of the old Margraviate of Meißen, areas of the Landgraviate of Thuringia and later Lusatia, while the Kurpräzipuum, the actual core electorate around Wittenberg, made up only a small part of the so-called Electoral Saxon hereditary lands. The name electorate thus migrated up the Elbe, owing to the much larger territories south of the Kurpräzipuum. Strictly speaking, the holder of the electoral dignity was an elector of the empire and remained a duke, margrave or count palatine in his various territories. The electoral title, however, could be used skilfully to unify those territories. Until the beginning of the 18th century, electors succeeded in integrating nearly all the territories in their possession into the electoral state under the mantle of the electoral dignity, thereby standardizing them in terms of constitutional and administrative law.

The indivisibility was limited, de facto and de jure, to the Kurpräzipuum itself, which means that other territories belonging to the elector could still be divided by inheritance. This is illustrated by the case of Elector Johann Georg I of Saxony, who in his will detached certain territories from the inheritance of the electoral prince and assigned them to his younger sons as secundogenitures; some of these were hereditary lands already integrated into the overall electoral state, others were regions that were still largely autonomous constitutionally, such as former episcopal possessions. The Kurkreis with the electoral dignity and the great majority of the remaining territories nevertheless stayed with the firstborn. A similar legal situation had led to the Partition of Leipzig in 1485, in which the electorate was de facto divided: already well-integrated areas such as most of the Margraviate of Meißen went to the second-born, Duke Albrecht, while the majority of the Thuringian areas, together with the Kurkreis and the electoral dignity, went to the first-born, Ernst. The sole successor of a secular elector in the Kurpräzipuum could only be his eldest legitimate son or, if he had no legitimate male descendant, his nearest male agnate. The heir and successor of a secular elector was called the electoral prince (Kurprinz); the successor of an ecclesiastical elector chosen during the incumbent's lifetime was called the coadjutor, but he still required confirmation by the cathedral chapter. When an elector was a minor, his nearest adult male agnate, for example his uncle, ruled as electoral administrator (Kuradministrator).

The second part of the bull, the Metz Code, dealt in particular with questions of protocol, the collection of taxes and the penalties for conspiracies against electors.

Electors in the early modern period

In the early modern period between 1500 and 1806 a total of 131 people held the electoral dignity.

Political role

Despite hostility from other imperial princes, the electors were able to retain the exclusive right to elect the king and to draw up the electoral capitulations until the end of the early modern period. If the emperors did not want to jeopardize their successors' chances of being elected king, they had to maintain a good relationship with the electors. This largely determined imperial conduct in the 16th and 17th centuries. Since political coordination between the emperor and the imperial estates was difficult at a time when there was no permanent Reichstag, the heads of the empire consulted the electors if they did not want to appear too autocratic. Ferdinand I and Maximilian II followed this line. By contrast, consultation was much less pronounced under Charles V and Rudolf II. As the emperors' most important partners in imperial politics, the electors were also referred to as "innermost councilors". The Kurkolleg was regarded as the "cardo imperii", the hinge between the emperor and the imperial estates. The Electoral Days played an important role in this.

The union of the electors in the Kurverein, which was renewed in 1558, demanded a strong political commitment and a pronounced sense of responsibility for the empire as a whole. Although there was no obligation to do so, most electors swore to uphold the principles of the Kurverein. The Kurverein also served as a body for defending the electors' corporate interests and preserving their special privileges.

The electors' position of power was already criticized by contemporaries. Gottfried Wilhelm Leibniz in particular saw the Electoral College as an overmighty oligarchy. However, the importance of the electors fluctuated significantly in the course of the early modern period. Until 1630, their political role depended heavily on how willing the respective emperor was to involve them in imperial politics.

The religious division in the age of confessionalization, at the end of the 16th and beginning of the 17th century, led to a deep crisis in the electoral college. Differing confessional interests increasingly outweighed the common concern for the empire. The Rhenish ecclesiastical electors in particular acted as a bloc to protect Catholic interests. This changed again to some extent during the Thirty Years' War. The electors and the Electoral Days partly took over the functions of the paralyzed Reichstag and turned against the temporarily resurgent imperial power. However, when the electors levied an imperial tax on their own initiative in 1636, this provoked resistance from the other major imperial estates. The dispute between electors and imperial princes was also fought out in propaganda for almost half a century. By the 1680s at the latest, the electors' claim to a leading political role had in effect failed, although they did not lose their ceremonial privileges. It was characteristic that after 1640 Electoral Days took place only on the occasion of royal elections.

Changes to the Electoral College

The first expansion of the Electoral College took place during the Thirty Years' War. Duke Maximilian I of Bavaria demanded the electoral dignity of his Wittelsbach cousin in return for the help he had given Emperor Ferdinand II in expelling the so-called Winter King, the Palatine Elector Friedrich V, from Bohemia. Together with the Upper Palatinate, the Duke was granted the fourth electoral dignity, initially in 1623 only for himself personally and in 1628 for his descendants as well. The dispute over the Palatine electoral dignity played an important role in the negotiations for the Peace of Westphalia. It was finally settled in 1648 by the creation of an eighth electoral dignity for the Count Palatine. The Habsburgs, on the other hand, were unable to push through either a ninth electoral dignity for Austria or the votum decisivum, a casting vote for Bohemia in the event of a tie in the electoral college.

Duke Ernst August of Braunschweig-Lüneburg, by contrast, succeeded in obtaining a ninth electoral dignity in 1692. He had demanded this elevation in rank from Emperor Leopold I as compensation for his military aid against France in the War of the Palatine Succession. A further consideration was that, after the Electoral Palatinate had passed to a Catholic line of the House of Wittelsbach, the Protestant element in the Electoral College was to be strengthened. When the emperor granted the duke the electoral dignity for his partial principality of Calenberg on his own authority, the remaining, mostly Catholic, electors protested. As a result, Leopold I succeeded in having his own Bohemian electoral vote readmitted as a confessional counterweight. The Habsburgs, as kings of Bohemia, could henceforth again take part in all electoral deliberations; from the late 15th century onwards they had been excluded from these except for royal elections. In 1708 the Reichstag approved both the readmission of the Bohemian vote and the new electoral dignity of the Dukes of Braunschweig and Lüneburg.

Since the Electors of Hanover, as they were unofficially called, came to the British throne with George I in 1714 and both offices were thereafter held in personal union, the British kings from then on had a say in the German election.

When the male line of the Bavarian Wittelsbachs died out in 1777, their fourth electoral dignity passed to their heirs, the (by then Catholic) Counts Palatine of the Rhine, who were likewise Wittelsbachs. This was in accordance with the provisions of the Peace of Westphalia of 1648 and the Wittelsbach house agreements of 1329 (Treaty of Pavia), 1724 (Wittelsbach house union), 1766, 1771 and 1774. Their own Palatine electoral dignity, the eighth, lapsed. This was settled in the Peace of Teschen in 1779.

End of the office of elector

During the Napoleonic Wars, France annexed the entire left bank of the Rhine and with it large areas belonging to the four Rhenish electors. In the Reichsdeputationshauptschluss of 1803, the ecclesiastical electorates of Trier and Cologne were abolished and the Mainz electoral dignity was transferred to the Principality of Regensburg-Aschaffenburg. For the archbishopric of Salzburg, which had been converted into a secular duchy, and for Württemberg, the Margraviate of Baden and Hesse-Kassel, four new electorates were created, so that the number now rose to ten. In the Kurkollegium, in which there had always been a Catholic majority, parity now prevailed for the first time: the five Protestant electors of Brandenburg, Hanover, Württemberg, Baden and Hesse-Kassel were matched by just as many Catholics, namely those of Saxony (whose rulers had been Catholic again since 1697), Palatinate-Bavaria, Bohemia and Salzburg, as well as the Elector Arch-Chancellor with Regensburg-Aschaffenburg. Just two years after this new arrangement, in the Peace of Pressburg, the Duchy of Salzburg, which was ruled by Elector Ferdinand as a Habsburg secundogeniture, fell to the Austrian Empire. To compensate Ferdinand, the Grand Duchy of Würzburg was created for him on December 26, 1805, and the Salzburg electoral dignity passed to it. However, none of these new arrangements had any effect on imperial politics, as none of the new electors ever took part in an imperial election. In 1806, in response to the formation of the Confederation of the Rhine, Emperor Franz II laid down the crown of the Holy Roman Empire of the German Nation, which thereby ceased to exist. With this, the office of elector also lost its function.

Electorate of Hesse-Kassel

After the end of Napoleonic rule, at the Congress of Vienna, Landgrave Wilhelm of Hesse-Kassel, who had received the electoral dignity in 1803, sought the title of "King of the Chatti". The name was based on the Germanic tribal name from which that of the Hessians derives. Despite substantial bribes, he was unable to enforce this claim. However, he was allowed to keep the title of "Elector", with the personal style "Royal Highness". Thereafter, the term "Electorate of Hesse" (colloquially also, for short, "Kurhessen") came into wide use to distinguish it from the former Landgraviate of Hesse-Darmstadt, which Napoleon had raised to the Grand Duchy of Hesse. In a broader sense, Kurhessen or the Electorate of Hesse referred to the entirety of the territories ruled by the Elector, which were placed under uniform administration only with the administrative reform of 1821. After its defeat in the war of 1866, the Electorate of Hesse was annexed by Prussia and absorbed into it. Nevertheless, the name survives in some designations, such as that of the Evangelical Church of Kurhessen-Waldeck.

Elector's regalia

The elector's robe consisted of the electoral mantle (Kurmantel), a wide, coat-like robe with wide sleeves or arm slits, completely lined with ermine, a symbol of royal dignity. It was worn with a wide ermine collar, purple gloves and the Kurhut, a velvet cap with an ermine border. The sleeved robe and round electoral hat of the secular electors were made of dark crimson velvet; the robe with arm slits and the square cap of the ecclesiastical electors were made of dark scarlet cloth. The insignia also included an electoral sword (Kurschwert).

The coin image of the Erbländischer Taler shows how an elector in electoral regalia was depicted on contemporary coins in ordinary circulation.

Figure of an elector on the south portal of the Liebfrauenkirche in Worms (13th century)

The "Great Elector" Friedrich Wilhelm von Brandenburg in the elector's robe (around 1652)

Karl Theodor, Elector of the Palatinate and of Bavaria, in elector's regalia (1744)

The royal Bohemian elector's robe in the Vienna treasury

Kurhut (detail from a state portrait of Karl Theodor of Bavaria, 1781)

Ernst of Saxony with the Kurschwertwappen

Erbländischer Taler: Elector Johann Georg I and Johann the Steadfast in electoral regalia with shouldered electoral sword (Dresden Mint, 1630)

See also

- List of the elections of the Roman-German kings for a historical overview since the Golden Bull

- Elector's fable

Literature

- Winfried Becker : The Electoral Council. Main features of its development in the imperial constitution and its position at the Westphalian peace congress . Aschendorff, Münster 1973.

- Alexander Begert: The origin and development of the Kurkolleg. From the beginning to the early 15th century. Duncker & Humblot, Berlin 2010, ISBN 978-3-428-13222-5 , ( writings on constitutional history 81).

- Alexander Begert: Bohemia, the Bohemian cure and the empire from the High Middle Ages to the end of the Old Kingdom. Studies on electoral dignity and the constitutional status of Bohemia. Matthiesen, Husum 2003, ISBN 3-7868-1475-9 , ( historical studies 475).

- Hans Boldt : German constitutional history . Volume 1: From the beginning to the end of the older German Empire in 1806 . 2nd reviewed and updated edition. Deutscher Taschenbuch-Verlag, Munich 1990, ISBN 3-432-04424-1 .

- Arno Buschmann (ed.): Kaiser and Reich. Classical texts and documents on the constitutional history of the Holy Roman Empire of the German Nation . 2 volumes. 2nd supplemented edition. Nomos-Verlags-Gesellschaft, Munich 1994.

- Franz-Reiner Erkens : Electors and the election of a king. To new theories about the king's election paragraph in the Sachsenspiegel and the formation of the electoral college . Hahn, Hannover 2002, ISBN 3-7752-5730-6 , (Studies and Texts / Monumenta Germaniae Historica , 30).

- Axel Gotthard: pillars of the empire. The Electors in the Early Modern Reich Association. Matthiesen, Husum 1998, ISBN 3-7868-1457-0 .

- Klaus-Frédéric Johannes : Comments on the Golden Bull of Emperor Charles IV and the practice of electing a king 1356–1410. In: FS Jürgen Keddigkeit, 2012, pp. 105–120.

- Klaus-Frédéric Johannes: The golden bull and the practice of the king's election 1356-1410. In: Archives for Medieval Philosophy and Culture . Vol. 14 (2008) pp. 179-199.

- Martin Lenz: Consensus and Dissent. German royal election (1273–1349) and contemporary historiography . Vandenhoeck & Ruprecht, Göttingen 2002, ISBN 3-525-35424-X , ( forms of memory 5) , review .

- Hans K. Schulze : Basic structures of the constitution in the Middle Ages . Volume 3: Emperor and Empire . Kohlhammer, Stuttgart a. a. 1998, ISBN 3-17-013053-6 .

- Hans K. Schulze: Basic structures of the constitution in the Middle Ages . Volume 4: The Royalty . Kohlhammer, Stuttgart a. a. 2011.

- Armin Wolf : The emergence of the Kurfürstenkolleg 1198–1298. For the 700th anniversary of the first union of the seven electors. 2nd edited edition. Schulz-Kirchner, Idstein 2000, ISBN 3-8248-0031-4 , ( History Seminar NF 11).

- Armin Wolf (ed.): Royal daughter tribes, king voters and electors . Klostermann, Frankfurt am Main 2002, ISBN 978-3-465-03200-7 , ( Studies on European Legal History 152).

Web links

- Helmut Assing : The way of the Saxon and Brandenburg Ascanians to electoral dignity , 2007, essay with fundamental considerations on the formation of the electoral college ( memento of October 27, 2012 in the Internet Archive ) (PDF, 283 KiB)

Remarks

- ↑ Mirror of the Saxons. In: World Digital Library. 1295–1363. Retrieved August 13, 2013.

- ↑ Helmut Neuhaus : The Empire in the Early Modern Age (= Encyclopedia of German History. Volume 42). 2nd Edition. Oldenbourg, Munich 2003, p. 23.

- ↑ Axel Gotthard : The Old Empire 1495–1806. Darmstadt 2009, ISBN 978-3-534-23039-6 , p. 13 f.

- ↑ Axel Gotthard: The Old Empire 1495–1806. Darmstadt 2009, ISBN 978-3-534-23039-6 , p. 15.

- ↑ Axel Gotthard: The Old Empire 1495–1806. Darmstadt 2009, ISBN 978-3-534-23039-6 , pp. 15 f., 24 f., 72 f.

- ↑ In Article III of the Treaty of Osnabrück it was stipulated: If it were to happen / that the Wilhelmische Mannliche Lini aussturbe / and the Palatinate remained / then not only the Ober-Pfaltz / but also the Chur-Dignitet, which the Hertzians had in Bäyern / to the still living Pfaltzgraffen / so in between being enfeoffed / fall home / and the eighth Chur -stelle extinguish completely. So, however, the Ober-Pfaltz / vff should get this case to the [18] still living Pfaltzgraffen / that the human-humble heirs of the Lord Elector in Bäyern have annual claims / and Beneficia, so they are legally due / reserved. The same regulation can be found in the Treaty of Münster

- ↑ Helmut Neuhaus : The Empire in the Early Modern Age (= Encyclopedia of German History. Volume 42). 2nd Edition. Oldenbourg, Munich 2003, p. 23 ; Dieter Schäfer : 200 years ago: The "Tuscany period" begins. Würzburg becomes the last electorate of the Holy Roman Empire. In: Andreas Mettenleiter (Ed.): Tempora mutantur et nos? Festschrift for Walter M. Brod on his 95th birthday. With contributions from friends, companions and contemporaries (= From Würzburg's city and university history. Volume 2). Akamedon, Pfaffenhofen 2007, ISBN 3-940072-01-X , pp. 195-199.